Where will AI go next?

Plus: What mass firings at Twitter mean for its AI workers.

To receive The Algorithm newsletter in your inbox every Monday, sign up here.

Welcome to the Algorithm!

This year we’ve seen a dizzying number of breakthroughs in generative AI, from AIs that can produce videos from just a few words to models that can generate audio based on snippets of a song.

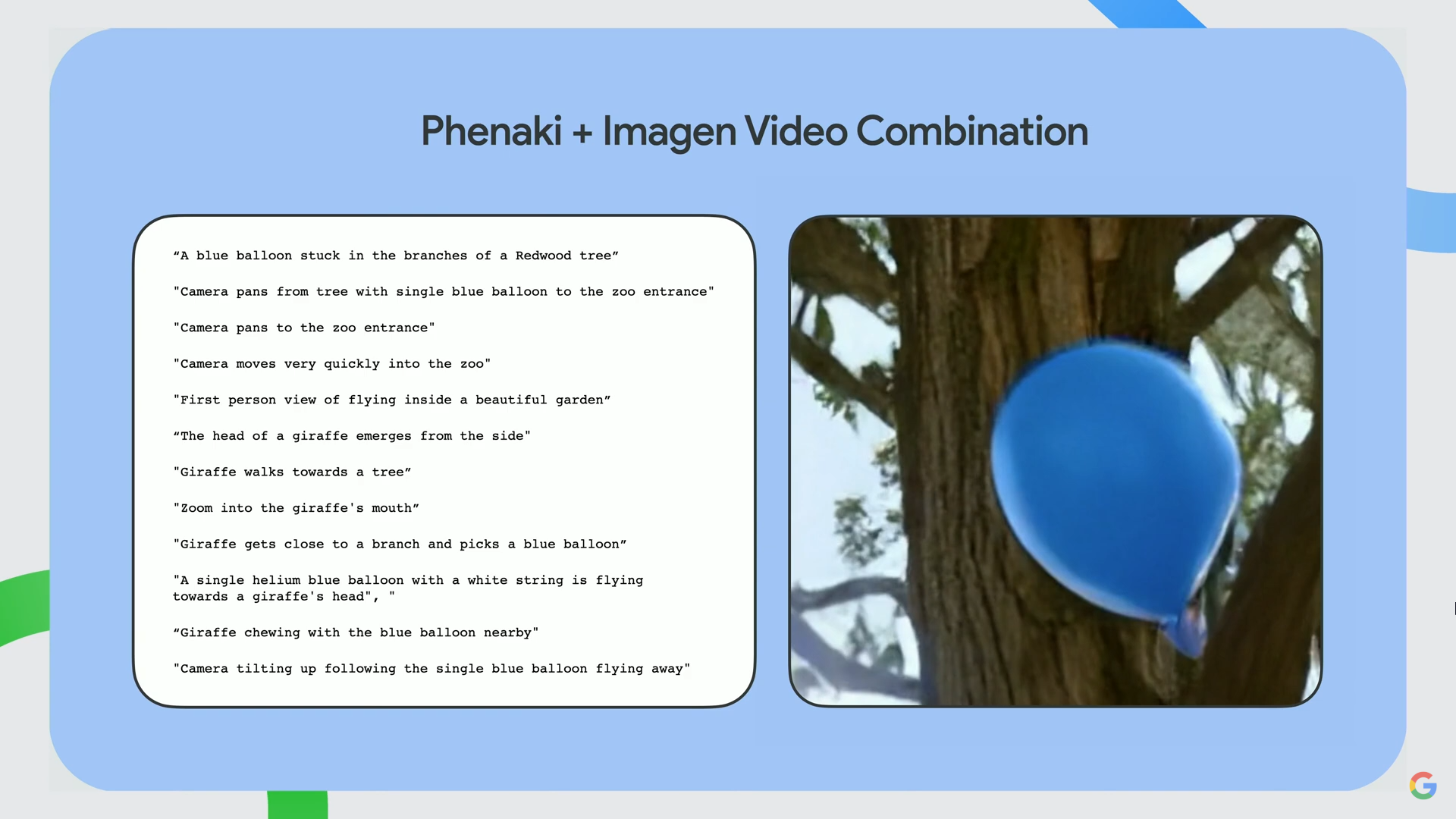

Last week, Google held an AI event in its swanky, brand-new offices by the Hudson River in Manhattan. Your correspondent stopped by to see what the fuss was about. In a continuation of current trends, Google announced a slew of advances in generative AI, including a system that combines its two text-to-video AI models, Phenaki and Imagen. Phenaki allows the system to generate video with a series of text prompts that functions as a sort of script, while Imagen makes the videos higher resolution.

But these models are still a long way from being rolled out for the general public to use. They still have some major problems, such as a tendency to generate violent, sexist, racist, or copyright-violating content, owing to the nature of their training data, which is mostly just scraped off the internet. One Google researcher told me these models were still in an early stage and that a lot of “stars had to align” before they could be used in actual products. It’s impressive AI research, but it’s also unclear how Google could monetize the technologies.

What could have a real-world impact a lot sooner is Google’s new project to develop a “universal speech model” that has been trained on over 400 languages, Zoubin Ghahramani, vice president of research at Google AI, said at the event. The company didn’t offer many details but said it will publish a paper in the coming months.

If it works out, this will represent a big leap forward in the capabilities of large language models, or LLMs. AI startup Hugging Face’s LLM BLOOM was trained on 46 languages, and Meta has been working on AI models that can translate hundreds of languages in real time. With more languages contributing training data to its model, Google will be able to offer its services to even more people. Incorporating hundreds of languages into one AI model could enable Google to offer better translations or captions on YouTube, or improve its search engine so it’s better at delivering results across more languages.

During my trip to the East Coast, I spoke with top executives at some of the world’s biggest AI labs to hear what they thought was going to be driving the conversation in AI next year. Here’s what they had to say:

Douglas Eck, principal scientist at Google Research and a research director for Google Brain, the company’s deep-learning research team

The next breakthrough will likely come from multimodal AI models, which are armed with multiple senses, such as the ability to use computer vision and audio to interpret things, Eck told me. The next big thing will be to figure out how to build language models into other AI models as they sense the world. This could, for example, help robots understand their surroundings through visual and language cues and voice commands.

Yann LeCun, Meta’s chief AI scientist

Generative AI is going to get better and better, LeCun said: “We’re going to have better ways of specifying what we want out of them.” The models react to prompts, but “right now, it’s very difficult to control what the text generation system is going to do,” he added. In the future, he hopes, “there’ll be ways to change the architecture a little bit so that there is some level of planning that is more deliberate.”

Raia Hadsell, research director at DeepMind

Hadsell, too, was excited about multimodal generative AI systems, which combine audio, language, and vision. By adding reinforcement learning, which allows AI models to train themselves by trial and error, we might be able to see AI models with “the ability to explore, have autonomy, and interact in environments,” Hadsell told me.

Deeper Learning

What mass firings at Twitter mean for its AI workers

As we reported last week, Twitter may have lost more than a million users since Elon Musk took over. The firm Bot Sentinel, which tracks inauthentic behavior on Twitter by analyzing more than 3.1 million accounts and their activity daily, believes that around 877,000 accounts were deactivated and a further 497,000 were suspended between October 27 and November 1. That’s more than double the usual number.

To me, it’s clear why that’s happening. Users are betting that the platform is going to become a less fun place to hang out. That’s partly because they’ve seen Musk laying off teams of people who work to make sure the platform is safe, including Twitter’s entire AI ethics team. It’s likely something Musk will come to regret. The company is already rehiring engineers and product managers for 13 positions related to machine learning, including roles involved in privacy, platform manipulation, governance, and defense of online users against terrorism, violent extremism, and coordinated harm. But we can only wonder what damage has been done already, especially with the US midterm elections imminent.

Setting a worrying example: The AI ethics team, led by applied AI ethics pioneer Rumman Chowdhury, was doing some really impressive stuff to rein in the most toxic side effects of Twitter’s content moderation algorithms, such as giving outsiders access to their data sets to find bias. As I wrote last week, AI ethicists already face a lot of ignorance about and pushback against their work, which can lead them to burn out. Those left behind at Twitter will face pressure to fix the same problems, but with far fewer resources than before. It’s not going to be pretty. And as the global economy teeters on the edge of a recession, it’s a really worrying sign that top executives such as Musk think AI ethics, a field working to ensure that AI systems are fair and safe, is the first thing worth axing.

Bits and Bytes

This tool lets anyone see the bias in AI image generators

A tool by Hugging Face researcher Sasha Luccioni lets anyone test how text-to-image generation AI Stable Diffusion produces biased outcomes for certain word combinations. (Vice)

Algorithms quietly run the city of DC—and maybe your hometown

A new report from the Electronic Privacy Information Center found that Washington, DC, uses algorithms in 20 agencies, more than a third of them related to policing or criminal justice. (Wired)

Meta does protein folding

Following in DeepMind’s footsteps to apply AI to biology, Meta has unveiled an AI that reveals the structures of hundreds of millions of the least understood proteins. The company says that with 600 million structures, its model is three times larger than anything before. (Meta)

Thanks for reading!

Melissa
