The world’s top deepfake artist is wrestling with the monster he created

It’s June in Dalian, China, a city on a peninsula that sticks out into the Yellow Sea a few hundred miles from Beijing in one direction and from the North Korean border in the other. Hao Li is standing inside a cavernous, angular building that might easily be a Bond villain’s lair. Outside, the weather is sweltering, and security is tight. The World Economic Forum’s annual conference is in town.

Near Li, politicians and CEOs from around the world take turns stepping into a booth. Inside, they laugh as their faces are transformed into those of famous people: Bruce Lee, Neil Armstrong, or Audrey Hepburn. The trick happens in real time, and it works almost flawlessly.

The remarkable face-swapping machine wasn’t set up merely to divert and amuse the world’s rich and powerful. Li wants these powerful people to consider the consequences that videos doctored with AI—“deepfakes”—could have for them, and for the rest of us.

Misinformation has long been a popular tool of geopolitical sabotage, but social media has injected rocket fuel into the spread of fake news. When fake video footage is as easy to make as fake news articles, it is a virtual guarantee that it will be weaponized. Want to sway an election, ruin the career and reputation of an enemy, or spark ethnic violence? It’s hard to imagine a more effective vehicle than a clip that looks authentic, spreading like wildfire through Facebook, WhatsApp, or Twitter, faster than people can figure out they’ve been duped.

As a pioneer of digital fakery, Li worries that deepfakes are only the beginning. Despite having helped usher in an era when our eyes cannot always be trusted, he wants to use his skills to do something about the looming problem of ubiquitous, near-perfect video deception.

The question is, might it already be too late?

Rewriting reality

Li isn’t your typical deepfaker. He doesn’t lurk on Reddit posting fake porn or reshoots of famous movies modified to star Nicolas Cage. He’s spent his career developing cutting-edge techniques to forge faces more easily and convincingly. He has also messed with some of the most famous faces in the world for modern blockbusters, fooling millions of people into believing in a smile or a wink that was never actually there. Talking over Skype from his office in Los Angeles one afternoon, he casually mentions that Will Smith stopped in recently, for a movie he’s working on.

Actors often come to Li’s lab at the University of Southern California (USC) to have their likeness digitally scanned. They are put inside a spherical array of lights and machine vision cameras to capture the shape of their face, facial expressions, and skin tone and texture down to the level of individual pores. A special-effects team working on a movie can then manipulate scenes that have already been shot, or even add an actor to a new one in post-production.

Such digital deception is now common in big-budget movies. Backgrounds are often rendered digitally, and it’s common for an actor’s face to be pasted onto a stunt person’s in an action scene. That’s led to some breathtaking moments for moviegoers, as when a teenage Princess Leia briefly appeared at the end of Rogue One: A Star Wars Story, even though the actress who had played Leia, Carrie Fisher, was nearly 60 when the movie was shot.

Making these effects look good normally requires significant expertise and millions of dollars. But thanks to advances in artificial intelligence, it is now almost trivial to swap two faces in a video, using nothing more powerful than a laptop. With a little extra knowhow, you can make a politician, a CEO, or a personal enemy say or do anything you want (as in the video at the top of the story, in which Li mapped Elon Musk's likeness onto my face).

A history of trickery

In person, Li looks more cyberpunk than Sunset Strip. His hair is shaved into a Mohawk that flops down on one side, and he often wears a black T-shirt and leather jacket. When speaking, he has an odd habit of blinking in a way that betrays late nights spent in the warm glow of a computer screen. He isn’t shy about touting the brilliance of his tech, or what he has in the works. During conversations, he likes to whip out a smartphone to show you something new.

Li grew up in Saarbrücken, Germany, the son of Taiwanese immigrants. He attended a French-German high school and learned to speak four languages fluently (French, German, English, and Mandarin). He remembers the moment that he decided to spend his time blurring the line between reality and fantasy. It was 1993, when he saw a huge dinosaur lumber into view in Steven Spielberg’s Jurassic Park. As the actors gawped at the computer-generated beast, Li, then 12, grasped what technology had just made possible. “I realized you could now basically create anything, even things that don’t even exist,” he recalls.

Li got his PhD at ETH Zurich, a prestigious technical university in Switzerland, where one of his advisors remembers him as both a brilliant student and an incorrigible prankster. Videos accompanying academic papers sometimes included less-than-flattering caricatures of his teachers.

Shortly after joining USC, Li created facial tracking technology used to make a digital version of the late actor Paul Walker for the action movie Furious 7. It was a big achievement, since Walker, who died in a car accident halfway through shooting, had not been scanned beforehand, and his character needed to appear in so many scenes. Li’s technology was used to paste Walker’s face onto the bodies of his two brothers, who took turns acting in his place in more than 200 scenes.

The movie, which grossed $1.5 billion at the box office, was the first to depend so heavily on a digitally re-created star. Li mentions Walker’s virtual role when talking about how good video trickery is becoming. “Even I can’t tell which ones are fake,” he says with a shake of his head.

Virtually you

In 2009, less than a decade before deepfakes emerged, Li developed a way to capture a person’s face in real time and use it to operate a virtual puppet. This involved using the latest depth sensors and new software to map that face, and its expressions, to a mask made of deformable virtual material.

Most important, the approach worked without the need to add dozens of motion-tracking markers to a person’s face, a standard industry technique for tracking face movement. Li contributed to the development of software called Faceshift, which would later be commercialized as a university spinoff. The company was acquired by Apple in 2015, and its technology was used to create the Animoji software that lets you turn yourself into a unicorn or a talking pile of poop on the latest iPhones.

Li and his students have published dozens of papers on such topics as avatars that mirror whole body movements, highly realistic virtual hair, and simulated skin that stretches the way real skin does. In recent years, his group has drawn on advances in machine learning and especially deep learning, a way of training computers to do things using a large simulated neural network. His research has also been applied to medicine, helping develop ways of tracking tumors inside the body and modeling the properties of bones and tissue.

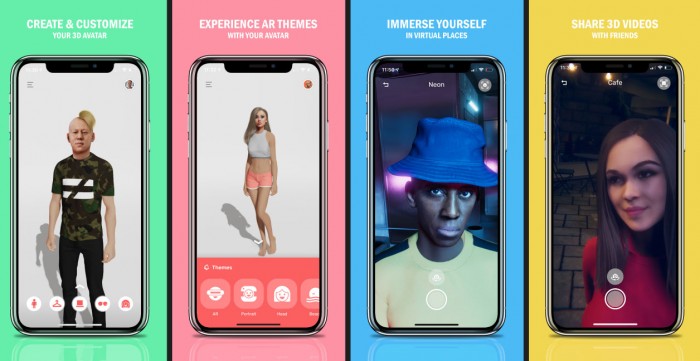

Today, Li splits his time between teaching, consulting for movie studios, and running a new startup, Pinscreen. The company makes virtual avatars using AI that is more advanced than the kind behind deepfakes. Its app turns a single photo into a photorealistic 3D avatar in a few seconds. It employs machine-learning algorithms that have been trained to map the appearance of a face onto a 3D model using many thousands of still images and corresponding 3D scans. The process is improved using what are known as generative adversarial networks, or GANs (which are not used for most deepfakes). This means having one algorithm produce fake images while another judges whether they are fake, a process that gradually improves the fakery. You can have your avatar perform silly dances and try on different outfits, and you can control the avatar’s facial expressions in real time, using your own face via the camera on your smartphone.
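To make the forger-versus-judge idea concrete, here is a toy sketch in Python. It is not Pinscreen's system and involves no images: the "real data" are just numbers clustered around 4, the forger can only shift random noise up or down, and the judge is a one-line logistic model. The class names, the target value, and the learning rate are all assumptions chosen for illustration.

```python
import math
import random


def sigmoid(t: float) -> float:
    """Numerically stable logistic function."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


class Judge:
    """Discriminator: estimates the probability that a number is real."""

    def __init__(self) -> None:
        self.w = 0.0
        self.c = 0.0

    def prob_real(self, x: float) -> float:
        return sigmoid(self.w * x + self.c)

    def update(self, real: list[float], fake: list[float], lr: float) -> None:
        # Gradient ascent on log D(real) + log(1 - D(fake)).
        gw = gc = 0.0
        for x in real:
            err = 1.0 - self.prob_real(x)
            gw += err * x
            gc += err
        for x in fake:
            p = self.prob_real(x)
            gw -= p * x
            gc -= p
        n = len(real) + len(fake)
        self.w += lr * gw / n
        self.c += lr * gc / n


class Forger:
    """Generator: turns noise z into a fake sample z + shift."""

    def __init__(self) -> None:
        self.shift = 0.0

    def make(self, z: float) -> float:
        return z + self.shift

    def update(self, noise: list[float], judge: Judge, lr: float) -> None:
        # Gradient ascent on log D(fake): nudge fakes toward what the judge accepts.
        g = sum((1.0 - judge.prob_real(self.make(z))) * judge.w for z in noise)
        self.shift += lr * g / len(noise)


def train(steps: int = 2000, batch: int = 32, lr: float = 0.05,
          seed: int = 0) -> tuple[Forger, Judge]:
    """Alternate judge and forger updates on toy one-dimensional data."""
    rng = random.Random(seed)
    forger, judge = Forger(), Judge()
    for _ in range(steps):
        real = [rng.gauss(4.0, 1.0) for _ in range(batch)]   # assumed "real" data
        noise = [rng.gauss(0.0, 1.0) for _ in range(batch)]
        fake = [forger.make(z) for z in noise]
        judge.update(real, fake, lr)    # the judge learns to tell them apart
        forger.update(noise, judge, lr)  # the forger learns to fool the judge
    return forger, judge
```

Calling `train()` runs the contest. In a toy like this, the forger's `shift` should tend to drift toward the center of the real data, though adversarial training is known to oscillate and nothing here guarantees convergence.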

A former employee, Iman Sadeghi, is suing Pinscreen, alleging it faked a presentation of the technology at the SIGGRAPH conference in 2017. MIT Technology Review has seen letters from several experts and SIGGRAPH organizers dismissing those claims.

Pinscreen is working with several big-name clothing retailers that see its technology as a way to let people try garments on without having to visit a physical store. The technology could also be big for videoconferencing, virtual reality, and gaming. Just imagine a Fortnite character that not only looks like you, but also laughs and dances the same way.

Underneath the digital silliness, though, is an important trend: AI is rapidly making advanced image manipulation the province of the smartphone rather than the desktop. FaceApp, developed by a company in Saint Petersburg, Russia, has drawn millions of users, and recent controversy, by offering a one-click way to change a face on your phone. You can add a smile to a photo, remove blemishes, or mess with your age or gender (or someone else’s). Dozens more apps offer similar manipulations at the click of a button.

Not everyone is excited about the prospect of this technology becoming ubiquitous. Li and others are “basically trying to make one-image, mobile, and real-time deepfakes,” says Sam Gregory, director of Witness, a nonprofit focused on video and human rights. “That’s the threat level that worries me, when it [becomes] something that’s less easily controlled and more accessible to a range of actors.”

Fortunately, most deepfakes still look a bit off. A flickering face, a wonky eye, or an odd skin tone makes them easy enough to spot. But just as an expert can remove such flaws, advances in AI promise to smooth them out automatically, making the fake videos both simpler to create and harder to detect.

Even as Li races ahead with digital fakery, he is also troubled by the potential for harm. “We’re sitting in front of a problem,” he says.

Catching imposters

US policymakers are especially concerned about how deepfakes might be used to spread more convincing fake news and misinformation ahead of next year’s presidential election. Earlier this month, the House Intelligence Committee asked Facebook, Google, and Twitter how they planned to deal with the threat of deepfakes. Each company said it was working on the problem, but none offered a solution.

DARPA, the US military’s well-funded research agency, is also worried about the rise of digital manipulation. In 2016, before deepfakes became a thing, DARPA launched a program called Media Forensics, or MediFor, to encourage digital forensics experts to develop automated tools for catching manipulated imagery. A human expert might use a range of methods to spot photographic forgeries, from analyzing inconsistencies in a file’s data or the characteristics of specific pixels to hunting for physical inconsistencies such as a misplaced shadow or an improbable angle.

MediFor is now largely focused on spotting deepfakes. Detection is fundamentally harder than creation because AI algorithms can learn to hide things that give fakes away. Early deepfake detection methods include tracking unnatural blinking and weird lip movements. But the latest deepfakes have already learned to automatically smooth out such glitches.

Earlier this year, Matt Turek, DARPA program manager for MediFor, asked Li to demonstrate his fakes to the MediFor researchers. This led to a collaboration with Hany Farid, a professor at UC Berkeley and one of the world’s foremost authorities on digital forensics. The pair are now engaged in a digital game of cat-and-mouse, with Li developing deepfakes for Farid to catch, and then refining them to evade detection.

Farid, Li, and others recently released a paper outlining a new, more powerful way to spot deepfakes. It hinges on training a machine-learning algorithm to recognize the quirks of a specific individual’s facial expressions and head movements. If you simply paste someone’s likeness onto another face, those features won’t be carried over. It would require a lot of computer power and training data—i.e., images or video of the person—to make a deepfake that incorporates these characteristics. But one day it will be possible. “Technical solutions will continue to improve on the defensive side,” says Turek. “But will that be perfect? I doubt it.”
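The idea of checking a clip against one person's habitual mannerisms can be sketched in a few lines of Python. This is a simplified stand-in, not the method from the paper: it assumes each clip has already been boiled down to a short list of numbers (hypothetical measurements such as how often a head tilt accompanies a smile) and swaps in a plain statistical distance where the researchers train a machine-learning model. The function names and the threshold are invented for illustration.

```python
import math
import statistics

# Hypothetical per-clip measurements of one person's mannerisms.
Features = list[float]
Profile = list[tuple[float, float]]


def build_profile(genuine_clips: list[Features]) -> Profile:
    """Mean and standard deviation of each feature across genuine clips."""
    columns = list(zip(*genuine_clips))
    return [(statistics.mean(col), statistics.stdev(col)) for col in columns]


def anomaly_score(profile: Profile, clip: Features) -> float:
    """Root-mean-square z-score of a clip measured against the profile."""
    zs = [(x - mu) / (sd or 1e-9) for (mu, sd), x in zip(profile, clip)]
    return math.sqrt(sum(z * z for z in zs) / len(zs))


def looks_fake(profile: Profile, clip: Features, threshold: float = 3.0) -> bool:
    """Flag a clip whose mannerisms sit far from the person's usual range."""
    return anomaly_score(profile, clip) > threshold


# Made-up numbers standing in for four genuine clips of the same person.
genuine = [[0.80, 0.10], [0.70, 0.20], [0.90, 0.15], [0.75, 0.05]]
profile = build_profile(genuine)
```

A face pasted onto someone else's performance would inherit that other person's mannerisms, so its measurements would land far from the profile and `looks_fake` would flag it. A forger with enough footage of the target could, in principle, learn to reproduce the profile too.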

Pixel perfect

Back in Dalian, it’s clear that people are starting to wake up to the danger of deepfakes. The morning before I met with Li, a European politician had stepped into the face-swap booth, only for his minders to stop him. They were worried that the system might capture his likeness in detail, making it easier for someone to create fake clips of him.

As he watches people using the booth, Li tells me that there is no technical reason why deepfakes should be detectable. “Videos are just pixels with a certain color value,” he says.

Making them perfect is just a matter of time and resources, and as his collaboration with Farid shows, it’s getting easier all the time. “We are witnessing an arms race between digital manipulations and the ability to detect those,” he says, “with advancements of AI-based algorithms catalyzing both sides.”

The bad news, Li thinks, is that he will eventually win. In a few years, he reckons, undetectable deepfakes could be created with a click. “When that point comes,” he says, “we need to be aware that not every video we see is true.”
