A new way to build tiny neural networks could create powerful AI on your phone

Neural networks are the core software of deep learning. Yet for all their ubiquity, they remain poorly understood. Researchers have observed their emergent properties without actually understanding why they work the way they do.

Now a new paper out of MIT has taken a major step toward explaining why. In the process, the researchers have made a simple but dramatic discovery: we’ve been using neural networks far bigger than we actually need. In some cases they’re 10 or even 100 times bigger, so training them costs orders of magnitude more time and computational power than necessary.

Put another way, within every neural network exists a far smaller one that can be trained to achieve the same performance as its oversize parent. This isn’t just exciting news for AI researchers. The finding has the potential to unlock new applications—some of which we can’t yet fathom—that could improve our day-to-day lives. More on that later.

But first, let’s dive into how neural networks work to understand why this is possible.

How neural networks work

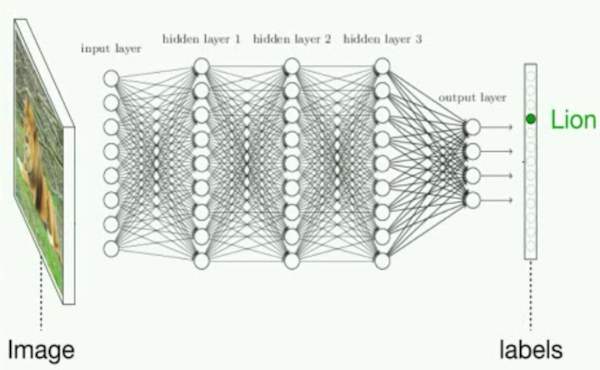

You may have seen neural networks depicted in diagrams like the one above: they’re composed of stacked layers of simple computational nodes, connected to one another so that together they can detect patterns in data.

The connections are what’s important. Before a neural network is trained, these connections are assigned random values between 0 and 1 that represent their intensity. (This is called the “initialization” process.) During training, as the network is fed a series of, say, animal photos, it tweaks and tunes those intensities—sort of like the way your brain strengthens or weakens different neuron connections as you accumulate experience and knowledge. After training, the final connection intensities are then used in perpetuity to recognize animals in new photos.
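The initialize-then-tune cycle described above can be sketched in a few lines. This is a toy illustration only, not the setup used in the paper: it assumes a single node with two connections, plain gradient descent on squared error, and made-up training examples. The names `init_weights`, `predict`, and `train` are hypothetical.

```python
import random

def init_weights(n, seed=0):
    """Initialization: give each connection a random strength in [0, 1)."""
    rng = random.Random(seed)
    return [rng.random() for _ in range(n)]

def predict(weights, inputs):
    """A single node: the weighted sum of its inputs."""
    return sum(w * x for w, x in zip(weights, inputs))

def train(weights, examples, lr=0.1, epochs=200):
    """Training: nudge each strength a little to shrink the error on each example."""
    w = list(weights)
    for _ in range(epochs):
        for inputs, target in examples:
            error = predict(w, inputs) - target
            w = [wi - lr * error * xi for wi, xi in zip(w, inputs)]
    return w

# Made-up data that is consistent with strengths of 2 and -1 (an assumption).
examples = [([1.0, 0.0], 2.0), ([0.0, 1.0], -1.0), ([1.0, 1.0], 1.0)]
initial = init_weights(2)
trained = train(initial, examples)
```

After training, `trained` holds the final strengths, which are then reused unchanged to make predictions on new inputs.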

While the mechanics of neural networks are well understood, the reason they work the way they do has remained a mystery. Through lots of experimentation, however, researchers have observed two properties of neural networks that have proved useful.

Observation #1. When a network is initialized before the training process, there’s always some likelihood that the randomly assigned connection strengths end up in an untrainable configuration. In other words, no matter how many animal photos you feed the neural network, it won’t achieve a decent performance, and you just have to reinitialize it to a new configuration. The larger the network (the more layers and nodes it has), the less likely it is to land in such a configuration. Whereas a tiny neural network may be trainable in only one of every five initializations, a larger network may be trainable in four of every five. Why this happens had been a mystery, but the pattern is the reason researchers typically use very large networks for their deep-learning tasks: they want to increase their chances of achieving a successful model.

Observation #2. The consequence is that a neural network usually starts off bigger than it needs to be. Once it’s done training, typically only a fraction of its connections remain strong, while the others end up pretty weak—so weak that you can actually delete, or “prune,” them without affecting the network’s performance.
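As a rough sketch of what pruning means in practice, the snippet below zeroes out the weakest connections in a list of trained strengths. Ranking connections by absolute value is an assumption here (it is a common criterion, but the article does not specify one), and the function name `prune_smallest` and the sample numbers are made up for illustration.

```python
def prune_smallest(weights, fraction):
    """Zero out the given fraction of connections with the smallest magnitude."""
    n_prune = int(len(weights) * fraction)
    # Indices ordered from the weakest connection to the strongest.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_prune = set(order[:n_prune])
    return [0.0 if i in to_prune else w for i, w in enumerate(weights)]

# Hypothetical strengths after training: a few strong, the rest near zero.
trained = [0.91, -0.02, 0.05, -0.77, 0.01, 0.64]
pruned = prune_smallest(trained, 0.5)
```

Here half of the connections are removed, and the three that survive are the ones that were doing most of the work.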

For many years now, researchers have exploited this second observation to shrink their networks after training to lower the time and computational costs involved in running them. But no one thought it was possible to shrink their networks before training. It was assumed that you had to start with an oversize network and the training process had to run its course in order to separate the relevant connections from the irrelevant ones.

Jonathan Frankle, the MIT PhD student who coauthored the paper, questioned that assumption. “If you need way fewer connections than what you started with,” he says, “why can’t we just train the smaller network without the extra connections?” Turns out you can.

The lottery ticket hypothesis

The discovery hinges on the reality that the random connection strengths assigned during initialization aren’t, in fact, random in their consequences: they predispose different parts of the network to fail or succeed before training even happens. Put another way, the initial configuration influences which final configuration the network will arrive at.

By focusing on this idea, the researchers found that if you prune an oversize network after training, you can actually reuse the resultant smaller network to train on new data and preserve high performance—as long as you reset each connection within this downsized network back to its initial strength.
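The prune-then-reset step might look something like the sketch below. It assumes you saved a copy of the strengths at initialization, that pruning keeps the connections with the largest trained magnitude, and that pruned connections are represented as zeros. The function `winning_ticket` and the numbers are hypothetical, not taken from the paper.

```python
def winning_ticket(initial, trained, keep_fraction):
    """Keep the strongest trained connections, then reset each survivor
    to the strength it had at initialization."""
    n_keep = round(len(trained) * keep_fraction)
    order = sorted(range(len(trained)), key=lambda i: abs(trained[i]), reverse=True)
    keep = set(order[:n_keep])
    mask = [i in keep for i in range(len(trained))]
    # Survivors get their ORIGINAL values back, not their trained ones.
    reset = [w0 if kept else 0.0 for w0, kept in zip(initial, mask)]
    return mask, reset

initial = [0.30, 0.80, 0.10, 0.55, 0.42]   # strengths saved at initialization
trained = [1.20, 0.03, -0.90, 0.02, 0.01]  # strengths after training
mask, ticket = winning_ticket(initial, trained, keep_fraction=0.4)
```

The key detail is the last step: `ticket` reuses the initial values 0.30 and 0.10 for the two surviving connections rather than the trained values 1.20 and -0.90.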

From this finding, Frankle and his coauthor Michael Carbin, an assistant professor at MIT, propose what they call the “lottery ticket hypothesis.” When you randomly initialize a neural network’s connection strengths, it’s almost like buying a bag of lottery tickets. Within your bag, you hope, is a winning ticket—i.e., an initial configuration that will be easy to train and result in a successful model.

This also explains why observation #1 holds true. Starting with a larger network is like buying more lottery tickets. You’re not increasing the amount of power that you’re throwing at your deep-learning problem; you’re simply increasing the likelihood that you will have a winning configuration. Once you find the winning configuration, you should be able to reuse it again and again, rather than continue to replay the lottery.
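The lottery analogy can be made concrete with some simple arithmetic. The sketch below is a toy model, not a result from the paper: it assumes each "ticket" wins independently with the same probability, which real subnetworks almost certainly do not. The function name `chance_of_winner` is made up.

```python
def chance_of_winner(p_single, n_tickets):
    """Probability that at least one of n independent tickets wins."""
    return 1.0 - (1.0 - p_single) ** n_tickets

one_ticket = chance_of_winner(0.2, 1)     # a single ticket with a 1-in-5 chance
eight_tickets = chance_of_winner(0.2, 8)  # eight such tickets
```

Under these assumptions, going from one ticket to eight raises the odds of holding a winner from one in five to a little better than four in five.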

Next steps

This raises a lot of questions. First, how do you find the winning ticket? In their paper, Frankle and Carbin took a brute-force approach of training and pruning an oversize network with one data set to extract the winning ticket for another data set. In theory, there should be much more efficient ways of finding—or even designing—a winning configuration from the start.

Second, what are the training limits of a winning configuration? Presumably, different kinds of data and different deep-learning tasks would require different configurations.

Third, what is the smallest possible neural network that you can get away with while still achieving high performance? Frankle found that through an iterative training and pruning process, he was able to consistently reduce the starting network to between 10% and 20% of its original size. But he thinks there’s a chance for it to be even smaller.
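To get a feel for how an iterative process reaches those sizes, the sketch below counts how many train-and-prune rounds it takes to shrink a network to a target fraction. The 20% pruned per round is an assumed rate chosen for illustration, and `rounds_to_reach` is a hypothetical helper.

```python
def rounds_to_reach(target_fraction, prune_rate=0.2):
    """Count pruning rounds until at most target_fraction of connections remain.

    Each round removes prune_rate of the connections that are still left.
    """
    rounds = 0
    remaining = 1.0
    while remaining > target_fraction:
        remaining *= 1.0 - prune_rate
        rounds += 1
    return rounds

rounds_for_20_percent = rounds_to_reach(0.2)
rounds_for_10_percent = rounds_to_reach(0.1)
```

Because each round removes a share of what is left rather than of the original network, the shrinkage slows down over time, so reaching very small sizes takes many rounds of retraining.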

Already, many research teams within the AI community have begun to conduct follow-up work. A researcher at Princeton recently teased the results of a forthcoming paper addressing the second question. A team at Uber also published a new paper on several experiments investigating the nature of the metaphorical lottery tickets. Most surprising, they found that once a winning configuration has been found, it already achieves significantly better performance than the original untrained oversize network before any training whatsoever. In other words, the act of pruning a network to extract a winning configuration is itself an important method of training.

Neural network nirvana

Frankle imagines a future where the research community will have an open-source database of all the different configurations they’ve found, with descriptions of the tasks they’re good for. He jokingly calls this “neural network nirvana.” He believes it would dramatically accelerate and democratize AI research by lowering the cost and time of training, and by allowing people without giant data servers to do this work directly on small laptops or even mobile phones.

It could also change the nature of AI applications. If you can train a neural network locally on a device instead of in the cloud, you can improve the speed of the training process and the security of the data. Imagine a machine-learning-based medical device, for example, that could improve itself through use without needing to send patient data to Google’s or Amazon’s servers.

“We’re constantly bumping up against the edge of what we can train,” says Jason Yosinski, a founding member of Uber AI Labs who coauthored the follow-up Uber paper, “meaning the biggest networks you can fit on a GPU or the longest we can tolerate waiting before we get a result back.” If researchers could figure out how to identify winning configurations from the get-go, it would reduce the size of neural networks by a factor of 10, even 100. The ceiling of possibility would dramatically increase, opening a new world of potential uses.
