Cookbooks, Wikipedia, and auto-generated Spanglish: The quirky ways AI researchers gather data

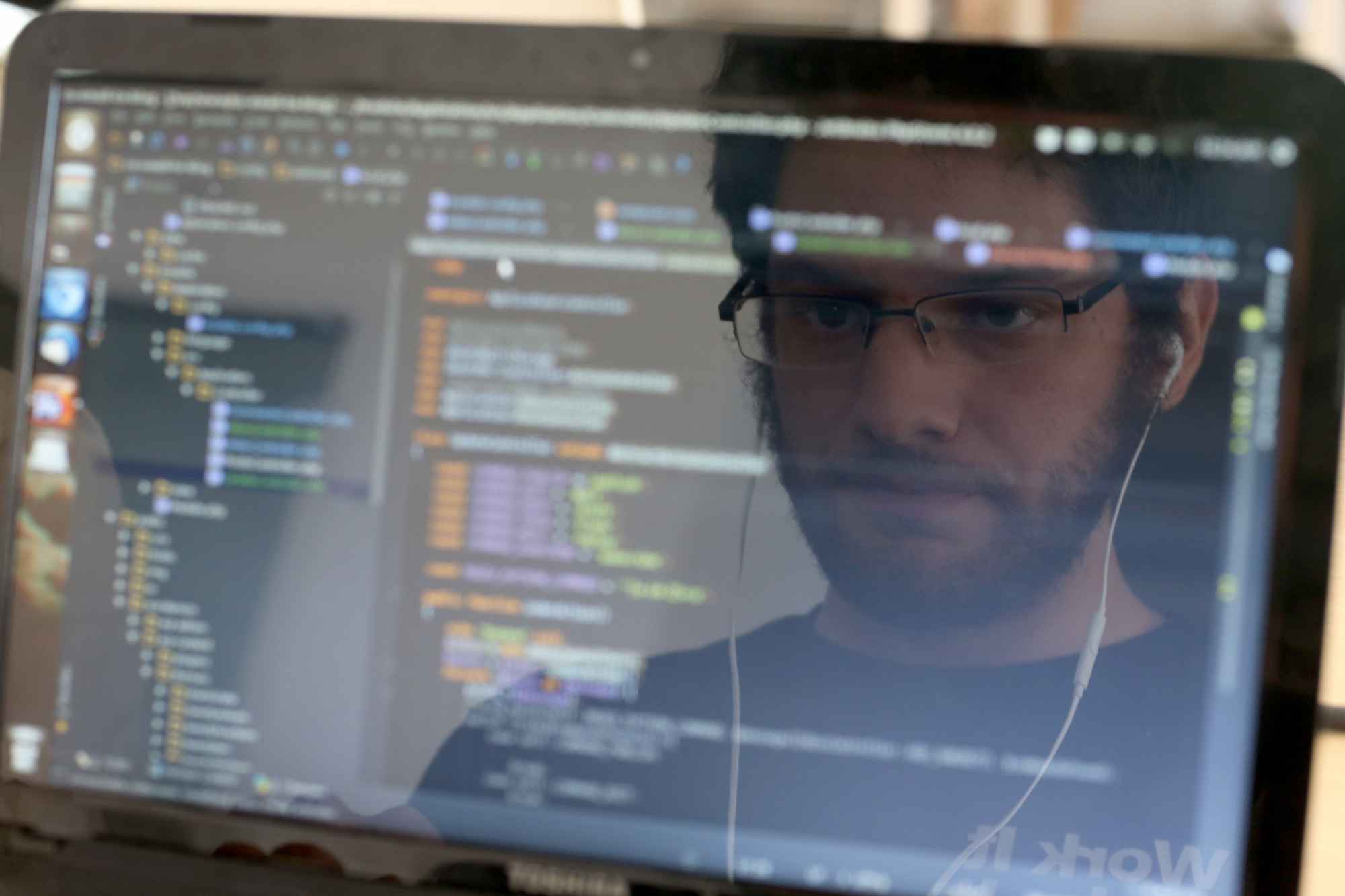

Data is the oil that fuels AI development, and it gives us many of the advances we take for granted: YouTube captions, Spotify music recommendations, those creepy ads that follow you around the Internet.

But when it comes to collecting useful data, AI experts often have to get creative. Take natural-language processing (NLP), a subfield of AI that focuses on teaching computers how to parse human language. At the annual Conference on Empirical Methods in NLP, experts presented a broad range of research that drew on information gathered in some ingenious ways. We’ve summarized four of our favorite projects below.

SPANGLISH

Among the papers on multilingual NLP this year, Microsoft presented one that focused on processing “code-mixed language”—text or speech that switches fluidly between two languages. Considering that more than half of the world’s population is multilingual, this understudied area is important.

The researchers started with Spanglish (Spanish and English), but they lacked enough Spanglish text to train the machine. As common as code-mixing is in multilingual conversation, it’s rarely found in text. To overcome that challenge, the researchers wrote a program to pop English into the Microsoft Bing translator and weave some phrases from the Spanish translation back into the original text. The program made sure the words and phrases that were swapped had the same meaning. Just like that, they were able to create as much Spanglish as they needed.
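As a rough illustration of the swap-and-weave idea described above, here is a minimal Python sketch. It is not the researchers' program: the phrase table is a hypothetical stand-in for a real translation service, and `code_mix`, `swap_prob`, and `seed` are names invented for this example.

```python
import random

# Hypothetical toy phrase table standing in for a real translator.
# Each English phrase maps to a Spanish phrase with the same meaning.
PHRASE_TABLE = {
    "my house": "mi casa",
    "tomorrow": "mañana",
    "the party": "la fiesta",
}

def code_mix(sentence, phrase_table, swap_prob=0.5, seed=0):
    """Swap some English phrases for their Spanish equivalents."""
    rng = random.Random(seed)  # seeded so the output is repeatable
    mixed = sentence
    for english, spanish in phrase_table.items():
        # Only swap phrases that actually occur, and only some of the time.
        if english in mixed and rng.random() < swap_prob:
            mixed = mixed.replace(english, spanish)
    return mixed

example = "We are having the party at my house tomorrow"
fully_mixed = code_mix(example, PHRASE_TABLE, swap_prob=1.0)
print(fully_mixed)
```

With `swap_prob=1.0` every known phrase is swapped; lower values leave more of the English in place, which gives a crude way to vary how heavily the text is mixed.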

The resulting NLP model outperformed previous models that were trained on just Spanish and English separately. The researchers hope that their work will eventually help develop multilingual chatbots that can speak naturally in code-mixed language.

COOKBOOKS

Recipes are great for making food, but they can also provide nourishment for machines. They all follow a similar step-by-step pattern, and they often include pictures that correspond with the text—an excellent source of structured data for teaching machines to comprehend text and images at the same time. That’s why researchers at Hacettepe University in Turkey compiled a giant data set of around 20,000 illustrated cooking recipes. They hope it will be a new resource for benchmarking the performance of joint image-text comprehension.

What they call “RecipeQA” will build on previous research that has focused on machine reading comprehension and visual comprehension separately. In the former, the machine must understand a question and a related passage to find the answer; in the latter, it searches for the answer in a related photo instead. Having text and photos side by side increases the complexity of the task because the photos and text may share complementary or redundant information.

SHORTER SENTENCES

Google wants AI to spruce up your prose. To this end, researchers there created the largest-ever data set for breaking up long sentences into shorter ones with equivalent meaning. Where would you find massive amounts of editing data? Wikipedia, of course.

From Wikipedia’s rich edit history, the research team extracted instances in which people split long sentences. The result: 60 times more distinct sentence-split examples and 90 times more vocabulary words than were found in the previous benchmark data set for this task. The data set also spans multiple languages.
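The article does not say how the team identified split edits, so the following Python sketch is only one plausible heuristic, offered as an assumption: treat an edit as a split if one sentence became two and most of the words survived. The function names and the `min_overlap` threshold are hypothetical.

```python
import re

def split_sentences(text):
    """Naive sentence splitter: break after ., ! or ? followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p.strip() for p in parts if p.strip()]

def tokens(text):
    """Lowercased set of word tokens."""
    return set(re.findall(r"\w+", text.lower()))

def is_split_edit(before, after, min_overlap=0.7):
    """Guess whether `after` is `before` rewritten as two sentences."""
    if len(split_sentences(before)) != 1 or len(split_sentences(after)) != 2:
        return False
    old, new = tokens(before), tokens(after)
    # Jaccard overlap: shared words divided by all words seen in either version.
    overlap = len(old & new) / len(old | new)
    return overlap >= min_overlap

before = "The museum opened in 1990 and it attracts many visitors."
after = "The museum opened in 1990. It attracts many visitors."
print(is_split_edit(before, after))
```

A real pipeline would need a more careful sentence splitter and a check that the meaning is preserved; word overlap is only a cheap proxy for that.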

When they trained a machine-learning model on their new data, it achieved 91% accuracy. (Here, the percentage reflects the proportion of sentences that retained their meaning and grammatical correctness after being rewritten.) In comparison, a model trained on previous data reached only 32% accuracy. When they combined both data sets and trained another model, it achieved 95% accuracy. The researchers concluded that future improvements could be made by finding even more sources of data.

SOCIAL-MEDIA BIAS

Studies have shown that the language we generate can be a great predictor of our race, gender, and age, even if that information is never explicitly stated. With that in mind, researchers at Bar-Ilan University in Israel and the Allen Institute for Artificial Intelligence tried using AI to de-bias text by removing those embedded indicators.

To acquire enough data to represent the language patterns across different demographics, they turned to Twitter. They gathered tweets from users who were evenly distributed between non-Hispanic whites and non-Hispanic blacks; between men and women; and between people in the 18-34 and above-35 age groups.

They then used an adversarial approach—pitting two neural networks against one another—to see if they could automatically remove the inherent demographic indicators within the tweets. One neural net tried to predict the demographics, while the other tried to tweak the text to be completely neutral, with the goal of driving down the first model’s prediction accuracy to 50% (or chance). The approach ultimately mitigated race, gender, and age indicators significantly but not entirely.
