Andrew Ng Has a Chatbot That Can Help with Depression

I’m a little embarrassed to admit this, but I’ve been seeing a virtual therapist.

It’s called Woebot, and it’s a Facebook chatbot developed by Stanford University researchers that offers interactive cognitive behavioral therapy. Andrew Ng, a prominent AI researcher who previously led efforts to develop and apply the latest AI technologies at Google and Baidu, is now lending his backing to the project by joining the board of directors of the company behind it.

“If you look at the societal need, as well as the ability of AI to help, I think that digital mental-health care checks all the boxes,” Ng says. “If we can take a little bit of the insight and empathy [of a real therapist] and deliver that, at scale, in a chatbot, we could help millions of people.”

For the past few days I’ve been trying out its advice for understanding and managing thought processes and for dealing with depression and anxiety. While I don’t think I’m depressed, I found the experience positive. This is especially impressive given how annoying I find most chatbots to be.

“Younger people are the worst served by our current systems,” says Alison Darcy, a clinical research psychologist who came up with the idea for Woebot while teaching at Stanford in July 2016. “It’s also very stigmatized and expensive.”

Darcy, who met Ng at Stanford, says the work going on there in applying techniques like deep learning to conversational agents inspired her to think that therapy could be delivered by a bot. She says it is possible to automate cognitive behavioral therapy because it follows a series of steps for identifying and addressing unhelpful ways of thinking. And recent advances in natural-language processing have helped make chatbots more useful within limited domains.

Depression is certainly a big problem. It is now the leading form of disability in the U.S., and 50 percent of U.S. college students report suffering from anxiety or depression.

Darcy and colleagues tried several different prototypes on college volunteers, and they found the chatbot approach to be particularly effective. In a study they published this year in a peer-reviewed medical journal, Woebot was found to reduce the symptoms of depression in students over the course of two weeks.

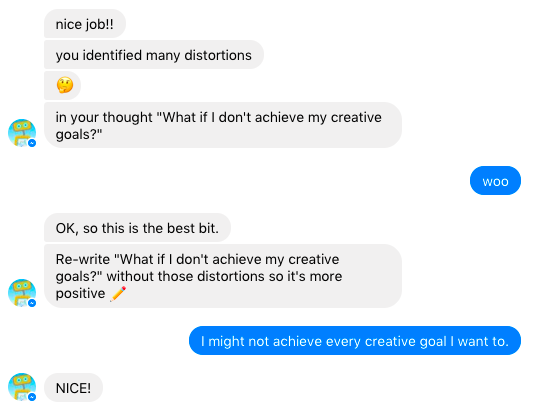

In my own testing, I found Woebot to be surprisingly good at what it does. A chatbot might seem like a crude way to deliver therapy, especially given how clumsy many virtual helpers are. But Woebot works smoothly thanks to a clever interface and some pretty impressive natural-language technology. The software states up front that no person will see your answers, but it also offers ways of reaching someone if your situation is serious. I mostly used predefined answers that it offered me, but even when I strayed from the script a little, it didn’t get tripped up. If you try, though, I’m sure it’s possible to flummox it.

You are guided through conversations with Woebot, but the system is able to understand a pretty wide range of answers. It checks in with you every day and directs you through the steps. For example, when I tried telling Woebot I was stressed about work, the bot offered ways of reframing my feelings to make them seem more positive.

The emergence of a real AI therapist is, in a sense, pretty ironic. The very first chatbot, Eliza, developed at MIT in 1966 by Joseph Weizenbaum, was designed to mimic a “Rogerian psychologist.” Eliza used a few clever tricks to create the illusion of an intelligent conversation—for example, repeating answers back to a person or offering open-ended questions such as “In what way?” and “Can you think of a specific example?” Weizenbaum was amazed to find that people seemed to believe they were talking to a real therapist, and that some offered up very personal secrets.
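The reflection trick Weizenbaum relied on can be illustrated in a few lines of Python. This is a minimal sketch, not Eliza’s original script and not anything from Woebot: the pattern rules, the pronoun table `REFLECTIONS`, and the functions `reflect` and `respond` are hypothetical, chosen only to show how echoing a statement back, plus a stock open-ended question, can pass for attentive listening.

```python
import re

# Hypothetical pronoun table: swaps first and second person so the
# user's own words can be "reflected" back at them.
REFLECTIONS = {
    "i": "you",
    "me": "you",
    "my": "your",
    "am": "are",
    "i'm": "you're",
    "you": "I",
    "your": "my",
}

# Open-ended fallbacks, taken from the article's description of Eliza.
FALLBACKS = ["In what way?", "Can you think of a specific example?"]


def reflect(text: str) -> str:
    """Lowercase the text, split it into words, and swap pronouns."""
    words = re.findall(r"[\w']+", text.lower())
    return " ".join(REFLECTIONS.get(w, w) for w in words)


def respond(text: str, turn: int) -> str:
    """Return a canned reply; no understanding of the input is involved."""
    # Rule 1 (hypothetical): "I feel X" -> ask why, echoing X back.
    m = re.match(r"\s*i feel (.+?)[.!?]*\s*$", text, re.IGNORECASE)
    if m:
        return f"Why do you feel {reflect(m.group(1))}?"
    # Rule 2 (hypothetical): "I am X" -> ask how long, echoing X back.
    m = re.match(r"\s*i am (.+?)[.!?]*\s*$", text, re.IGNORECASE)
    if m:
        return f"How long have you been {reflect(m.group(1))}?"
    # No rule matched: cycle through the open-ended prompts.
    return FALLBACKS[turn % len(FALLBACKS)]
```

For example, `respond("I feel stressed about my work.", 0)` returns `"Why do you feel stressed about your work?"`, while an input that matches no rule falls back to "In what way?". The program only rearranges the user’s own words, which is what made the reactions Weizenbaum observed so striking.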

Darcy also says both Eliza and Woebot are effective because a conversation is a natural way to communicate distress and receive emotional support. She adds that people seem happy to suspend their disbelief, and seem to enjoy talking to Woebot as if it were a real therapist. “People talk about their problems for a reason,” she says. “Therapy is conversational.”

Ng says he expects AI to deliver further advances in language in coming years, but that the technology will remain relatively crude (see “AI’s Language Problem”). Still, he says, better ways of parsing the meaning of language will make the tool more effective. Some other mental-health experts also seem positive about the prospect of applying such technology to treatment.

“To the extent that the Woebot can replicate the way that a therapist can help explain concepts and facilitate trying out new coping skills, this approach may be even more helpful than working through a workbook,” says Michael Thase, a professor of psychiatry at the University of Pennsylvania and an expert on cognitive behavioral therapy. “There is good evidence that people with milder levels of depression can benefit from various kinds of online or Web-based therapy approaches.”

But Thase adds that studies have shown such technology to work best in conjunction with help from a real person. “Some time with a real therapist is helpful,” he says.
