Mind-Reading Algorithms Reconstruct What You’re Seeing Using Brain-Scan Data

One of the more interesting goals in neuroscience is to reconstruct perceived images by analyzing brain scans. The idea is to work out what people are looking at by monitoring the activity in their visual cortex.

The difficulty, of course, is finding ways to efficiently process the data from functional magnetic resonance imaging (fMRI) scans. The task is to map the activity in three-dimensional voxels inside the brain to two-dimensional pixels in an image.

That turns out to be hard. fMRI scans are famously noisy, and the activity in one voxel is well known to be influenced by activity in other voxels. This kind of correlation is computationally expensive to deal with; indeed, most approaches simply ignore it. And that significantly reduces the quality of the image reconstructions they produce.

So an important goal is to find better ways to crunch the data from fMRI scans and so produce more accurate brain-image reconstructions.

Today, Changde Du at the Research Center for Brain-Inspired Intelligence in Beijing, China, and a couple of pals say they have developed just such a technique. Their trick is to process the data using deep-learning techniques that handle nonlinear correlations between voxels more capably. The result is a much better way to reconstruct the way a brain perceives images.

Changde and co start with several data sets of fMRI scans of the visual cortex of a human subject looking at a simple image—a single digit or a single letter, for example. Each data set consists of the scans and the original image.

The task is to find a way to use the fMRI scans to reproduce the perceived image. In total, the team has access to over 1,800 fMRI scans and original images.

They treat this as a straightforward deep-learning task. They use 90 percent of the data to train the network to understand the correlation between the brain scan and the original image.

They then test the network on the remaining data by feeding it the scans and asking it to reconstruct the original images.
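The train-then-test procedure can be sketched in a few lines of Python. This is only an illustration under loud assumptions: the data here is synthetic, the array sizes and the helper names `split_90_10` and `fit_ridge` are made up for the example, and a simple ridge regression stands in for the team's deep network, which it does not attempt to reproduce.

```python
import numpy as np

def split_90_10(scans, images, seed=0):
    """Shuffle paired scans and images, then split them 90% train / 10% test."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(len(scans))
    cut = (len(scans) * 9) // 10
    train, test = order[:cut], order[cut:]
    return scans[train], images[train], scans[test], images[test]

def fit_ridge(X, Y, alpha=1.0):
    """Closed-form ridge regression: W = (X^T X + alpha*I)^-1 X^T Y."""
    return np.linalg.solve(X.T @ X + alpha * np.eye(X.shape[1]), X.T @ Y)

# Synthetic stand-in for real data (all sizes are hypothetical):
# a few hidden factors drive both the "image" pixels and the "voxel" activity.
rng = np.random.default_rng(42)
n_samples, n_voxels, n_pixels, n_latent = 1800, 50, 100, 5
latent = rng.normal(size=(n_samples, n_latent))
images = latent @ rng.normal(size=(n_latent, n_pixels))
scans = (latent @ rng.normal(size=(n_latent, n_voxels))
         + 0.1 * rng.normal(size=(n_samples, n_voxels)))

# Train on 90 percent of the pairs, reconstruct images for the held-out 10 percent.
X_train, Y_train, X_test, Y_test = split_90_10(scans, images)
W = fit_ridge(X_train, Y_train)
reconstructed = X_test @ W

# Compare against a trivial baseline that always predicts the average training image.
test_mse = float(np.mean((reconstructed - Y_test) ** 2))
baseline_mse = float(np.mean((Y_train.mean(axis=0) - Y_test) ** 2))
print(f"decoder MSE: {test_mse:.4f}, mean-image baseline MSE: {baseline_mse:.4f}")
```

The key point is that the held-out scans are never seen during fitting, so the reconstruction error on them is an honest measure of how well the mapping generalizes.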

The big advantage of this approach is that the network learns which voxels to use to reconstruct the image. That avoids the need to process the data from them all.

It also learns how the data from these voxels is correlated. That’s important because if the correlations are ignored, they end up being treated like noise and discarded. So the new approach—the so-called deep generative multiview model—exploits these correlations and distinguishes them from real noise.
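To see why correlated voxels differ from plain noise, consider a toy example. It is a sketch on synthetic numbers, not the team's model: the "voxels" below are invented, and the helper `mean_offdiag_corr` is a hypothetical name introduced for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n_scans, n_voxels = 2000, 5

# Correlated voxels: each one sees the same underlying signal plus private noise.
shared_signal = rng.normal(size=(n_scans, 1))
correlated = shared_signal + 0.5 * rng.normal(size=(n_scans, n_voxels))

# Pure noise: every voxel fluctuates independently of the others.
independent = rng.normal(size=(n_scans, n_voxels))

def mean_offdiag_corr(data):
    """Average absolute correlation between distinct voxels (columns)."""
    corr = np.corrcoef(data, rowvar=False)
    off_diagonal = ~np.eye(corr.shape[0], dtype=bool)
    return float(np.mean(np.abs(corr[off_diagonal])))

print("correlated voxels:", round(mean_offdiag_corr(correlated), 2))
print("independent noise:", round(mean_offdiag_corr(independent), 2))
```

The first group shows strong voxel-to-voxel correlation because of the shared signal, while the second shows almost none. A method that treats every voxel in isolation cannot tell these two situations apart.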

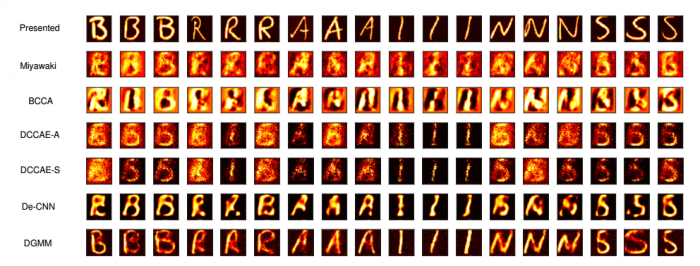

To evaluate the deep generative multiview model, Changde and co compare its results with those of a number of other brain image reconstruction techniques. They do this using standard image comparison methods to see how closely the reconstructed images match the originals.
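The article does not name the comparison methods, so the following is an assumption: mean squared error and Pearson correlation are two widely used ways to score how closely one image matches another, and a minimal sketch of both looks like this. The tiny 4x4 "images" are invented for the example.

```python
import numpy as np

def mean_squared_error(original, reconstruction):
    """Average squared pixel difference; 0 means identical images."""
    a = np.asarray(original, dtype=float)
    b = np.asarray(reconstruction, dtype=float)
    return float(np.mean((a - b) ** 2))

def pearson_correlation(original, reconstruction):
    """Pearson correlation of pixel values; 1 means a perfect linear match."""
    a = np.asarray(original, dtype=float).ravel()
    b = np.asarray(reconstruction, dtype=float).ravel()
    a = a - a.mean()
    b = b - b.mean()
    denom = np.sqrt((a ** 2).sum() * (b ** 2).sum())
    return float((a @ b) / denom) if denom > 0 else 0.0

# A toy 4x4 "image" containing a vertical bar, and its photographic negative.
original = np.zeros((4, 4))
original[:, 1] = 1.0
inverted = 1.0 - original

print(mean_squared_error(original, original), pearson_correlation(original, original))
print(mean_squared_error(original, inverted), pearson_correlation(original, inverted))
```

Lower error and higher correlation both indicate a closer match, which is why reporting more than one metric gives a fuller picture of reconstruction quality.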

The results make for interesting reading. In general, the reconstructed images are clear representations of the originals. In many cases, they are significantly more accurate than the reconstructions other techniques produce.

The image comparison metrics confirm this. “Extensive experimental comparisons demonstrate that our approach can reconstruct visual images from fMRI measurements more accurately,” say Changde and co.

That’s interesting work with significant implications. The ability to reconstruct brain images is an important stepping stone in the work to create better brain-machine interfaces. Next steps will include ways to analyze more complex scenes and moving images. Changde and co say their approach could also be applied to other brain encoding problems such as audio and physical tasks.

Beyond that, who knows? From here, it is only a short imaginative leap to brain-scan techniques that reveal what people are thinking or dreaming. Just imagine!

Ref: arxiv.org/abs/1704.07575: Sharing Deep Generative Representation for Perceived Image Reconstruction from Human Brain Activity
