How AI experts are using GPT-4

Plus: Chinese tech giant Baidu just released its answer to ChatGPT.

This story originally appeared in The Algorithm, our weekly newsletter on AI.

WOW, last week was intense. Several leading AI companies had major product releases. Google said it was giving developers access to its AI language models, and AI startup Anthropic unveiled its AI assistant Claude. But one announcement outshone them all: OpenAI’s new multimodal large language model, GPT-4. My colleague Will Douglas Heaven got an exclusive preview. Read about his initial impressions.

Unlike OpenAI’s viral hit ChatGPT, which is freely accessible to the general public, GPT-4 is currently accessible only to developers. It’s still early days for the tech, and it’ll take a while for it to feed through into new products and services. Still, people are already testing its capabilities out in the open. Here are my top picks of the fun ways they’re doing that.

Hustling

In an example that went viral on Twitter, Jackson Greathouse Fall, a brand designer, asked GPT-4 to make as much money as possible with an initial budget of $100. Fall said he acted as a “human liaison” and bought anything the computer program told him to.

GPT-4 suggested he set up an affiliate marketing site to make money by promoting links to other products (in this instance, eco-friendly ones). Fall then asked GPT-4 to come up with prompts that would allow him to create a logo using OpenAI’s image-generating AI system DALL-E 2. Fall also asked GPT-4 to generate content and allocate money for social media advertising.

The stunt attracted lots of attention from people on social media wanting to invest in his GPT-4-inspired marketing business, and Fall ended up with $1,378.84 cash on hand. This is obviously a publicity stunt, but it’s also a cool example of how the AI system can be used to help people come up with ideas.

Productivity

Big tech companies really want you to use AI at work. This is probably the way most people will experience and play around with the new technology. Microsoft wants you to use GPT-4 in its Office suite to summarize documents and help with PowerPoint presentations—just as we predicted in January, which already seems like eons ago.

Not so coincidentally, Google announced it will embed similar AI tech in its office products, including Google Docs and Gmail. That will help people draft emails, proofread texts, and generate images for presentations.

Health care

I spoke with Nikhil Buduma and Mike Ng, the cofounders of Ambience Health, which is funded by OpenAI. The startup uses GPT-4 to generate medical documentation based on provider-patient conversations. Their pitch is that it will alleviate doctors’ workloads by removing tedious bits of the job, such as data entry.

Buduma says GPT-4 is much better at following instructions than its predecessors. But it’s still unclear how well it will fare in a domain like health care, where accuracy really matters. OpenAI says it has addressed some of the flaws that AI language models are known to have, but GPT-4 is still not completely free of them. It makes stuff up and confidently presents falsehoods as facts. It’s still biased. That’s why the only way to deploy these models safely is to make sure human experts are steering them and correcting their mistakes, says Ng.

Writing code

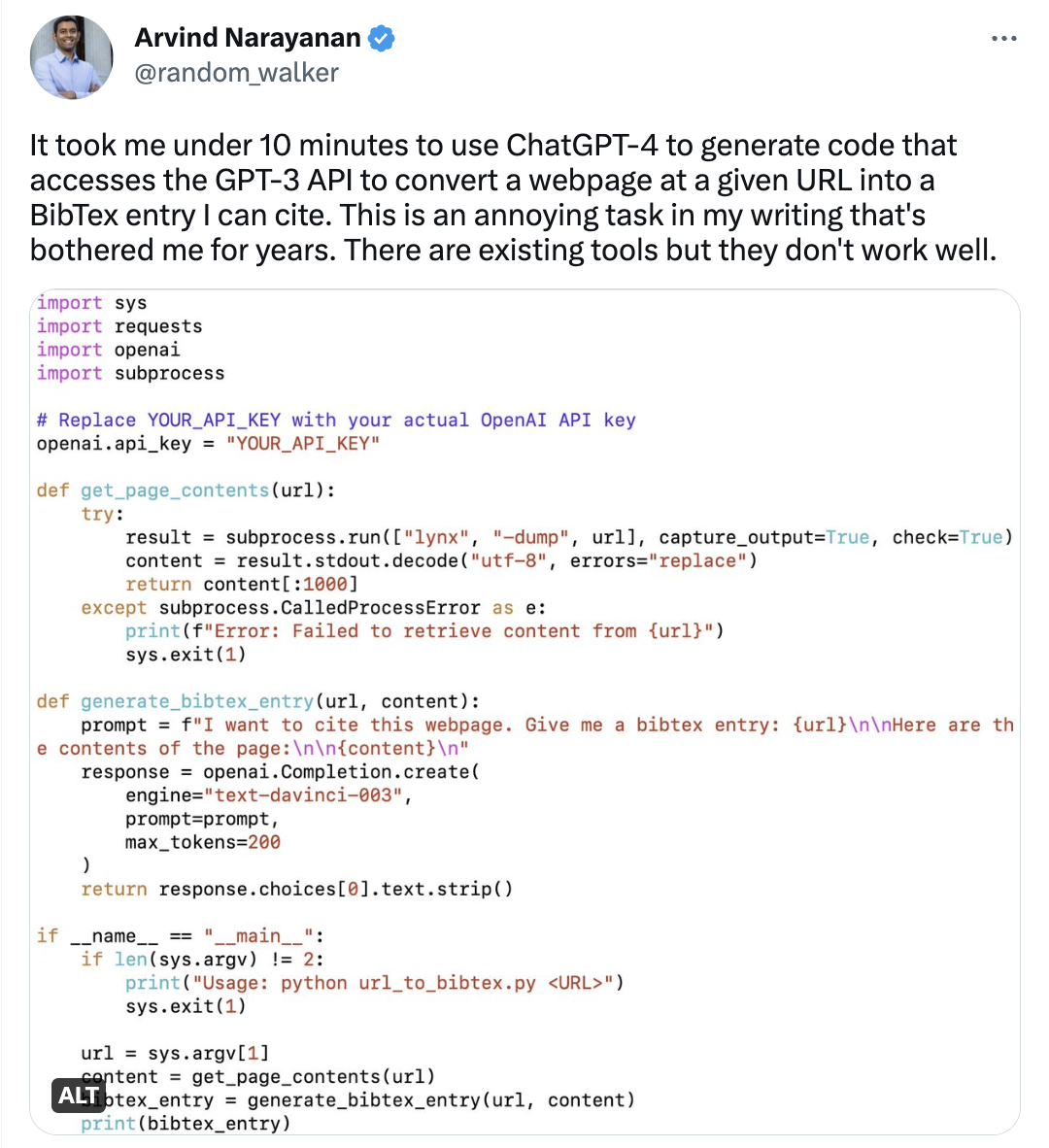

Arvind Narayanan, a computer science professor at Princeton University, says it took him less than 10 minutes to get GPT-4 to generate code that converts URLs to citations.

Narayanan says he’s been testing AI tools for text generation, image generation, and code generation, and that he finds code generation to be the most useful application. “I think the benefit of LLM [large language model] code generation is both time saved and psychological,” he tweeted.

In a demo, OpenAI cofounder Greg Brockman used GPT-4 to create a website based on a very simple image of a design he drew on a napkin. As Narayanan points out, this is exactly where the power of these AI systems lies: automating mundane, low-stakes, yet time-consuming tasks.

Writing books

Reid Hoffman, cofounder and executive chairman of LinkedIn and an early investor in OpenAI, says he used GPT-4 to help write a book called Impromptu: Amplifying Our Humanity through AI. Hoffman reckons it’s the first book cowritten by GPT-4. (Its predecessor ChatGPT has been used to create tons of books.)

Hoffman got access to the system last summer and has since been writing up his thoughts on the different ways the AI model could be used in education, the arts, the justice system, journalism, and more. In the book, which includes copy-pasted extracts from his interactions with the system, he outlines his vision for the future of AI, uses GPT-4 as a writing assistant to get new ideas, and analyzes its answers.

A quick final word … GPT-4 is the cool new shiny toy of the moment for the AI community. There’s no denying it is a powerful assistive technology that can help us come up with ideas, condense text, explain concepts, and automate mundane tasks. That’s a welcome development, especially for white-collar knowledge workers.

However, it’s notable that OpenAI itself urges caution around use of the model and warns that it poses several safety risks, including infringing on privacy, fooling people into thinking it’s human, and generating harmful content. It also has the potential to be used for other risky behaviors we haven’t encountered yet. So by all means, get excited, but let’s not be blinded by the hype. At the moment, there is nothing stopping people from using these powerful new models to do harmful things, and nothing to hold them accountable if they do.

Deeper Learning

Chinese tech giant Baidu just released its answer to ChatGPT

So. Many. Chatbots. The latest player to enter the AI chatbot game is Chinese tech giant Baidu. Late last week, Baidu unveiled a new large language model called Ernie Bot, which can solve math questions, write marketing copy, answer questions about Chinese literature, and generate multimedia responses.

A Chinese alternative: Ernie Bot (the name stands for “Enhanced Representation from kNowledge IntEgration”; its Chinese name is 文心一言, or Wenxin Yiyan) performs particularly well on tasks specific to Chinese culture, like explaining a historical fact or writing a traditional poem. Read more from my colleague Zeyi Yang.

Even Deeper Learning

Language models may be able to “self-correct” biases—if you ask them to

Large language models are infamous for spewing toxic biases, thanks to the reams of awful human-produced content they get trained on. But if the models are large enough, they may be able to self-correct for some of these biases. Remarkably, all we might have to do is ask.

That’s a fascinating new finding by researchers at AI lab Anthropic, who tested a range of language models of different sizes and with different amounts of training. The work raises the obvious question of whether this “self-correction” could and should be baked into language models from the start. Read the full story by Niall Firth to find out more.

Bits and Bytes

Google made its generative AI tools available for developers

Another Google announcement got overshadowed by the OpenAI hype train: the company has made some of its powerful AI technology available to developers through an API that lets them build products on top of its large language model PaLM. (Google)

Midjourney’s text-to-image AI has finally mastered hands

Image-generating AI systems are going to get ridiculously good this year. Exhibit A: The latest iteration of text-to-image AI system Midjourney can now create pictures of humans with five fingers. Until now, mangled digits were a telltale sign an image was generated by a computer program. The upshot of all this is that it’s only going to become harder and harder to work out what’s real and what’s not. (Ars Technica)

A new tool could let artists protect their images from being scraped for AI

Researchers at the University of Chicago have released a tool that allows artists to add a sort of protective digital layer to their work that prevents it from being used to train image-generating AI models. (University of Chicago)

Runway launched a more powerful text-to-video AI system

Advances in generative AI just keep coming. Runway, the video-editing startup that co-created the text-to-image model Stable Diffusion, has released a significant update to its generative video-making software one month after launching the previous version. The new model, called Gen-2, improves on Gen-1, which Will Douglas Heaven has written about, by upping the quality of its generated video and adding the ability to generate videos from scratch with only a text prompt.

Thanks for reading!

Melissa

MIT Technology Review