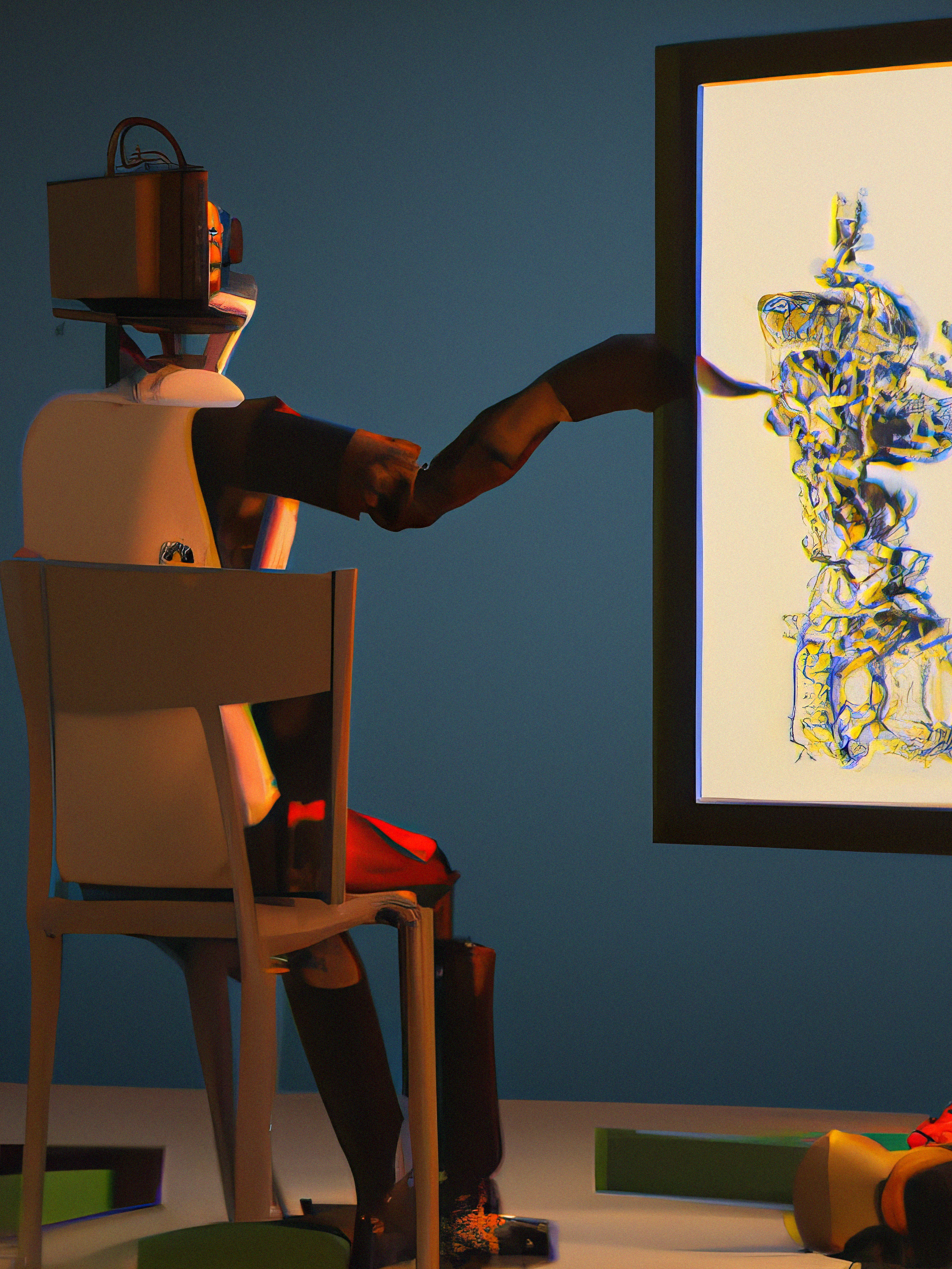

AI that makes images: 10 Breakthrough Technologies 2023

AI models that generate stunning imagery from simple phrases are evolving into powerful creative and commercial tools.

WHO

OpenAI, Stability AI, Midjourney, Google

WHEN

Now

OpenAI introduced a world of weird and wonderful mash-ups when its text-to-image model DALL-E was released in 2021. Type in a short description of pretty much anything, and the program spat out a picture of what you asked for in seconds. DALL-E 2, unveiled in April 2022, was a massive leap forward. Google also launched its own image-making AI, called Imagen.

Yet the biggest game-changer was Stable Diffusion, an open-source text-to-image model released for free by UK-based startup Stability AI in August 2022. Not only could Stable Diffusion produce some of the most stunning images yet, but it was designed to run on a (good) home computer.

By making text-to-image models accessible to all, Stability AI poured fuel on what was already an inferno of creativity and innovation. Millions of people have created tens of millions of images in just a few months. But there are problems, too. Artists are caught in the middle of one of the biggest upheavals in a decade. And, just like language models, text-to-image generators can amplify the biased and toxic associations buried in training data scraped from the internet.

The tech is now being built into commercial software, such as Photoshop. Visual-effects artists and video-game studios are exploring how it can fast-track development pipelines. And text-to-image technology has already advanced to text-to-video. The AI-generated video clips demoed by Google, Meta, and others in the last few months are only seconds long, but that will change. One day movies could be made just by feeding a script into a computer.

Nothing else in AI grabbed people’s attention more last year—for the best and worst reasons. Now we wait to see what lasting impact these tools will have on creative industries—and the entire field of AI.

No one knows where the rise of generative AI will leave us.

