How to solve AI’s inequality problem

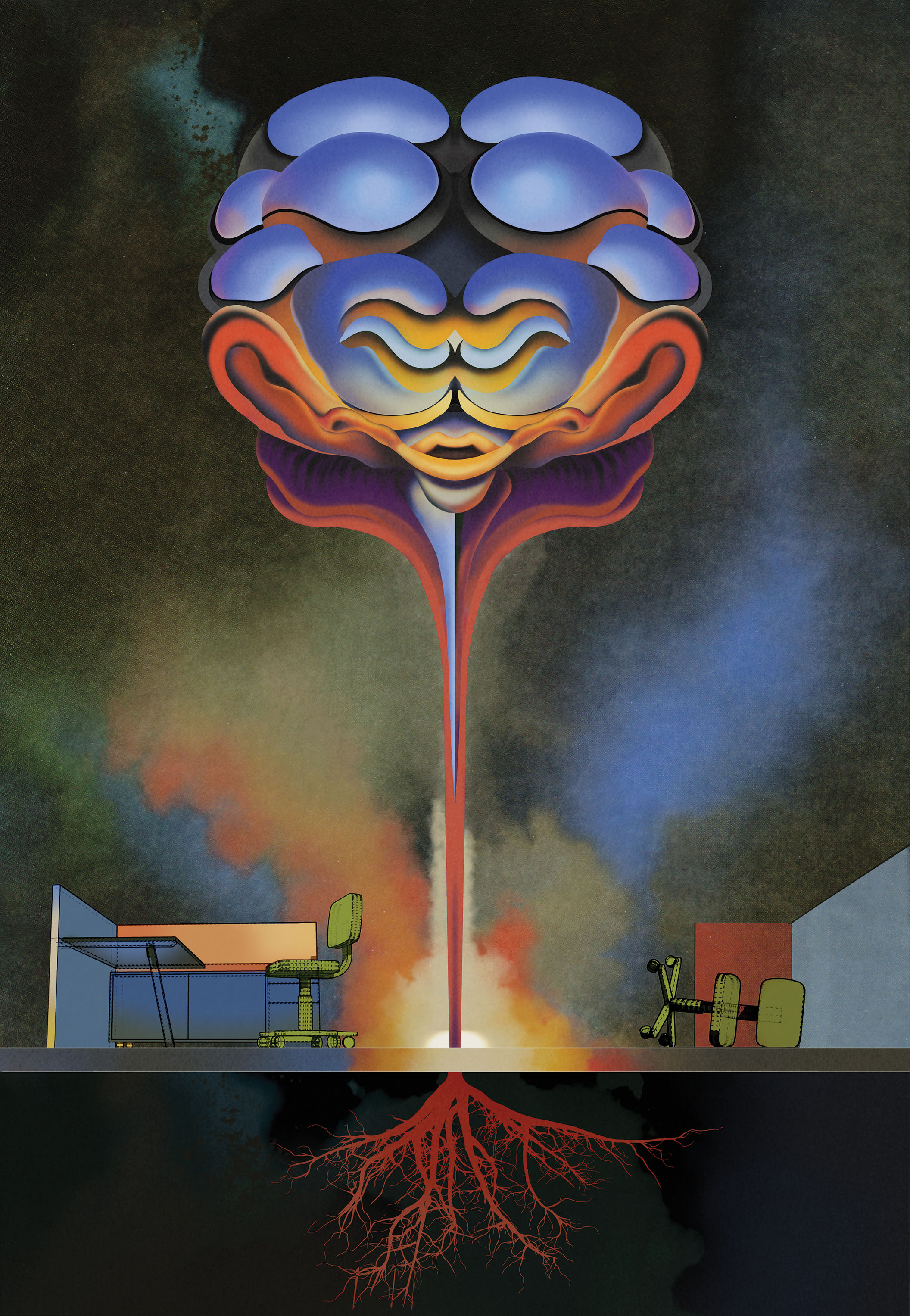

New digital technologies are exacerbating inequality. Here’s how scientists creating AI can make better choices.

The economy is being transformed by digital technologies, especially in artificial intelligence, that are rapidly changing how we live and work. But this transformation poses a troubling puzzle: these technologies haven’t done much to grow the economy, even as income inequality worsens. Productivity growth, which economists consider essential to improving living standards, has largely been sluggish since at least the mid-2000s in many countries.

Why are these technologies failing to produce more economic growth? Why aren’t they fueling more widespread prosperity? To get at an answer, some leading economists and policy experts are looking more closely at how we invent and deploy AI and automation—and identifying ways we can make better choices.

In an essay called “The Turing Trap: The Promise & Peril of Human-Like Artificial Intelligence,” Erik Brynjolfsson, director of the Stanford Digital Economy Lab, writes of the way AI researchers and businesses have focused on building machines to replicate human intelligence. The title, of course, is a reference to Alan Turing and his famous 1950 test for whether a machine is intelligent: Can it imitate a person so well that you can’t tell it isn’t one? Ever since then, says Brynjolfsson, many researchers have been chasing this goal. But, he says, the obsession with mimicking human intelligence has led to AI and automation that too often simply replace workers, rather than extending human capabilities and allowing people to do new tasks.

For Brynjolfsson, an economist, simple automation, while producing value, can also be a path to greater inequality of income and wealth. The excessive focus on human-like AI, he writes, drives down wages for most people “even as it amplifies the market power of a few” who own and control the technologies. The emphasis on automation rather than augmentation is, he argues in the essay, the “single biggest explanation” for the rise of billionaires at a time when average real wages for many Americans have fallen.

Brynjolfsson is no Luddite. His 2014 book, coauthored with Andrew McAfee, is called The Second Machine Age: Work, Progress, and Prosperity in a Time of Brilliant Technologies. But he says the thinking of AI researchers has been too limited. “I talk to many researchers, and they say: ‘Our job is to make a machine that is like a human.’ It’s a clear vision,” he says. But, he adds, “it’s also kind of a lazy, low bar.”

In the long run, he argues, far more value is created by using AI to produce new goods and services, rather than simply trying to replace workers. But he says that for businesses, driven by a desire to cut costs, it’s often easier to just swap in a machine than to rethink processes and invest in technologies that take advantage of AI to expand the company’s products and improve the productivity of its workers.

Recent advances in AI have been impressive, leading to everything from driverless cars to human-like language models. Guiding the trajectory of the technology is critical, however. Because of the choices that researchers and businesses have made so far, new digital technologies have created vast wealth for those owning and inventing them, while too often destroying opportunities for those in jobs vulnerable to being replaced. These inventions have generated good tech jobs in a handful of cities, like San Francisco and Seattle, while much of the rest of the population has been left behind. But it doesn’t have to be that way.

Daron Acemoglu, an MIT economist, provides compelling evidence for the role that automation, robots, and algorithms replacing tasks done by human workers have played in slowing wage growth and worsening inequality in the US. In fact, he says, 50 to 70% of the growth in US wage inequality between 1980 and 2016 was caused by automation.

That’s mostly before the surge in the use of AI technologies. And Acemoglu worries that AI-based automation will make matters even worse. Early in the 20th century and during previous periods, shifts in technology typically produced more good new jobs than they destroyed, but that no longer seems to be the case. One reason is that companies are often choosing to deploy what he and his collaborator Pascual Restrepo call “so-so technologies,” which replace workers but do little to improve productivity or create new business opportunities.

At the same time, businesses and researchers are largely ignoring the potential of AI technologies to expand the capabilities of workers while delivering better services. Acemoglu points to digital technologies that could allow nurses to diagnose illnesses more accurately or help teachers provide more personalized lessons to students.

Government, AI scientists, and Big Tech are all guilty of making decisions that favor excessive automation, says Acemoglu. Federal tax policies favor machines. While human labor is heavily taxed, there is no payroll tax on robots or automation. And, he says, AI researchers have “no compunction [about] working on technologies that automate work at the expense of lots of people losing their jobs.”

But he reserves his strongest ire for Big Tech, citing data indicating that US and Chinese tech giants fund roughly two-thirds of AI work. “I don’t think it’s an accident that we have so much emphasis on automation when the future of technology in this country is in the hands of a few companies like Google, Amazon, Facebook, Microsoft, and so on that have algorithmic automation as their business model,” he says.

Backlash

Anger over AI’s role in exacerbating inequality could endanger the technology’s future. In her new book Cogs and Monsters: What Economics Is, and What It Should Be, Diane Coyle, an economist at Cambridge University, argues that the digital economy requires new ways of thinking about progress. “Whatever we mean by the economy growing, by things getting better, the gains will have to be more evenly shared than in the recent past,” she writes. “An economy of tech millionaires or billionaires and gig workers, with middle-income jobs undercut by automation, will not be politically sustainable.”

Improving living standards and increasing prosperity for more people will require greater use of digital technologies to boost productivity in various sectors, including health care and construction, says Coyle. But people can’t be expected to embrace the changes if they’re not seeing the benefits—if they’re just seeing good jobs being destroyed.

In a recent interview with MIT Technology Review, Coyle said she fears that tech’s inequality problem could be a roadblock to deploying AI. “We’re talking about disruption,” she says. “These are transformative technologies that change the ways we spend our time every day, that change business models that succeed.” To make such “tremendous changes,” she adds, you need social buy-in.

Instead, says Coyle, resentment is simmering among many as the benefits are perceived to go to elites in a handful of prosperous cities.

In the US, for instance, during much of the 20th century the various regions of the country were—in the language of economists—“converging,” and financial disparities decreased. Then, in the 1980s, came the onslaught of digital technologies, and the trend reversed itself. Automation wiped out many manufacturing and retail jobs. New, well-paying tech jobs were clustered in a few cities.

According to the Brookings Institution, a short list of eight American cities that included San Francisco, San Jose, Boston, and Seattle had roughly 38% of all tech jobs by 2019. New AI technologies are particularly concentrated: Brookings’s Mark Muro and Sifan Liu estimate that just 15 cities account for two-thirds of the AI assets and capabilities in the United States (San Francisco and San Jose alone account for about one-quarter).

The dominance of a few cities in the invention and commercialization of AI means that geographical disparities in wealth will continue to soar. Not only will this foster political and social unrest, but it could, as Coyle suggests, hold back the sorts of AI technologies needed for regional economies to grow.

Part of the solution could lie in somehow loosening the stranglehold that Big Tech has on defining the AI agenda. That will likely take increased federal funding for research independent of the tech giants. Muro and others have suggested hefty federal funding to help create US regional innovation centers, for example.

A more immediate response is to broaden our digital imaginations to conceive of AI technologies that don’t simply replace jobs but expand opportunities in the sectors that different parts of the country care most about, like health care, education, and manufacturing.

Changing minds

The fondness that AI and robotics researchers have for replicating the capabilities of humans often means trying to get a machine to do a task that’s easy for people but daunting for the technology. Making a bed, for example, or an espresso. Or driving a car. Seeing an autonomous car navigate a city’s street or a robot act as a barista is amazing. But too often, the people who develop and deploy these technologies don’t give much thought to the potential impact on jobs and labor markets.

Anton Korinek, an economist at the University of Virginia and a Rubenstein Fellow at Brookings, says the tens of billions of dollars that have gone into building autonomous cars will inevitably have a negative effect on labor markets once such vehicles are deployed, taking the jobs of countless drivers. What if, he asks, those billions had been invested in AI tools that would be more likely to expand labor opportunities?

When applying for funding at places like the US National Science Foundation and the National Institutes of Health, Korinek explains, “no one asks, ‘How will it affect labor markets?’”

Katya Klinova, a policy expert at the Partnership on AI in San Francisco, is working to get AI scientists to rethink how they measure success. “When you look at AI research, and you look at the benchmarks that are used pretty much universally, they’re all tied to matching or comparing to human performance,” she says. That is, AI scientists grade their programs in, say, image recognition against how well a person can identify an object.

Such benchmarks have driven the direction of the research, Klinova says. “It’s no surprise that what has come out is automation and more powerful automation,” she adds. “Benchmarks are super important to AI developers—especially for young scientists, who are entering en masse into AI and asking, ‘What should I work on?’”

But benchmarks for the performance of human-machine collaborations are lacking, says Klinova, though she has begun working to help create some. Collaborating with Korinek, she and her team at the Partnership on AI are also writing a user guide for AI developers who have no background in economics to help them understand how workers might be affected by the research they are doing.

“It’s about changing the narrative away from one where AI innovators are given a blank ticket to disrupt and then it’s up to the society and government to deal with it,” says Klinova. Every AI firm has some kind of answer about AI bias and ethics, she says, “but they’re still not there for labor impacts.”

The pandemic has accelerated the digital transition. Businesses have understandably turned to automation to replace workers. But the pandemic has also pointed to the potential of digital technologies to expand our abilities. They’ve given us research tools to help create new vaccines and provided a viable way for many to work from home.

As AI inevitably expands its impact, it will be worth watching whether this leads to even greater damage to good jobs—and more inequality. “I’m optimistic we can steer the technology in the right way,” says Brynjolfsson. But, he adds, that will mean making deliberate choices about the technologies we create and invest in.

Reviewed

“The Turing Trap: The Promise & Peril of Human-Like Artificial Intelligence”

Erik Brynjolfsson

Daedalus, Spring 2022

“The wrong kind of AI? Artificial intelligence and the future of labour demand”

Daron Acemoglu and Pascual Restrepo

Cambridge Journal of Regions, Economy and Society, March 2020

Cogs and Monsters: What Economics Is, and What It Should Be

Diane Coyle

Princeton University Press
