Don’t underestimate the cheapfake

On November 30, Chinese foreign ministry spokesman Lijian Zhao pinned an image to his Twitter profile. In it, a soldier stands on an Australian flag and grins maniacally as he holds a bloodied knife to a boy’s throat. The boy, whose face is covered by a semi-transparent veil, carries a lamb. Alongside the image, Zhao tweeted, “Shocked by murder of Afghan civilians & prisoners by Australian soldiers. We strongly condemn such acts, &call [sic] for holding them accountable.”

The tweet is referencing a recent announcement by the Australian Defence Force, which found “credible information” that 25 Australian soldiers were involved in the murders of 39 Afghan civilians and prisoners between 2009 and 2013. The image purports to show an Australian soldier about to slit the throat of an innocent Afghan child. Explosive stuff.

Except the image is fake. Upon closer examination, it’s not even very convincing. It could have been put together by a Photoshop novice. This image is a so-called cheapfake, a piece of media that has been crudely manipulated, edited, mislabeled, or improperly contextualized in order to spread disinformation.

The cheapfake is now at the heart of a major international incident. Australia’s prime minister, Scott Morrison, said China should be “utterly ashamed” and demanded an apology for the “repugnant” image. Beijing has refused, instead accusing Australia of “barbarism” and of trying to “deflect public attention” from alleged war crimes by its armed forces in Afghanistan.

There are two important political lessons to draw from this incident. The first is that Beijing sanctioned the use of a cheapfake by one of its top diplomats to actively spread disinformation on Western online platforms. China has traditionally exercised caution in such matters, aiming to present itself as a benign and responsible superpower. This new approach is a significant departure.

More broadly, however, this skirmish also shows the growing importance of visual disinformation as a political tool. Over the last decade, the proliferation of manipulated media has reshaped political realities. (Consider, for instance, the cheapfakes that catalyzed a genocide against the Rohingya Muslims in Burma, or helped spread covid disinformation.) Now that global superpowers are openly sharing cheapfakes on social media, what’s to stop them (or any other actor) from deploying more sophisticated visual disinformation as it emerges?

For years, journalists and technologists have warned about the dangers of “deepfakes.” Broadly, deepfakes are a type of “synthetic media” that has been manipulated or created by artificial intelligence. They can also be understood as the “superior” successor to cheapfakes.

Technological advances are simultaneously improving the quality of visual disinformation and making it easier for anyone to generate. As it becomes possible to produce deepfakes through smartphone apps, almost anyone will be able to create sophisticated visual disinformation at next to no cost.

False alarm

Deepfake warnings reached a fever pitch ahead of the US presidential election this year. For months, politicians, journalists, and academics debated how to counter the perceived threat. In the run-up to the vote, state legislatures in Texas and California even preemptively outlawed the use of deepfakes to sway elections.

In retrospect, these fears were overstated. Apart from a few interesting developments, including a tongue-in-cheek creation by the Russian state broadcaster Russia Today (RT) in which a defeated Donald Trump admits to being Russian president Vladimir Putin’s pawn, there was little election-related deepfakery to report. Certainly nothing materialized that could be objectively said to have swayed the outcome. Rather than being used to sabotage or exploit politicians, deepfakes are still most often used to create nonconsensual porn.

Although deepfakes haven’t yet become the weapons of mass disinformation that some predicted, there’s no room for complacency. The potential risk is largely mitigated, for now, by technical limitations. As deepfake-creation technologies improve, the floodgates will open.

And even before then, the mere awareness of deepfakes is already having a harmful effect. In the near future, bad actors will be able to produce deepfakes about everything, and simply dismiss any authentic media as fake. This “double bonus” for bad actors is known as the “liar’s dividend.” Although the term was coined in a seminal 2018 paper on deepfakes, it doesn’t pertain to deepfakes alone. The concept extends to all disinformation, including cheapfakes.

Cheapfakes everywhere

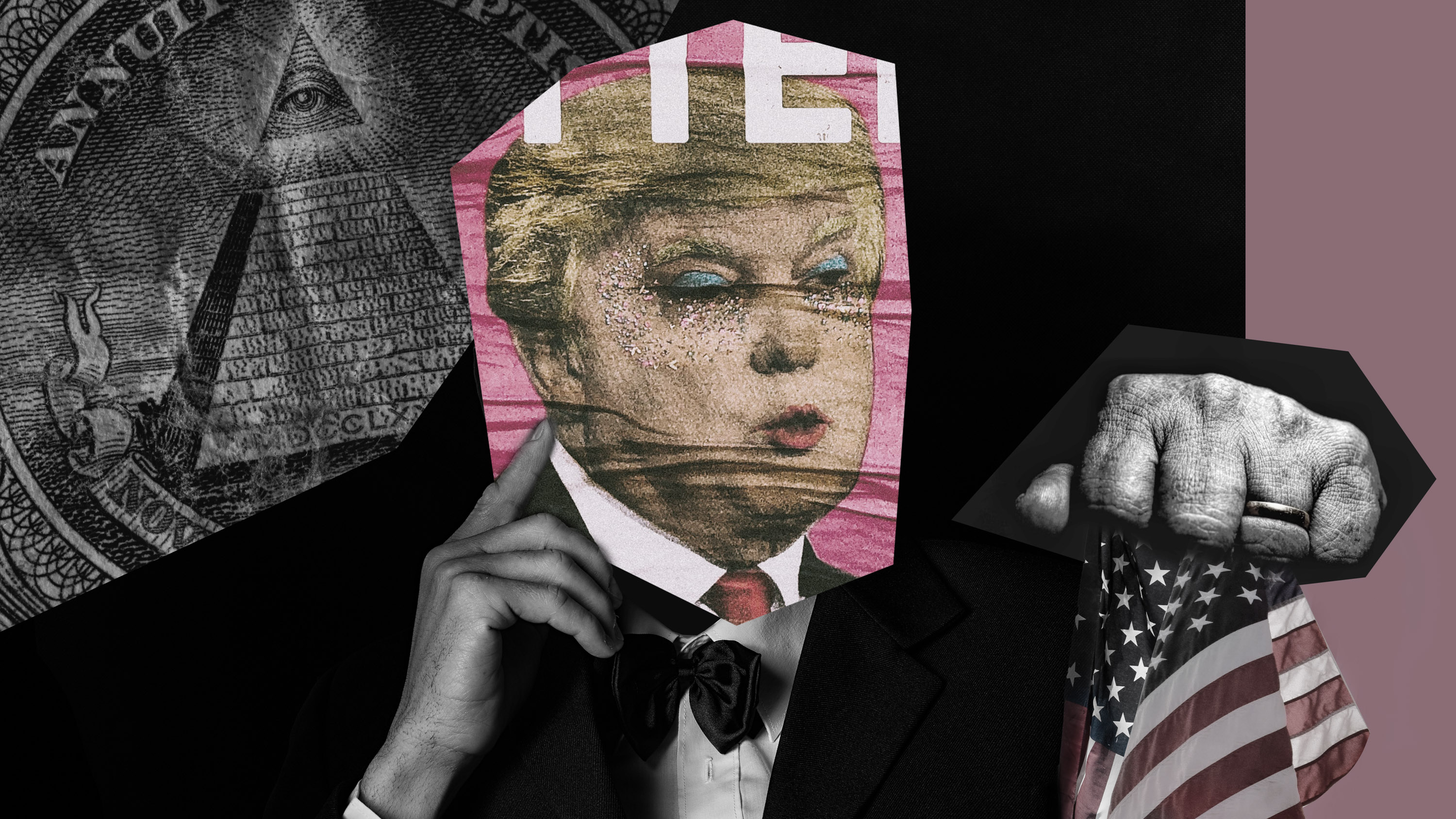

Since the infamous doctored video of House Speaker Nancy Pelosi appeared in 2019, cheapfakes have become a regular feature of US political life. This year, they helped sustain a high-profile disinformation operation advanced by the president and his closest associates. The false assertion that the election was marred by widespread voter fraud was consistently reinforced by cheapfakes.

One example is a viral video of President-elect Joe Biden saying, “We have put together, I think, the most extensive and inclusive voter fraud organization in the history of American politics.” When properly contextualized, Biden’s comments can be understood as describing a program to protect voters in the event of baseless litigation regarding the election result. Nonetheless, the clip was shared by both Trump and press secretary Kayleigh McEnany and portrayed as an admission of fraud.

Even as votes were still being counted, authentic videos of election workers transcribing votes and collecting ballots in a routine manner were shared, by people including Trump himself, as “evidence” of vote dumping and tampering. Meanwhile, a viral cheapfake showing a man “tearing up” ballots turned out to be the work of a TikTok prankster.

The allegations of widespread voter fraud are baseless, and courts across America are dismissing attempts by Trump’s legal team to dispute the election result. Earlier this month, Attorney General Bill Barr (who steps down on December 23) finally admitted that the US Justice Department has uncovered no evidence of fraud.

But cheapfakes appear to have had real-world consequences: in early December, Gabriel Sterling, Georgia’s voting-system implementation manager, cited instances of intimidation and death threats against election workers, pleading, “It’s all gone too far! It has to stop!” A Georgia election worker had to go into hiding after a 34-second cheapfake that falsely accused him of throwing away an absentee ballot went viral.

Belief in the narrative of the “rigged election” falls starkly along partisan lines. A Politico/Morning Consult poll conducted after the election found that 70% of Republican voters said they did not believe it had been “free and fair.” The number of GOP voters expressing similar distrust of the process before the election was 35%. Conversely, only half of Democratic voters (52%) said they believed the election would be “free and fair” before November 3. In polls conducted after Biden’s victory, the figure skyrocketed to 90%.

What to believe?

The growing prevalence of visual disinformation appears to be affecting politics in two distinct ways. First, it’s feeding the proliferation of all kinds of disinformation. Bad actors are acting with more impunity, confident that they can avoid scrutiny and accountability. At the time of writing, the cheapfake shared by Lijian Zhao was still pinned to his Twitter profile.

Second, the growing prevalence of visual disinformation makes us more susceptible to all disinformation. As the public becomes more aware of the many ways in which media can be manipulated, it will become more skeptical of all media, including authentic media.

This skepticism makes it easier for bad actors to dismiss real events as fake. It may also lead to increasingly subjective and partisan interpretations of events by the public itself. Consider, for example, the widely held belief among Republican voters that the 2020 US election was not free and fair. This is demonstrably false, but as public opinion data suggests, it’s not only Republican voters who distrust the electoral process. Until they won, Democratic voters were also skeptical. If a Republican candidate wins in 2024, will public opinion flip again along partisan lines?

While the most dire predictions about politically motivated deepfakes were not realized in 2020, we must analyze their evolution in the context of cheapfakes and other forms of political disinformation. The cheapfakes of today offer valuable lessons about the deepfakes of the future. The question, then, should not be “When will political deepfakes emerge?” but “How can we mitigate the many ways in which visual disinformation is already reshaping our political reality?”

Nina Schick is the author of Deepfakes: The Coming Infocalypse.
