IBM has built a new drug-making lab entirely in the cloud

The news: IBM has built a new chemistry lab called RoboRXN in the cloud. It combines AI models, a cloud computing platform, and robots to help scientists design and synthesize new molecules while working from home.

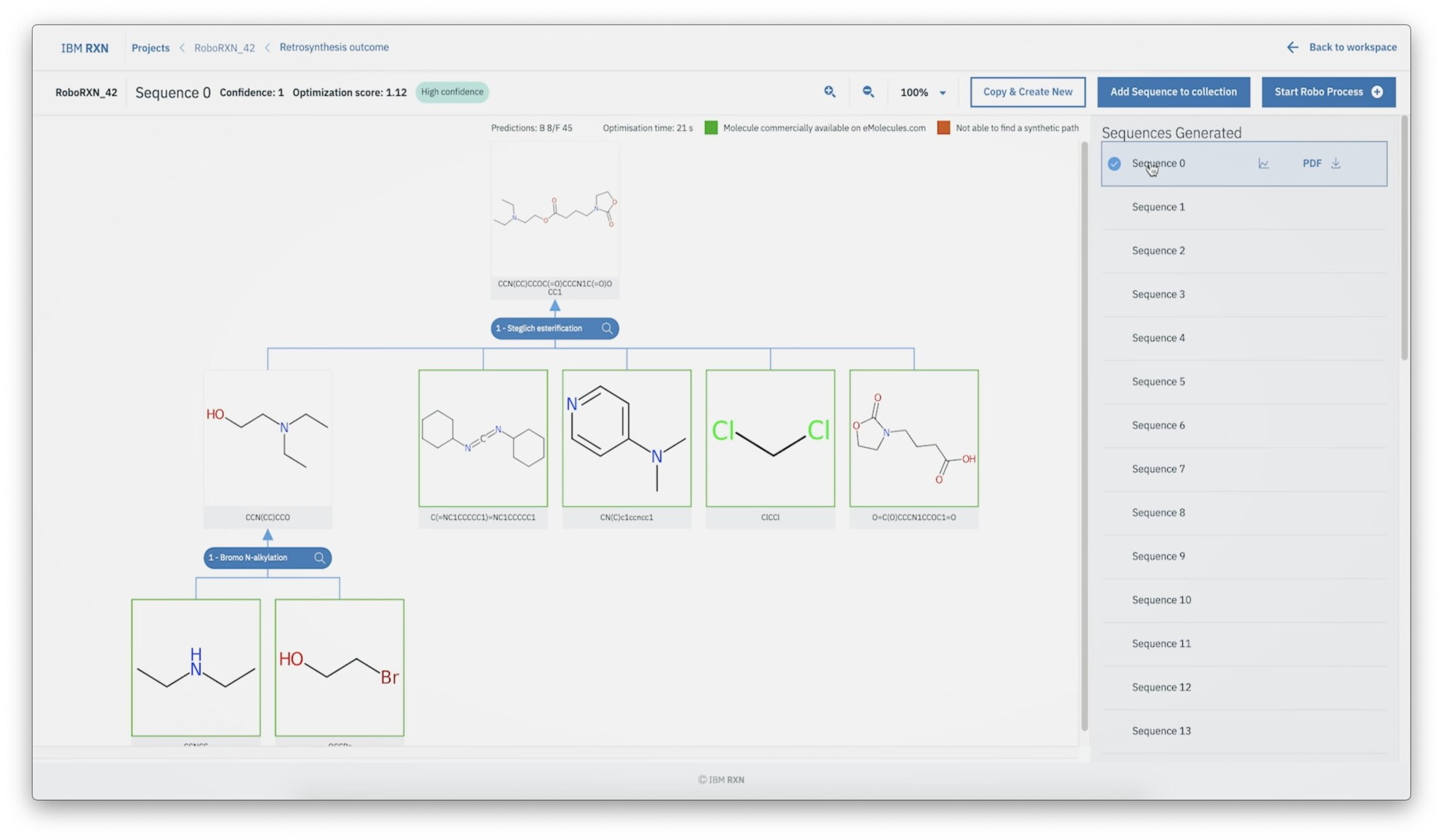

How it works: The online lab platform allows scientists to log on through a web browser. On a blank canvas, they draw the skeletal structure of the molecular compounds they want to make, and the platform uses machine learning to predict the ingredients required and the order in which they should be mixed. It then sends the instructions to a robot in a remote lab to execute. Once the experiment is done, the platform sends a report to the scientists with the results.

Why it matters: New drugs and materials traditionally require an average of 10 years and $10 million to discover and bring to market. Much of that time goes to laboriously repeating experiments to synthesize new compounds and learning by trial and error. IBM hopes that a platform like RoboRXN could dramatically speed up that process by predicting the recipes for compounds and automating the experiments. In theory, this could lower the cost of drug development and let scientists respond faster to health crises like the current pandemic, during which social distancing requirements have slowed lab work.

Not alone: IBM is not the only one hoping to use AI and robotics to accelerate chemical synthesis. A number of academic labs and startups are working toward the same goal. But letting users submit molecules remotely and receive an analysis of the synthesized product is a valuable feature of IBM's platform, says Jill Becker, CEO of the startup Kebotix: "With RoboRXN, IBM takes an important step to speed up discovery."
