An Indian politician is using deepfake technology to win new voters

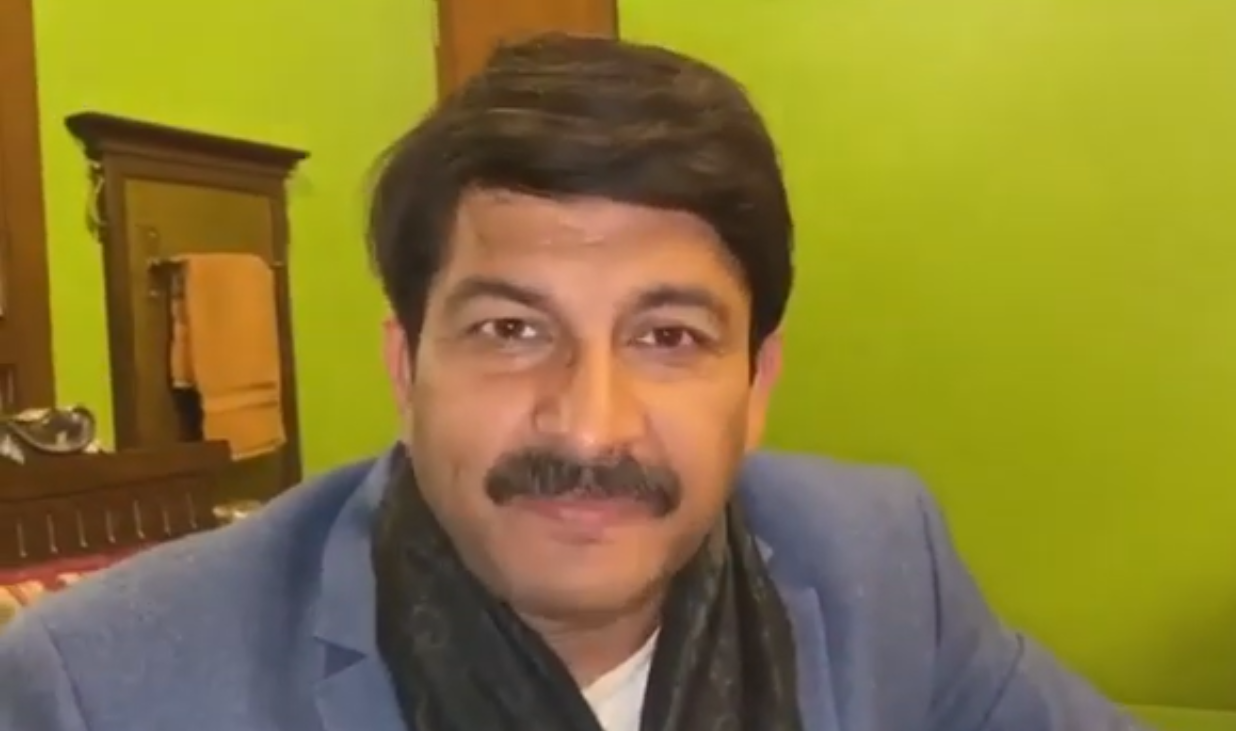

The news: A deepfake of the president of India’s ruling Bharatiya Janata Party (BJP), Manoj Tiwari, went viral on WhatsApp in the country earlier this month, ahead of legislative assembly elections in Delhi, according to Vice. It appears to be the first time a political party anywhere has used a deepfake for campaigning purposes. In the original video, Tiwari speaks in English, criticizing his political opponent Arvind Kejriwal and encouraging voters to vote for the BJP. The second video has been manipulated using deepfake technology so that his mouth moves convincingly as he speaks in Haryanvi, the Hindi dialect spoken by the voters the BJP is targeting.

The purpose: The BJP has partnered with political communications firm The Ideaz Factory to create deepfakes that let it target voters across the more than 20 languages used in India. The party told Vice that the Tiwari deepfake reached approximately 15 million people in 5,800 WhatsApp groups.

Causing alarm: This isn’t the first time deepfakes have popped up during a political campaign. For example, last December, researchers made a fake video of the two candidates in the UK’s general election endorsing each other. It wasn’t supposed to sway the vote, however; it was meant only to raise awareness about deepfake technology. The case in India seems to be the first time a political party has used deepfakes as part of its own campaign. The big risk is that we reach a point where people can no longer trust what they see or hear. In that scenario, a video wouldn’t even need to be digitally altered for people to denounce it as fake. It’s not hard to imagine the corrosive impact that would have on an already fragile political landscape.

