A plan to advance AI by exploring the minds of children

The next big breakthroughs in artificial intelligence may depend on exploring our own minds.

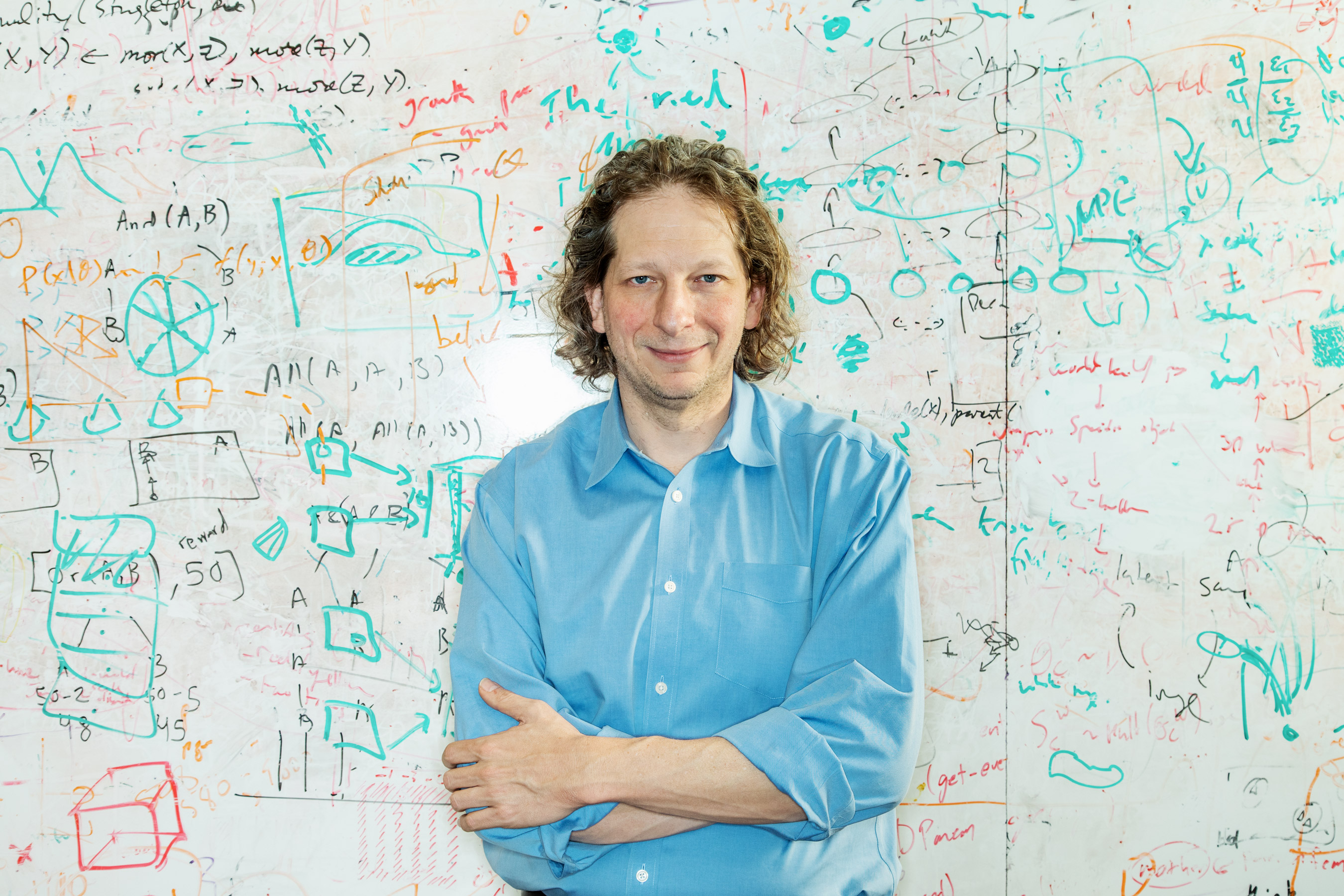

So says Josh Tenenbaum, who leads the Computational Cognitive Science lab at MIT and is the head of a major new AI project called the MIT Quest for Intelligence.

The project brings computer scientists and engineers together with neuroscientists and cognitive psychologists to explore research that might lead to fundamental progress in artificial intelligence. Tenenbaum outlined the project, and his vision for advancing AI, at EmTech, a conference held at MIT this week by MIT Technology Review.

“Imagine we could build a machine that starts off like a baby and learns like a child,” he said. “If we could do this it’d be the basis for artificial intelligence that is actually intelligent, machine learning that could actually learn.”

Some stunning advances have been made in AI in recent years, but these have largely been built upon a handful of key breakthroughs in machine learning, especially large, or deep, neural networks. Deep learning has, for instance, given computers the ability to recognize words in speech and faces in images as accurately as a person can. Deep learning also underpins spectacular progress in game-playing programs, including DeepMind’s AlphaGo, and it has contributed to improvements in self-driving vehicles and robotics. But these systems are all missing something.

“None of these systems are truly intelligent,” Tenenbaum said. “None of them have the flexible, common sense, general intelligence of a two-year-old, or even a one-year-old. So what’s missing? What’s the gap?”

Tenenbaum’s research focuses on exploring cognitive science in order to understand human intelligence. His work has, for example, explored how even small children are able to visualize aspects of the world using a kind of innate 3-D model. This gives humans a greater instinctive understanding of the physical world than a computer or robot has. “Children’s play is really serious business,” he said. “They’re experiments. And that’s what makes humans the smartest learners in the known universe.”

Tenenbaum has also done groundbreaking work developing computer programs capable of mimicking some of the more elusive aspects of the human mind, often using probabilistic techniques. For instance, in 2015 he and two other researchers created computer programs capable of learning to recognize new handwritten characters, as well as certain objects in images, after seeing just a few examples. This is important because the best machine-learning programs typically require huge quantities of training data. iSee, a self-driving-car company that draws inspiration from this research, was spun out of Tenenbaum’s lab last year.

The Quest for Intelligence, announced in February, also seeks to explore the societal impact of artificial intelligence. This means accounting for the technology’s fundamental limitations or shortcomings, as well as issues such as algorithmic bias and explainability.

Tenenbaum noted that the original vision for artificial intelligence, a vision that is now more than 50 years old, sought to draw inspiration from human intelligence, but without much scientific grounding. “The fields of cognitive science and neuroscience are now more mature,” he said. “This should make this project special.”
