How a gaming chip could someday save your life

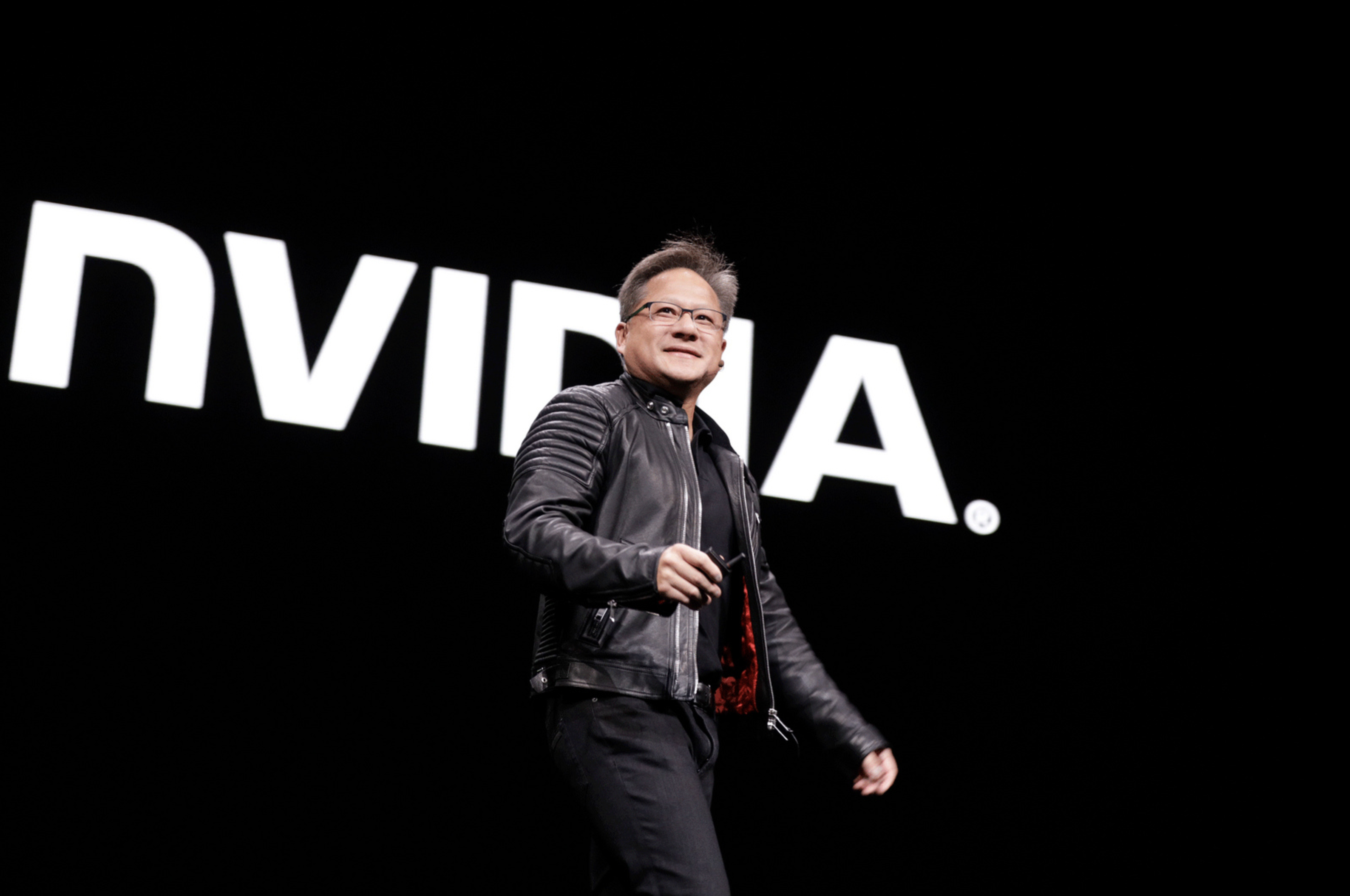

Jensen Huang, the billionaire CEO of Nvidia, has made a fortune by supplying the hardware used for artificial-intelligence algorithms. He’s now betting that AI is about to become an indispensable part of medicine.

In the early 1990s, Huang recognized that the limitations of general-purpose computer chips and the rise of computer gaming would likely increase demand for specialized graphics processors. During the late ’90s and 2000s, Nvidia, the company he cofounded, found huge success making high-end graphics chips for gamers.

More recently Huang and Nvidia have ridden a different technology wave, supplying the hardware used to train and run the deep-learning algorithms that have been key to a recent renaissance in artificial intelligence. Deep learning requires huge amounts of training data and powerful computer hardware, and Nvidia’s graphics processors offer just the right kind of parallel processing to make these algorithms fly.

Huang now reckons that AI algorithms are going to revolutionize medicine and health care, and he’s betting that hospitals, doctors, and medical researchers will be Nvidia’s next big customer base. “The amount of data that health care has is enormous, and it’s the perfect example of unstructured data. And yet the computational use of that data is fairly limited,” Huang told MIT Technology Review. “One area that is a perfect first entry is medical imaging.”

A growing number of research papers show that deep learning can be used—in principle—to automate the identification of disease in medical images. Researchers at Stanford have shown that the technique can detect skin cancer in photos. A team at Google found that it can be used to identify anomalies in chest x-rays. Nvidia says that more than half the papers presented at the International Conference on Medical Image Computing and Computer Assisted Intervention, the most important event for the medical imaging field, involved some form of deep learning.

Nvidia already works with a number of companies that make medical imaging instruments, and these companies are all looking to add more computational analysis to their systems, Huang says. He believes it should also be possible to connect existing equipment to such a system, so that images are analyzed and the results are presented to technicians and doctors.

“In the future,” Huang says, “these machines will be augmented by supercomputers, turning them into modern, amazing medical instruments, just as cloud computing has done for cell phones.”

Last month, Nvidia announced a product that aims to do something like this. It consists of racks of powerful computer chips, and it comes loaded with software for tasks like sharpening MRI images and creating visualizations of ultrasound data. The system could also support machine-learning techniques for identifying signs of disease in images.

John Guttag, a professor of computer science at MIT, says medical imaging is going to be transformed by the use of machine learning, and deep learning in particular. However, he says the most immediate impact of AI on medicine will be in medical research. “There’s going to be a dramatic change in how we do research, and that will have an indirect effect on care,” he says. “We can look at 20,000 scans of Alzheimer’s patients and learn things we couldn’t learn with the naked eye.”

Guttag says the technology will eventually end up in hospitals and clinics, too, but things are moving more slowly. It may prove difficult to get doctors and patients to accept AI diagnosis, he says, if the system doesn’t also offer recommendations or a good explanation for its conclusion. Many machine-learning models, especially in deep learning, are notoriously difficult to interrogate (see “The dark secret at the heart of AI”).

The challenges haven’t daunted a growing number of companies now looking to turn research advances into clinical tools. The US Food and Drug Administration has already approved some AI techniques for clinical use, including technology for identifying signs of diabetic retinopathy in retinal images, a product for recognizing signs of stroke in CT scans, and a cloud-based oncology platform.

But it simply isn’t realistic to imagine that a lot of what doctors do could be automated. Algorithms might help them analyze more data than would otherwise be possible, and they could get better at simple forms of diagnosis. But a patient’s prognosis and treatment options may depend on a wide range of factors, including that person’s unique medical history. Making judgments under these circumstances is far more challenging for a machine.

Regardless of the challenges, the opportunities for Nvidia are too good to ignore.

Atul Butte, a professor at the UCSF School of Medicine and an expert on the use of technology in health care, says hospitals will inevitably invest more in the hardware needed to run deep-learning algorithms. He says, “There are faculty at UCSF and elsewhere that are already using Nvidia boards and equipment to train deep-learning models on medical images, including mammography, ultrasounds, and more.”
