Big Data Exposes Big Falsehoods

When Vladimir Putin took power “a few seconds before 2000,” the world’s attention was elsewhere. Introducing himself with a short Russian broadcast, he promised, “Freedom of speech, freedom of conscience, freedom of mass media, freedom of property rights—these basic principles of the civilized society—will be under the safe protection of the state.” Ever since, the Kremlin has steadily tightened its ligature around the Russian media. In 2000, Putin signed Russia’s Information Security Doctrine. Updated last December, it is now a third the length of its predecessor.

“Those missing two thirds are all the tasks Russia undertook to prevent outside influence,” says Keir Giles, a researcher with the Conflict Studies Research Centre in the U.K. “They’ve had a couple of decades to put this in place, and there’s been a real acceleration over the last four years.” An original analysis undertaken for MIT Technology Review by Semantic Visions, a Czech startup generating “complex open-source intelligence” risk assessments, confirms the Kremlin’s grip.

Using the “world’s largest semantic news database,” Semantic Visions explored the MH17 shoot-down in 2014 alongside other major stories in Russia’s war against Ukraine (see “Russian Disinformation Technology”). They compared 328,614,220 English-language and 58,207,194 Russian-language articles, with an average length of 3,000 characters, between January 2014 and April 2016. These are culled from over 25 million sources the analysts at Semantic Visions have discovered and classified, of which they analyze around half a million daily. The results illuminate the domestic and foreign interests of Kremlin propaganda, and they cast an unflattering light on aspects of Western media coverage.

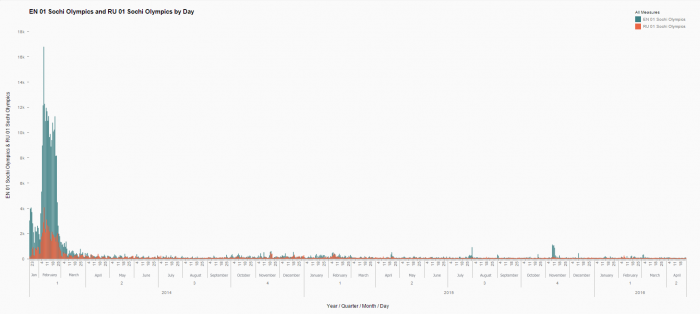

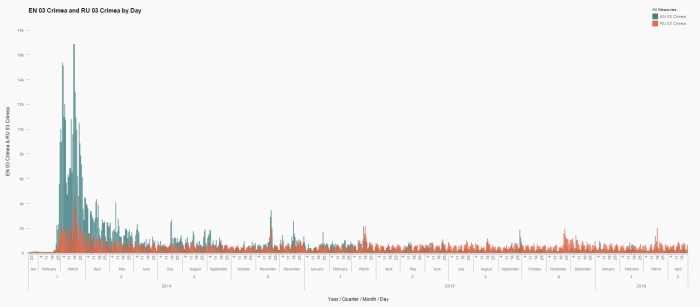

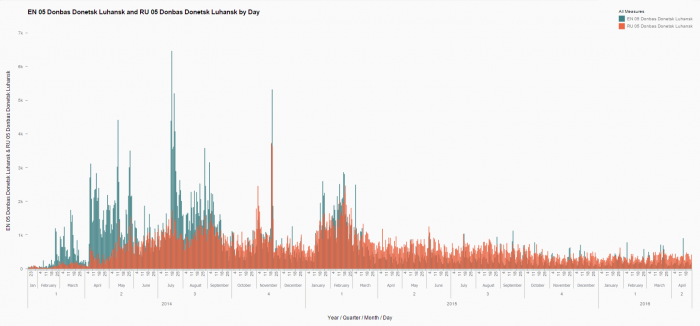

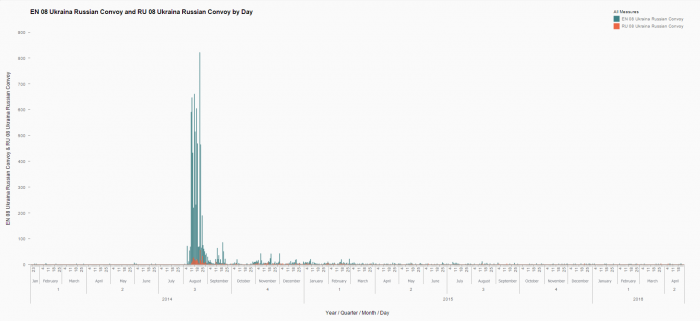

Semantic Visions CEO Frantisek Vrabel explains: “We chose the politically more neutral Sochi Olympics as a ‘control’ to show the normal proportion, around one to 5.6, of Russian (red) to English (blue) language articles for an international event.” Russian interest in Crimea is sustained, contrasting with Western media interest. The invasion in eastern Ukraine follows a similar pattern.

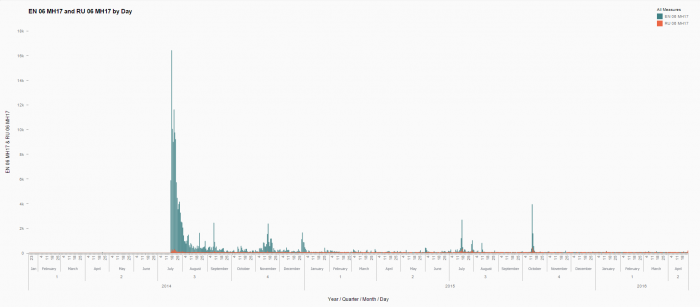

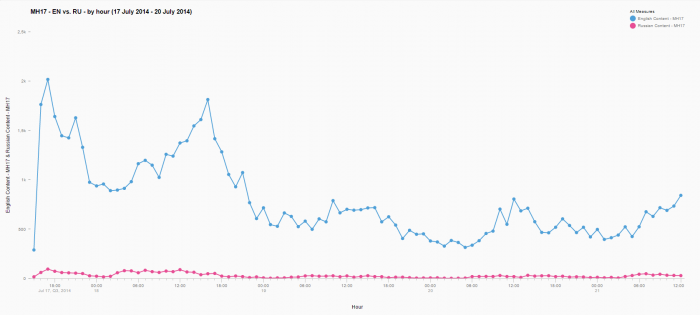

Data for the shoot-down of MH17, however, is unusual. “The initial Russian story was that MH17 was taken down by a Ukraine jet fighter—so you would think they would use it to support their version,” says Vrabel. “But they knew they had done something wrong. It’s almost as if they were trying to downplay it.”

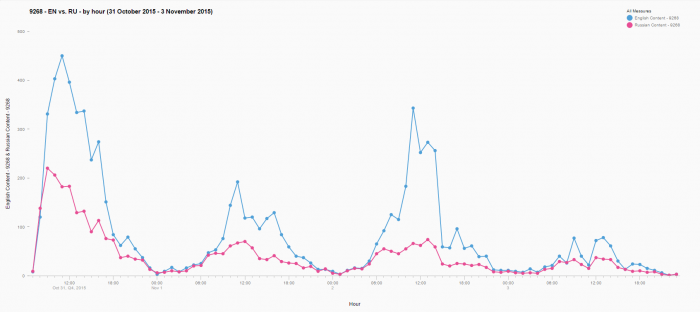

Semantic Visions then ran a more detailed hour-by-hour analysis, plotting coverage in the 90 hours following the tragedy. The difference becomes even starker in comparison with Metrojet Flight 9268, which exploded on October 31, 2015, over the Sinai Peninsula, with the loss of 219 Russians, four Ukrainians, and a Belarusian. (Although the explosion is still under investigation, both Russia and Egypt suspect a bomb, claimed by ISIS.)

Here Russian-language data over the subsequent 90 hours closely tracks global English results, even though domestic interest accounts for a greater proportion than in the Sochi “control.”
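The comparison against the Sochi "control" comes down to ratio arithmetic. The following is a minimal sketch of that arithmetic, not Semantic Visions' actual method: the two corpus totals and the 5.6 baseline are taken from the figures quoted above, while the function names and the "emphasis" measure are hypothetical and introduced only for illustration.

```python
# Corpus totals as reported in the article (January 2014 to April 2016).
ENGLISH_ARTICLES = 328_614_220
RUSSIAN_ARTICLES = 58_207_194

# Baseline quoted for the Sochi "control": roughly one Russian-language
# article for every 5.6 English-language articles.
CONTROL_EN_PER_RU = 5.6


def english_per_russian(english_count: int, russian_count: int) -> float:
    """Return how many English-language articles appear per Russian-language article."""
    if russian_count <= 0:
        raise ValueError("russian_count must be positive")
    return english_count / russian_count


def russian_emphasis(english_count: int, russian_count: int,
                     baseline: float = CONTROL_EN_PER_RU) -> float:
    """Hypothetical measure: values above 1 mean more Russian-language coverage
    than the baseline predicts, values below 1 mean less."""
    return baseline / english_per_russian(english_count, russian_count)


corpus_ratio = english_per_russian(ENGLISH_ARTICLES, RUSSIAN_ARTICLES)
print(f"Whole corpus: {corpus_ratio:.2f} English articles per Russian article")
```

On the corpus totals, the ratio works out to about 5.65, close to the Sochi baseline. Per-event article counts are not published in the article, so any event-level input to `russian_emphasis` would have to come from the underlying data.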

A month after the MH17 shoot-down, Russia ran a successful information operation aimed at the Western media. Claiming to be running a humanitarian convoy, they garnered global attention with hundreds of trucks purporting to offer “humanitarian assistance.” (The Institute for Propaganda Analysis called such “feel good” language “glittering generalities.”) That the Russians wouldn’t allow reporters to see inside most of the trucks, and that they were accompanied by attack helicopters, was less important than the proximity of the words “Russian,” “aid,” and “humanitarian” in the headlines. This charade “was not intended for the Russian public, but for a global audience in their informational war,” says Vrabel.

Big data has other uses, too. In January 2016, a British inquiry named several perpetrators in the polonium-210 poisoning of Alexander Litvinenko. The investigators said Putin had probably approved this act of what the Russian security services call “wet business” (mokroye delo). Semantic Visions tracked this story, too, analyzing who covered or shared it. Vrabel says the resultant database of sources lets them identify “who is infected with Russian propaganda.”
