Facebook may stop the data leaks, but it’s too late: Cambridge Analytica’s models live on

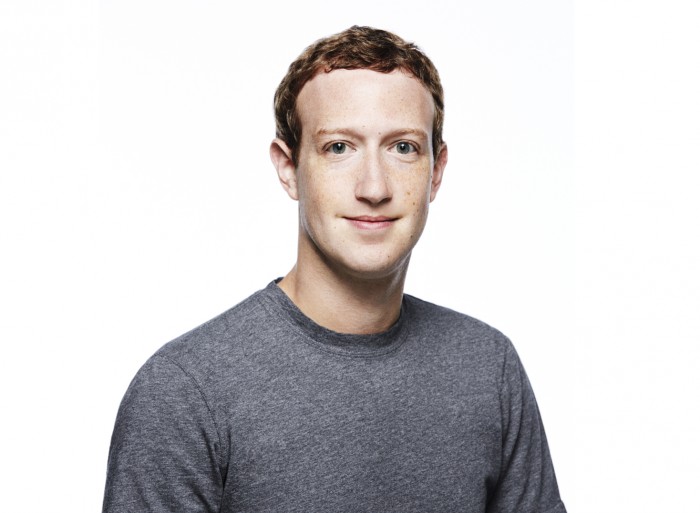

Today Facebook is releasing a tool that will allow its users to see if their Facebook profile was harvested by Cambridge Analytica. Facebook says 87 million people were affected, 71 million of them in the US. It makes for a compelling story about egregious personal privacy harms committed in pursuit of electoral victories. In response, Facebook has clamped down on access to its users’ data, and in his testimony to Congress this week, CEO Mark Zuckerberg promised to make political advertising on the platform more transparent.

But focusing solely on the purloined data is a mistake. Much more important are the behavioral models Cambridge Analytica built from the data. Even though the company claims to have deleted the data sets in 2015 in response to Facebook’s demands, those models live on, and can still be used to target highly specific groups of voters with messages designed to leverage their psychological traits. Although the stolen data sets represent a massive collection of individual privacy harms, the models are a collective harm, and far more pernicious.

In what follows, I argue that Cambridge Analytica and its parent and sister companies were among the first to figure out how to turn behavioral algorithms into a portable worldview—a financially valuable, politically potent model of how humans behave and how society should be structured. To understand Cambridge Analytica, the anti-democratic vision it represents, and the potentially illegal behavior that its techniques may make possible, follow the models.

(In the interest of full disclosure, I have worked as a consultant for Facebook on topics not directly related to this story.)

The data

First, let’s discuss that data. As has been widely reported, the original data set was collected by Global Science Research (GSR), a small firm belonging to University of Cambridge quantitative psychologist Aleksandr Kogan, for SCL, the parent company of Cambridge Analytica. Kogan used a personality quiz named “thisismydigitallife,” hosted on Facebook, to obtain access to the Facebook profiles of the 270,000 people who took the quiz. That allowed him to use Facebook’s API—which was then much more permissive than it is today—to scrape the data of their Facebook friends, allegedly a total of 87 million. (That’s much more than the 30 million profiles that SCL says it contracted for.) Cambridge Analytica then combined the Facebook data with other data sets to build robust, integrated profiles of 30 million US voters.

In what remains a murky and ethically dubious exchange, it appears that Kogan claimed to Facebook that he was collecting this data only for academic purposes, despite the clear commercial intent of his contract with SCL (page 67) and discussion of commercial purposes with his home university. Reselling this data in the fashion GSR was contracted for was clearly a violation of Facebook’s terms of service. Facebook overlooked red flags about the amount of data being collected because of Kogan’s academic credentials, and now claims that he deceived people at Facebook about his intent.

However, though Cambridge Analytica built profiles of 30 million US voters, its central goal wasn’t to craft ads targeted at those people. Rather, the models it built from that much smaller cohort of 270,000 quiz-takers would allow an advertiser to create proxy profiles of much larger collections of similar people on Facebook. This capability is what enabled the company to craft ads precisely targeted at small groups of voters based on personality traits. And it continues to exist even after the original data is deleted.

The method

Kogan’s technique—emulating an innovation by psychology researchers at the Cambridge Psychometrics Centre, particularly Michal Kosinski and David Stillwell—was to use a scientifically validated psychology quiz inside Facebook’s API system. By taking the quiz, users also granted him access to a gold mine of behavioral data—essentially the record of their “likes” on Facebook.

This allowed Kogan to correlate that relatively inexpensive behavioral record with an otherwise expensive psychological measurement, all of it conveniently formatted by Facebook. Although this method was fairly new when Cambridge Analytica seized upon it in 2014, recent research has shown commercial advertising built with the Cambridge researchers’ psychological techniques to be significantly more effective than non-targeted ads.

The value of this data is predicated on the emerging field of digital psychometrics, the science of quantitative measurement of psychological and personality characteristics. All people are presumed to fit somewhere on a matrix of “high” and “low” rankings of certain traits—most commonly the “OCEAN” or “Big 5” traits: openness to experience, conscientiousness, extraversion, agreeableness, and neuroticism.

Cambridge Analytica used machine-learning algorithms to discover correlations between these traits and people’s behaviors on Facebook. Often the results of such analysis can appear odd or counterintuitive. For example, Kosinski and Stillwell’s research had found that people who liked singer Tom Waits rank among the most “open” personalities, and those who like the band Placebo are among the most “neurotic.”
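To make the idea of "discovering correlations" concrete, here is a minimal sketch of how a quiz score can be correlated with page likes. It is not Cambridge Analytica's or the Cambridge researchers' actual method; the records, the page names (`page_a`, `page_b`, `page_c`), and the helper functions are all invented for illustration, and a plain Pearson correlation stands in for whatever machine-learning technique was really used.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # no variation, so no measurable correlation
    return cov / sqrt(var_x * var_y)

# Invented toy records: (openness score from a quiz, set of liked pages).
quiz_takers = [
    (0.9, {"page_a", "page_c"}),
    (0.8, {"page_a"}),
    (0.7, {"page_a", "page_b"}),
    (0.3, {"page_b"}),
    (0.2, {"page_b", "page_c"}),
    (0.1, {"page_b"}),
]

def like_trait_correlations(records):
    """Correlate each page's like/no-like indicator with the trait score."""
    pages = sorted(set().union(*(likes for _, likes in records)))
    scores = [score for score, _ in records]
    return {
        page: pearson(
            [1.0 if page in likes else 0.0 for _, likes in records], scores
        )
        for page in pages
    }

correlations = like_trait_correlations(quiz_takers)
for page, r in correlations.items():
    print(f"{page}: r = {r:+.2f}")
```

In this toy data, liking `page_a` tracks high openness, liking `page_b` tracks low openness, and `page_c` carries almost no signal. The point is only that the expensive measurement (the quiz) is needed once, to attach meaning to the cheap one (the likes).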

When combined with traditional campaign data sets, however, such counterintuitive profiles become much more useful. For example, the Trump digital campaign, using Cambridge Analytica’s model, could identify which specific micro-targeted ads were trending in small geographic regions among specific personalities of Facebook users, and then direct the on-the-ground campaign to reinforce those messages in the form of a Trump stump speech. That style of campaign analytics would be particularly useful for a candidate like Trump, who tended to avoid specific policy discussions and would instead improvise with keywords and emotional themes very effectively.

The central node for all this work was Facebook’s Custom Audience and Lookalike targeting tools. These tools allow an advertiser to target Facebook users based on narrow parameters, including finding users that “look like” a proxy profile. Using these native advertising tools (often under the guidance of embedded Facebook staff), the behavioral models built from Kogan’s purloined data enabled Cambridge Analytica and the Trump digital campaign to identify parameters that would find users with desirable psychometric traits.

Those odd correlations between personality scores and, say, which band a user “likes,” are therefore more useful than they look at first. According to SCL’s CEO, Alexander Nix (an account disputed by former Trump campaign staff), on a single day the Trump data operation tested 175,000 unique ads on Facebook. Their goal was not to find the single best ad, but to fine-tune the micro-targeting model through feedback.

Once a model is produced and verified, the data set it was trained on is largely irrelevant. New data about voters are needed to run the models on, but any large national campaign will have such data. So it was likely inconsequential, from Cambridge Analytica’s perspective, that Facebook demanded that it delete Kogan’s data in 2015. The individual privacy violations were real, and needed to be addressed, but the models lived on.
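A short sketch shows why deleting the training data does not delete the model. The model below is deliberately naive (a baseline plus one weight per liked page) and every name and number in it is hypothetical; it is not a reconstruction of SCL's models. What it illustrates is that once the weights are computed, the original records can be discarded and the model still scores people it has never seen.

```python
def fit_like_model(records):
    """Learn a baseline trait score plus one offset per liked page."""
    baseline = sum(score for score, _ in records) / len(records)
    totals, counts = {}, {}
    for score, likes in records:
        for page in likes:
            totals[page] = totals.get(page, 0.0) + score
            counts[page] = counts.get(page, 0) + 1
    # Each weight is how far that page's fans sit from the baseline.
    weights = {page: totals[page] / counts[page] - baseline for page in totals}
    return {"baseline": baseline, "weights": weights}

def predict_trait(model, likes):
    """Estimate a trait score for someone from their likes alone."""
    known = [model["weights"][p] for p in likes if p in model["weights"]]
    if not known:
        return model["baseline"]  # nothing recognizable: fall back to average
    return model["baseline"] + sum(known) / len(known)

# Invented training records: (quiz score, liked pages).
training_data = [
    (0.9, {"page_a"}),
    (0.7, {"page_a", "page_b"}),
    (0.3, {"page_b"}),
    (0.1, {"page_b"}),
]

model = fit_like_model(training_data)
del training_data  # the raw records are gone; only the weights remain

# A person who never took the quiz and was not in the original data set.
estimate = predict_trait(model, {"page_a"})
print(round(estimate, 2))
```

The `model` dictionary contains no individual's record, which is why a demand to delete "the data" can be satisfied while leaving the valuable artifact untouched.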

The people

To understand how these models were put together and why they are potentially so influential, it’s necessary to understand the political actors behind them.

Before Cambridge Analytica was founded, its parent company SCL had largely been focused on tracking, analyzing, and manipulating popular opinion abroad for US and UK military and diplomatic services. But in 2013, in the aftermath of the Mitt Romney campaign’s digital collapse, SCL’s Nix was introduced to Republican billionaire donors Robert Mercer and his daughter Rebekah Mercer. Also present was Steve Bannon, the head of Breitbart News and a regular Mercer business partner, who would later become chief executive of Donald Trump’s campaign.

Robert Mercer had made his fortune by innovating high-speed algorithmic trading for his hedge fund, computationally finding tiny marginal advantages in the market billions of times. In other words, he was an early true believer in the power of algorithms to drive large-scale change one small event at a time. Bannon, already running a small media empire, had long been interested in advancing a right-wing populist agenda. Together, they faced a problem SCL could address technologically: how to make psychologically tailored advertising and cultural content that would appear to be organically popular, giving them significantly more influence over public discourse than they would otherwise have been able to achieve.

Mercer reportedly committed $15 million to a joint venture with SCL, forming a shell company in December 2013—Cambridge Analytica—with Bannon as vice president. A similar shell arrangement established AggregateIQ (AIQ), a Canadian company that provided similar services with the same or similar behavioral models, in the US and the UK, including for the 2016 pro-Brexit campaign.

The role of Cambridge Analytica was relatively simple: to serve as a place to park the intellectual property that would be licensed from SCL for use in US elections. What was that intellectual property? Behavioral models, which would very soon include the purloined Facebook data. Kogan’s contract with SCL would be signed in early June 2014 (page 67, also page 42), and leaked correspondence from the University of Cambridge indicates that Kogan was first in conversation with Cambridge Analytica in January 2014, one month after the company was formed, discussing the delivery of data sets and models.

The models were bulked up by integrating data from existing consumer and voter data sets, adding new survey data, and then testing the results. A firm owned by Mark Block, the Republican political consultant who introduced Nix to the Mercers, was contracted to perform polling; it was aggressive enough to warrant consumer complaints (see 5/29/14 complaint). One of Cambridge Analytica’s earliest customers (page 44) was a political action committee (PAC) named ForAmerica, whose primary financier was the Mercers. By late 2014, ForAmerica was recognized for innovating a method for using social media to persuade its millions of followers to engage in real-world actions.

Cambridge Analytica was then contracted by a series of smaller-scale GOP campaigns, including races for governorships, state legislatures, and the Senate, to do marketing based on “personality clusters.” Another early client was the John Bolton SuperPAC, run by the man who is now Trump’s national security advisor. It spent $1.2 million on polling services from AIQ while receiving $5 million from the Mercers. Among its products were ads for the 2014 campaign of North Carolina senator Thom Tillis, tailored to psychographic profiles.

Why do these relationships matter? Because for a team building a behavioral model, this assortment of characters in the GOP orbit could be viewed not as discrete clients but as contiguous sources of more input for a model that spans multiple elections. In other words, the more campaigns there were, the better the model got.

The product

There has been plenty of skeptical analysis of just how useful SCL’s psychographic tools were. In contrast to Nix’s flamboyant salesmanship of the method, critics have routinely responded by calling it snake oil. Where Cambridge Analytica was hired to run digital campaigns, it bungled some basic operations (especially for Ted Cruz, whose website it failed to launch on time). And SCL staff often rubbed others working on Trump’s digital campaign the wrong way.

However, none of Cambridge Analytica’s many Republican critics has yet said its models were not useful. Moreover, some reporting indicates that the models were used primarily to target voters in swing states and to hone Trump’s stump speeches in those states. That shows that the campaign understood that these models are most useful when applied in a focused manner. They may have helped in constructing Trump’s lose-the-electorate, win-the-electoral-college strategy.

And while they have their limitations, behavioral profiles are very good at estimating demographics, including political leanings, gender, location, and ethnicity. A behavioral profile of seemingly innocuous “likes” paired with other data sets is both a good-enough map to far more information about a potential voter, and a way to predict what types of content they might find engaging.

Ultimately, then, if we strip out the context of the 2016 election and the odd correlations that these algorithms find in Facebook behavioral data, the role that psychometrics plays is actually fairly straightforward: it is another criterion among many by which to create tranches of voters and learn from iterative feedback about how those tranches respond to ads.
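The feedback loop described here, in which voters are grouped into tranches and the campaign learns how each tranche responds, can be sketched as a simple tally. This is a generic illustration of segment-level ad testing, not the Trump campaign's or Cambridge Analytica's system; the class name, segment labels, ad names, and log entries are all invented.

```python
from collections import defaultdict

class SegmentAdTally:
    """Track how often each ad draws a response within each voter segment."""

    def __init__(self):
        self.shown = defaultdict(int)
        self.responded = defaultdict(int)

    def record(self, segment, ad, responded):
        key = (segment, ad)
        self.shown[key] += 1
        if responded:
            self.responded[key] += 1

    def response_rate(self, segment, ad):
        key = (segment, ad)
        shown = self.shown.get(key, 0)
        if shown == 0:
            return 0.0
        return self.responded.get(key, 0) / shown

    def best_ad(self, segment):
        """Return the ad with the highest observed response rate, if any."""
        ads = sorted({ad for seg, ad in self.shown if seg == segment})
        if not ads:
            return None
        return max(ads, key=lambda ad: self.response_rate(segment, ad))

# Invented feedback log: (segment, ad shown, did the viewer respond?).
log = [
    ("high_openness", "ad_1", True),
    ("high_openness", "ad_1", False),
    ("high_openness", "ad_2", False),
    ("high_openness", "ad_2", False),
    ("low_openness", "ad_1", False),
    ("low_openness", "ad_2", True),
]

tally = SegmentAdTally()
for segment, ad, responded in log:
    tally.record(segment, ad, responded)

print(tally.best_ad("high_openness"), tally.best_ad("low_openness"))
```

A psychometric score is just one more way to define the `segment` key; the learning loop itself is the same one used for segments defined by age, location, or party registration.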

Still, psychographics has more sinister consequences for democratic governance than the traditional demographic segmentation used by political campaigns. Paul-Olivier Dehaye, the Geneva-based mathematician and privacy entrepreneur who has been at the forefront of tracking SCL’s operations, told me he believes that the psychometric models allow Cambridge Analytica to turn Facebook’s custom audience tools into a “find users with similar psychology” tool. David Carroll, the US professor suing Cambridge Analytica under UK data privacy laws, said that the company was “super sampling” the electorate by “matching voter files to de-anonymize and build enriched [profiles] with commercial data” in search of population segments that could be nudged.

And in the testimony (timestamp 11:57) that he gave to the UK Parliament in March, Christopher Wylie, the former Cambridge Analytica employee who blew the whistle on its data collection practices, claimed the Trump campaign wanted psychometrics for the purpose of nudging people’s “inner demons”:

“If you can create a psychological profile of a person who is more prone to adopting certain forms of ideas—conspiracies, for example—and you can create a profile of what that person looks like in data terms, you can predict how likely someone is going to be to adopt more conspiratorial messaging. You can then target them with advertising or blogs or websites or what everyone now calls fake news so that they start seeing all of these ideas and stories around them in their digital environment.”

According to Wylie, SCL’s content was unusually effective. A core measure of success in online marketing is the “conversion rate,” the percentage of people who take an action—such as making a purchase or signing up for a list—after seeing an ad. In normal commercial online marketing, a conversion rate of 1 to 2 percent is typically considered successful. Wylie claimed (11:47 in the testimony) that SCL consistently saw conversion rates of 5 to 7 percent, and sometimes 10 percent. This resonates with studies showing that emotional arousal drives online engagement, and platforms reward such engagement with more visibility and/or income.
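The arithmetic behind those percentages is simple, and a few lines make the scale of the claimed difference easier to see. The audience size of 10,000 is an arbitrary assumption chosen for illustration; the 2 percent and 5 percent rates are the upper end of the typical range and the lower end of Wylie's claimed range, respectively.

```python
def conversion_rate(conversions, impressions):
    """Share of people who acted after seeing an ad."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    return conversions / impressions

def expected_conversions(rate, impressions):
    """Number of people expected to act at a given conversion rate."""
    return rate * impressions

audience = 10_000  # hypothetical number of people shown an ad

typical = expected_conversions(0.02, audience)  # top of the "successful" range
claimed = expected_conversions(0.05, audience)  # bottom of Wylie's claimed range
lift = claimed / typical

print(f"typical: {typical:.0f}, claimed: {claimed:.0f}, lift: {lift:.1f}x")
```

Even at the conservative end of the comparison, the claimed rate yields two and a half times as many responses from the same audience, which is why the figure, if accurate, would matter in a close race.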

These “dark ads” were likely each seen by only small numbers of people, and they were likely not the same campaign material found on television or in print. Neither US election law nor Facebook policy required their public disclosure. Facebook has released socially explosive dark ads allegedly bought by Russian disinformation campaigns, but not those bought by any US political campaign or PAC from the 2016 electoral cycle.

Altogether this means that, whatever the shortcomings of Cambridge Analytica’s methods, the Trump digital campaigns may have successfully used algorithmic models built from stolen Facebook data to target the paranoid vulnerabilities of a relatively small cohort of Facebook users in electorally important regions. That is a modest but plausible goal, potentially capable of moving the margins on an election.

Facebook recently announced voluntary changes that would largely eliminate the political dark ads ecosystem from its platform, and the Honest Ads Act proposed in the Senate would do much the same across the internet. But while these changes will make political advertising more transparent, they won't render Cambridge Analytica's models obsolete. It will still be possible to micro-target ads based on psychometric profiling.

Legal questions

Because the actual economic value resides in the models, not the data, and because models are easily portable, they raise several questions about campaign-finance law in the US.

First, Mercer-supported organizations were simultaneously both funding and earning from this behavioral modeling: for instance, the ForAmerica PAC paid Cambridge Analytica for its services. This arrangement obscures the economic value of the models, potentially hiding unrecorded in-kind donations. Did the prices charged for these services represent fair market value for the research and development behind the models?

Second, Wylie’s testimony (11:15, 13:32 and 13:41 timestamps) alleged that there was a “franchise” framework designed to get around electoral laws, including those that prevent foreign nationals from running US elections. As Gizmodo reported, publicly accessible code shows that AIQ—a firm incorporated in Canada—built the GOP’s data-management project, code-named Ripon, from the stolen Facebook data. Will Facebook demand that the RNC shut down Ripon and delete the models that foreign nationals built with data stolen from a US corporation?

Third, because the core intellectual property here is algorithmic models owned by SCL/Cambridge Analytica/AIQ, it is almost certain that the same or nearly the same model is being used in multiple campaigns, either in whole or in part. Under US law, PACs and campaigns are not allowed to coordinate on strategy, staff, or expenses. But a machine-learning algorithm is always iteratively learning from the data sets to which it is exposed, regardless of legal boundaries. If campaigns and PACs are using the same models, are they, in effect, asynchronously coordinating on core campaign decisions and data collection activities?

Both Cambridge Analytica and the Trump campaign say that RNC voter files were the database of record, but that Cambridge Analytica was “intelligence on top” of the voter file. In a BBC interview, Nix stated that although the Trump campaign “started over” with new data, “legacy behavior models” were used across campaign cycles and between campaigns, including the Cruz and Trump campaigns. As we can now see, those models were legacies of previous GOP campaigns, including PACs like John Bolton’s. And they may now be in the hands of Emerdata, a new shell company set up last fall by the Mercers and Nix. Cambridge Analytica claimed recently that it immediately destroyed all of the raw data and “any of its derivatives in our system,” but it’s not clear if that includes derivative models. Nor has Facebook yet publicly mentioned an effort to destroy those models.

The consequences for democracy

Democracy, at its least cynical, is the possibility of collective rationality about shared governance. Micro-targeted, psychologically tailored dark ads turn each viewer into an electorate of one. Disagreement and debate are rendered only as emotional states rather than being an indispensable aspect of civic life. The behavioral algorithms used by Cambridge Analytica cause collective harm because they undermine the possibility of collective decision making by rendering it impossible to peer into each other’s realities. In that way, people who can afford to spend millions of dollars on modeling an entire electorate using data stolen from one of the world’s most influential corporations can turn an algorithm into a portable worldview.

How behavioral models travel both shows us the concrete relationship between the major actors behind Cambridge Analytica and raises significant questions about whether current election law is capable of handling the age of algorithmic electioneering. Whether or not Cambridge Analytica really tipped the balance in favor of Trump, what we have seen thus far is only a dry run for these models. Every time they ingest new data sets, every time they are refined, repurposed, and passed to a new organization, is simply another experiment.

Like any experiment, it could be a failure. But defending democratic norms and institutions is going to require building resilience against such iterative tools regardless of who uses them. Simply protecting personal data is not enough. We need to protect the possibility of collective rationality. As Dehaye testified (14:14), the focus on individual persuasion through personal data misses the collective harm. Follow the models, because that is where the collective harms are found.

Jacob Metcalf is a technology ethics researcher at Data & Society and the Pervade project, and a consultant for tech companies at Ethical Resolve.
