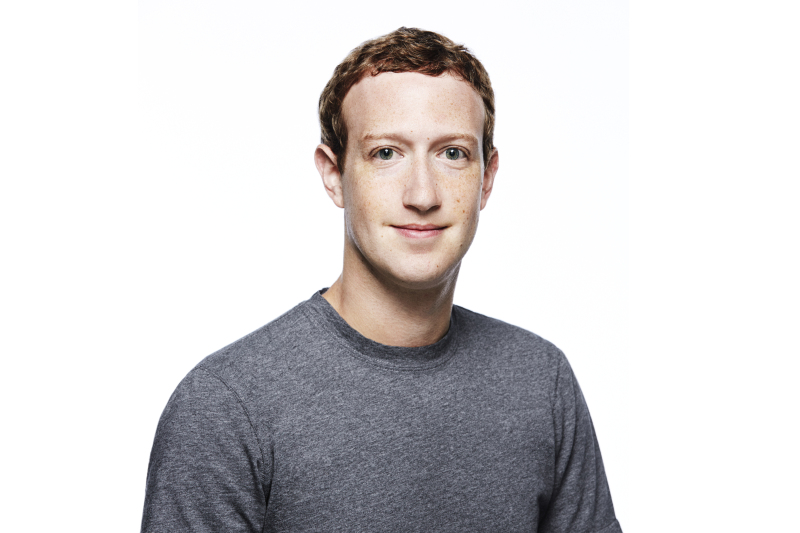

Internet “power user” Mark Zuckerberg knows Facebook has issues

In the wake of a scandal involving the improper sharing of millions of people’s data, Facebook CEO Mark Zuckerberg said Wednesday that the company realizes it has a broader responsibility for protecting its billions of users—though he also acknowledged it will take a couple more years to fix the problems.

Zuckerberg made the remarks during an hour-long question-and-answer conference call with journalists. It was held just hours after Facebook disclosed in a blog post that 87 million people’s information might have been shared with Cambridge Analytica, a data broker involved in the 2016 Trump presidential campaign. Last month Cambridge Analytica was suspended by Facebook after the Observer and the New York Times reported that the firm had access to user data it shouldn’t have had. Facebook’s post also said that starting April 9 it will let users know if their information may have been shared with Cambridge Analytica.

During the call, Zuckerberg talked about how the company is trying to improve the ways it safeguards users’ data—something he says has been going on for the past year and is likely to take another two years. He noted that while Facebook already has privacy tools available for its users, the company is trying to take a broader view of its responsibility to protect them from those who may want to abuse their personal data or manipulate the platform to get greater distribution for fake news or hate speech.

Among the other topics discussed on the call:

- Zuckerberg said the estimate released Wednesday that up to 87 million people were affected by the Cambridge Analytica data breach is a “conservative estimate.” (Earlier reports had estimated 50 million.)

- In response to a question about how Facebook is working to keep the social network from being used to meddle in political elections (something we now know has happened in the past), he said Facebook has 15,000 people working on security and content review, and this will ramp up to more than 20,000 by the end of 2018.

- Zuckerberg is planning to testify before the US House Energy and Commerce Committee on April 11 about how his company keeps user information safe; on Wednesday he added that he will send either Mike Schroepfer, Facebook’s chief technology officer, or Chris Cox, its chief product officer, to answer additional questions in other countries.

- Zuckerberg said that as far as he’s aware, Facebook’s board has not discussed whether he should step down from his post as chairman.

- Asked whether Facebook might consider collecting less of the user data that can be used to target ads at individuals—a move that might be bad for business but, perhaps, good for users—Zuckerberg said users want ads that are relevant to their interests, such as tech or skiing. “People tell us that if they’re going to see ads they want the ads to be good,” he said, adding soon after that “the feedback is overwhelmingly on the side of wanting a better experience.”

- He said Facebook is working on a scorecard, of sorts, to measure the prevalence of content the company wants to “drive down,” such as fake news and hate speech. Zuckerberg hopes to make this available for other online platforms to use, too.

- Zuckerberg said that since the Cambridge Analytica scandal broke in March, the company hasn’t noticed a “meaningful impact” in the form of users or advertisers defecting. Still, social-media protests such as people spreading hashtags like “#DeleteFacebook” speak to “people feeling like this is a massive breach of trust,” he said, “and we have a lot of work to do to repair that.”

- Despite a report that he isn’t quite ready to commit to following a strict new European Union data-protection rule outside of Europe, Zuckerberg said the company intends to make the same kinds of privacy controls and settings available everywhere, not just in the EU.

- Asked about how he protects his personal privacy online, Zuckerberg, who described himself (somewhat jokingly) as a “power user of the internet,” dodged specifics and instead advised that people follow best practices around security, like changing passwords regularly and using two-factor authentication.