The Problem with Serious Games: Solved

Here’s an imaginary scenario: you’re a law enforcement officer confronted with John, a 21-year-old male suspect who is accused of breaking into a private house on Sunday evening and stealing a laptop, jewelry, and some cash. Your job is to find out whether John has an alibi and if so whether it is coherent and believable.

That’s exactly the kind of scenario that police officers the world over face on a regular basis. But how do you train for such a situation? How do you learn the skills necessary to gather the right kind of information?

An increasingly common way of doing this is with serious games, those designed primarily for purposes other than entertainment. In the last 10 years or so, medical, military, and commercial organizations all over the world have begun to experiment with game-based scenarios designed to teach people how to perform their jobs and tasks in realistic situations.

But there is a problem with serious games that require realistic interaction with another person. It’s relatively straightforward to design one or two scenarios that are coherent, lifelike, and believable, but it’s much harder to keep generating new ones.

Imagine in the example above that John is a computer-generated character. What kind of activities could he describe that would serve as a believable, coherent alibi for Sunday evening? And how could he do it a thousand times, each time describing a different, realistic alibi? Therein lies the problem.

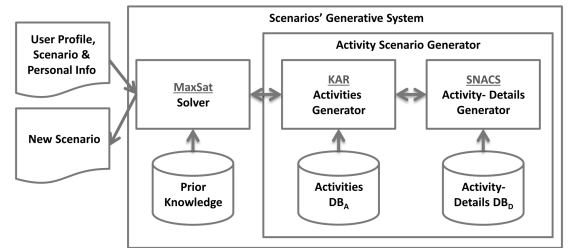

Today, Sigal Sina at Bar-Ilan University in Israel, and a couple pals, say they’ve solved this problem. These guys have come up with a novel way of generating ordinary, realistic scenarios that can be cut and pasted into a serious game to serve exactly this purpose. The secret sauce in their new approach is to crowdsource the new scenarios from real people using Amazon’s Mechanical Turk service.

The approach is straightforward. Sina and co simply ask Turkers what they did during each one-hour period throughout various days, offering bonuses to those who provide the most varied detail.

They then analyze the answers, categorizing activities by factors such as the times they are performed, the age and sex of the person doing them, the number of people involved, and so on.

This then allows a computer game to cut and paste activities into the action at appropriate times. So, for example, the computer can select an appropriate alibi for John on a Sunday evening by choosing an activity described by a male Turker for the same time while avoiding activities that a woman might describe for a Friday morning, which might otherwise seem unbelievable. The computer also changes certain details in the narrative, such as names, locations, and so on to make the narrative coherent with John’s profile.
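The selection step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the code Sina and co actually use: the `Activity` and `Profile` fields, the `max_age_gap` cutoff, and the `{friend}` and `{place}` placeholders are all hypothetical, and the sample activities are invented for the example.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Activity:
    """One crowdsourced activity record (all fields are hypothetical)."""
    day: str       # day of the week, e.g. "Sunday"
    hour: int      # start of the one-hour slot, 0-23
    sex: str       # sex reported by the contributor
    age: int       # age reported by the contributor
    template: str  # narrative with {friend} and {place} placeholders


@dataclass(frozen=True)
class Profile:
    """The game character who needs an alibi (hypothetical fields)."""
    name: str
    sex: str
    age: int
    friend: str
    place: str


def select_alibi(profile, day, hour, activities, rng, max_age_gap=10):
    """Pick an activity that fits the profile and time slot, then adapt it."""
    candidates = [
        a for a in activities
        if a.day == day
        and a.hour == hour
        and a.sex == profile.sex
        and abs(a.age - profile.age) <= max_age_gap  # assumed cutoff
    ]
    if not candidates:
        return None  # nothing in the pool fits this slot
    chosen = rng.choice(candidates)
    # Swap in details from the character's profile so the story stays coherent.
    return chosen.template.format(friend=profile.friend, place=profile.place)


# Invented sample data standing in for the Turkers' answers.
ACTIVITIES = [
    Activity("Sunday", 19, "male", 23, "I watched a game with {friend} at {place}."),
    Activity("Sunday", 19, "male", 25, "I had dinner with {friend} near {place}."),
    Activity("Friday", 9, "female", 34, "I met {friend} for coffee at {place}."),
    Activity("Sunday", 19, "male", 58, "I played cards with {friend} at {place}."),
]

john = Profile(name="John", sex="male", age=21, friend="Mike", place="the mall")
alibi = select_alibi(john, "Sunday", 19, ACTIVITIES, random.Random(0))
print(alibi)
```

With this pool, John can only draw on the first two entries: the Friday morning activity fails the time filter and the card game fails the age filter. A larger pool of crowdsourced answers is what would give the character a wide range of different alibis.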

Sina and co tested the resulting narratives by asking dozens of people to rate how authentic and coherent they seemed and compared this to how they judged the authenticity of the original, real narratives supplied by the Turkers. The results are impressive, with no significant difference between the ratings.

These guys have even begun to use the new technique in real games. “We have begun integrating this approach within a scenario-based training application for novice investigators within the law enforcement departments to improve their questioning skills,” say Sina and co. These investigators will now find that John has an almost unlimited set of new alibis to draw on.

That solves a significant problem with serious games. Until now, developers have had to spend an awful lot of time producing realistic content by hand. Automating that work, a process known as procedural content generation, has always been straightforward for things like textures, models, and terrain in game settings. Now, thanks to this new crowdsourcing technique, it can be just as easy for human interactions in serious games, too.

Ref: arxiv.org/abs/1402.5034: Using the Crowd to Generate Content for Scenario-Based Serious-Games
