Collective data rights can stop big tech from obliterating privacy

Protecting individual data is not enough when the harms are collective

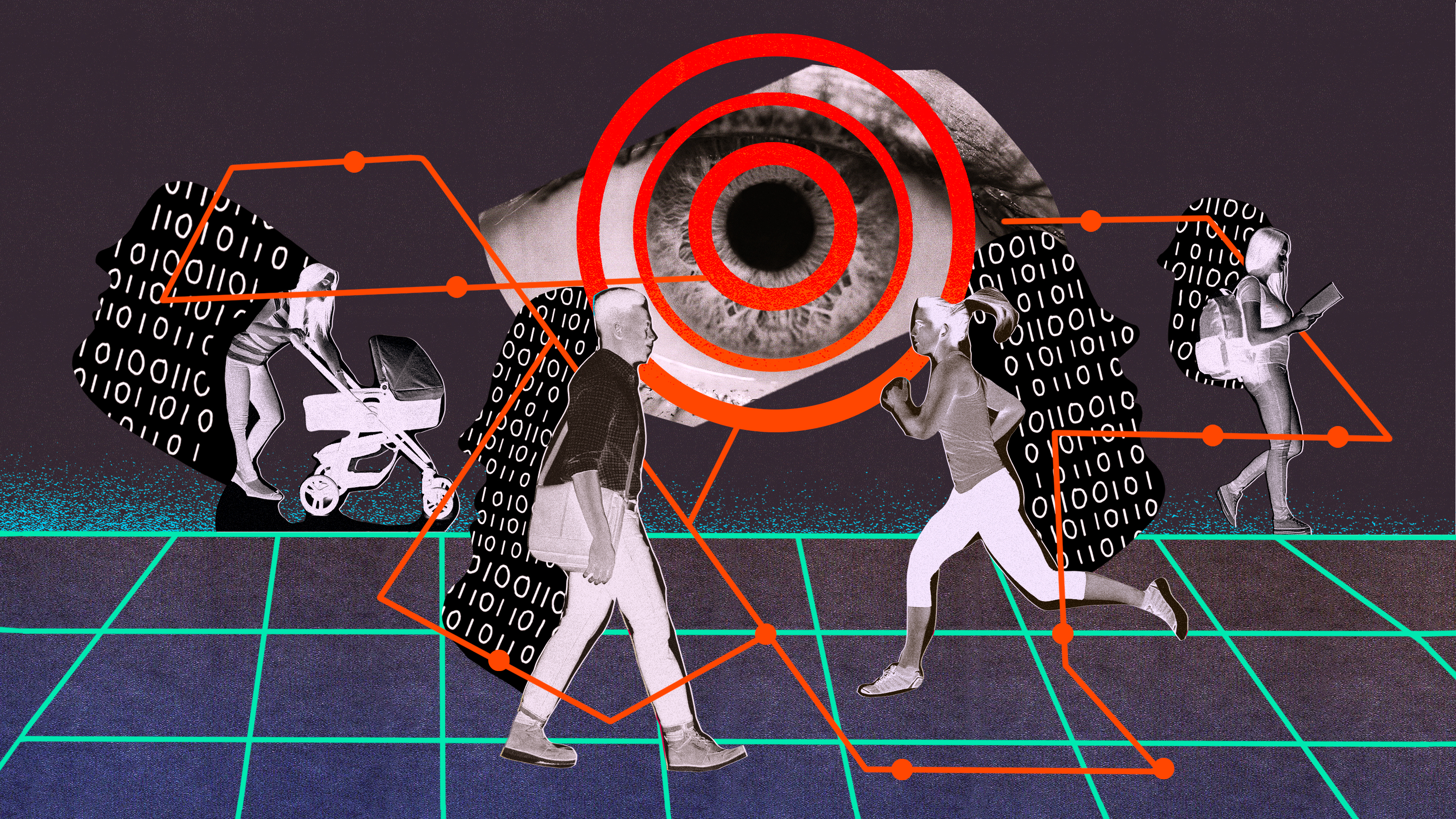

Every person engaged with the networked world constantly creates rivers of data. We do this in ways we are aware of, and ways that we aren’t. Corporations are eager to take advantage.

Take, for instance, NumberEight, a startup that, according to Wired, “helps apps infer user activity based on data from a smartphone’s sensors: whether they’re running or seated, near a park or museum, driving or riding a train.” New services based on such technology “will combine what they know about a user’s activity on their own apps with information on what they’re doing physically at the time.” With this information, “instead of building a profile to target, say, women over 35, a service could target ads to ‘early risers.’”

Such ambitions are widespread. As this recent Harvard Business Review article puts it, “Most CEOs recognize that artificial intelligence has the potential to completely change how organizations work. They can envision a future in which, for example, retailers deliver individualized products before customers even request them—perhaps on the very same day those products are made.” As corporations use AI in more and more distinct domains, the article foretells, “their AI capabilities will rapidly compound, and they’ll find that the future they imagined is actually closer than it once appeared.”

Even today, let alone in such a future, technology can completely obliterate privacy. Coming up with laws and policies to stop it from doing so is a vital task for governments. As the Biden administration and Congress contemplate federal privacy legislation, they must not succumb to a common fallacy. Laws guarding the privacy of people’s data are not only about protecting individuals. They are also about protecting our rights as members of groups, as part of society as a whole.

The harm to any one individual in a group that results from a violation of privacy rights might be relatively small or hard to pin down, but the harm to the group as a whole can be profound. Say Amazon uses its data on consumer behavior to figure out which products are worth copying and then undercuts the manufacturers of products it sells, like shoes or camera bags. Though the immediate harm is to the shoemaker or the camera-bag maker, the longer-term—and ultimately more lasting—harm is to consumers, who are robbed over the long run of the choices that come from transacting in a truly open and equitable marketplace. And whereas the shoemaker or camera-bag manufacturer can try to take legal action, it’s much tougher for consumers to demonstrate how Amazon’s practices harm them.

This can be a tricky concept to understand. Class action lawsuits, where many individuals join together even though each might have suffered only a small harm, are a good conceptual analogy. Big tech companies understand the commercial benefits they can derive from analyzing the data of groups while superficially protecting the data of individuals through mathematical techniques like differential privacy. But regulators continue to focus on protecting individuals or, at best, protected classes like people of particular genders, ages, ethnicities, or sexual orientations.

If an algorithm discriminates against people by sorting them into groups that do not fall into these protected classes, antidiscrimination laws don’t apply in the United States. (Profiling techniques like those Facebook uses to help machine-learning models sort users are probably illegal under European Union data protection laws, but this has not yet been litigated.) Many people will not even know that they were profiled or discriminated against, which makes it tough to bring legal action. They no longer feel the unfairness, the injustice, firsthand—and that has historically been a precondition to launching a claim.

Individuals should not have to fight for their data privacy rights and be responsible for every consequence of their digital actions. Consider an analogy: people have a right to safe drinking water, but they aren’t urged to exercise that right by checking the quality of the water with a pipette every time they have a drink at the tap. Instead, regulatory agencies act on everyone’s behalf to ensure that all our water is safe. The same must be done for digital privacy: it isn’t something the average user is, or should be expected to be, personally competent to protect.

There are two parallel approaches that should be pursued to protect the public.

One is better use of class or group actions, otherwise known as collective redress actions. Historically, these have been limited in Europe, but in November 2020 the European Parliament passed a measure that requires all 27 EU member states to implement measures allowing for collective redress actions across the region. Compared with the US, the EU has stronger laws protecting consumer data and promoting competition, so class or group action lawsuits in Europe can be a powerful tool for lawyers and activists to force big tech companies to change their behavior even in cases where the per-person damages would be very low.

Class action lawsuits have most often been used in the US to seek financial damages, but they can also be used to force changes in policy and practice. They can work hand in hand with campaigns to change public opinion, especially in consumer cases (for example, by forcing Big Tobacco to admit to the link between smoking and cancer, or by paving the way for car seatbelt laws). They are powerful tools when there are thousands, if not millions, of similar individual harms, which add up to help prove causation. Part of the problem is getting the right information to sue in the first place. Government efforts, like a lawsuit brought against Facebook in December by the Federal Trade Commission (FTC) and a group of 46 states, are crucial. As the tech journalist Gilad Edelman puts it, “According to the lawsuits, the erosion of user privacy over time is a form of consumer harm—a social network that protects user data less is an inferior product—that tips Facebook from a mere monopoly to an illegal one.” In the US, as the New York Times recently reported, private lawsuits, including class actions, often “lean on evidence unearthed by the government investigations.” In the EU, however, it’s the other way around: private lawsuits can open up the possibility of regulatory action, which is constrained by the gap between EU-wide laws and national regulators.

Which brings us to the second approach: a little-known 2016 French law called the Digital Republic Bill. The Digital Republic Bill is one of the few modern laws focused on automated decision making. The law currently applies only to administrative decisions taken by public-sector algorithmic systems. But it provides a sketch for what future laws could look like. It says that the source code behind such systems must be made available to the public. Anyone can request that code.

Importantly, the law enables advocacy organizations to request information on the functioning of an algorithm and the source code behind it even if they don’t represent a specific individual or claimant who is allegedly harmed. The need to find a “perfect plaintiff” who can prove harm in order to file a suit makes it very difficult to tackle the systemic issues that cause collective data harms. Laure Lucchesi, the director of Etalab, a French government office in charge of overseeing the bill, says that the law’s focus on algorithmic accountability was ahead of its time. Other laws, like the European General Data Protection Regulation (GDPR), focus too heavily on individual consent and privacy. But both the data and the algorithms need to be regulated.

Apple promises in one advertisement: “Right now, there is more private information on your phone than in your home. Your locations, your messages, your heart rate after a run. These are private things. And they should belong to you.” Apple is reinforcing this individualist’s fallacy: by failing to mention that your phone stores more than just your personal data, the company obfuscates the fact that the really valuable data comes from your interactions with your service providers and others. The notion that your phone is the digital equivalent of your filing cabinet is a convenient illusion. Companies actually care little about your personal data; that is why they can pretend to lock it in a box. The value lies in the inferences drawn from your interactions, which are also stored on your phone—but that data does not belong to you.

Google’s acquisition of Fitbit is another example. Google promises “not to use Fitbit data for advertising,” but the lucrative predictions Google needs aren’t dependent on individual data. As a group of European economists argued in a recent paper put out by the Centre for Economic Policy Research, a think tank in London, “it is enough for Google to correlate aggregate health outcomes with non-health outcomes for even a subset of Fitbit users that did not opt out from some use of using their data, to then predict health outcomes (and thus ad targeting possibilities) for all non-Fitbit users (billions of them).” The Google-Fitbit deal is essentially a group data deal. It positions Google in a key market for health data while enabling it to triangulate different data sets and make money from the inferences used by health and insurance markets.
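
To make the economists' point concrete, here is a minimal sketch of how group-level inference could work in principle. It is an illustration only, not a description of any company's actual system: the records, the "early_riser" and "night_owl" labels, the heart-rate figures, and the function names are all invented for the example.

```python
from collections import defaultdict
from statistics import mean

# Entirely made-up records from the few users who shared health data:
# (non-health signal, resting heart rate).
opted_in = [
    ("early_riser", 58),
    ("early_riser", 62),
    ("night_owl", 74),
    ("night_owl", 78),
]


def learn_group_averages(records):
    """Average the health measurement within each non-health group."""
    by_group = defaultdict(list)
    for signal, resting_heart_rate in records:
        by_group[signal].append(resting_heart_rate)
    return {signal: mean(values) for signal, values in by_group.items()}


def infer_for_outsiders(group_averages, outsiders):
    """Assign each outsider the average of the group they resemble."""
    return {
        name: group_averages[signal]
        for name, signal in outsiders.items()
        if signal in group_averages
    }


group_averages = learn_group_averages(opted_in)

# People who never wore the device; only a non-health signal is known.
outsiders = {"person_a": "early_riser", "person_b": "night_owl"}
predictions = infer_for_outsiders(group_averages, outsiders)
print(predictions)
```

Nothing in the sketch touches the outsiders' own health data, because none exists. The prediction about them is built wholly from what a small group of other people shared, which is why consent given or withheld by one individual does little to limit it.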

What policymakers must do

Draft bills have sought to fill this gap in the United States. In 2019 Senators Cory Booker and Ron Wyden introduced an Algorithmic Accountability Act, which subsequently stalled in Congress. The act would have required firms to undertake algorithmic impact assessments in certain situations to check for bias or discrimination. But in the US this crucial issue is more likely to be taken up first in laws applying to specific sectors such as health care, where the danger of algorithmic bias has been magnified by the pandemic’s disparate impacts on US population groups.

In late January, the Public Health Emergency Privacy Act was reintroduced to the Senate and House of Representatives by Senators Mark Warner and Richard Blumenthal. This act would ensure that data collected for public health purposes is not used for any other purpose. It would prohibit the use of health data for discriminatory, unrelated, or intrusive purposes, including commercial advertising, e-commerce, or efforts to control access to employment, finance, insurance, housing, or education. This would be a great start. Going further, a law that applies to all algorithmic decision making should, inspired by the French example, focus on hard accountability, strong regulatory oversight of data-driven decision making, and the ability to audit and inspect algorithmic decisions and their impact on society.

Three elements are needed to ensure hard accountability: (1) clear transparency about where and when automated decisions take place and how they affect people and groups, (2) the public’s right to offer meaningful input and call on those in authority to justify their decisions, and (3) the ability to enforce sanctions. Crucially, policymakers will need to decide, as has been recently suggested in the EU, what constitutes a “high risk” algorithm that should meet a higher standard of scrutiny.

Clear transparency

The focus should be on public scrutiny of automated decision making and the types of transparency that lead to accountability. This includes revealing the existence of algorithms, their purpose, and the training data behind them, as well as their impacts—whether they have led to disparate outcomes, and on which groups if so.

Public participation

The public has a fundamental right to call on those in power to justify their decisions. This “right to demand answers” should not be limited to consultative participation, where people are asked for their input and officials move on. It should include empowered participation, where public input is mandated prior to the rollout of high-risk algorithms in both the public and private sectors.

Sanctions

Finally, the power to sanction is key for these reforms to succeed and for accountability to be achieved. It should be mandatory to establish auditing requirements for data targeting, verification, and curation, to equip auditors with this baseline knowledge, and to empower oversight bodies to enforce sanctions, not only to remedy harm after the fact but to prevent it.

The issue of collective data-driven harms affects everyone. A Public Health Emergency Privacy Act is a first step. Congress should then use the lessons from implementing that act to develop laws that focus specifically on collective data rights. Only through such action can the US avoid situations where inferences drawn from the data companies collect haunt people’s ability to access housing, jobs, credit, and other opportunities for years to come.
