I asked an AI to tell me how beautiful I am

Computers are ranking the way people look—and the results are influencing the things we do, the posts we see, and the way we think.

I first came across Qoves Studio through its popular YouTube channel, which offers polished videos like “Does the hairstyle make a pretty face?,” “What makes Timothée Chalamet attractive?,” and “How jaw alignment influences social perceptions” to millions of viewers.

Qoves started as a studio that would airbrush images for modeling agencies; now it is a “facial aesthetics consultancy” that promises answers to the “age-old question of what makes a face attractive.” Its website, which features chalky sketches of Parisian-looking women wearing lipstick and colorful hats, offers a range of services related to its plastic surgery consulting business: advice on beauty products, for example, and tips on how to enhance images using your computer. But its most compelling feature is the “facial assessment tool”: an AI-driven system that promises to look at images of your face to tell you how beautiful you are—or aren’t—and then tell you what you can do about it.

Last week, I decided to try it. Following the site’s instructions, I washed off the little makeup I was wearing and found a neutral wall brightened by a small window. I asked my boyfriend to take some close-up photos of my face at eye level. I tried hard to not smile. It was the opposite of glamorous.

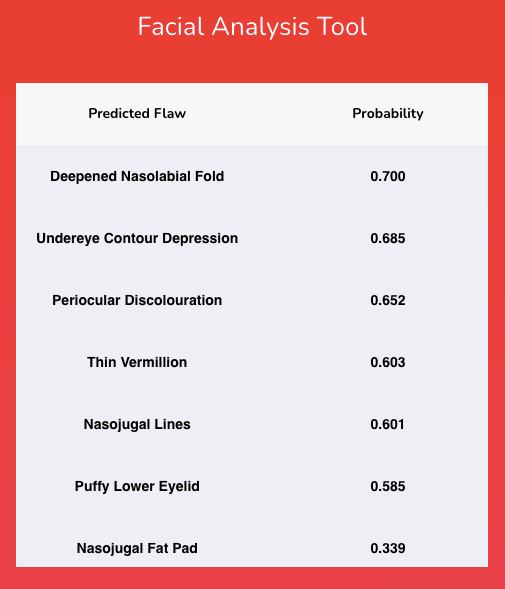

I uploaded the most bearable photo, and within milliseconds Qoves returned a report card of the 10 “predicted flaws” on my face. Topping the list was a 0.7 probability of nasolabial folds, followed by a 0.69 probability of under-eye contour depression, and a 0.66 probability of periocular discoloration. In other words, it suspected (correctly) that I have dark bags under my eyes and smile lines, both of which register as problematic with the AI.

The report helpfully returned recommendations that I might take to address my flaws. First, a suggested article about smile lines informed me that they “may need injectable or surgical intervention.” If I wished, I could upgrade to a fuller report of surgical recommendations, written by doctors, at tiers of $75, $150, and $250. It also suggested five serums I could try first, each featuring a different skin-care ingredient—retinol, neuropeptides, hyaluronic acid, EGF, and TNS. I’d only heard of retinol. Before bed that night I looked through the ingredients of my face moisturizer to see what it contained.

I was intrigued. The tool had broken my appearance down into a list of bite-size issues—a laser trained on what it thought was wrong with my appearance.

Qoves, however, is just one small startup with 20 employees in an ocean of facial analysis companies and services. There is a growing industry of facial analysis tools driven by AI, each claiming to parse an image for characteristics such as emotions, age, or attractiveness. Companies working on such technologies are a darling of venture capital, and such algorithms are used in everything from online cosmetic sales to dating apps. These beauty scoring tools, readily available for purchase online, use face analysis and computer vision to evaluate things like symmetry, eye size, and nose shape to sort through and rank millions of pieces of visual content and surface the most attractive people.

These algorithms train a sort of machine gaze on photographs and videos, spitting out numerical values akin to credit ratings, where the highest scores can unlock the best online opportunities for likes, views, and matches. If that prospect isn’t concerning enough, the technology also exacerbates other problems, say experts. Most beauty scoring algorithms are littered with inaccuracies, ageism, and racism—and the proprietary nature of many of these systems means it is impossible to get insight into how they really work, how much they’re being used, or how they affect users.

“Mirror, mirror on the wall …”

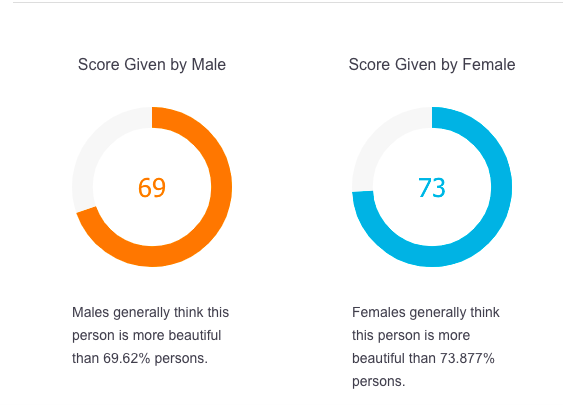

Tests like the ones available from Qoves are all over the internet. One is run by the world’s largest open facial recognition platform, Face++. Its beauty scoring system was developed by the Chinese imaging company Megvii and, like Qoves, uses AI to examine your face. But instead of detailing what it sees in clinical language, it boils down its findings into a percentage grade of likely attractiveness. In fact, it returns two results: one score that predicts how men might respond to a picture, and the other that represents a female perspective. Using the service’s free demo and the same unglamorous photo, I quickly got my results. “Males generally think this person is more beautiful than 69.62% of persons” and “Females generally think this person is more beautiful than 73.877%”.

It was anticlimactic, but better than I had expected. A year into the pandemic, I can see the impact of stress, weight, and closed hair salons on my appearance. I retested the tool with two other photos of myself from Before, both of which I liked. My scores improved, nudging me close to the top 25 percent.

Beauty is often subjective and personal: our loved ones appear attractive to us when they are healthy and happy, and even when they are sad. Other times it’s a collective judgment: ranking systems like beauty pageants or magazine lists of the most beautiful people show how much we treat attractiveness like a prize. This assessment can also be ugly and uncomfortable: when I was a teenager, the boys in my high school would shout numbers from one to 10 at girls who walked past in the hallway. But there’s something eerie about a machine rating the beauty of somebody’s face—it’s just as unpleasant as shouts at school, but the mathematics of it feel disturbingly un-human.

Under the hood

Although the concept of ranking people’s attractiveness is not new, the way these particular systems work is a relatively fresh development: Face++ released its beauty scoring feature in 2017.

When asked for detail on how the algorithm works, a spokesperson for Megvii would only say that it was “developed about three years ago in response to local market interest in entertainment-related apps.” The company’s website indicates that Chinese and Southeast Asian faces were used to train the system, which attracted 300,000 developers soon after it launched, but there is little other information.

A spokesperson for Megvii says that Face++ is an open-source platform and it cannot control the ways in which developers might use it, but the website suggests “cosmetic sales” and “matchmaking” as two potential applications.

The company’s known customers include the Chinese government’s surveillance system, which blankets the country with CCTV cameras, as well as Alibaba and Lenovo. Megvii recently filed for an IPO and is currently valued at $4 billion. According to reporting in the New York Times, it is one of three facial recognition companies that assisted the Chinese government in identifying citizens who might belong to the Uighur ethnic minority.

How convolutional neural networks work

Convolutional neural networks look at images and videos to parse and categorize the objects they depict at multiple levels of analysis. One layer of the network might identify, say, the edges of a human hand; another might find fingers, another hands, another arms, and another people.

Further layers respond to higher levels of abstraction: if this is a tree, what has the network learned about trees? If it’s a street, what are the things on a street that it should expect to see? For example, a convolutional neural network might rate an image of a face with a sharp jawline as very likely to be attractive because it determined that jaw sharpness was a particularly strong indicator of the human beauty score it was trained to replicate.
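
To make the idea of a single layer concrete, here is a minimal sketch in Python, assuming nothing more than NumPy. It slides one hand-written 3×3 filter across a toy image whose left half is dark and right half is bright. The filter is a Sobel kernel, chosen here for illustration; a trained network learns its own filters from data. The function name `convolve2d` and the toy image are assumptions made for this example, not anything Qoves or Face++ has disclosed.

```python
import numpy as np

def convolve2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the response map."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            # Multiply the patch by the filter element-wise and sum the result.
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# Toy 6x6 grayscale image: dark left half (0.0), bright right half (1.0).
image = np.zeros((6, 6))
image[:, 3:] = 1.0

# Hand-written vertical-edge filter (Sobel), standing in for a learned one.
sobel_x = np.array([[-1.0, 0.0, 1.0],
                    [-2.0, 0.0, 2.0],
                    [-1.0, 0.0, 1.0]])

# ReLU keeps positive responses only, as a typical CNN layer would.
edges = np.maximum(convolve2d(image, sobel_x), 0.0)
print(edges)  # each of the four rows is [0. 4. 4. 0.]
```

The filter responds only in the columns where dark meets bright, which is the sense in which an early layer “finds edges.” Later layers apply the same kind of operation to these response maps rather than to raw pixels.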

Qoves, meanwhile, was more forthcoming about how its face analysis works. The company, which is based in Australia, was founded as a photo retouching firm in 2019 but switched to a combination of AI-driven analysis and plastic surgery in 2020. Its system uses a common deep-learning technique known as a convolutional neural network, or CNN. The CNNs used to rate attractiveness typically train on a data set of hundreds of thousands of pictures that have already been manually scored for attractiveness by people. By looking at the pictures and the existing ratings, the system infers what factors people consider attractive so that it can make predictions when shown new images.

Other big companies have invested in beauty AIs in recent years. They include the American cosmetics retailer Ulta Beauty, valued at $18 billion, which developed a skin analysis tool. Nvidia and Microsoft backed a “robot beauty pageant” in 2016, which challenged entrants to develop the best AI to determine attractiveness.

According to Evan Nisselson, a partner at LDV Capital, vision technology is still in its early stages, which creates “significant investment opportunities and upside.” LDV estimates that there will be 45 billion cameras in the world by next year, not including those used for manufacturing or logistics, and claims that visual data will be the key data input for AI systems in the near future. Nisselson says facial analysis is “a huge market” that will, over the course of time, involve “re-invention of the tech stack to get to the same or closer to or even better than a human’s eye.”

Qoves founder Shafee Hassan claims that beauty scoring might be even more widespread. He says that social media apps and platforms often use systems that scan people’s faces, score them for attractiveness, and give more attention to those who rank higher. “What we’re doing is doing something similar to Snapchat, Instagram, and TikTok,” he says, “but we’re making it more transparent.”

He adds: “They’re using the same neural network and they’re using the same techniques, but they’re not telling you that [they’ve] identified that your face has these nasolabial folds, it has a thin vermilion, it has all of these things, therefore [they’re] going to penalize you as being a less attractive individual.”

I reached out to a number of companies—including dating services and social media platforms—and asked whether beauty scoring is part of their recommendation algorithms. Instagram and Facebook have denied using such algorithms. TikTok and Snapchat declined to comment on the record.

“Big black boxes”

Recent advances in deep learning have dramatically changed the accuracy of beauty AIs. Before deep learning, facial analysis relied on feature engineering, where a scientific understanding of facial features would guide the AI. The formula for an attractive face, for example, might be set to reward wide eyes and a sharp jaw. “Imagine looking at a human face and seeing a Leonardo da Vinci–style depiction of all the proportions and the spacing between the eyes and that type of thing,” says Serge Belongie, a computer vision professor at Cornell University. With the advent of deep learning, “it became all about big data and big black boxes of neural net computation that just crunched on huge amounts of labeled data,” he says. “And at the end of the day, it works better than all the other stuff that we toiled on for decades.”

But there’s a catch. “We’re still not totally sure how it works,” says Belongie. “Industry’s happy, but academia is a little puzzled.” Because beauty is highly subjective, the best a deep-learning beauty AI can do is to accurately regurgitate the preferences of the training data used to teach it. Some AI systems now rate attractiveness as accurately as the humans in a training set, but that means the systems also display an equal amount of bias. And importantly, because the system is inscrutable, placing guardrails on the algorithm that might minimize the bias is a difficult and computationally costly task.
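
The way a model inherits its raters’ preferences can be sketched in a few lines of Python. This is an illustration under loud assumptions, not any company’s method: a plain linear regression stands in for a deep network, the two “facial measurements” are random numbers, and the hypothetical raters are simulated so that they mark down one trait (`penalized_trait`) that has nothing to do with the other.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 2000

# Two made-up facial measurements, each scaled to the range 0..1.
jaw_sharpness = rng.uniform(0.0, 1.0, n)
penalized_trait = rng.uniform(0.0, 1.0, n)

# Hypothetical human raters: they reward a sharp jaw and mark down the
# second trait. The numbers 2.0 and -1.5 are invented for this sketch.
human_scores = (5.0 + 2.0 * jaw_sharpness - 1.5 * penalized_trait
                + rng.normal(0.0, 0.5, n))

# Fit score ~ w0 + w1 * jaw + w2 * trait by ordinary least squares.
X = np.column_stack([np.ones(n), jaw_sharpness, penalized_trait])
weights, *_ = np.linalg.lstsq(X, human_scores, rcond=None)

def predict(jaw, trait):
    """Score a face from its two measurements using the fitted weights."""
    return float(weights @ np.array([1.0, jaw, trait]))

# Two faces that differ only in the trait the raters disliked.
gap = predict(0.5, 1.0) - predict(0.5, 0.0)
print("learned weights:", np.round(weights, 2))
print("score gap between otherwise identical faces:", round(gap, 2))
```

The fitted model is “accurate” in the only sense available to it: it recovers the raters’ penalty and applies it to every new face. In a linear model that penalty is at least visible as a single weight; in a deep network it is spread across millions of parameters, which is part of why guardrails are hard to add.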

Belongie says there are applications of this sort of technology that are more anodyne and less problematic than scoring a face for attractiveness—a tool that can recommend the most beautiful photograph of a sunset on your phone, for example. But beauty scoring is different. “That, to me, is a very scary endeavor,” he says.

Even if training data and commercial uses are as unbiased and safe as possible, computer vision has technical limitations when it comes to human skin tones. The imaging chips found in cameras are preset to process a particular range of them. Historically “some skin tones were simply left off the table,” according to Belongie, “which means that the photos themselves may not have even been developed with certain skin tones in mind. Even the noblest of ambitions in terms of capturing all forms of human beauty may not have a chance because the brightness values aren’t even represented accurately.”

And these technical biases manifest as racism in commercial applications. In 2018, Lauren Rhue, an economist who is an assistant professor of information systems at the University of Maryland, College Park, was shopping for facial recognition tools that might aid her work studying digital platforms when she stumbled on this set of unusual products.

“I realized that there were scoring algorithms for beauty,” she says. “And I thought, that seems impossible. I mean, beauty is completely in the eye of the beholder. How can you train an algorithm to determine whether or not someone is beautiful?” Studying these algorithms soon became a new focus for her research.

Looking at how Face++ rated beauty, she found that the system consistently ranked darker-skinned women as less attractive than white women, and that faces with European-like features such as lighter hair and smaller noses scored higher than those with other features, regardless of how dark their skin was. The Eurocentric bias in the AI reflects the bias of the humans who scored the photos used to train the system, codifying and amplifying it—regardless of who is looking at the images. Chinese beauty standards, for example, prioritize lighter skin, wide eyes, and small noses.

Beauty scores, she says, are part of a disturbing dynamic between an already unhealthy beauty culture and the recommendation algorithms we come across every day online. When scores are used to decide whose posts get surfaced on social media platforms, for example, it reinforces the definition of what is deemed attractive and takes attention away from those who do not fit the machine’s strict ideal. “We’re narrowing the types of pictures that are available to everybody,” says Rhue.

It’s a vicious cycle: with more eyes on the content featuring attractive people, those images are able to gather higher engagement, so they are shown to still more people. Eventually, even when a high beauty score is not a direct reason a post is shown to you, it is an indirect factor.
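
The cycle can be simulated with a toy feed. The sketch below is hypothetical throughout: the function `simulate_feed`, the scores, the click rate, and the assumption that ranking favors popular posts more than proportionally (the squared term) are all invented for illustration. The beauty score is used directly only in the first round; after that, the feed ranks on accumulated clicks alone, and every post is equally likely to be clicked once seen.

```python
def simulate_feed(beauty_scores, rounds=5, impressions=1000.0, click_rate=0.1):
    """Return, for each round, every post's share of that round's impressions."""
    clicks = [0.0] * len(beauty_scores)
    history = []
    for r in range(rounds):
        if r == 0:
            # The beauty score decides exposure exactly once.
            weights = list(beauty_scores)
        else:
            # Afterward only past engagement counts. Squaring is an assumed
            # stand-in for a ranking that favors already-popular posts.
            weights = [c ** 2 for c in clicks]
        total = sum(weights)
        shares = [w / total for w in weights]
        for i, share in enumerate(shares):
            # Same click rate for every post: viewers show no preference here.
            clicks[i] += share * impressions * click_rate
        history.append(shares)
    return history

history = simulate_feed([0.6, 0.5])
for round_number, shares in enumerate(history):
    print(round_number, [round(s, 3) for s in shares])
```

Even though viewers in this toy model click on both posts at the same rate, the post with the higher initial score takes a larger share of impressions every round. The score is no longer a direct input after round zero, yet it still shapes what gets shown.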

In a study published in 2019, she looked at how two algorithms, one for beauty scores and one for age predictions, affected people’s opinions. Participants were shown images of people and asked to evaluate the beauty and age of the subjects. Some of the participants were shown the score generated by an AI before giving their answer, while others were not shown the AI score at all. She found that participants without knowledge of the AI’s rating did not exhibit additional bias; however, knowing how the AI ranked people’s attractiveness made people give scores closer to the algorithmically generated result. Rhue calls this the “anchoring effect.”

“Recommendation algorithms are actually changing what our preferences are,” she says. “And the challenge from a technology perspective, of course, is to not narrow them too much. When it comes to beauty, we are seeing much more of a narrowing than I would have expected.”

At Qoves, Hassan says he has tried to tackle the issue of race head on. When conducting a detailed facial analysis report—the kind that clients pay for—his studio attempts to use data to categorize the face according to ethnicity so that everyone won’t simply be evaluated against a European ideal. “You can escape this Eurocentric bias just by becoming the best-looking version of yourself, the best-looking version of your ethnicity, the best-looking version of your race,” he says.

But Rhue says she worries about this kind of ethnic categorization being embedded deeper into our technological infrastructure. “The problem is, people are doing it, no matter how we look at it, and there’s no type of regulation or oversight,” she says. “If there is any type of strife, people will try to figure out who belongs in which category.”

“Let’s just say I’ve never seen a culturally sensitive beauty AI,” she says.

Recommendation systems don’t have to be designed to evaluate for attractiveness to end up doing it anyway. Last week, German broadcaster BR reported that one AI used to evaluate potential employees displayed biases based on appearance. And in March 2020, the parent company of TikTok, ByteDance, came under criticism for a memo that instructed content moderators to suppress videos that displayed “ugly facial looks,” people who were “chubby,” those with “a disformatted face” or “lack of front teeth,” “senior people with too many wrinkles,” and more. Twitter recently released an auto-cropping tool for photographs that appeared to prioritize white people. When tested on images of Barack Obama and Mitch McConnell, the auto-cropping AI consistently cropped out the former president.

“Who’s the fairest of them all?”

When I first spoke to Qoves founder Hassan by video call in January, he told me, “I’ve always believed that attractive people are a race of their own.”

When he started out in 2019, he says, his friends and family were very critical of his business venture. But Hassan believes he is helping people become the best possible version of themselves. He takes his inspiration from the 1997 movie Gattaca, which takes place in a “not-too-distant future” where genetic engineering is the default means of conception. Genetic discrimination segments society, and Ethan Hawke’s character, who was conceived naturally, has to steal the identity of a genetically perfected person in order to get around the system.

It’s usually considered a deeply dystopian film, but Hassan says it left an unexpected mark.

“It was very interesting to me, because the whole idea was that a person can determine their fate. The way they want to look is part of their fate,” he says. “With how far modern medicine has come, I didn’t see any reason for not evaluating your flaws, because there are ways you can fix it.”

His clients seem to agree. He claims that many of them are actors and actresses, and that the company receives anywhere from 50 to 100 orders for detailed medical reports each day—so many it is having trouble keeping up with demand. For Hassan, fighting the coming “classism” between those who are deemed beautiful and those society thinks are ugly is core to his mission. “What we’re trying to do is help the average person,” he told me.

There are other ways to “help the average person,” however. Every expert I spoke to said that disclosure and transparency from companies that use beauty scoring are paramount. Belongie believes that pressuring companies to reveal the workings of their recommendation algorithms will help keep users safe. “The company should own it and say yes, we are using facial beauty prediction and here’s the model. And here’s a representative gallery of faces that we think, based on your browsing behavior, you find attractive. And I think that the user should be aware of that and be able to interact with it.” He says that features like Facebook’s ad transparency tool are a good start, but “if the companies are not doing that, and they’re doing something like Face++ where they just casually assume we all agree on beauty ... there may be power brokers who simply made that decision.”

Of course, the industry would have to first confess that it uses these scoring models in the first place, and the public would have to be aware of the issue. And though the past year has brought attention and criticism to facial recognition technology, several researchers I spoke with said that they were surprised by the lack of awareness about this use of it. Rhue says the most surprising thing about beauty scoring has been how few people are examining it as a topic. She is not persuaded that the technology should be developed at all.

As Hassan reviewed my own flaws with me, he assured me that a good moisturizer and some weight loss should do the trick. And though the aesthetics of my face won’t determine my career trajectory, he encouraged me to take my results seriously.

“Beauty,” he reminded me, “is a currency.”

