The US military is trying to read minds

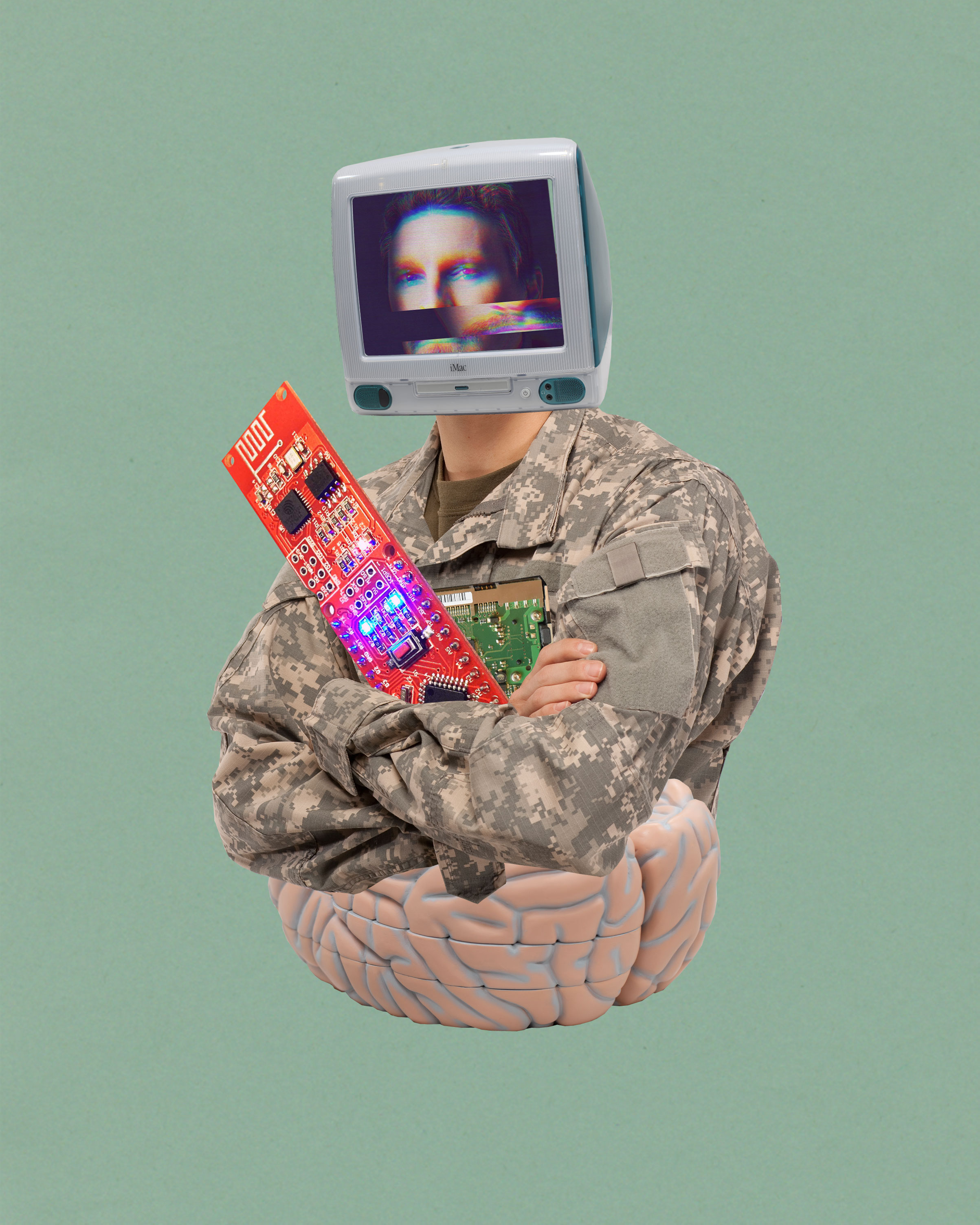

A new DARPA research program is developing brain-computer interfaces that could control “swarms of drones, operating at the speed of thought.” What if it succeeds?

In August, three graduate students at Carnegie Mellon University were crammed together in a small, windowless basement lab, using a jury-rigged 3D printer frame to zap a slice of mouse brain with electricity.

The brain fragment, cut from the hippocampus, looked like a piece of thinly sliced garlic. It rested on a platform near the center of the contraption. A narrow tube bathed the slice in a solution of salt, glucose, and amino acids. This kept it alive, after a fashion: neurons in the slice continued to fire, allowing the experimenters to gather data. An array of electrodes beneath the slice delivered the electric zaps, while a syringe-like metal probe measured how the neurons reacted. Bright LED lamps illuminated the dish. The setup, to use the lab members’ lingo, was kind of hacky.

A monitor beside the rig displayed stimulus and response: jolts of electricity from the electrodes were followed, milliseconds later, by neurons firing. Later, the researchers would place a material with the same electrical and optical properties as a human skull between the slice and the electrodes, to see if they could stimulate the mouse hippocampus through the simulated skull as well.

They were doing this because they want to be able to detect and manipulate signals in human brains without having to cut through the skull and touch delicate brain tissue. Their goal is to eventually develop accurate and sensitive brain-computer interfaces that can be put on and taken off like a helmet or headband—no surgery required.

Human skulls are less than a centimeter thick: the exact thickness varies from person to person and place to place. They act as a blurring filter that diffuses waveforms, be they electrical currents, light, or sound. Neurons in the brain can be as small as a few thousandths of a millimeter in diameter and generate electrical impulses as weak as a twentieth of a volt.

The students’ experiment was intended to collect a baseline of data with which they could compare results from a new technique that Pulkit Grover, the team’s principal investigator, hopes to develop.

“Nothing like this is [now] possible, and it’s really hard to do,” Grover says. He co-leads one of six teams taking part in the Next-generation Nonsurgical Neurotechnology Program, or N³, a $104 million effort launched this year by the Defense Advanced Research Projects Agency, or DARPA. While Grover’s team is manipulating electrical and ultrasound signals, other teams use optical or magnetic techniques. If any of these approaches succeeds, the results will be transformative.

Surgery is expensive, and surgery to create a new kind of super-warrior is ethically complicated. A mind-reading device that requires no surgery would open up a world of possibilities. Brain-computer interfaces, or BCIs, have been used to help people with quadriplegia regain limited control over their bodies, and to enable veterans who lost limbs in Iraq and Afghanistan to control artificial ones. N³ is the US military’s first serious attempt to develop BCIs with a more belligerent purpose. “Working with drones and swarms of drones, operating at the speed of thought rather than through mechanical devices—those types of things are what these devices are really for,” says Al Emondi, the director of N³.

UCLA computer scientist Jacques J. Vidal first used the term “brain-computer interface” in the early 1970s; it’s one of those phrases, like “artificial intelligence,” whose definition evolves as the capabilities it describes develop. Electroencephalography (EEG), which records electrical activity in the brain using electrodes placed on the skull, might be regarded as the first interface between brains and computers. By the late 1990s, researchers at Case Western Reserve University had used EEG to interpret a quadriplegic person’s brain waves, enabling him to move a computer cursor by way of a wire extending from the electrodes on his scalp.

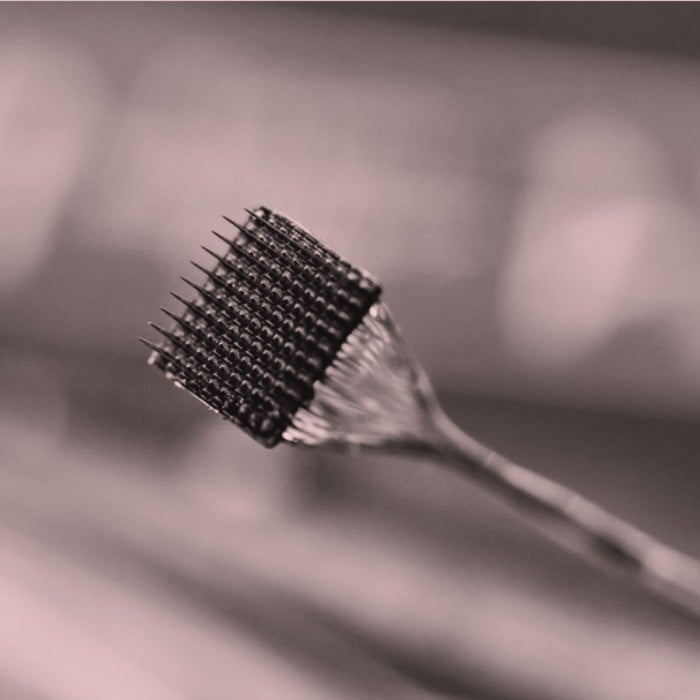

Both invasive and noninvasive techniques for reading from the brain have advanced since then. So too have devices that stimulate the brain with electrical signals to treat conditions such as epilepsy. Arguably the most powerful mechanism developed to date is called a Utah array. It looks like a little bed of spikes, about half the size of a pinkie nail in total, that can penetrate a given part of the brain.

One day in 2010, while on vacation in North Carolina’s Outer Banks, Ian Burkhart dived into the ocean and banged his head on a sandbar. He crushed his spinal cord and lost function from the sixth cervical nerve on down. He could still move his arms at the shoulder and elbow, but not his hands or legs. Physical therapy didn’t help much. He asked his doctors at Ohio State University’s Wexner Medical Center if there was anything more they could do. It turned out that Wexner was hoping to conduct a study together with Battelle, a nonprofit research company, to see if they could use a Utah array to reanimate the limbs of a paralyzed person.

Where EEG shows the aggregate activity of countless neurons, Utah arrays can record the impulses from a small number of them, or even from a single one. In 2014, doctors implanted a Utah array in Burkhart’s head. The array measured the electric field at 96 places inside his motor cortex, 30,000 times per second. Burkhart came into the lab several times a week for over a year, and Battelle researchers trained their signal processing algorithms to capture his intentions as he thought, arduously and systematically, about how he would move his hand if he could.
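As a rough, back-of-the-envelope illustration of how much data those figures imply, the short Python sketch below multiplies the 96 recording sites by the 30,000 measurements per second. The function names and the one-hour session length are assumptions made for illustration; the article does not say how long Burkhart’s sessions lasted or how the data were stored.

```python
# Figures quoted in the article.
CHANNELS = 96            # recording sites in the motor cortex
SAMPLE_RATE_HZ = 30_000  # measurements per second at each site


def samples_per_second(channels=CHANNELS, rate_hz=SAMPLE_RATE_HZ):
    """Total measurements the array produces each second."""
    return channels * rate_hz


def samples_in_session(minutes, channels=CHANNELS, rate_hz=SAMPLE_RATE_HZ):
    """Total measurements over a session of the given length.

    The session length is a hypothetical input, not a figure from the article.
    """
    return samples_per_second(channels, rate_hz) * minutes * 60


if __name__ == "__main__":
    print(f"{samples_per_second():,} measurements per second")
    print(f"{samples_in_session(60):,} measurements in a hypothetical one-hour session")
```

At nearly three million measurements every second, it is easier to see why the signal processing algorithms, and not just the implant, took more than a year of training sessions to tune.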

A thick cable, connected to a pedestal coming out of Burkhart’s skull, sent the impulses measured by the Utah array to a computer. The computer decoded them and then transmitted signals to a sleeve of electrodes that nearly covered his right forearm. The sleeve activated his muscles to perform the motions he intended, such as grasping, lifting, and emptying a bottle, or removing a credit card from his wallet.

That made Burkhart one of the first people to regain control of his own muscles through such a “neural bypass.” Battelle—another of the teams in the N³ program—is now working with him to see if they can achieve the same results without a skull implant.

That means coming up not just with new devices, but with better signal processing techniques to make sense of the weaker, muddled signals that can be picked up from outside the skull. That’s why the Carnegie Mellon N³ team is headed by Grover—an electrical engineer by training, not a neuroscientist.

Soon after Grover arrived at Carnegie Mellon, a friend at the University of Pittsburgh Medical School invited him to sit in on clinical meetings for epilepsy patients. He began to suspect that a lot more information about the brain could be inferred from EEG than anyone was giving it credit for—and, conversely, that clever manipulation of external signals could have effects deep within the brain. A few years later, a team led by Edward Boyden at MIT’s Center for Neurobiological Engineering published a remarkable paper that went far beyond Grover’s general intuition.

Boyden’s group had applied two electrical signals, of high but slightly different frequencies, to the outside of the skull. These affected not the neurons close to the surface of the brain but those deeper inside it. Through interference, the two signals combined to produce a lower-frequency signal, oscillating at the difference between the two frequencies, that stimulated the neurons to fire.
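The arithmetic behind that effect can be sketched in a few lines of Python. This is a minimal illustration, not the MIT group’s method: the frequencies of 2,000 and 2,010 hertz are example values chosen here, the function names are hypothetical, and the sketch models only how two summed sine waves rise and fall together, not how neurons respond to them.

```python
import math


def summed_signal(t, f1, f2):
    """Sum of two equal-amplitude sine waves at frequencies f1 and f2 (Hz)."""
    return math.sin(2 * math.pi * f1 * t) + math.sin(2 * math.pi * f2 * t)


def envelope(t, f1, f2):
    """Slowly varying amplitude of the sum.

    From sin(a) + sin(b) = 2 sin((a + b) / 2) cos((a - b) / 2), the sum is a
    fast oscillation whose amplitude is |2 cos(pi * (f2 - f1) * t)|.
    """
    return abs(2 * math.cos(math.pi * (f2 - f1) * t))


def beat_frequency(f1, f2):
    """Rate (Hz) at which the envelope rises and falls."""
    return abs(f2 - f1)


if __name__ == "__main__":
    f1, f2 = 2000.0, 2010.0  # illustrative values, assumed for this example
    print("beat frequency (Hz):", beat_frequency(f1, f2))
    for ms in (0, 25, 50, 75, 100):
        t = ms / 1000
        print(f"t = {ms:3d} ms  envelope = {envelope(t, f1, f2):.3f}")
```

With these example values the combined signal swells and fades ten times per second, even though each input oscillates about two thousand times per second.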

Grover and his group are now working to extend Boyden’s results with hundreds of electrodes placed on the surface of the skull, both to precisely target small regions in the interior of the brain and to “steer” the signal so that it can switch from one brain region to another while the electrodes stay in place. It’s an idea, Grover says, that neuroscientists would be unlikely to have had.

Meanwhile, at the Johns Hopkins University Applied Physics Laboratory (APL), another N³ team is using a completely different approach: near-infrared light.

Current understanding is that neural tissue swells and contracts when neurons fire electrical signals. Those signals are what scientists record with EEG, a Utah array, or other techniques. APL’s Dave Blodgett argues that the swelling and contraction of the tissue is just as good a signal of neural activity, and he wants to build an optical system that can measure those changes.

The techniques of the past couldn’t capture such tiny physical movements. But Blodgett and his team have already shown that they can see the neural activity of a mouse when it flicks a whisker. Ten milliseconds after a whisker flicks, Blodgett records the corresponding neurons firing using his optical measurement technique. (There are 1,000 milliseconds in a second, and 1,000 microseconds in a millisecond.) In exposed neural tissue, his team has recorded neural activity within 10 microseconds—just as quickly as a Utah array or other electrical methods.

The next challenge is to do all that through the skull. This might sound impossible: after all, skulls are not transparent to visible light. But near-infrared light can travel through bone. Blodgett’s team fires low-powered infrared lasers through the skull and then measures how the light from those lasers is scattered. He hopes this will let them infer what neural activity is taking place. The approach is less well proven than using electrical signals, but these are exactly the types of risks that DARPA programs are designed to take.

Back at Battelle, Gaurav Sharma is developing a new type of nanoparticle that can cross the blood-brain barrier. It’s what DARPA calls a minimally invasive technique. The nanoparticle has a magnetically sensitive core inside a shell made of a material that generates electricity when pressure is applied. If these nanoparticles are subjected to a magnetic field, the inner core puts stress on the shell, which then generates a small current. A magnetic field is much better than light for “seeing” through the skull, Sharma says. Different magnetic coils allow the scientists to target specific parts of the brain, and the process can be reversed—electric currents can be converted to magnetic fields so the signals can be read.

It remains to be seen which, if any, of these approaches will succeed. Other N³ teams are using various combinations of light, electric, magnetic, and ultrasound waves to get signals in and out of the brain. The science is undoubtedly exciting. But that excitement can obscure how ill-equipped the Pentagon and corporations like Facebook, which are also developing BCIs, are to address the host of ethical, legal, and social questions a noninvasive BCI gives rise to. How might swarms of drones controlled directly by a human brain change the nature of warfare? Emondi, the head of N³, says that neural interfaces will be used however they are needed. But military necessity is a malleable criterion.

In August, I visited a lab at Battelle where Burkhart had spent the previous several hours thinking into a new sleeve, outfitted with 150 electrodes that stimulate his arm muscles. He and researchers hoped they could get the sleeve to work without having to rely on the Utah array to pick up brain signals.

Ian Burkhart, at left, was paralyzed by an accident and is working with researchers at Battelle to develop better brain-computer interfaces.

Burkhart had a Utah array, shown at right, implanted in his motor cortex in 2014. The Battelle group is now trying to develop a way to read his brain signals without a surgical implant.

If your spinal cord has been broken, thinking about moving your arm is hard work. Burkhart was tired. “There’s a graded performance: how hard am I thinking about something translates into how much movement,” he told me. “Whereas before [the accident] you don’t think, ‘Open your hand’”—the rest of us just pick up the bottle. “But I’m super motivated for it—more than anyone else in the room,” he said. Burkhart made it easy to see the technology’s potential.

He told me that since he started working with the Utah array, he’s become stronger and more dexterous even when he isn’t using it—so much so that he now lives on his own, requiring assistance only a few hours a day. “I talk more with my hands. I can hold onto my phone,” he says. “If it gets worked out to something that I can use every day, I’d wear it as long as I can.”

Paul Tullis is a writer living in Amsterdam.
