Predicting Three Mile Island

It was still dark outside when the first thing went wrong.

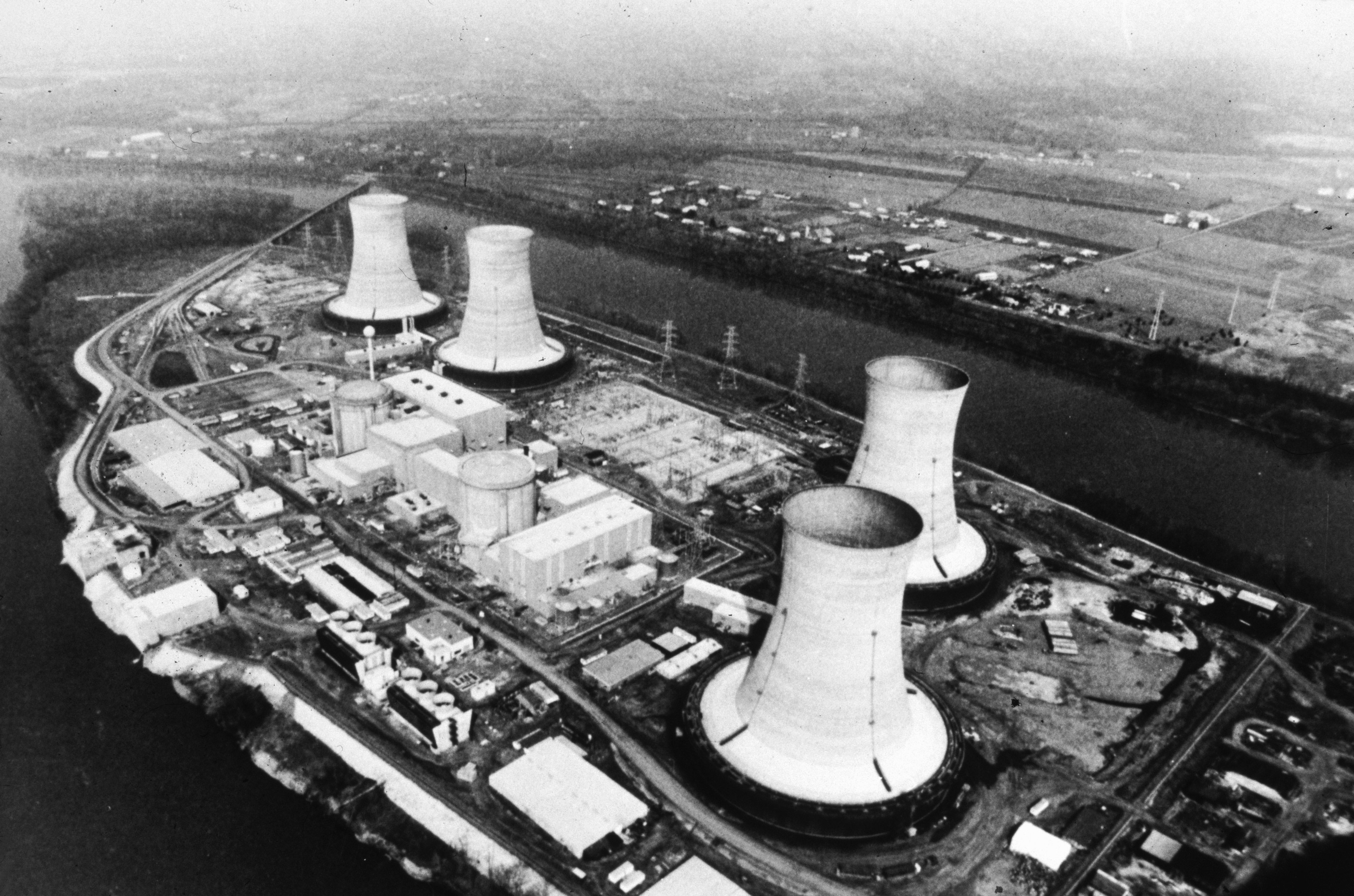

At 4 a.m. on March 28, 1979, a minor malfunction caused a relief valve to open on one of the nuclear reactor units at the Three Mile Island power plant. The valve got stuck and released cooling water from around the core, causing the reactor to automatically shut off.

That alone need not have caused any harm had the situation been properly managed, but several instrument malfunctions meant that the workers running the plant had no way of knowing it had lost coolant. Amid the chaos of ringing alarms and flashing warning lights, the operators took a series of actions that made conditions much worse, allowing the reactor core to partially melt down. The plant’s containment systems prevented a serious release of radioactive material, but a small amount of radioactive xenon, krypton, and iodine gas leaked into the atmosphere, and about 140,000 people evacuated their homes.

For many, the widely publicized incident was a wake-up call about the potential dangers of nuclear power. No new nuclear plants were approved for construction in the US for more than 30 years afterward.

The disaster shook the industry to its core, but Norman Rasmussen, PhD ’56, a professor in MIT’s Department of Nuclear Engineering, had warned of a very similar scenario four years earlier. And as fate would have it, the kind of meltdown he had warned about happened just 13 miles from his hometown of Harrisburg, Pennsylvania.

In 1972, the US Atomic Energy Commission hired Rasmussen, a father of two who had taught at MIT since finishing his PhD in 1956, to study the public risk from nuclear accidents in the United States. The resulting report, written by Rasmussen and a team of more than 40 experts, was the first probabilistic study of nuclear power.

The Reactor Safety Study (WASH-1400, often referred to simply as the Rasmussen Report) used probabilistic risk assessment techniques to estimate the likelihood of various scenarios that might unfold at nuclear power plants. Prior studies had relied on deterministic methods of risk assessment, which focused on the consequences of a given scenario rather than on how likely it was to occur. Rasmussen’s report pointed out that small-break loss-of-coolant accidents were a more probable threat than large-break ones. And beyond assessing the reliability of hardware such as pumps and valves, Rasmussen and his team argued that human reliability had to be factored in, because if automatic systems malfunctioned, humans would have to intervene.

This ran contrary to the prevailing notions about nuclear power safety management. The physics community at the time largely assumed that the built-in safety mechanisms on nuclear power plants were sufficient to safely handle any accident in a timely fashion—in other words, that technical back-ups were more important than human actions.

“The human role was largely ignored and, if considered at all, the operators were assumed to only undertake actions favorable to safety,” wrote Jan van Erp in an Argonne National Laboratory report on the Three Mile Island accident.

Van Erp concluded that the Rasmussen Report should have served as a warning of the disaster to come. But when it was published in 1975, the analysis drew substantial criticism, and, ironically, most of it held that the report underplayed the risks. The American Physical Society said nuclear power posed far greater dangers than Rasmussen and his team predicted, and the Union of Concerned Scientists published a 150-page critique of the report.

A subsequent review by the Nuclear Regulatory Commission, which had taken over the Atomic Energy Commission’s regulatory role in 1975, found that Rasmussen had indeed underestimated the uncertainties in some situations. One reviewer called the report “inscrutable.” In January 1979, the NRC decided to withdraw its endorsement of the executive summary of Rasmussen’s study.

Late on the night before the announcement, Rasmussen got a call about the NRC’s plans. He later told his friend and colleague Michael Golay, another MIT professor, that he lay awake all night worrying.

“He was truthfully upset, as you’d expect,” Golay says. “He was about to be embarrassed nationally.”

Two months later, Three Mile Island melted down.

The meltdown was caused by a small loss of coolant—not a large one, as other reports had envisioned. And, as Rasmussen had predicted, the incident was exacerbated by a series of human errors.

It turns out that Rasmussen and his colleagues were nearly spot-on about two other things as well: they judged an accident like Three Mile Island to be relatively likely, and they correctly predicted that the health effects of such an accident would be negligible.

“[Three Mile Island] was essentially a vindication of the report,” Golay says.

In the years after the Three Mile Island accident, people began to recognize the value of Rasmussen’s study and its use of risk assessment for nuclear power. In 1995, the NRC issued a formal policy statement encouraging the use of such analysis.

“They basically backed away from their 1979 rejection [of the Rasmussen Report] and its methods and said, ‘Use the methods. They can help us do a better job,’” Golay says.

The risk assessment method that Rasmussen pioneered came into increasingly wide use, and today it is one of the essential tools for evaluating nuclear safety around the world. Rasmussen died in 2003, having lived to see his report accepted by the nuclear physics community.

“Rasmussen’s strength was he was really good at integrating information from diverse sources into a consistent approach to a problem,” Golay says. “It’s amazing how that first report has stood the test of time. People still cite it. It’s hard to get it right to such a degree on the first pass.”
