The first DDoS attack was 20 years ago. This is what we’ve learned since.

July 22, 1999, is an ominous date in the history of computing. On that day, a computer at the University of Minnesota suddenly came under attack from a network of 114 other computers infected with a malicious script called Trin00.

This code caused the infected computers to send superfluous data packets to the university, overwhelming its computer and preventing it from handling legitimate requests. In this way, the attack knocked out the university computer for two days.

This was the world’s first distributed denial of service (DDoS) attack. But it didn’t take long for the tactic to spread. In the months that followed, numerous other websites became victims, including Yahoo, Amazon, and CNN. Each was flooded with data packets that prevented it from accepting legitimate traffic. And in each case, the malicious data packets came from a network of infected computers.

Since then, DDoS attacks have become common. Malicious actors also make a lucrative trade in extorting protection money from websites they threaten to attack. They even sell their services on the dark web. A 24-hour DDoS attack against a single target can cost as little as $400.

But the cost to the victim can be huge in terms of lost revenue or damaged reputation. That in turn has created a market for cyberdefense that protects against these kinds of attacks. In 2018, this market was worth a staggering €2 billion. All this raises the important question of whether more can be done to defend against DDoS attacks.

Today, 20 years after the first attack, Eric Osterweil from George Mason University in Virginia and colleagues explore the nature of DDoS attacks, how they have evolved, and whether there are foundational problems with network architecture that need to be addressed to make it safer. The answers, they say, are far from straightforward: “The landscape of cheap, compromisable, bots has only become more fertile to miscreants, and more damaging to Internet service operators.”

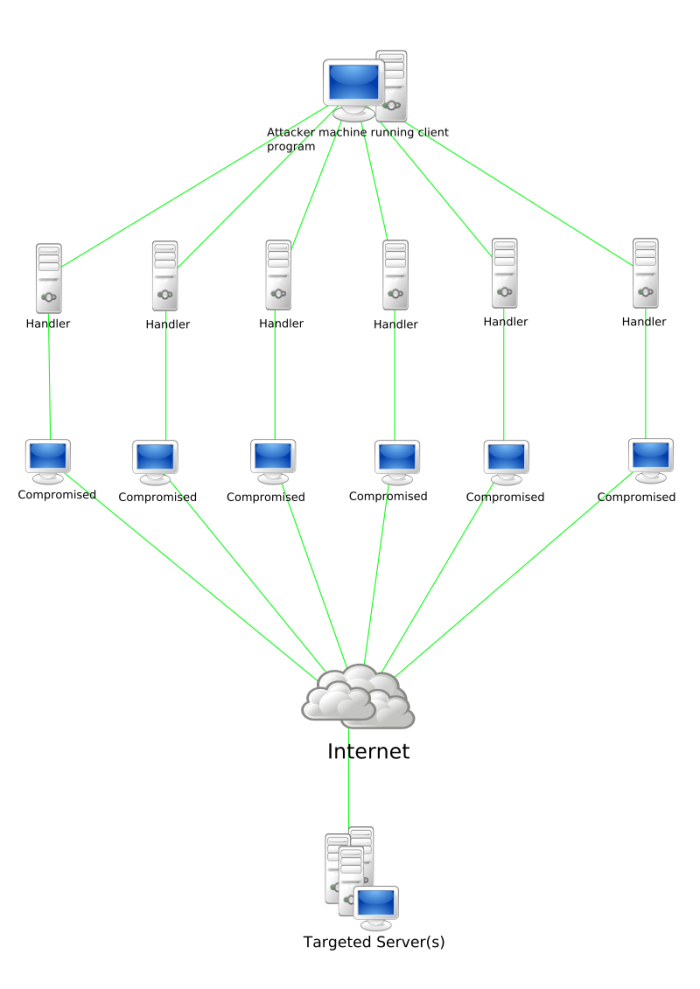

First, some background. DDoS attacks usually unfold in stages. In the first stage, a malicious intruder infects a computer with software designed to spread across a network. This first computer is known as the “master,” because it can control any subsequent computers that become infected. The other infected computers carry out the actual attack and are known as “daemons.”

Common victims at this first stage are university or college computer networks, because they are connected to a wide range of other devices.

A DDoS attack begins when the master computer sends a command to the daemons that includes the address of the target. The daemons then start sending large numbers of data packets to this address. The goal is to overwhelm the target with traffic for the duration of the attack. The largest attacks today send malicious data packets at a rate of terabits per second.

The attackers often go to considerable lengths to hide their location and identity. For example, the daemons often use a technique called IP address spoofing to hide their address on the internet. Master computers can also be difficult to trace because they need only send a single command to trigger an attack. And an attacker can choose to use daemons only in countries that are difficult to access, even though they themselves may be located elsewhere.

Defending against these kinds of attacks is hard because it requires concerted actions by a range of operators. The first line of defense is to prevent the creation of the daemon network in the first place. This requires system administrators to regularly update and patch the software they use and to encourage good hygiene among users of their network—for example, regularly changing passwords, using personal firewalls, and so on.

Internet service providers can also provide some defense. Their role is in forwarding data packets from one part of a network to another, depending on the address in each data packet’s header. This is often done with little or no consideration for where the data packet came from.

But that could change. The header contains not only the target address but also the source address. So in theory, it is possible for an ISP to examine the source address and block packets that contain obviously spoofed sources.

However, this is computationally expensive and time consuming. And since the ISPs are not necessarily the targets in a DDoS attack, they have limited incentive to employ expensive mitigation procedures.
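The source-address check described above can be illustrated with a minimal sketch. It assumes an ISP knows which address prefixes it has assigned to a customer and drops any packet whose source falls outside them. The prefixes and the function name `is_plausible_source` are hypothetical, chosen for illustration only; real routers do this in hardware or in router configuration, not in Python.

```python
import ipaddress

# Hypothetical prefixes assigned to one customer connection.
# These are reserved documentation ranges, not real allocations.
CUSTOMER_PREFIXES = [
    ipaddress.ip_network("192.0.2.0/24"),
    ipaddress.ip_network("2001:db8:1::/48"),
]


def is_plausible_source(source: str, allowed=CUSTOMER_PREFIXES) -> bool:
    """Return True if the packet's source address lies inside an allowed prefix."""
    addr = ipaddress.ip_address(source)
    # Compare only against prefixes of the same IP version (IPv4 vs IPv6).
    return any(addr.version == net.version and addr in net for net in allowed)


# A packet claiming a source outside the customer's prefixes is
# "obviously spoofed" from the ISP's point of view and could be dropped.
for claimed in ["192.0.2.55", "203.0.113.9", "2001:db8:1::5"]:
    verdict = "forward" if is_plausible_source(claimed) else "drop"
    print(claimed, verdict)
```

The check itself is cheap for a single packet. The cost the article refers to comes from applying it to every packet on every link and keeping the list of valid prefixes accurate.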

Finally, the target itself can take steps to mitigate the effects of an attack. One obvious step is to filter out the bad data packets as they arrive. That works if they are easy to spot and if the computational resources are in place to cope with the volume of malicious traffic.

But these resources are expensive and must be continually updated with the latest threats. They sit unused most of the time, springing into action only when an attack occurs. And even then, they may not cope with the biggest attacks. So this kind of mitigation is rare.
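As one illustration of filtering at the target, the sketch below caps how many packets each source address may send within a short time window. This is only one possible heuristic and an assumption on our part, not a method taken from Osterweil and colleagues. The class name `SlidingWindowFilter` and its parameters are hypothetical. Because daemons often spoof their addresses, a per-source limit like this helps only when the source addresses are genuine.

```python
from collections import defaultdict, deque


class SlidingWindowFilter:
    """Allow at most max_packets per source within window_seconds."""

    def __init__(self, max_packets: int, window_seconds: float):
        self.max_packets = max_packets
        self.window_seconds = window_seconds
        # Arrival times of recently accepted packets, keyed by source address.
        self._seen = defaultdict(deque)

    def allow(self, source: str, now: float) -> bool:
        times = self._seen[source]
        # Forget arrivals that have fallen out of the window.
        while times and now - times[0] >= self.window_seconds:
            times.popleft()
        if len(times) >= self.max_packets:
            return False  # over the limit: drop this packet
        times.append(now)
        return True


# Hypothetical limit: 3 packets per second from any one source.
packet_filter = SlidingWindowFilter(max_packets=3, window_seconds=1.0)
burst = [packet_filter.allow("198.51.100.7", 0.1 * i) for i in range(5)]
print(burst)
```

The table of per-source state also hints at why this kind of defense is expensive: it grows with the number of distinct sources, which is exactly what a large attack maximizes.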

Another option is to outsource the problem to a cloud-based service that is better equipped to handle such threats. This centralizes the problems of DDoS mitigation in “scrubbing centers,” and many cope well. But even these can have trouble dealing with the largest attacks.

All that raises the question of whether more can be done. “How can our network infrastructure be enhanced to address the principles that enable the DDoS problem?” ask Osterweil and co. And they say the 20th anniversary of the first attack should offer a good opportunity to study the problem in more detail. “We believe that what is needed are investigations into what fundamentals enable and exacerbate DDoS,” they say.

One important observation about DDoS attacks is that the attack and the defense are asymmetric. A DDoS attack is typically launched from many daemons all over the world, and yet the defense takes place largely at a single location—the node that is under attack.

An important question is whether networks could or should be modified to include a kind of distributed defense against these attacks. For example, one way forward might be to make it easier for ISPs to filter out spoofed data packets.

Another idea is to make data packets traceable as they travel across the internet. Each ISP could mark a sample of data packets—perhaps one in 20,000—as they are routed so that their journey could later be reconstructed. That would allow the victim and law enforcement agencies to track the source of an attack, even after it has ended.
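The sampling idea can be sketched as a small simulation. It assumes each router on the path stamps a packet with probability 1 in 20,000, that a later stamp overwrites an earlier one, and that routers which do not stamp a packet increment a hop counter on any stamp it already carries. The router names, the single fixed route, and the overwrite rule are illustrative assumptions, not details from the paper.

```python
import random

MARK_PROBABILITY = 1 / 20_000        # sampling rate mentioned in the article
ROUTE = ["isp-a", "isp-b", "isp-c"]  # hypothetical hops, attacker side first


def forward(route, rng, p=MARK_PROBABILITY):
    """Send one packet along route; return (router, hops_to_victim) or None."""
    mark = None
    for router in route:
        if rng.random() < p:
            mark = (router, 0)             # this router stamps the packet
        elif mark is not None:
            mark = (mark[0], mark[1] + 1)  # existing stamp is one hop further back
    return mark


def reconstruct(marks):
    """Order the distinct stamps from farthest to nearest the victim."""
    distinct = set(marks)
    return [router for router, _ in sorted(distinct, key=lambda m: -m[1])]


rng = random.Random(0)  # fixed seed so the run is repeatable
marks = [m for m in (forward(ROUTE, rng) for _ in range(1_000_000)) if m is not None]
path = reconstruct(marks)
print(len(marks), "stamped packets; reconstructed path:", path)
```

With a flood of one million packets, each router is expected to stamp about 1,000,000 / 20,000 = 50 of them. That is enough for the victim to see every hop, while legitimate low-volume traffic is almost never stamped.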

These and other ideas have the potential to make the internet a safer place. But they require agreement and willingness to act. Osterweil and co think the time is ripe for action: “This is a call to action: the research community is our best hope and best qualified to take up this call.”

Ref: arxiv.org/abs/1904.02739 : 20 Years of DDoS: A Call to Action
