Robots That Learn Through Repetition, Not Programming

Eugene Izhikevich thinks you shouldn’t have to write code in order to teach robots new tricks. “It should be more like training a dog,” he says. “Instead of programming, you show it consistent examples of desired behavior.”

Izhikevich’s startup, Brain Corporation, based in San Diego, has developed an operating system for robots called BrainOS to make that possible. To teach a robot running the software to pick up trash, for example, you would use a remote control to repeatedly guide its gripper to perform that task. After just minutes of repetition, the robot would take the initiative and start doing the task for itself. “Once you train it, it’s fully autonomous,” says Izhikevich, who is cofounder and CEO of the company.

Izhikevich says the approach will make it easier to produce low-cost service robots capable of simple tasks. Programming robots to behave intelligently normally requires significant expertise, he says, pointing out that the most successful home robot today is the Roomba, released in 2002. The Roomba is preprogrammed to perform one main task: driving around at random to cover as much of an area of floor as possible.

Brain Corporation hopes to make money by providing its software to entrepreneurs and companies that want to bring intelligent, low-cost robots to market. Later this year, Brain Corporation will start offering certain partners a ready-made circuit board with a smartphone processor and BrainOS installed. Building a trainable robot would involve connecting that “brain” to a physical robot body.

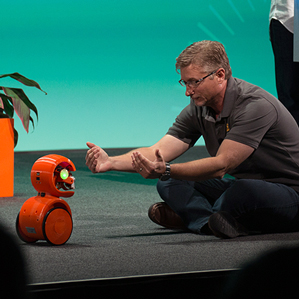

The chip on that board is made by mobile processor company Qualcomm, which is an investor in Brain Corporation. At the Mobile Developers Conference in San Francisco last week, a wheeled, twin-camera robot powered by one of Brain Corporation’s circuit boards was trained live on stage.

In one demo, the robot, called EyeRover, was steered along a specific route around a chair, sofa, and other obstacles a few times. It then repeated the route by itself. In a second demo, the robot was taught to come when a person beckoned to it. One person held one hand close to the robot’s twin cameras, so that EyeRover could lock onto it. A second person then maneuvered the robot forward and back in synchronization with the trainer’s hand. After being led through a rehearsal of the movements just twice, the robot correctly came when summoned.

Those quick examples are hardly sophisticated. But Izhikevich says more extensive training conducted over days or weeks could teach a robot to perform a more complicated task such as pulling weeds out of the ground. A company would need to train only one robot, and could then copy its software to new robots with the same design before they headed to store shelves.

Brain Corporation’s software is based on a combination of several different artificial intelligence techniques. Much of the power comes from using artificial neural networks, which are inspired by the way brain cells communicate, says Izhikevich. Brain Corporation was previously collaborating with Qualcomm on new kinds of chips that write artificial neural networks into silicon (see “Qualcomm to Build Neuro-Inspired Chips”). Those “neuromorphic” chips, as they are known, are purely research projects for the moment. But they might eventually offer a more powerful and efficient way to run software like BrainOS.

Brain Corporation previously experimented with reinforcement learning, where a robot starts out randomly trying different behaviors, and a trainer rewards it with a virtual treat when it does the right thing. The approach worked, but had its downsides. “Robots tend to harm themselves when they do that,” says Izhikevich.

Training robots through demonstration is a common technique in research labs, says Manuela Veloso, a robotics professor at Carnegie Mellon University. But the technique has been slower to catch on in the world of commercial robotics, she says. The only example on the market is the two-armed Baxter robot, aimed at light manufacturing. It can be trained in a new production line task by someone manually moving its arms to direct it through the motions it needs to perform (see “This Robot Could Transform Manufacturing”).

Sonia Chernova, an assistant professor in robotics at Worcester Polytechnic Institute, says that most other industrial robot companies are now working to add that type of learning to their own robots. But she adds that training could be tricky for mobile robots, which typically have to deal with more complex environments.

Izhikevich acknowledges that training a robot via demonstration, while faster than programming it, produces less predictable behavior. You wouldn’t want to use the technique to ensure that an autonomous car could detect jaywalkers, for example, he says. But for many simple tasks, it could be acceptable. “Missing 2 percent of the weeds or strawberries you were supposed to pick is okay,” he says. “You can get them tomorrow.”
