Inside Facebook’s Artificial Intelligence Engine Room

Access Facebook from the western half of North America and there’s a good chance your data will be pulled from a computer cooled by the juniper- and sage-scented air of central Oregon’s high desert.

In the town of Prineville, home to roughly 9,000 people, Facebook stores the data of hundreds of millions more. Rows and rows of computers stand inside four giant buildings totaling nearly 800,000 square feet, precisely aligned to let in the dry and generally cool summer winds that blow in from the northwest. The aisles of stacked servers with blinking blue and green lights make a dull roar as they process logins, likes, and LOLs.

Facebook has lately added some new machines to the mix in Prineville. The company has installed new, high-powered servers designed to speed up efforts to train software to do things like translate posts between languages, be a smarter virtual assistant, or follow written narratives.

Facebook’s new Big Sur servers are designed around high-powered processors of a kind originally developed for graphics processing, known as GPUs. These chips underpin recent leaps in artificial intelligence technology that have come from a technique known as deep learning. Software has become strikingly better at understanding images and speech because GPUs supply enough computing power to apply old ideas about how to train software to much larger, more complex data sets (see “Teaching Machines to Understand Us”).

Kevin Lee, an engineer at Facebook who works on the servers, says they help Facebook’s researchers train software using more data, by working faster. “These servers are purpose-built hardware for AI research and machine learning,” he says. “GPUs can take a photo and split it into tiny pieces and work on them all at once.”
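Lee’s description of splitting a photo into pieces can be illustrated with a small sketch. It is only an analogy, not Facebook’s software: it uses CPU threads rather than a GPU, the function names (`split_into_tiles`, `invert_tile`, `process_in_tiles`) are hypothetical, and the per-tile work (inverting brightness) is a stand-in for whatever a real system would compute.

```python
from concurrent.futures import ThreadPoolExecutor

def split_into_tiles(image, tile_size):
    """Cut a 2D grid of pixel values into tiles, remembering each tile's origin."""
    tiles = []
    for top in range(0, len(image), tile_size):
        for left in range(0, len(image[0]), tile_size):
            tile = [row[left:left + tile_size] for row in image[top:top + tile_size]]
            tiles.append((top, left, tile))
    return tiles

def invert_tile(item):
    """Stand-in for per-tile work: invert each 8-bit brightness value."""
    top, left, tile = item
    return top, left, [[255 - v for v in row] for row in tile]

def process_in_tiles(image, tile_size=2, workers=4):
    """Hand every tile to a pool of workers, then stitch the results together."""
    result = [[0] * len(image[0]) for _ in image]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        for top, left, tile in pool.map(invert_tile, split_into_tiles(image, tile_size)):
            for dy, row in enumerate(tile):
                result[top + dy][left:left + len(row)] = row
    return result

# A tiny made-up 6x4 grayscale "photo" (assumed data, for illustration only).
image = [[(x * 16 + y * 4) % 256 for x in range(6)] for y in range(4)]
inverted = process_in_tiles(image)
```

Because no tile depends on any other, the pieces can be handled in any order or all at once, which is the property that lets hardware with thousands of small cores work through an image quickly.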

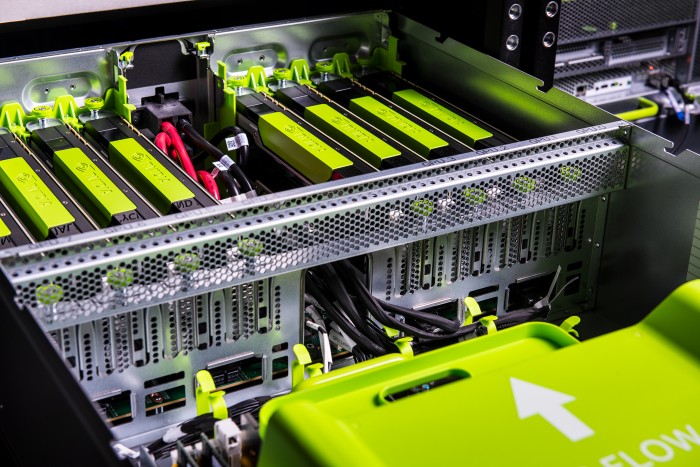

Facebook builds each Big Sur server around eight GPUs made by Nvidia, the leading supplier of such chips. Lee declined to say exactly how many of the servers have been deployed but said the company has “thousands” of GPUs at work. Big Sur servers have been installed in the company’s Prineville and Ashburn, Virginia, data centers.

Because GPUs are extremely power hungry, Facebook has to pack them less densely than it does other types of server in the data center, to avoid creating hot spots that would make things harder for the cooling system and require extra power. Eight Big Sur servers are stacked into a seven-foot-tall rack that might otherwise hold 30 standard Facebook servers that do the more routine work of serving up user data.

Facebook is far from alone in running giant data centers or collecting GPUs for machine learning research. Microsoft, Google, and Chinese search company Baidu have all relied on GPUs to power deep learning research.

The social network is unusual in that it has opened up the designs for Big Sur and its other servers, as well as the plans for its Prineville data center. The company contributes them to a nonprofit called the Open Compute Project, started by Facebook in 2011 to encourage computing companies to work together on designs for low-cost, high-efficiency data center hardware. The project is seen as having helped Asian hardware companies and squeezed traditional vendors such as Dell and HP.

Facebook’s director of AI research, Yann LeCun, said when Big Sur was announced earlier this year that he believed making the designs available could accelerate progress in the field by enabling more organizations to build powerful machine learning infrastructure (see “Facebook Joins Stampede of Tech Giants Giving Away Artificial Intelligence Technology”).

Future machine learning servers based on Facebook’s plans may not be built around GPUs, the chips at their heart today. Multiple companies are working on new chip designs tailored more specifically than GPUs to the math of deep learning.

Google announced in May that it had started using a chip of its own design, called a TPU, to power deep learning software in products such as speech recognition. The current chip appears to be suited to running algorithms after they have been trained, not the initial training step that Big Sur servers are designed to expedite, but Google is working on a second-generation chip. Nvidia and several startups including Nervana Systems are also working on chips customized for deep learning (see “Intel Outside As Other Companies Prosper from AI Chips”).

Eugenio Culurciello, an associate professor at Purdue University, says that the usefulness of deep learning means such chips look sure to be very widely used. “There’s been a big need for a while and it’s only growing,” he says.

Asked whether Facebook was working on its own custom chips, Lee says the company is “looking into it.”
