Light Chips Could Mean More Energy-Efficient Data Centers

A microprocessor that uses optical connections instead of electrical wires to shuttle data around has long been the dream of chip designers, but the attempt to fabricate one has frustrated them for years.

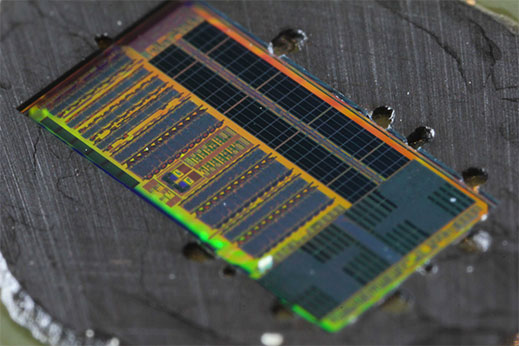

Now a prototype described in the journal Nature offers a promising and practical approach. The electronic-optical microprocessor, developed by a group of researchers at MIT, the University of California, Berkeley, and the University of Colorado, Boulder, integrates over 70 million transistors and 850 optical components. The system uses optical fibers, transmitters, and receivers to send data between a processor chip and a memory chip. In a demo, it runs a graphics program to display and manipulate a 3-D image, a task that requires using the internal optical connections to fetch data from memory and run instructions.

For the same amount of power, optical connections can carry more data, faster, than electrical ones. Data transfers in the prototype occurred at a rate of 300 gigabits per second per square millimeter, which the researchers say is 10 to 50 times the rate for a comparable off-the-shelf electronic microprocessor. That boost in bandwidth could save a lot of energy in data centers, says Chen Sun, a researcher at the University of California, Berkeley. He estimates that 20 to 30 percent of the energy used in data-center servers is spent transferring data between processor, memory, and networking cards. According to an analysis by the Natural Resources Defense Council, data centers in the United States will consume 140 billion kilowatt-hours of electricity a year by 2020, costing $13 billion and emitting 100 million metric tons of carbon.
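For readers who want to check the arithmetic behind these figures, here is a rough back-of-the-envelope sketch in Python. It uses only the numbers quoted above; the function and variable names are illustrative, and the derived values (the implied bandwidth density of the electronic chip, the implied electricity price, and the implied emissions per kilowatt-hour) are simple ratios, not figures reported by the researchers or the NRDC.

```python
# Figures as quoted in the article.
OPTICAL_GBPS_PER_MM2 = 300           # prototype bandwidth density
RATIO_LOW, RATIO_HIGH = 10, 50       # "10 to 50 times" a comparable electronic chip
ANNUAL_ENERGY_KWH = 140e9            # projected U.S. data-center use by 2020
ANNUAL_COST_USD = 13e9               # projected annual cost
ANNUAL_CARBON_TONNES = 100e6         # projected annual emissions, metric tons


def implied_figures():
    """Derive ratios implied by the quoted numbers (illustrative only)."""
    return {
        # If optical is 10x to 50x faster, the electronic baseline is 300/50 to 300/10.
        "electronic_gbps_per_mm2_low": OPTICAL_GBPS_PER_MM2 / RATIO_HIGH,
        "electronic_gbps_per_mm2_high": OPTICAL_GBPS_PER_MM2 / RATIO_LOW,
        # Average electricity price implied by cost divided by energy.
        "usd_per_kwh": ANNUAL_COST_USD / ANNUAL_ENERGY_KWH,
        # Emissions intensity: convert metric tons to kilograms, divide by energy.
        "kg_carbon_per_kwh": ANNUAL_CARBON_TONNES * 1000 / ANNUAL_ENERGY_KWH,
    }


if __name__ == "__main__":
    for name, value in implied_figures().items():
        print(f"{name}: {value:.3f}")
```

Under these assumptions the electronic baseline works out to roughly 6 to 30 gigabits per second per square millimeter, and the NRDC projection implies about 9 cents and 0.7 kilograms of carbon per kilowatt-hour. Sun's 20 to 30 percent estimate applies to server energy specifically, so it is not multiplied against the data-center total here.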

While optical connections are widely used for long-distance telecommunication connections, bringing them into servers and onto chips has been difficult. Optical components have been expensive to make, requiring dedicated processes and materials that are tricky or impossible to integrate into existing semiconductor production lines.

Getting optical components on the same chip as electronics is particularly hard. Researchers have been able to combine only very simple circuits with optical parts, and these systems have remained pricey, says Sun. He and his collaborators hope to keep costs down by manufacturing their devices on existing semiconductor equipment. The prototype chips were made at a working semiconductor manufacturing facility operated by GlobalFoundries in Fishkill, NY. The researchers sent their designs to the fab and got test chips back. This fab is of an older generation; making the integrated chips on the state-of-the-art equipment used to make today’s best circuits will require more work.

Achieving an on-chip optical connection between memory and processor using conventional silicon wafers in an ordinary foundry is “a significant technological accomplishment,” says Shayan Mookherjea, an electrical engineer at the University of California, San Diego, who is also developing optical connections for data centers. However, he points out that making the chips this way requires etching off part of the silicon backing that might otherwise allow light to leak out of the working parts of the chip. That could be tricky to do reliably.

Sun is optimistic. This May, he founded a company to commercialize the designs. Ayar Labs of Berkeley is developing products aimed at data centers and may be ready to bring a product to market in as few as two years, he says.
