Best of 2015: Why Self-Driving Cars Must Be Programmed to Kill

When it comes to automotive technology, self-driving cars are all the rage. Standard features on many ordinary cars include intelligent cruise control, parallel parking programs, and even automatic overtaking—features that allow you to sit back, albeit a little uneasily, and let a computer do the driving.

So it’ll come as no surprise that many car manufacturers are beginning to think about cars that take the driving out of your hands altogether (see “Drivers Push Tesla’s Autopilot Beyond Its Abilities”). These cars will be safer, cleaner, and more fuel-efficient than their manual counterparts. And yet they can never be perfectly safe.

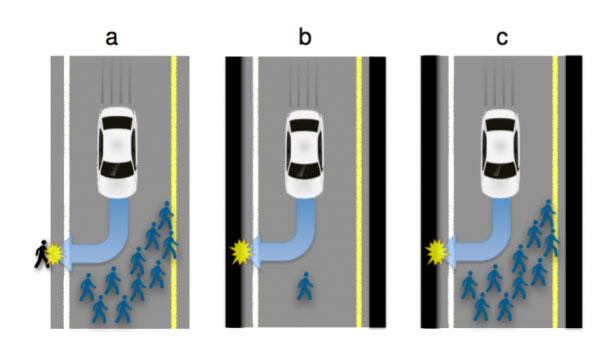

And that raises some difficult issues. How should the car be programmed to act in the event of an unavoidable accident? Should it minimize the loss of life, even if it means sacrificing the occupants, or should it protect the occupants at all costs? Should it choose between these extremes at random? (See also “How to Help Self-Driving Cars Make Ethical Decisions.”)

The answers to these ethical questions matter because they could strongly influence whether society accepts self-driving cars. Who would buy a car programmed to sacrifice the owner?

So can science help?
