The Tricky Challenge of Making Machines That “See”

The following was originally published as “Real-World Machines” in the July/August 1968 issue of Technology Review. Our senior editor for AI, Will Knight, recently caught up with Minsky in a video interview that you can see here.

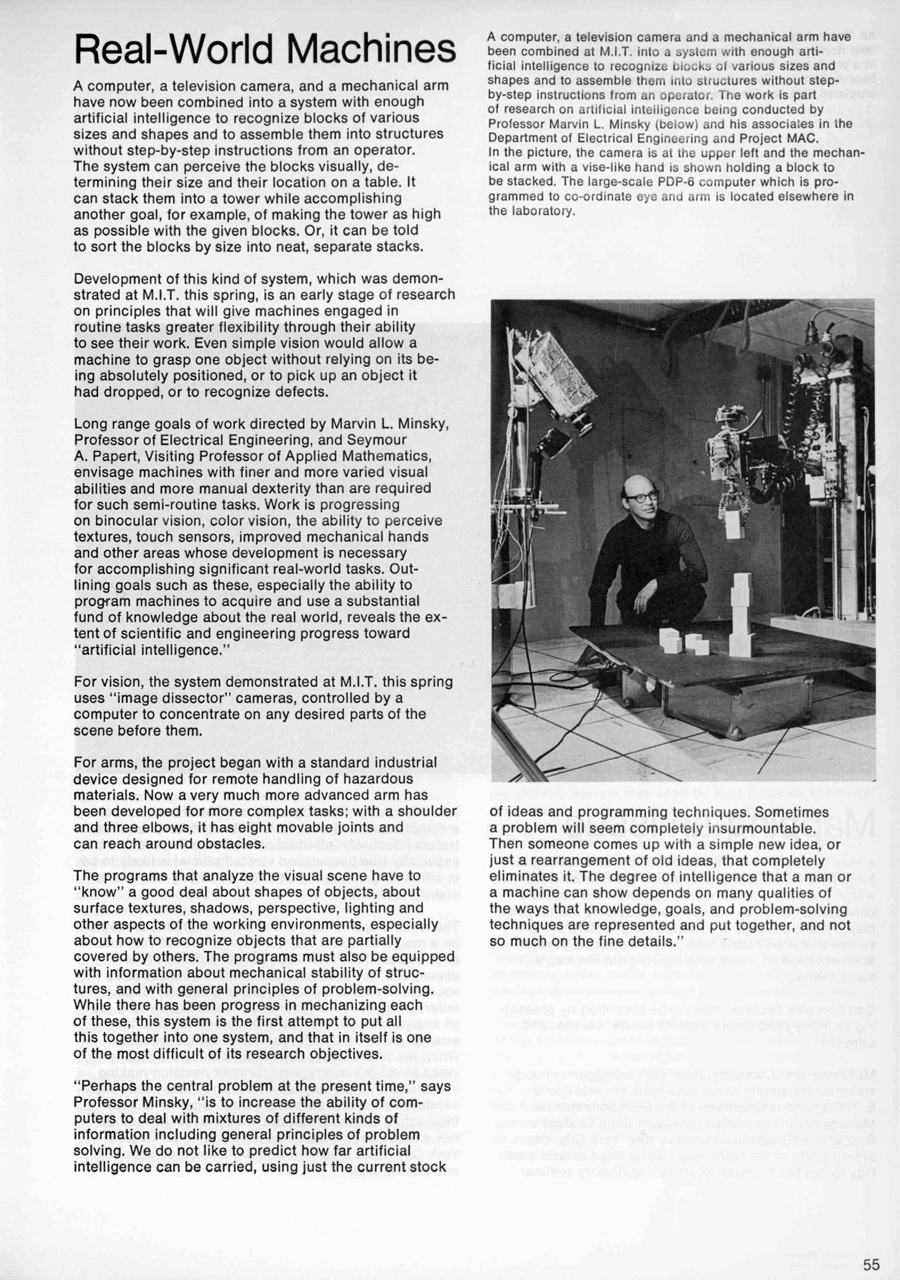

A computer, a television camera, and a mechanical arm have now been combined into a system with enough artificial intelligence to recognize blocks of various sizes and shapes and to assemble them into structures without step-by-step instructions from an operator. The system can perceive the blocks visually, determining their size and their location on a table. It can stack them into a tower while pursuing a further goal, such as making the tower as high as possible with the given blocks. Or it can be told to sort the blocks by size into neat, separate stacks.
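The two tasks described above can be illustrated with a small modern sketch. It is not the 1968 M.I.T. program, whose internals the article does not describe. The `Block` type, the `plan_tower` and `sort_into_stacks` helpers, and the rule of placing the widest block at the bottom are all assumptions made for illustration, and the sketch takes the block sizes as already measured by the vision stage.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Block:
    """Hypothetical record for a block once the camera has measured it."""
    name: str
    width: float   # side of the footprint, arbitrary units
    height: float  # arbitrary units


def plan_tower(blocks):
    """Return the blocks in bottom-to-top order and the tower's total height.

    Assumption: using every block gives the tallest tower, and putting
    wider blocks lower keeps it stable.
    """
    order = sorted(blocks, key=lambda b: b.width, reverse=True)
    return order, sum(b.height for b in order)


def sort_into_stacks(blocks):
    """Group blocks with the same width into separate stacks."""
    stacks = defaultdict(list)
    for block in blocks:
        stacks[block.width].append(block)
    return dict(stacks)


blocks = [Block("a", 2.0, 1.0), Block("b", 4.0, 2.0), Block("c", 2.0, 3.0)]
order, total_height = plan_tower(blocks)
stacks = sort_into_stacks(blocks)
```

The hard part of the real system lies in what this sketch takes for granted: recovering each block's size and position from a camera image and moving an arm to the right place.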

Development of this kind of system, which was demonstrated at M.I.T. this spring, is an early stage of research on principles that will give machines engaged in routine tasks greater flexibility through their ability to see their work. Even simple vision would allow a machine to grasp one object without relying on its being absolutely positioned, or to pick up an object it had dropped, or to recognize defects.

The long-range goals of the work directed by Marvin L. Minsky, Professor of Electrical Engineering, and Seymour A. Papert, Visiting Professor of Applied Mathematics, envisage machines with finer and more varied visual abilities and more manual dexterity than such semi-routine tasks require. Work is progressing on binocular vision, color vision, the ability to perceive textures, touch sensors, improved mechanical hands, and other areas whose development is necessary for accomplishing significant real-world tasks. Outlining goals such as these, especially the ability to program machines to acquire and use a substantial fund of knowledge about the real world, reveals the extent of scientific and engineering progress toward “artificial intelligence.”

For vision, the system demonstrated at M.I.T. this spring uses “image dissector” cameras, controlled by a computer to concentrate on any desired parts of the scene before them.

For arms, the project began with a standard industrial device designed for remote handling of hazardous materials. A much more advanced arm has since been developed for more complex tasks; with a shoulder and three elbows, it has eight movable joints and can reach around obstacles.

The programs that analyze the visual scene have to “know” a good deal about the shapes of objects, about surface textures, shadows, perspective, lighting, and other aspects of the working environment, and especially about how to recognize objects that are partially covered by others. The programs must also be equipped with information about the mechanical stability of structures and with general principles of problem-solving. While there has been progress in mechanizing each of these, this is the first attempt to put them all together in one system, and that in itself is one of the project's most difficult research objectives.

“Perhaps the central problem at the present time,” says Professor Minsky, “is to increase the ability of computers to deal with mixtures of different kinds of information including general principles of problem solving. We do not like to predict how far artificial intelligence can be carried, using just the current stock of ideas and programming techniques. Sometimes a problem will seem completely insurmountable. Then someone comes up with a simple new idea, or just a rearrangement of old ideas, that completely eliminates it. The degree of intelligence that a man or a machine can show depends on many qualities of the ways that knowledge, goals, and problem-solving techniques are represented and put together, and not so much on the fine details.”
