Data Analysis Is Creating New Business Opportunities

Sitting in the left-field upper deck to watch the San Francisco Giants play baseball on May 11 would cost you eight bucks if you’d bought the ticket in late April. If you wanted the same ticket for the May 21 game, though, you’d have to pay $45.50.

The capabilities of software have finally caught up with what scalpers have always known: ticket prices should depend on demand. With the help of a data analytics system from Qcue, a company in Austin, Texas, the Giants have adopted dynamic pricing, which enables ticket prices to change depending on circumstances that affect demand—even up to the last minute. The system quantified just how low prices would need to be in order to fill seats at a Wednesday-night game against the mediocre Arizona Diamondbacks, and how much more people would be likely to pay for a Saturday-afternoon game against the Giants’ cross-bay rivals, the Oakland A’s.

The Giants organization credits dynamic pricing with a 6 percent increase in ticket revenue last year over what the team could otherwise have expected from a winning season that culminated in a World Series victory. In fact, the Giants are now experimenting with other versions of dynamic pricing, like using weather data to figure out the optimal price for beer.

It’s but one example of a new generation of database and data analytics technologies offering new ways for companies to reach and—they hope—please their customers. This is the big idea that we will explore throughout May in Business Impact.

Databases are as old as computers and have been at the heart of most companies’ operations for decades, but the last few years have seen significant advances in both the hardware and the software involved. Pools of data are getting dramatically larger, and methods of analyzing it are getting dramatically faster.

That’s why dynamic pricing is moving beyond the airline industry and its mainframe computers. Yet even as the analysis can now be done on less sophisticated hardware, it is able to work in more complex situations like a ballpark, which can have thousands of seats in numerous pricing tiers. Because of this improved power of many kinds of data analysis, more businesses are likely to try to use it to squeeze improvements out of their operations, from attracting and keeping customers to figuring out which new products to introduce and how to price them.

These new technologies don’t yet have a catchy name, like “the cloud” for centrally located computing. They’re sometimes called “Big Data,” but that term is applicable only to some of the changes under way. Their magnitude was summed up in a March report by a team of Credit Suisse analysts, who said the IT world “stands at the cusp of the most significant revolution in database and application architectures in 20 years.”

Big tech companies such as IBM, Oracle, and Hewlett-Packard have spent billions of dollars to acquire companies that offer business analytics or database technologies. Data-related startups are also on the rise in Silicon Valley; most big VC firms now have a partner specializing in the field. The data being analyzed doesn’t even have to come in the form of transaction records or other numbers. For example, CalmSea, a startup formed in 2009 by several data industry veterans, sells a product that lets retailers glean useful insights from the vast amounts of information about them on social networks. The retailers might figure out that they should offer special deals to loyal customers, or launch finely tuned marketing efforts in response to changes in public sentiment.

Big consulting companies are also ramping up their data-related practices. “So much new data is out there,” says Brian McCarthy, director of strategy for analytics at Accenture. “Companies are trying to figure out how to extract value from all the noise.”

All of this is made possible by two separate strands of technology.

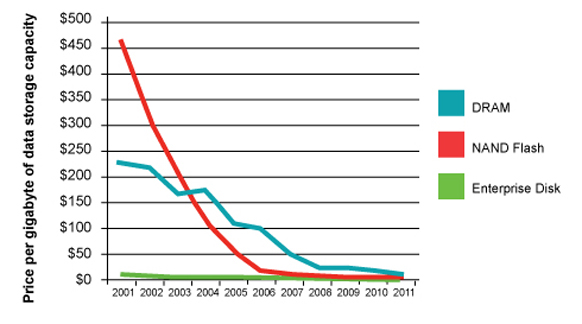

* Traditional database and business analytics tools are being reëngineered so that they no longer need to store data on a traditional disk drive. Instead, they can operate entirely in a computer’s onboard memory—the kind that’s not in a separate drive but hardwired into the heart of the machine. This “in-memory” approach would have been prohibitively expensive a few years ago but is now feasible because of the continually declining prices for flash memory, the same solid-state technology used in mobile phones and music players.

Operations inside a computer’s solid-state memory run faster than those that need to access the moving parts of a disk drive, sometimes by a factor of 100. As a result, database software gets an immediate performance increase.

But there are numerous other, less obvious, advantages as well. Steve Graves, CEO of McObject, which sells an in-memory database, says that traditional database software usually goes to elaborate lengths to minimize disk usage and improve performance. Those lines of code can simply be omitted in an in-memory version of the program, making performance even snappier.

Another advantage of in-memory systems is that they simplify a number of messy, time-consuming tasks that now must occur before data stored for accounting purposes can be used to analyze a company’s operations. This work usually lands in the lap of a company’s already overworked IT crew, creating a bottleneck for departments trying to put corporate data to good use. SAP cofounder Hasso Plattner is so enthusiastic about the quest for new in-memory data tools that he coauthored a book on the topic.

* The other significant new development in data involves traditional disk drives assembled in configurations of previously unthinkable size. Much of this work was first done at Google, in connection with its effort to index the entire Web. But public versions of many of those tools have since been developed, Linux-style, by the open-source community.

The best-known is Hadoop, a large-scale data storage approach being used by a growing number of businesses. The biggest known Hadoop cluster is a 25-petabyte system of 2,000 machines at Facebook. (A petabyte is a thousand terabytes, or 1 followed by 15 0s.) Google is believed to operate even larger clusters, but the company doesn’t discuss the matter.

These two architectural approaches to databases—in-memory and mega-scale—are being enhanced by changes in database software.

Traditional databases were designed to facilitate “transactions,” such as updating a bank account when an ATM withdrawal is made. They tend to be rigidly structured, with well-defined fields; the database for a payroll department would probably include fields for an employee’s name, Social Security number, tax filing status, and the like. The questions you can ask about the data are limited by the fields into which the data was entered in the first place. In contrast, the new approaches have a more forgiving way of handling “unstructured” data, like the contents of a Web page. As a result, users can ask questions that might not have even occurred to them when they were first setting up the database.

These two new data approaches have something else in common: they take advantage of new algorithmic insights. One example is “noSQL,” a new, less-is-more approach to querying a database; in the interest of speed, it dispenses with many of the less-used features of Structured Query Language, long a database standard. Another example is “columnar” databases, which are based on research showing that data software runs more quickly when information is, in effect, stored in columns instead of rows.

Obviously, enormous Web properties like Google or Facebook need new database approaches. But data technology vendors also trumpet success stories that have little or nothing to do with the Web.

For example, an in-memory system called HANA, made by SAP, enables the power-tool company Hilti to get customer information for its sales teams in a few seconds, rather than the several hours required by its traditional data warehousing software. That gives the sales staff virtually instant insights into a customer’s operations and order history.

Circle of Blue, a nonprofit that studies global water issues, is about to use in-memory products from QlikTech to aggregate masses of data for a complex study of the Great Lakes, says executive director J. Carl Ganter.

Financial-service providers are using Hadoop systems from a company called Cloudera in their fraud detection efforts, basically adding all the information they can get their hands on in an attempt to find new methods by which their payments networks might be abused.

A common theme is that the new systems make databases so fast and easy that companies invariably begin to find new uses for them. A big retailer, for example, might get into the habit of keeping a record of every click and mouse movement from every visitor to its site, with the goal of finding fresh insights about customer choices.

And as companies see the cost of storing data plummet, they are adjusting their notions of how much data they need to keep. Petabytes of storage aren’t just for Google anymore.

Lee Gomes, a writer in San Francisco, has covered Silicon Valley for 20 years, much of that time as a reporter for the Wall Street Journal.

Keep Reading

Most Popular

Large language models can do jaw-dropping things. But nobody knows exactly why.

And that's a problem. Figuring it out is one of the biggest scientific puzzles of our time and a crucial step towards controlling more powerful future models.

How scientists traced a mysterious covid case back to six toilets

When wastewater surveillance turns into a hunt for a single infected individual, the ethics get tricky.

The problem with plug-in hybrids? Their drivers.

Plug-in hybrids are often sold as a transition to EVs, but new data from Europe shows we’re still underestimating the emissions they produce.

Stay connected

Get the latest updates from

MIT Technology Review

Discover special offers, top stories, upcoming events, and more.