A Prosthesis for Speech

For more than eight years, Erik Ramsey has been trapped in his own body. At 16, Ramsey suffered a brain-stem injury in a car crash that left him with a condition known as "locked-in" syndrome. Unlike people with many other forms of paralysis, locked-in patients can still feel sensation, but they cannot move on their own, and they cannot control the complex vocal muscles required for speech. In Ramsey's case, his eyes are his only means of communication: skyward for yes, downward for no.

Now researchers at Boston University are developing brain-reading computer software that in essence translates thoughts into speech. Combined with a speech synthesizer, this brain-machine interface has enabled Ramsey to vocalize vowels in real time, a huge step toward recovering full speech for Ramsey and other patients with paralyzing speech disorders. The researchers are presenting their work at the annual Acoustical Society of America meeting in Paris this week.

“The question is, can we get enough information out that produces intelligible speech?” asks Philip Kennedy of Neural Signals, a brain-computer interface developer based in Atlanta. “I think there’s a fair shot at this at this point.”

Kennedy and Frank Guenther, an associate professor in Boston University's Department of Cognitive and Neural Systems, have been decoding activity within Ramsey's brain for the past three years via a permanent electrode implanted beneath the surface of his brain, in a region that controls movement of the mouth, lips, and jaw. During a typical session, the team asks Ramsey to mentally "say" a particular sound, such as "ooh" or "ah." As he repeats the sound in his head, the electrode picks up local neural signals, which are sent wirelessly to a computer. The software then analyzes those signals for recurring patterns that most likely correspond to that particular sound.
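The pattern-matching idea can be illustrated with a toy classifier. This is a minimal sketch, not the team's decoder: it assumes each trial is summarized as a vector of firing rates, one per recorded signal, and labels a new trial with the sound whose average training pattern is nearest. The function names, the channel count, and the synthetic data are all hypothetical.

```python
import math
import random

# Hypothetical setup: each trial is a list of firing rates (spikes/s),
# one entry per recorded neural signal. The article mentions about 56
# signals; the number here is only for illustration.
N_CHANNELS = 56


def centroid(trials):
    """Average a list of firing-rate vectors channel by channel."""
    n = len(trials)
    return [sum(channel) / n for channel in zip(*trials)]


def train(labelled_trials):
    """Map each sound label to the mean firing pattern recorded for it."""
    by_label = {}
    for label, rates in labelled_trials:
        by_label.setdefault(label, []).append(rates)
    return {label: centroid(trials) for label, trials in by_label.items()}


def classify(model, rates):
    """Return the label whose mean pattern is nearest in Euclidean distance."""
    return min(model, key=lambda label: math.dist(model[label], rates))


def synthetic_trial(base, noise, rng):
    """Hypothetical data generator: a base pattern plus Gaussian noise."""
    return [max(0.0, r + rng.gauss(0.0, noise)) for r in base]


if __name__ == "__main__":
    rng = random.Random(0)
    # Two made-up prototype patterns standing in for imagined vowels.
    prototypes = {
        "ooh": [rng.uniform(5, 40) for _ in range(N_CHANNELS)],
        "ah": [rng.uniform(5, 40) for _ in range(N_CHANNELS)],
    }
    training = [
        (label, synthetic_trial(base, 3.0, rng))
        for label, base in prototypes.items()
        for _ in range(20)
    ]
    model = train(training)
    print(classify(model, synthetic_trial(prototypes["ah"], 3.0, rng)))
```

A nearest-centroid rule is used here only because it is the simplest way to show "common patterns" being matched; a real system would need a decoder that copes with noisy, drifting signals.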

The software is designed to translate neural activity into what are known as formant frequencies, the resonant frequencies of the vocal tract. For example, when the mouth is open wide and the tongue lies low in the mouth, air flowing through the vocal tract resonates at a characteristic set of frequencies determined by the tract's shape. Repositioning the muscles changes the shape, and with it those frequencies. Guenther trained the computer to recognize patterns of neural signals linked to specific movements of the mouth, jaw, and lips. He then translated these signals into the corresponding sound frequencies and programmed a sound synthesizer to play those frequencies back through a speaker.
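The last step, turning formant frequencies into sound, can be sketched with a classic source-filter synthesizer: a pulse train standing in for the vocal folds is passed through one resonant filter per formant. This is an illustrative sketch, not the synthesizer the team used; the sample rate, pitch, and bandwidths are assumptions, and the resonator follows the standard second-order digital resonator found in Klatt-style formant synthesis.

```python
import math

SAMPLE_RATE = 16_000  # samples per second (assumed)


def resonator(signal, freq, bandwidth, fs=SAMPLE_RATE):
    """Second-order digital resonator centred on `freq` Hz (Klatt-style)."""
    t = 1.0 / fs
    c = -math.exp(-2.0 * math.pi * bandwidth * t)
    b = 2.0 * math.exp(-math.pi * bandwidth * t) * math.cos(2.0 * math.pi * freq * t)
    a = 1.0 - b - c  # normalises the gain at 0 Hz to 1
    y1 = y2 = 0.0
    out = []
    for x in signal:
        y = a * x + b * y1 + c * y2
        out.append(y)
        y2, y1 = y1, y
    return out


def synthesize_vowel(f1, f2, duration=0.3, f0=120.0, fs=SAMPLE_RATE):
    """Filter a pulse train (a crude stand-in for the vocal folds) through
    two formant resonators. The bandwidths are illustrative guesses."""
    n = int(duration * fs)
    period = int(fs / f0)
    source = [1.0 if i % period == 0 else 0.0 for i in range(n)]
    return resonator(resonator(source, f1, 80.0, fs), f2, 120.0, fs)


if __name__ == "__main__":
    # Rough textbook formant values for an adult male voice (approximate).
    ah = synthesize_vowel(f1=730.0, f2=1090.0)
    ooh = synthesize_vowel(f1=300.0, f2=870.0)
    print(len(ah), len(ooh))
```

Only the first two formants are used, since they largely distinguish one vowel from another; the resulting samples could be written to a file with the standard-library `wave` module.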

So far, Guenther and Kennedy have programmed the synthesizer to play back sounds within 50 milliseconds, almost instantaneously, of when Ramsey first "voiced" them in his head. This audio playback has allowed Ramsey to practice mentally voicing vowels: he hears his initial "utterance," then adjusts his mental sound representation to improve the next playback. Jonathan Brumberg, a PhD student in Guenther's lab, says that while each trial has been slow going, since it takes great effort on Ramsey's part, the results have been promising. "At this point, he can do these vowel sounds pretty well," says Brumberg. "We're now fairly confident the same can be accomplished with consonants."

However, because there are four times as many consonants as vowels, it may take years for the team to decode all the sounds, let alone string them together to recognize and produce fluent speech. Brumberg says that the team may need to implant more electrodes, in areas solely devoted to the tongue, lips, or mouth, to get an accurate picture of more-complex sounds such as consonants.

“The electrode is only capturing about 56 distinct neural signals,” says Brumberg. “But you have to think: there are billions of cells in the brain with trillions of connections, and we are only sampling a very small portion of what is there.”

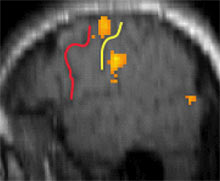

The team has no immediate plans to implant Ramsey with additional electrodes. However, Guenther is also exploring noninvasive methods of studying speech production in normal volunteers. He and Brumberg are scanning the brains of normal speakers using functional magnetic resonance imaging (fMRI). As volunteers perform various tasks, such as naming pictures and mentally repeating various sounds and words, active brain areas light up in response.

Guenther and Brumberg plan to analyze these scans for common patterns, zeroing in on specific regions related to certain sounds, with the goal of one day implanting additional electrodes in these regions. The researchers say that decoding signals within these areas may help translate speech for people with disorders such as locked-in syndrome and other forms of paralysis.

“For patients with certain kinds of speech-related disorders originating in the peripheral nervous system, this approach is highly promising,” says Vincent Gracco, director of the Center for Research on Language, Mind and Brain at McGill University. “There is the potential to provide a useful means of communicating for patients with no functioning speech, in ways that have not been explored.”


Get the latest updates from MIT Technology Review.