Higher Games

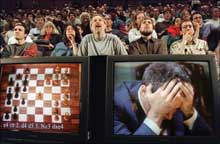

In the popular imagination, chess isn’t like a spelling bee or Trivial Pursuit, a competition to see who can hold the most facts in memory and consult them quickly. In chess, as in the arts and sciences, there is plenty of room for beauty, subtlety, and deep originality. Chess requires brilliant thinking, supposedly the one feat that would be–forever–beyond the reach of any computer. But for a decade, human beings have had to live with the fact that one of our species’ most celebrated intellectual summits–the title of world chess champion–has to be shared with a machine, Deep Blue, which beat Garry Kasparov in a highly publicized match in 1997. How could this be? What lessons could be gleaned from this shocking upset? Did we learn that machines could actually think as well as the smartest of us, or had chess been exposed as not such a deep game after all?

The following years saw two other human-machine chess matches that stand out: a hard-fought draw between Vladimir Kramnik and Deep Fritz in Bahrain in 2002 and a draw between Kasparov and Deep Junior in New York in 2003, in a series of games that the New York City Sports Commission called “the first World Chess Championship sanctioned by both the Fédération Internationale des Échecs (FIDE), the international governing body of chess, and the International Computer Game Association (ICGA).”

The verdict that computers are the equal of human beings in chess could hardly be more official, which makes the caviling all the more pathetic. The excuses sometimes take this form: “Yes, but machines don’t play chess the way human beings play chess!” Or sometimes this: “What the machines do isn’t really playing chess at all.” Well, then, what would be really playing chess?

This is not a trivial question. The best computer chess is well nigh indistinguishable from the best human chess, except for one thing: computers don’t know when to accept a draw. Computers–at least currently existing computers–can’t be bored or embarrassed, or anxious about losing the respect of the other players, and these are aspects of life that human competitors always have to contend with, and sometimes even exploit, in their games. Offering or accepting a draw, or resigning, is the one decision that opens the hermetically sealed world of chess to the real world, in which life is short and there are things more important than chess to think about. This boundary crossing can be simulated with an arbitrary rule, or by allowing the computer’s handlers to step in. Human players often try to intimidate or embarrass their human opponents, but this is like the covert pushing and shoving that goes on in soccer matches. The imperviousness of computers to this sort of gamesmanship means that if you beat them at all, you have to beat them fair and square–and isn’t that just what Kasparov and Kramnik were unable to do?

Yes, but so what? Silicon machines can now play chess better than any protein machines can. Big deal. This calm and reasonable reaction, however, is hard for most people to sustain. They don’t like the idea that their brains are protein machines. When Deep Blue beat Kasparov in 1997, many commentators were tempted to insist that its brute-force search methods were entirely unlike the exploratory processes that Kasparov used when he conjured up his chess moves. But that is simply not so. Kasparov’s brain is made of organic materials and has an architecture notably unlike that of Deep Blue, but it is still, so far as we know, a massively parallel search engine that has an outstanding array of heuristic pruning techniques that keep it from wasting time on unlikely branches.

True, there’s no doubt that investment in research and development has a different profile in the two cases; Kasparov has methods of extracting good design principles from past games, so that he can recognize, and decide to ignore, huge portions of the branching tree of possible game continuations that Deep Blue had to canvass seriatim. Kasparov’s reliance on this “insight” meant that the shape of his search trees–all the nodes explicitly evaluated–no doubt differed dramatically from the shape of Deep Blue’s, but this did not constitute an entirely different means of choosing a move. Whenever Deep Blue’s exhaustive searches closed off a type of avenue that it had some means of recognizing, it could reuse that research whenever appropriate, just like Kasparov. Much of this analytical work had been done for Deep Blue by its designers, but Kasparov had likewise benefited from hundreds of thousands of person-years of chess exploration transmitted to him by players, coaches, and books.

It is interesting in this regard to contemplate the suggestion made by Bobby Fischer, who has proposed to restore the game of chess to its intended rational purity by requiring that the pieces in the back row be randomly placed at the start of each game (randomly, but in mirror image for black and white, with a white-square bishop and a black-square bishop, and the king between the rooks). Fischer Random Chess would render the mountain of memorized openings almost entirely obsolete, for humans and machines alike, since they would come into play much less than 1 percent of the time. The chess player would be thrown back onto fundamental principles; one would have to do more of the hard design work in real time. It is far from clear whether this change in rules would benefit human beings or computers more. It depends on which type of chess player is relying most heavily on what is, in effect, rote memory.
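The "much less than 1 percent" figure can be checked by counting the legal starting arrangements. What follows is a minimal sketch in Python, assuming only the constraints stated above (bishops on opposite-colored squares, king between the rooks); the function names `is_valid_back_row`, `all_back_rows`, and `random_start` are hypothetical and belong to no chess library.

```python
import random
from itertools import permutations


def is_valid_back_row(row):
    """Check one back-row arrangement against the two constraints in the text."""
    bishops = [i for i, piece in enumerate(row) if piece == "B"]
    rooks = [i for i, piece in enumerate(row) if piece == "R"]
    king = row.index("K")
    # Squares along a row alternate in color, so two bishops stand on
    # opposite colors exactly when their positions differ in parity.
    opposite_bishops = (bishops[0] % 2) != (bishops[1] % 2)
    king_between_rooks = rooks[0] < king < rooks[1]
    return opposite_bishops and king_between_rooks


def all_back_rows():
    """Enumerate every distinct legal arrangement of the eight back-row pieces."""
    pieces = "RNBQKBNR"  # the conventional arrangement, used here as the piece set
    return sorted({"".join(p) for p in permutations(pieces) if is_valid_back_row(p)})


def random_start(rng=random):
    """Pick one arrangement; black would mirror it, file by file."""
    return rng.choice(all_back_rows())


rows = all_back_rows()
print(len(rows))            # 960 distinct starting arrangements
print("RNBQKBNR" in rows)   # True: the conventional setup is one of them
print(1 / len(rows))        # about 0.001, that is, roughly 0.1 percent
```

Under these assumptions the conventional setup is one arrangement in 960, so opening preparation tied to it would apply in about a tenth of a percent of games.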

The fact is that the search space for chess is too big for even Deep Blue to explore exhaustively in real time, so like Kasparov, it prunes its search trees by taking calculated risks, and like Kasparov, it often gets these risks precalculated. Both the man and the computer presumably do massive amounts of “brute force” computation on their very different architectures. After all, what do neurons know about chess? Any work they do must use brute force of one sort or another.
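To make "pruning a search tree" concrete, here is a toy sketch in Python that compares an exhaustive minimax search with alpha-beta pruning on a tiny hand-made game tree. It is an illustration only, not a description of Deep Blue's actual search, and the tree and its leaf scores are invented for the example. Note one difference from the passage above: alpha-beta pruning is risk-free, since it skips only branches that cannot change the result, whereas the "calculated risks" in the text are heuristic cuts that might occasionally discard a good move.

```python
def minimax(node, maximizing, counter):
    """Exhaustive search: evaluate every leaf of the tree."""
    if isinstance(node, int):      # a leaf holds a position score
        counter[0] += 1
        return node
    values = [minimax(child, not maximizing, counter) for child in node]
    return max(values) if maximizing else min(values)


def alphabeta(node, maximizing, counter, alpha=float("-inf"), beta=float("inf")):
    """Same result as minimax, but skips branches that cannot matter."""
    if isinstance(node, int):
        counter[0] += 1
        return node
    if maximizing:
        best = float("-inf")
        for child in node:
            best = max(best, alphabeta(child, False, counter, alpha, beta))
            alpha = max(alpha, best)
            if alpha >= beta:      # the opponent would never allow this line
                break
        return best
    best = float("inf")
    for child in node:
        best = min(best, alphabeta(child, True, counter, alpha, beta))
        beta = min(beta, best)
        if alpha >= beta:          # we already have a better option elsewhere
            break
    return best


# Hypothetical two-ply tree: three candidate moves, three replies to each.
tree = [[3, 5, 6], [1, 9, 9], [2, 8, 7]]

full, pruned = [0], [0]
print(minimax(tree, True, full), full[0])        # 3 9
print(alphabeta(tree, True, pruned), pruned[0])  # 3 5
```

Both searches pick the same move, but the pruned search scores five leaves rather than nine. In a real game tree, where the number of positions grows exponentially with depth, savings of this kind compound at every level.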

It may seem that I am begging the question by describing the work done by Kasparov’s brain in this way, but the work has to be done somehow, and no way of getting it done other than this computational approach has ever been articulated. It won’t do to say that Kasparov uses “insight” or “intuition,” since that just means that Kasparov himself has no understanding of how the good results come to him. So since nobody knows how Kasparov’s brain does it–least of all Kasparov himself–there is not yet any evidence at all that Kasparov’s means are so very unlike the means exploited by Deep Blue.

People should remember this when they are tempted to insist that “of course” Kasparov plays chess in a way entirely different from how a computer plays the game. What on earth could provoke someone to go out on a limb like that? Wishful thinking? Fear?

In an editorial written at the time of the Deep Blue match, “Mind over Matter” (May 10, 1997), the New York Times opined:

> The real significance of this over-hyped chess match is that it is forcing us to ponder just what, if anything, is uniquely human. We prefer to believe that something sets us apart from the machines we devise. Perhaps it is found in such concepts as creativity, intuition, consciousness, esthetic or moral judgment, courage or even the ability to be intimidated by Deep Blue.

The ability to be intimidated? Is that really one of our prized qualities? Yes, according to the Times:

> Nobody knows enough about such characteristics to know if they are truly beyond machines in the very long run, but it is nice to think that they are.

Why is it nice to think this? Why isn’t it just as nice–or nicer–to think that we human beings might succeed in designing and building brainchildren that are even more wonderful than our biologically begotten children? The match between Kasparov and Deep Blue didn’t settle any great metaphysical issue, but it certainly exposed the weakness in some widespread opinions. Many people still cling, white-knuckled, to a brittle vision of our minds as mysterious immaterial souls, or–just as romantic–as the products of brains composed of wonder tissue engaged in irreducible noncomputational (perhaps alchemical?) processes. They often seem to think that if our brains were in fact just protein machines, we couldn’t be responsible, lovable, valuable persons.

Finding that conclusion attractive doesn’t show a deep understanding of responsibility, love, and value; it shows a shallow appreciation of the powers of machines with trillions of moving parts.

Daniel Dennett is the codirector of the Center for Cognitive Studies at Tufts University, where he is also a professor of philosophy.
