Kim Hazelwood

Reëngineering software on the fly.

Imagine having a team of mechanics pull apart and retune your car’s engine as you hurtle down the highway, without making the engine miss a stroke. That’s the nature of the challenge that motivates Kim Hazelwood, an assistant professor of computer science, who has created tools to rewrite software as a computer is executing it. Before she started working on the problem in grad school, “I would have said there’s no way you can just take programs and change them and have every program work,” says Hazelwood. But industry giants like Intel and researchers around the world have used her subsequent achievements to do just that.

Hazelwood’s approach contradicts one of the most important notions in computer programming–abstraction. Abstraction means that software is built in layers: an application runs on top of an operating system, which runs on top of the hardware. Each layer does its best to conceal its inner workings. That way, someone writing, say, a Web browser doesn’t have to know all the engineering that went into a processor. At times, however, it would be useful for the application and hardware layers to communicate more directly. For example, some modern processors reduce electricity consumption by turning off portions of the chip until they are needed, but an application that causes this to happen excessively can shorten the life of the chip. Hazelwood’s software can monitor the processor and detect when subsystems are being turned off and on too often. It then analyzes the software instructions that are triggering the problem and substitutes more hardware-friendly commands that do the same job.
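To make the monitor-and-substitute idea concrete, here is a toy sketch in Python. It is not Hazelwood's software, which works on real machine instructions. The opcodes (`FMUL2`, `SHL1`, `INC`), the rule that the floating-point unit powers down whenever the previous instruction didn't use it, and the threshold of three power-ups are all hypothetical. The monitor charges each power-up to the instruction that caused it and, once an instruction crosses the threshold, rewrites its own copy of the program to use an equivalent integer instruction.

```python
from collections import Counter

# Hypothetical toy instruction set: FMUL2 doubles the accumulator on the
# (pretend) floating-point unit, SHL1 doubles it with an integer shift,
# and INC adds one. FMUL2 and SHL1 agree for small integer inputs.
FPU_OPS = {"FMUL2"}
SUBSTITUTES = {"FMUL2": "SHL1"}


def execute(op: str, acc: int) -> int:
    """Apply one toy instruction to the accumulator."""
    if op == "FMUL2":
        return int(float(acc) * 2.0)
    if op == "SHL1":
        return acc << 1
    if op == "INC":
        return acc + 1
    raise ValueError(f"unknown opcode {op!r}")


def run(program: list, iterations: int, threshold: int = 3):
    """Run `program` repeatedly while monitoring FPU power-ups.

    The FPU is modelled as powering down whenever the previous instruction
    did not use it. Each power-up is charged to the address that caused it;
    once an address reaches `threshold`, the monitor rewrites that slot of
    its working copy of the program with the substitute opcode.
    """
    code = list(program)      # working copy the monitor is allowed to rewrite
    powerups = Counter()      # address -> FPU power-ups it triggered
    fpu_on = False
    acc = 1
    for _ in range(iterations):
        for pc in range(len(code)):
            op = code[pc]
            uses_fpu = op in FPU_OPS
            if uses_fpu and not fpu_on:
                powerups[pc] += 1
                if powerups[pc] >= threshold and op in SUBSTITUTES:
                    code[pc] = SUBSTITUTES[op]  # takes effect on the next pass
            fpu_on = uses_fpu
            acc = execute(op, acc)
    return acc, sum(powerups.values()), code


fast = run(["INC", "FMUL2", "INC"], iterations=10, threshold=3)
slow = run(["INC", "FMUL2", "INC"], iterations=10, threshold=10**9)  # never rewrites
```

Both runs end with the same accumulator value, but the rewritten run wakes the floating-point unit only three times instead of ten, which is the kind of trade the paragraph above describes.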

The ability to monitor and modify applications while they’re running could be widely useful. For example, it could make it easier to compensate for hardware bugs, divide tasks among multiple processors, run software on different processor architectures, and even defend against malicious software.

Aaron Dollar

Creating flexible robotic hands.

Aaron Dollar, an assistant professor of mechanical engineering at Yale, has invented a robot with a soft touch. His plastic hand is deft enough to grasp a wide variety of objects without damaging them. What’s more, it’s cheaper and requires less processing power than the metal hands typically used in robots.

Dollar’s design uses plastic fingers that can lightly brush against an object–whether it’s a wine glass, beach ball, or telephone–before firming up their grip. Few researchers have used soft plastic in robotics before, partly because it can be difficult to shape small, precise parts out of such materials. To get around this problem, Dollar mills wax molds for each finger. He places sensors and cables in the molds and then pours in layers of three types of plastic with varying degrees of softness–for fingers, joints, and finger pads. Once the plastics harden and are removed from the molds, the fingers are ready to be hooked up to a base. Dollar’s design has already been licensed to one robotics manufacturer, and because it replicates the flexibility and gentleness of a human hand, he is investigating whether it could work as a prosthetic.

Rikin Gandhi

Educating farmers through locally produced video.

About 600 million people in India depend directly on agriculture for their livelihood. One of the ways the country’s ministry of agriculture tries to help them is by broadcasting videos about farming techniques. In one, for example, officials describe how to plant a fern called azolla in otherwise unusable wet spots; it can be used to make extra cattle feed that enables cows to give more milk. But partly because of cultural and ethnic differences between the ministry workers and the villagers, the government advice is widely ignored.

Rikin Gandhi, founder of the nonprofit Digital Green, has developed a pilot project that offers a solution: simple videos starring local farmers themselves. Gandhi demonstrated that, dollar for dollar, the system persuaded seven times as many farmers to adopt new ideas as did an existing program of training and visits.

Gandhi–who helped launch the program as a 2006 project at Microsoft Research India–spent six months testing various video schemes in villages in the state of Karnataka before concluding that featuring local farmers was the key. Villagers produce the videos using handheld camcorders; workers from partner nongovernmental organizations then check the quality of the videos and the accuracy of the advice before screening them in the villages with handheld projectors. So far 500 videos have been made, and three times that number are planned, which should extend the program to four times as many villages.

Indrani Medhi

Building interfaces for the illiterate.

Information is at the fingertips of anyone with access to a laptop or smart phone. But what if the user is one of the 774 million adults worldwide who cannot read? This is the problem that obsesses Indrani Medhi. Based at Microsoft Research India’s Bangalore lab, she has conducted field research in India, South Africa, and the Philippines to design text-free interfaces that could help illiterate and semiliterate people find jobs, get medical information, and use cell-phone-based banking services.

Meaningful computer icons are rarely the same from one culture to another, Medhi says, so she used symbols, audio cues, and cartoons that are specific to particular poor communities. But then she encountered another hurdle. Even when users became familiar with the hardware and the interfaces, Medhi realized, they still did not fully understand how information relevant to their lives could possibly be contained in or delivered by a computer.

The key to overcoming this problem, she discovered, is to offer a five-minute video dramatization when an application is launched, illustrating exactly how it is supposed to work. For example, the one that accompanies her job-search interface features an upper-middle-class couple that needs a domestic helper. The husband posts the requirements to a job website that is subsequently accessed by unemployed and illiterate women at a community center. The video ends with a woman being hired.

Andrey Rybalchenko

Stopping software from getting stuck in loops.

Computer scientist Andrey Rybalchenko has developed a new method for finding software bugs. Traditional automated testing systems detect when programs do “bad things” that lead to crashes, forcing the program to quit. By focusing on crashes, however, such testing often misses a significant class of bugs–those that allow the software to keep running but leave it unable to accept new input or do anything useful. In essence, Rybalchenko instead tries to identify when a program is doing “good things,” such as making progress through loops or responding to other programs.
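One standard way to argue that a loop keeps making progress is a ranking function: a quantity that stays non-negative while the loop runs and strictly decreases on every iteration, so the loop cannot go on forever. The sketch below merely spot-checks a candidate ranking function on a finite sample of states. Real termination provers reason symbolically about all states, and nothing here reproduces Rybalchenko's or Terminator's actual algorithms; the loop (`while x > 0: x = x - y`) and the helper names are hypothetical.

```python
from itertools import product
from typing import Callable, Iterable, Optional, Tuple

State = Tuple[int, int]  # (x, y) for the hypothetical loop below


def find_ranking_violation(
    guard: Callable[[State], bool],
    step: Callable[[State], State],
    rank: Callable[[State], int],
    states: Iterable[State],
) -> Optional[State]:
    """Return a sampled state that breaks the ranking conditions, or None.

    For every state where the loop guard holds, `rank` must be
    non-negative and must strictly decrease after one pass through the
    loop body. Passing on a sample is evidence, not a proof.
    """
    for s in states:
        if not guard(s):
            continue  # the loop has already exited from this state
        if rank(s) < 0 or rank(step(s)) >= rank(s):
            return s
    return None


# Hypothetical loop:  while x > 0: x = x - y
def guard(s: State) -> bool:
    return s[0] > 0


def step(s: State) -> State:
    x, y = s
    return (x - y, y)


def rank(s: State) -> int:
    return s[0]  # candidate ranking function: the value of x


# With y >= 1, every iteration lowers x, so no violation is found.
ok = find_ranking_violation(guard, step, rank, product(range(-3, 10), range(1, 4)))
# Allowing y == 0 reveals states where x never decreases: the loop can hang.
bad = find_ranking_violation(guard, step, rank, product(range(-3, 10), range(0, 4)))
```

The `y = 0` case is exactly the kind of bug the paragraph above describes: the program never crashes, but it never finishes either.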

In a collaboration with Microsoft that began in 2006, Rybalchenko incorporated his methods into Terminator, a commercial program used to find bugs in the device drivers that mediate between an operating system and various pieces of hardware. Countless device drivers have been created by third-party developers, and they are often responsible for software failures that users blame on the OS. So detecting these bugs improves both actual and perceived OS reliability.

Rybalchenko is currently seeking ways to detect similar bugs that can appear when many processors work simultaneously on the same task but fail to coördinate properly and begin competing instead. Now that processor clock speeds have plateaued at a little over three gigahertz, this kind of problem will become increasingly significant as manufacturers turn to multicore systems to keep improving performance.

T. Scott Saponas

Detecting complex gestures with an armband interface.

Fingers flicking through the air, T. Scott Saponas is rocking a solo in the video game Guitar Hero–without a guitar. A soft band around his forearm monitors the muscles moving his fingers and hand. The band hides a ring of six electrodes that pick up the weak electrical signals produced by active muscle tissue. The signals are relayed to a computer, which in turn controls the game.

Most previous work on muscle interfaces has focused on controlling broad movements of prosthetic limbs by detecting the activity of individual muscles. To recognize more detailed gestures, Saponas developed software capable of processing the jumble of signals from the mass of muscles in the arm. The system has potential for more than just video games. A jogger using Saponas’s armband could tense his or her hand muscles to switch tracks on an MP3 player without breaking stride, or a mechanic whose hands were busy inside an engine could use it to control a heads-up display.
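The article doesn't say how Saponas's software turns six electrode signals into gestures. As an illustration only, the sketch below uses a deliberately simple pipeline: root-mean-square (RMS) amplitude per electrode, a widely used muscle-signal feature, fed to a nearest-centroid classifier. The gesture names, activation levels, and synthetic "signals" are all invented for the example.

```python
import math
import random

N_CHANNELS = 6  # the armband described above has six electrodes


def rms_features(window):
    """Root-mean-square amplitude of each channel over one window.

    `window` is a list of samples; each sample holds N_CHANNELS voltages.
    """
    return [
        math.sqrt(sum(sample[c] ** 2 for sample in window) / len(window))
        for c in range(N_CHANNELS)
    ]


def train_centroids(examples):
    """Average the feature vectors of each gesture's training windows."""
    centroids = {}
    for gesture, windows in examples.items():
        feats = [rms_features(w) for w in windows]
        centroids[gesture] = [
            sum(f[c] for f in feats) / len(feats) for c in range(N_CHANNELS)
        ]
    return centroids


def classify(window, centroids):
    """Label a new window with the gesture whose centroid is nearest."""
    f = rms_features(window)
    return min(centroids, key=lambda g: math.dist(f, centroids[g]))


# Made-up activation levels per electrode for three hypothetical gestures.
PROFILES = {
    "index_tap": [1.0, 0.2, 0.2, 0.2, 0.2, 0.2],
    "middle_tap": [0.2, 0.2, 1.0, 0.2, 0.2, 0.2],
    "fist": [0.8, 0.8, 0.8, 0.8, 0.8, 0.8],
}


def synthetic_window(levels, rng, length=50):
    """Fake muscle-signal window: zero-mean Gaussian noise scaled per channel."""
    return [[rng.gauss(0.0, a) for a in levels] for _ in range(length)]


rng = random.Random(0)
training = {g: [synthetic_window(p, rng) for _ in range(10)] for g, p in PROFILES.items()}
centroids = train_centroids(training)
print(classify(synthetic_window(PROFILES["fist"], rng), centroids))
```

A production system would need richer features and a stronger classifier to separate finger movements from the "jumble" of overlapping signals; the point here is only the shape of the pipeline: window, features, then label.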

Saponas created the software as a graduate student at the University of Washington. Now working at Microsoft Research, he is interested in combining the muscle interface with other sensors, including accelerometers and gyroscopes, to provide additional precision.

Jian Sun

Better image searches.

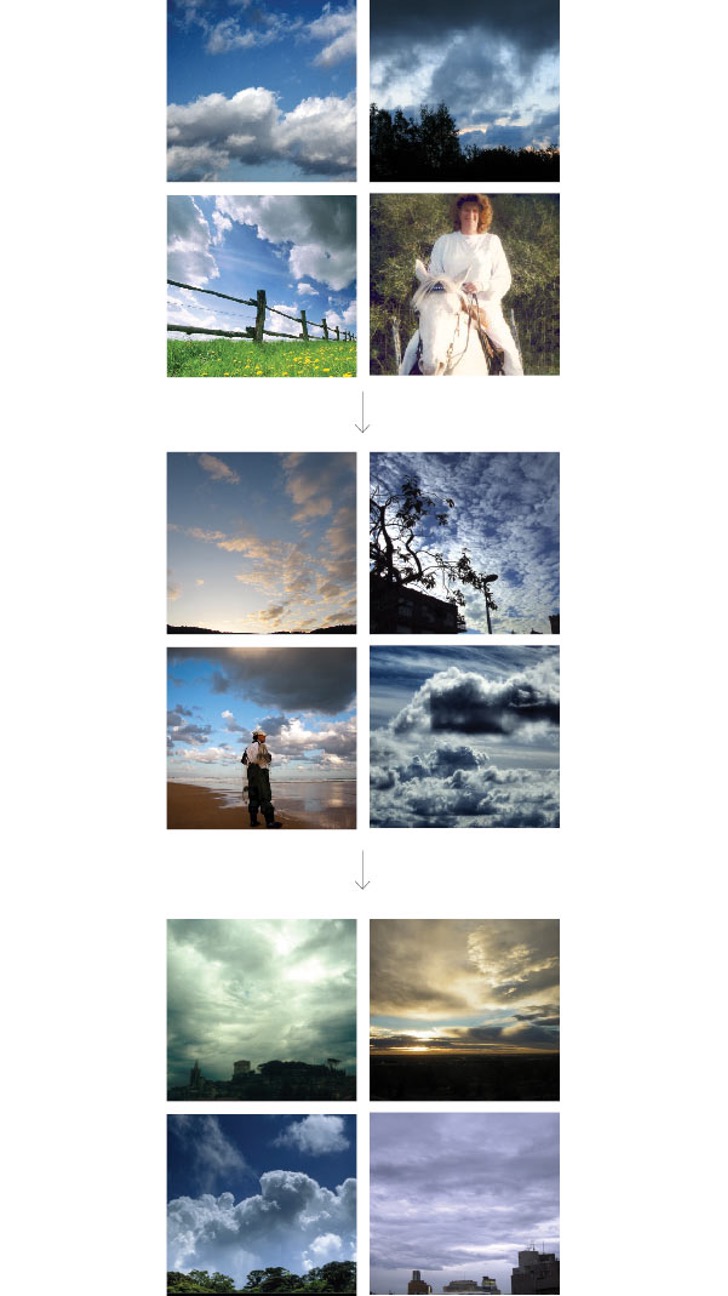

Problem: Images are hard for search engines to index because computers find it difficult to identify their content. Algorithms called classifiers can sort images using statistical techniques, but that presents something of a chicken-and-egg problem: ideally, “you need millions of [classified] images to train a classifier,” says Jian Sun, a researcher at Microsoft Research Asia in Beijing.

Solution: Sun developed a way to make it easy for humans to train computers in picture classification. With his system, which was recently incorporated into Microsoft’s Bing Images search engine, users enter a search term–say, “cloudy sky.” Using its existing classification algorithm, Bing makes its best attempt to present a grid of images that match the search term. The user can click on an image that is close to what he or she wants and ask to see similar pictures, repeating the process until the perfect image appears. As the user refines the search, each click is fed back into the classifier. This means the next time a user searches for “cloudy sky,” Bing will immediately present a more relevant set of images than before. The system is also being used to help other researchers develop image search algorithms: Sun has released a training database, built from Bing results, that contains 100,000 categorized images.
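The article doesn't describe Sun's algorithm, so the sketch below substitutes Rocchio-style relevance feedback, a classic textbook technique, to show how a click can pull a query toward the images a user liked and how that refinement can be remembered for the next person who types the same term. The image names, their three-number feature vectors, the starting vector for "cloudy sky," and the weights `alpha` and `beta` are all made up.

```python
import math

# Made-up feature vectors for a tiny image collection.
IMAGES = {
    "clear_blue": [0.9, 0.1, 0.0],
    "light_clouds": [0.6, 0.5, 0.1],
    "overcast": [0.2, 0.9, 0.3],
    "storm": [0.1, 0.8, 0.9],
    "sunset": [0.5, 0.2, 0.8],
}


def cosine(a, b):
    """Cosine similarity between two feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def rank_images(query):
    """Image names ordered from most to least similar to `query`."""
    return sorted(IMAGES, key=lambda n: cosine(query, IMAGES[n]), reverse=True)


def rocchio(query, clicked, alpha=1.0, beta=0.75):
    """Positive-only Rocchio update: pull the query toward clicked images."""
    centroid = [
        sum(IMAGES[n][i] for n in clicked) / len(clicked) for i in range(len(query))
    ]
    return [alpha * q + beta * c for q, c in zip(query, centroid)]


# Refined query vectors remembered per search term, so later searches for
# the same term start from the improved vector.
learned = {}


def search(term, default_query):
    return rank_images(learned.get(term, default_query))


def record_click(term, default_query, clicked):
    learned[term] = rocchio(learned.get(term, default_query), clicked)


default = [0.7, 0.3, 0.2]  # hypothetical starting vector for "cloudy sky"
before = search("cloudy sky", default)
record_click("cloudy sky", default, ["overcast"])
after = search("cloudy sky", default)
```

After one click on `overcast`, that image climbs the ranking for every later "cloudy sky" search, which mirrors, in miniature, the feedback loop described above.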

Richard Tibbetts

Reacting to large amounts of data in real time.

Organizations such as businesses and governments are increasingly obsessed with gathering data, but the results often overwhelm them when they need to act quickly. Richard Tibbetts, CTO of StreamBase Systems in Lexington, MA, has developed a data processing system that can accept large amounts of rapidly changing input and distill it into the information that organizations feel they need to make sound decisions.

Traditionally, organizations have turned to databases to store and manipulate large amounts of information. Typically, however, these databases aren’t good at processing data in real time; users have to wait until an entire data set has been accumulated. Tibbetts has developed a new set of techniques for managing data. In particular, he invented a language called StreamSQL EventFlow, which can process a stream of data as it arrives, analyze it, make decisions about it, and take actions such as trading a stock or flagging a trend.
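The contrast between waiting for a whole data set and acting on each item as it arrives can be sketched in a few lines of plain Python. This is not StreamSQL EventFlow or any StreamBase API; it is a hypothetical generator that keeps a sliding five-second window of prices per stock symbol and flags any tick that jumps more than 2 percent above the recent average. The symbols `ACME` and `XYZ`, the window length, and the threshold are invented.

```python
from collections import deque
from typing import Dict, Iterable, Iterator, Tuple

Tick = Tuple[float, str, float]          # (timestamp in seconds, symbol, price)
Alert = Tuple[float, str, float, float]  # (timestamp, symbol, price, moving average)


def price_spike_alerts(
    ticks: Iterable[Tick], window_seconds: float = 5.0, threshold: float = 0.02
) -> Iterator[Alert]:
    """Flag each tick that is more than `threshold` above the symbol's
    moving average over the preceding `window_seconds`.

    Each tick is handled as soon as it arrives, keeping only the ticks
    inside the current window rather than storing the whole data set.
    """
    windows: Dict[str, deque] = {}  # symbol -> recent (timestamp, price) pairs
    sums: Dict[str, float] = {}     # symbol -> running sum of prices in window
    for ts, symbol, price in ticks:
        win = windows.setdefault(symbol, deque())
        total = sums.get(symbol, 0.0)
        # Evict ticks that have slid out of the time window.
        while win and win[0][0] <= ts - window_seconds:
            total -= win.popleft()[1]
        if not win:
            total = 0.0  # no baseline yet; reset to avoid floating-point drift
        elif price > (total / len(win)) * (1 + threshold):
            yield (ts, symbol, price, total / len(win))
        win.append((ts, price))
        sums[symbol] = total + price


ticks = [
    (0.0, "ACME", 10.0),
    (1.0, "ACME", 10.1),
    (2.0, "ACME", 10.0),
    (3.0, "ACME", 10.5),  # jumps well above the recent average
    (4.0, "XYZ", 50.0),
    (9.0, "ACME", 10.6),  # earlier ACME ticks have expired, so no baseline
]
for alert in price_spike_alerts(ticks):
    print(alert)
```

Because the function is a generator, a downstream consumer (say, an order-placing routine) can react to each alert the moment it is produced, without waiting for the input to end.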

StreamBase counts government agencies, investment banks, and hedge funds among its clients. The technology has been used to monitor activity on battlefields as combat unfolds and to help businesses react as stock market conditions change.