Sponsored

Modern data architectures fuel innovation

More diverse data estates require a new strategy—and the infrastructure to support it.

In association with Kyndryl

Companies have contended with a deluge of data for years. And while most have not yet found a good way of managing it all, the challenges—diverse data sources, types, and structures and new environments and platforms—have grown ever more complex. At the same time, deriving value from data has become a business imperative, making the consequences of not managing your organization’s data more severe—from lack of critical business insights to the hobbling of AI implementations.

Greater data complexity leads to greater consequences


Data is increasing in volume, velocity, and variety, and the data estate itself has become increasingly intricate. For years, organizations have struggled with data being sequestered in separate silos within the company. Today, data location adds another layer of complexity, with some of the data on premises, some of it in the cloud, and some of it coming in streams from the edge. By 2025, more than 50% of enterprise-critical data will be created and processed outside the data center or cloud, Gartner analysts estimate. To be truly data-driven, organizations realize, they must reach both wider and deeper into their operations, identifying and digesting data and information from various departments and sources.

“Each line of business is driving digital transformation in its own way,” says Naveen Kamat, executive director and CTO of data and AI services at Kyndryl, an IT infrastructure services provider. “They are setting up their own apps in the cloud, which generate data daily. Then there’s web and social media data coming in. The enterprise data estate is becoming much, much bigger; it’s becoming much more complex to manage.”

The insurance industry provides an example of today’s data landscape complexity. One substantial challenge to good data management in insurance is a plethora of legacy systems built up over the years, says Ali Shahkarami, chief data officer at Allianz Global Corporate & Specialty (AGCS). “That’s especially true for international companies operating across borders with different products, regulatory requirements, and reporting requirements,” he notes. “The ability to do that centrally and in a consistent manner is a big challenge. It impacts everything you build with data and analytics.”

Unfortunately, while data management has become more challenging, data management skills have become harder to come by. The number of skilled data personnel has stayed the same or even dropped over the last decade, even as the number of data and application silos has increased, says Gartner. That means it takes more time than ever to meet integrated data analytics needs.

The consequences for organizations that fail to manage their data effectively and efficiently are becoming dire. For one thing, the cost of inadequate data management is growing. The cost of poor data can be about 20% of revenue, estimated Thomas C. Redman, president of consultancy Data Quality Solutions, in a co-authored MIT Sloan Management Review article.

“Almost all work is plagued by bad data,” write Redman and Thomas H. Davenport. “The salesperson who corrects errors in data received from marketing, the data scientist who spends 80% of his or her time wrangling data, the finance team that spends three-quarters of its time reconciling reports, the decision maker who doesn’t believe the numbers and instructs his or her staff to validate them.”

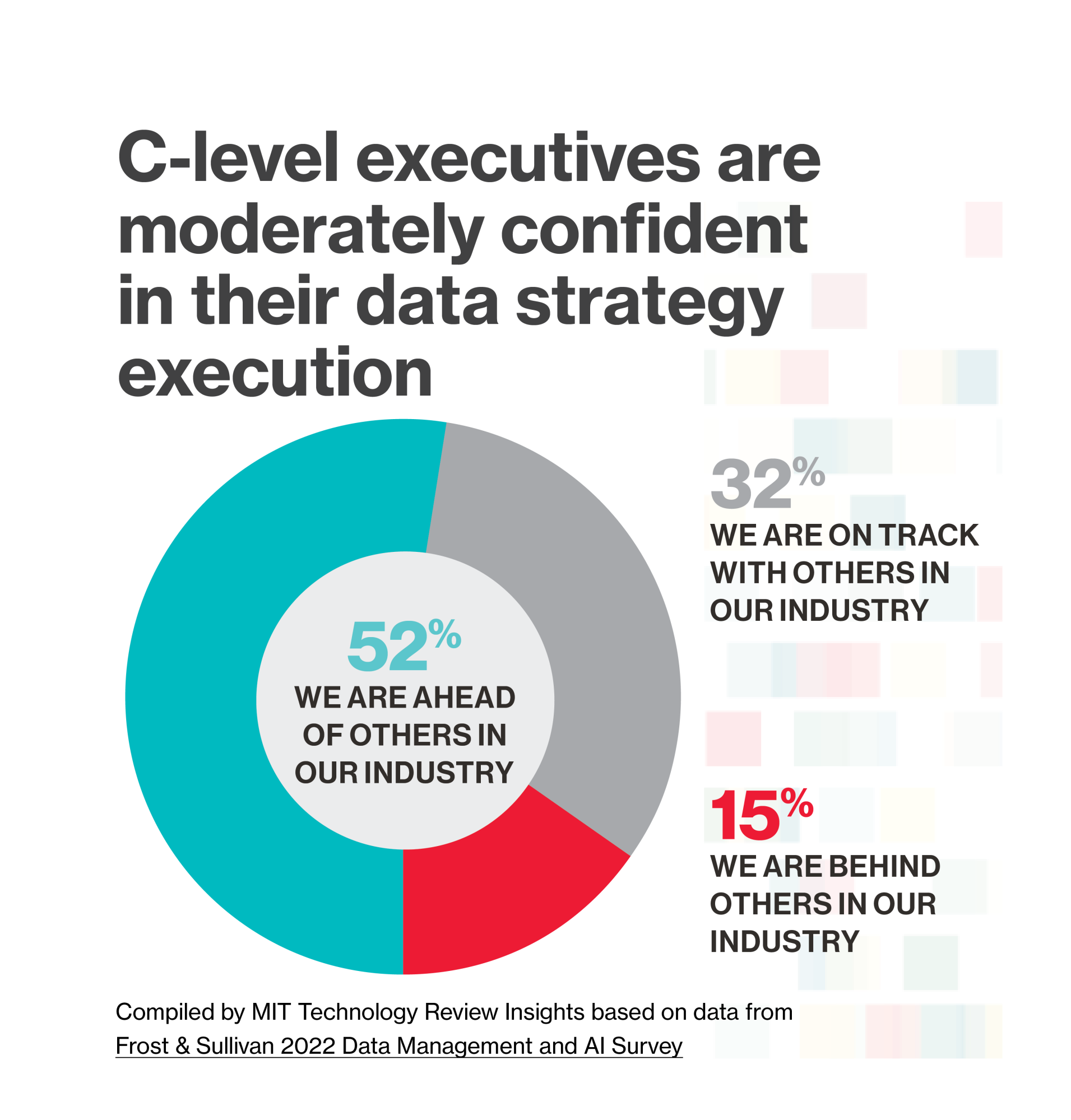

Redman and Davenport estimate that less than 5% of companies use their data and data science to gain a competitive edge. “Companies are not seizing the strategic potential in their data,” they conclude.

When it comes to implementing advanced technologies, such as machine learning and artificial intelligence, inadequate data management represents a substantial barrier. Not only could AI programs be ineffective, but “without the right data, building AI is risky and possibly dangerous” if data bias, diversity, and systematic labeling are not part of a data management strategy, says Rita Sallam, distinguished vice president and analyst at Gartner.

This content was produced by Insights, the custom content arm of MIT Technology Review. It was not written by MIT Technology Review’s editorial staff.
