The covid tech that is intimately tied to China’s surveillance state

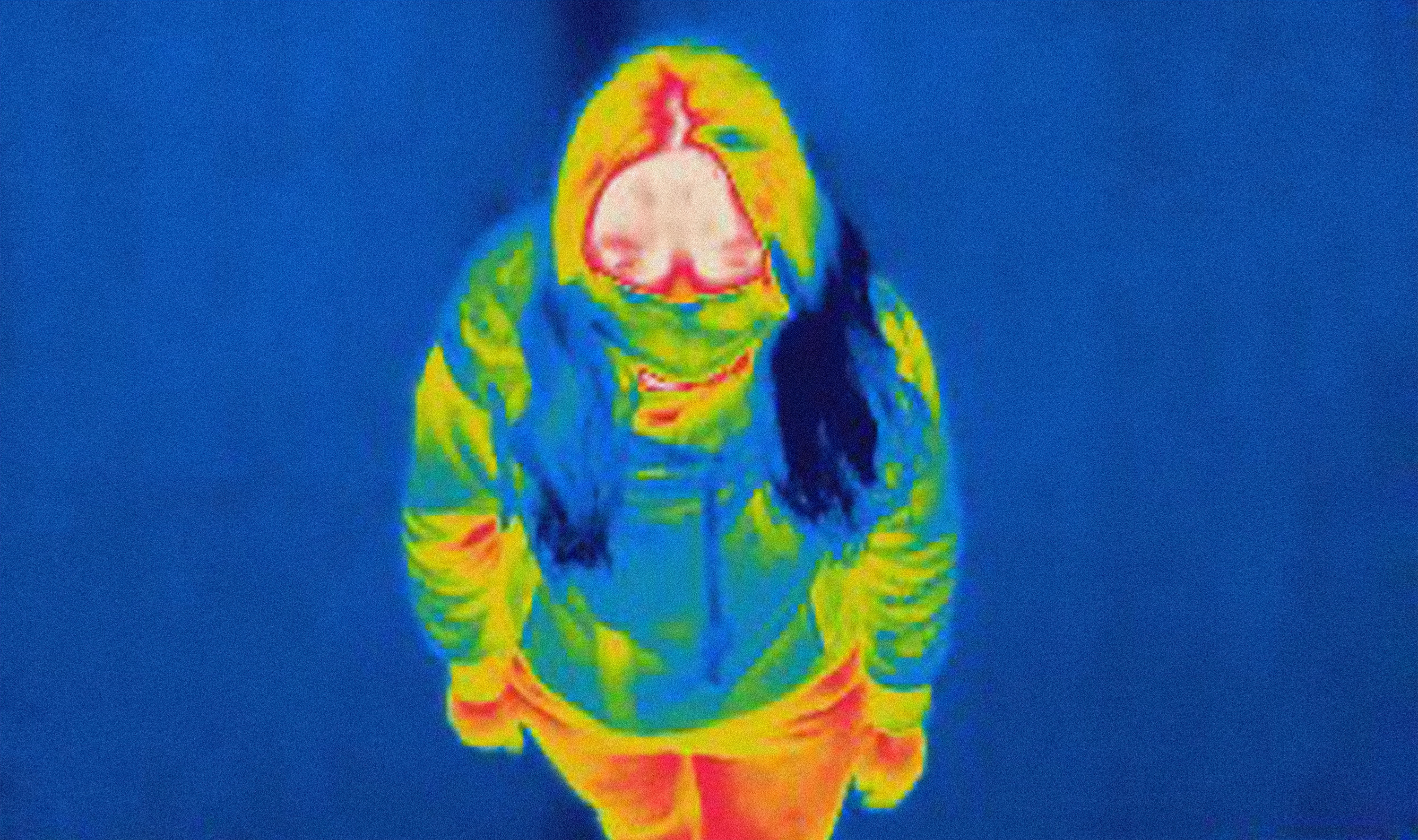

Heat-sensing cameras and face recognition systems may help fight covid-19—but they also make us complicit in the high-tech oppression of Uyghurs.

Sometime in mid-2019, a police contractor in the Chinese city of Kuitun tapped a young college student from the University of Washington on the shoulder as she walked through a crowded market intersection. The student, Vera Zhou, didn’t notice the tapping at first because she was listening to music through her earbuds as she weaved through the crowd. When she turned around and saw the black uniform, the blood drained from her face. Speaking in Chinese, Vera’s native language, the police officer motioned her into a nearby People’s Convenience Police Station—one of more than 7,700 such surveillance hubs that now dot the region.

On a monitor in the boxy gray building, she saw her face surrounded by a yellow square. On other screens she saw pedestrians walking through the market, their faces surrounded by green squares. Beside the high-definition video still of her face, her personal data appeared in a black text box. It said that she was Hui, a member of a Chinese Muslim group that makes up around 1 million of the population of 15 million Muslims in Northwest China. The alarm had gone off because she had walked beyond the parameters of the policing grid of her neighborhood confinement. As a former detainee in a re-education camp, she was not officially permitted to travel to other areas of town without explicit permission from both her neighborhood watch unit and the Public Security Bureau. The yellow square around her face on the screen indicated that she had once again been deemed a “pre-criminal” by the digital enclosure system that held Muslims in place. Vera said at that moment she felt as though she could hardly breathe.

Kuitun is a small city of around 285,000 in Xinjiang’s Tacheng Prefecture, along the Chinese border with Kazakhstan. Vera had been trapped there since 2017 when, in the middle of her junior year as a geography student at the University of Washington (where I was an instructor), she had taken a spur-of-the-moment trip back home to see her boyfriend. After a night at a movie theater in the regional capital Ürümchi, her boyfriend received a call asking him to come to a local police station. There, officers told him they needed to question his girlfriend: they had discovered some suspicious activity in Vera’s internet usage, they said. She had used a virtual private network, or VPN, in order to access “illegal websites,” such as her university Gmail account. This, they told her later, was a “sign of religious extremism.”

It took some time for what was happening to dawn on Vera. Perhaps since her boyfriend was a non-Muslim from the majority Han group and they did not want him to make a scene, at first the police were quite indirect about what would happen next. They just told her she had to wait in the station.

When she asked if she was under arrest, they refused to respond.

“Just have a seat,” they told her. By this time she was quite frightened, so she called her father back in her hometown and told him what was happening. Eventually, a police van pulled up to the station: She was placed in the back, and once her boyfriend was out of sight, the police shackled her hands behind her back tightly and shoved her roughly into the back seat.

Pre-criminals

Vera Zhou didn’t think the war on terror had anything to do with her. She considered herself a non-religious fashionista who favored chunky earrings and dressing in black. She had gone to high school near Portland, Oregon, and was on her way to becoming an urban planner at a top-ranked American university. She had planned to reunite with her boyfriend after graduation and have a career in China, where she thought of the economy as booming. She had no idea that a new internet security law had been implemented in her hometown and across Xinjiang at the beginning of 2017, and that this was how extremist “pre-criminals,” as state authorities referred to them, were being identified for detention. She did not know that a newly appointed party secretary of the region had given a command to “round up everyone who should be rounded up” as part of the “People’s War.”

Now, in the back of the van, she felt herself losing control in a wave of fear. She screamed, tears streaming down her face, “Why are you doing this? Doesn’t our country protect the innocent?” It seemed to her like it was a cruel joke, like she had been given a role in a horror movie, and that if she just said the right things they might snap out of it and realize it was all a mistake.

For the next few months, Vera was held with 11 other Muslim minority women in a second-floor cell in a former police station on the outskirts of Kuitun. Like Vera, others in the room were also guilty of cyber “pre-crimes.” A Kazakh woman had installed WhatsApp on her phone in order to contact business partners in Kazakhstan. A Uyghur woman who sold smartphones at a bazaar had allowed multiple customers to register their SIM cards using her ID card.

Around April 2018, without warning, Vera and several other detainees were released on the condition that they report to local social stability workers on a regular basis and not try to leave their home neighborhoods.

Every Monday, her probation officer required that Vera go to a neighborhood flag-raising ceremony and participate by loudly singing the Chinese national anthem and making statements pledging her loyalty to the Chinese government. By this time, due to widely circulated reports of detention for cyber-crimes in the small town, it was known that online behavior could be detected by the newly installed automated internet surveillance systems. Like everyone else, Vera recalibrated her online behavior. Whenever the social stability worker assigned to her shared something on social media, Vera was always the first person to support her by liking it and posting it on her own account. Like everyone else she knew, she started to “spread positive energy” by actively promoting state ideology.

After she was back in her neighborhood, Vera felt that she had changed. She thought often about the hundreds of detainees she had seen in the camp. She feared that many of them would never be allowed out since they didn’t know Chinese and had been practicing Muslims their whole lives. She said her time in the camp also made her question her own sanity. “Sometimes I thought maybe I don’t love my country enough,” she told me. “Maybe I only thought about myself.”

But she also knew that what had happened to her was not her fault. It was the result of Islamophobia being institutionalized and focused on her. And she knew with absolute certainty that an immeasurable cruelty was being done to Uyghurs and Kazakhs because of their ethno-racial, linguistic, and religious differences.

"I just started to stay home all the time"

Like all detainees, Vera had been subjected to a rigorous biometric data collection that fell under the population-wide assessment process called “physicals for all,” before she was taken to the camps. The police had scanned Vera’s face and irises, recorded her voice signature, and collected her blood, fingerprints, and DNA—adding this precise high-fidelity data to an immense dataset that was being used to map the behavior of the population of the region. They had also taken her phone away to have it and her social media accounts scanned for Islamic imagery, connections to foreigners, and other signs of “extremism.” Eventually they gave it back, but without any of the US-made apps like Instagram.

Over several weeks, she began to find ways around the many surveillance hubs that had been built every several hundred meters. Outside of high-traffic areas many of them used regular high-definition surveillance cameras that could not detect faces in real time. Since she could pass as Han and spoke standard Mandarin, she would simply tell the security workers at checkpoints that she forgot her ID and would write down a fake number. Or sometimes she would go through the exit of the checkpoint, “the green lane,” just like a Han person, and ignore the police.

One time, though, when going to see a movie with a friend, she forgot to pretend that she was Han. At a checkpoint at the theater she put her ID on the scanner and looked into the camera. Immediately an alarm sounded and the mall police contractors pulled her to the side. As her friend disappeared into the crowd, Vera worked her phone frantically to delete her social media account and erase the contacts of people who might be detained because of their association with her. “I realized then that it really wasn’t safe to have friends. I just started to stay at home all the time.”

Eventually, like many former detainees, Vera was forced to work as an unpaid laborer. The local state police commander in her neighborhood learned that she had spent time in the United States as a college student, so he asked Vera’s probation officer to assign her to tutor his children in English.

“I thought about asking him to pay me,” Vera remembers. “But my dad said I need to do it for free. He also sent food with me for them, to show how eager he was to please them.”

The commander never brought up any form of payment.

In October 2019, Vera’s probation officer told her that she was happy with Vera’s progress and she would be allowed to continue her education back in Seattle. She was made to sign vows not to talk about what she had experienced. The officer said, “Your father has a good job and will soon reach retirement age. Remember this.”

In the fall of 2019, Vera returned to Seattle. Just a few months later, across town, Amazon—the world’s wealthiest technology company—received a shipment of 1,500 heat-mapping camera systems from the Chinese surveillance company Dahua. Many of these systems, which were collectively worth around $10 million, were to be installed in Amazon warehouses to monitor the heat signatures of employees and alert managers if workers exhibited covid symptoms. Other cameras included in the shipment were distributed to IBM and Chrysler, among other buyers.

Dahua was just one of the Chinese companies that were able to capitalize on the pandemic. As covid began to move beyond the borders of China in early 2020, a group of medical research companies owned by the Beijing Genomics Institute, or BGI, radically expanded, establishing 58 labs in 18 countries and selling 35 million covid-19 tests to more than 180 countries. In March 2020, companies such as Russell Stover Chocolates and US Engineering, a Kansas City, Missouri–based mechanical contracting company, bought $1.2 million worth of tests and set up BGI lab equipment in University of Kansas Medical System facilities.

And while Dahua sold its equipment to companies like Amazon, Megvii, one of its main rivals, deployed heat-mapping systems to hospitals, supermarkets, and campuses in China, and to airports in South Korea and the United Arab Emirates.

Yet, while the speed and intention of this response to protect workers in the absence of an effective national-level US response were admirable, these Chinese companies are also tied up in forms of egregious human rights abuses.

Dahua is one of the major providers of the “smart camp” systems that Vera Zhou experienced in Xinjiang (the company says its facilities are supported by technologies such as “computer vision systems, big data analytics and cloud computing”). In October 2019, both Dahua and Megvii were among eight Chinese technology firms placed on a list that blocks US citizens from selling goods and services to them (the list, which is intended to prevent US firms from supplying non-US firms deemed a threat to national interests, prevents Amazon from selling to Dahua, but not from buying from it). BGI’s subsidiaries in Xinjiang were placed on the US no-trade list in July 2020.

Amazon’s purchase of Dahua heat-mapping cameras recalls an older moment in the spread of global capitalism that was captured by historian Jason Moore’s memorable turn of phrase: “Behind Manchester stands Mississippi.”

What did Moore mean by this? In his rereading of Friedrich Engels’s analysis of the textile industry that made Manchester, England, so profitable, he saw that many aspects of the British Industrial Revolution would not have been possible without the cheap cotton produced by slave labor in the United States. In a similar way, the ability of Seattle, Kansas City, and Seoul to respond as rapidly as they did to the pandemic relies in part on the way systems of oppression in Northwest China have opened up a space to train biometric surveillance algorithms.

The protection of workers during the pandemic depends on forgetting about college students like Vera Zhou. It means ignoring the dehumanization of thousands upon thousands of detainees and unfree workers.

At the same time, Seattle also stands before Xinjiang.

Amazon has its own role in involuntary surveillance that disproportionately harms ethno-racial minorities given its partnership with US Immigration and Customs Enforcement to target undocumented immigrants and its active lobbying efforts in support of weak biometric surveillance regulation. More directly, Microsoft Research Asia, the so-called “cradle of Chinese AI,” has played an instrumental role in the growth and development of both Dahua and Megvii.

Chinese state funding, global terrorism discourse, and US industry training are three of the primary reasons why a fleet of Chinese companies now leads the world in face and voice recognition. This process was accelerated by a war on terror that centered on placing Uyghurs, Kazakhs, and Hui within a complex digital and material enclosure, but it now extends throughout the Chinese technology industry, where data-intensive infrastructure systems produce flexible digital enclosures throughout the nation, though not at the same scale as in Xinjiang.

China’s vast and rapid response to the pandemic has further accelerated this process by putting these systems into wide use and making clear that they work. Because they extend state power in such sweeping and intimate ways, they can effectively alter human behavior.

Alternative approaches

The Chinese approach to the pandemic is not the only way to stop it, however. Democratic states like New Zealand and Canada, which have provided testing, masks, and economic assistance to those forced to stay home, have also been effective. These nations make clear that involuntary surveillance is not the only way to protect the well-being of the majority, even at the level of the nation.

In fact, numerous studies have shown that surveillance systems support systemic racism and dehumanization by making targeted populations detainable. The past and current US administrations’ use of the Entity List to halt sales to companies like Dahua and Megvii, while important, is also producing a double standard, punishing Chinese firms for automating racialization while funding American companies to do similar things.

Increasing numbers of US-based companies are attempting to develop their own algorithms to detect racial phenotypes, though through a consumerist approach that is premised on consent. By making automated racialization a form of convenience in marketing things like lipstick, companies like Revlon are hardening the technical scripts that are available to individuals.

As a result, in many ways race continues to be an unthought part of how people interact with the world. Police in the United States and in China think about automated assessment technologies as tools they have to detect potential criminals or terrorists. The algorithms make it appear normal that Black men or Uyghurs are disproportionately detected by these systems. They stop the police, and those they protect, from recognizing that surveillance is always about controlling and disciplining people who do not fit into the vision of those in power. The world, not China alone, has a problem with surveillance.

To counteract the increasing banality, the everydayness, of automated racialization, the harms of biometric surveillance around the world must first be made apparent. The lives of the detainable must be made visible at the edge of power over life. Then the role of world-class engineers, investors, and public relations firms in the unthinking of human experience, in designing for human reeducation, must be made clear. The webs of interconnection, the way Xinjiang stands behind and before Seattle, must be made thinkable.

This story is an edited excerpt from In The Camps: China’s High-Tech Penal Colony, by Darren Byler (Columbia Global Reports, 2021). Darren Byler is an assistant professor of international studies at Simon Fraser University, focused on the technology and politics of urban life in China.
