Sponsored

In the data decade, data can be both an advantage and a burden

Study reveals businesses are struggling to reconcile conflicting data realities caused by overwhelmed technology, people, and processes.

Provided by Dell Technologies

In 2016, Dell Technologies commissioned our first Digital Transformation Index (DT Index) study to assess the digital maturity of businesses around the globe. We have since commissioned the study every two years to track businesses’ digital maturity.

Sam Grocott is Senior Vice President of Business Unit Marketing at Dell Technologies.

Our third installment of the DT Index, launched in 2020 (the year of the pandemic), revealed that “data overload/unable to extract insights from data” was the third highest-ranking barrier to transformation, up from 11th place in 2016. That is a huge jump from the bottom to close to the top of the ranking of barriers to digital transformation.

These findings point to a curious paradox—data has the potential to become businesses’ number one barrier to transformation while also being their greatest asset. To learn more about why this paradox exists and where businesses need the most help, we commissioned a study with Forrester Consulting to dig deeper.

The resulting study, Unveiling Data Challenges Afflicting Businesses Around the World, is based on a survey of 4,036 senior decision-makers with responsibility for their companies’ data strategy and is available to read now.

Candidly, the study confirms our concerns: in this data decade, data has become both a burden and an advantage for many businesses—which one depends on how data-ready the business might be.

While Forrester identifies several data paradoxes hindering businesses today, three major contradictions stood out for me.

1. The perception paradox

Two-thirds of respondents say their business is data-driven and that “data is the lifeblood of their organization.” But only 21% say they treat data as capital and prioritize its use across the business today.

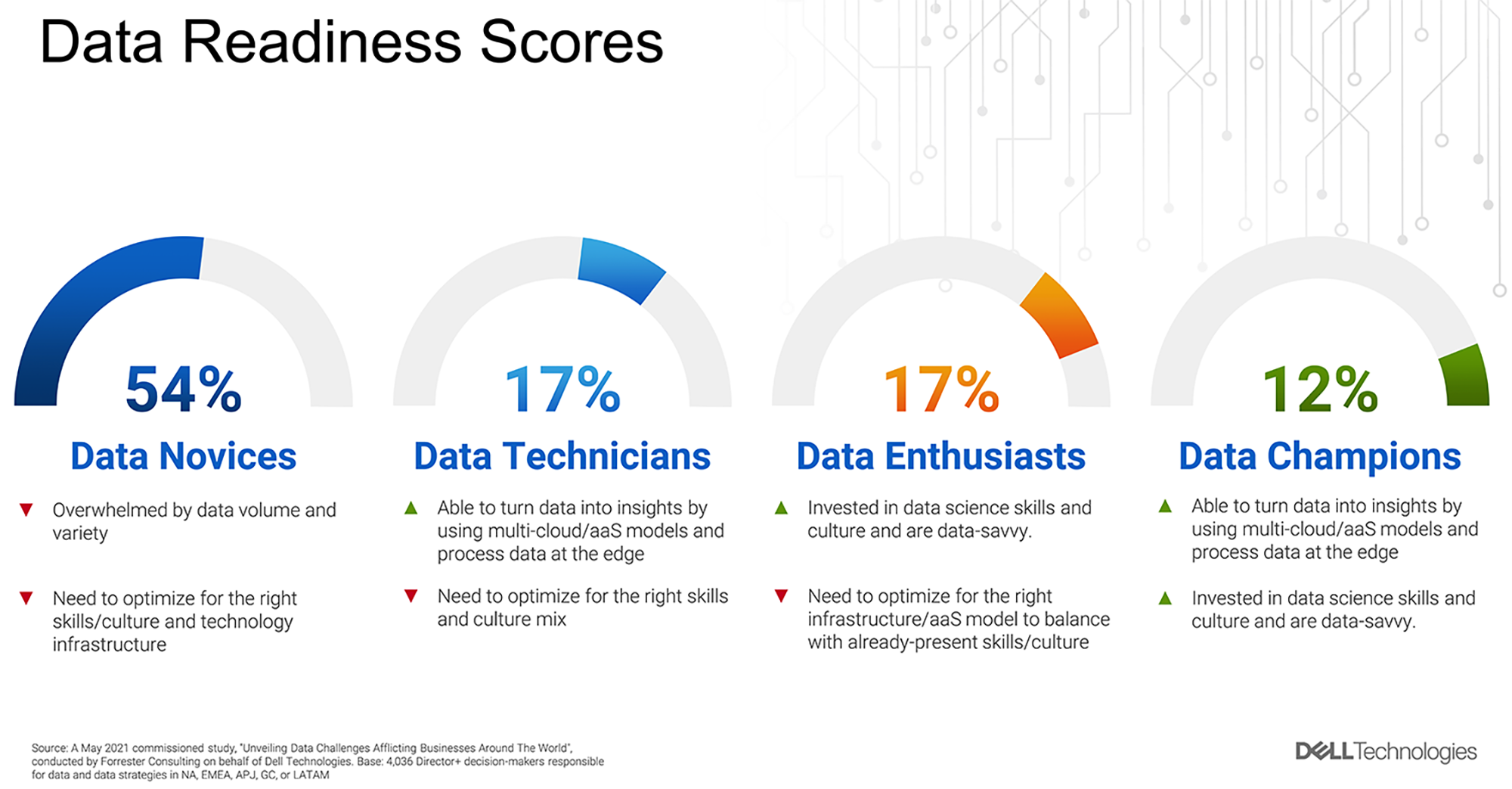

Clearly, there’s a disconnect here. To provide some clarity, Forrester created an objective measure of businesses’ data readiness (see figure).

The results showed that 88% of businesses have yet to progress their data technology and processes, their data culture and skills, or both. In fact, only 12% of businesses are defined as Data Champions: companies that are actively engaged in both areas (technology/process and culture/skills).

2. The “want more than they can handle” paradox

The research also shows that businesses want more data even though they already have more than they can handle: 70% say they are gathering data faster than they can analyze and use it, yet 67% say they constantly need more data than their current capabilities provide.

While this is a paradox, it’s not all that surprising when you consider the research holistically, including the proportion of companies that have yet to secure data advocacy at the boardroom level and that fall back on an IT strategy that can’t scale (i.e., bolting on more data lakes).

The implications of this paradox are profound and far-reaching. Six in 10 businesses are battling with data silos; 64% of respondents complain they have such a glut of data they can’t meet security and compliance requirements, and 61% say their teams are already overwhelmed by the data they have.

3. The “seeing without doing” paradox

While economies have suffered during the pandemic, the on-demand sector has expanded rapidly, igniting a new wave of data-first, data-anywhere businesses that pay for what they use and only use what they need—determined by the data that they generate and analyze.

Although these businesses are emerging, and doing very well, they’re still relatively small in number. Only 20% of businesses have moved the majority of their applications and infrastructure to an as-a-service model—even though more than 6 in 10 believe an as-a-service model would enable firms to be more agile, scale, and provision applications without complexity.

Achieving breakthrough together

The research is sobering, but there is hope on the horizon. Businesses are looking to revise their data strategies by adopting a multi-cloud environment, moving to a data-as-a-service model, and automating data processes with machine learning.

Granted, they have a lot to do to prime the pumps for a proliferation of data. Still, there is a path forward. First, businesses can modernize their IT infrastructure so they can meet data where it lives, at the edge. This involves bringing infrastructure and applications closer to where data needs to be captured, analyzed, and acted on, while avoiding data sprawl by maintaining a consistent multi-cloud operating model.

Second, they can optimize data pipelines so data can flow freely and securely while being augmented by AI/ML. Third, they can develop software to deliver the personalized, integrated experiences customers crave.

The staggering volume, variety, and velocity of data may seem overpowering, but with the right technology, processes, and culture, businesses can tame the data beast, innovate with it, and create new value.

To learn more about the study, visit www.delltechnologies.com/dataparadox.

This content was produced by Dell Technologies. It was not written by MIT Technology Review’s editorial staff.
