The great chip crisis threatens the promise of Moore’s Law

A shortage of microchips threatens to slow the decades of innovation fueled by the promise of ever faster, cheaper computing power.

A year into the covid-19 pandemic, Apple commemorated the growing array of devices featuring its custom M1 chip with great fanfare, including a “Mission Implausible” ad on TV featuring a young man running across the rooftops of its “spaceship” campus in Cupertino and infiltrating the facility to “steal” the breakthrough microprocessor from a MacBook and place it inside an iPad Pro.

Apple’s custom-designed chip is the latest triumph for Moore’s Law, the observation turned self-fulfilling prophecy that chipmakers can double the number of transistors on a chip every few years. The M1 packs 16 billion transistors on a microprocessor the size of a large postage stamp. It’s a marvel of today’s semiconductor manufacturing prowess.

But even as Apple celebrated the M1, the world was facing an economically devastating shortage of microchips, particularly the relatively cheap ones that make many of today’s technologies possible.

Automakers have been shutting down assembly lines and laying off workers because they can’t get enough $1 chips. Manufacturers have resorted to building vehicles without the chips necessary for navigation systems, digital rear-view mirrors, display touch screens, and fuel management systems. Overall, the global automotive industry could lose more than $110 billion to the shortage in 2021.

Production has also slowed for smartphones, laptops, video-game consoles, TVs, and even smart appliances, all because of the lack of cheap microchips. Their use is so essential and so widespread that some observers think the chip crisis could threaten the global economic recovery from the pandemic.

The global shortage is shining a harsh spotlight on the semiconductor industry’s ability to deliver cheaper and more powerful microchips. The longstanding promise of chips with ever more capabilities inspired engineers, programmers, and product designers to create generations of new products and services. Moore’s Law has been more than just a road map for the semiconductor industry—it has governed technological change over the last half-century.

Now that promise of more computing power everywhere is crumbling, but not because chipmakers have finally run up against the physical limits of technology to make ever smaller transistors. Instead, the growing costs of sustaining Moore’s Law have encouraged consolidation among chipmakers and created more choke points in the immensely complex business of chip production.

Even as microchips have become essential in so many products, their development and manufacturing have come to be dominated by a small number of producers with limited capacity—and appetite—for churning out the commodity chips that are a staple for today’s technologies. And because making chips requires hundreds of manufacturing steps and months of production time, the semiconductor industry cannot quickly pivot to satisfy the pandemic-fueled surge in demand.

After decades of fretting about how we will carve out features as small as a few nanometers on silicon wafers, the spirit of Moore’s Law—the expectation that cheap, powerful chips will be readily available—is now being threatened by something far more mundane: inflexible supply chains.

A lonely frontier

Twenty years ago, the world had 25 manufacturers making leading-edge chips. Today, only Taiwan Semiconductor Manufacturing Company (TSMC) in Taiwan, Intel in the United States, and Samsung in South Korea have the facilities, or fabs, that produce the most advanced chips. And Intel, long a technology leader, is struggling to keep up, having repeatedly missed deadlines for producing its latest generations.

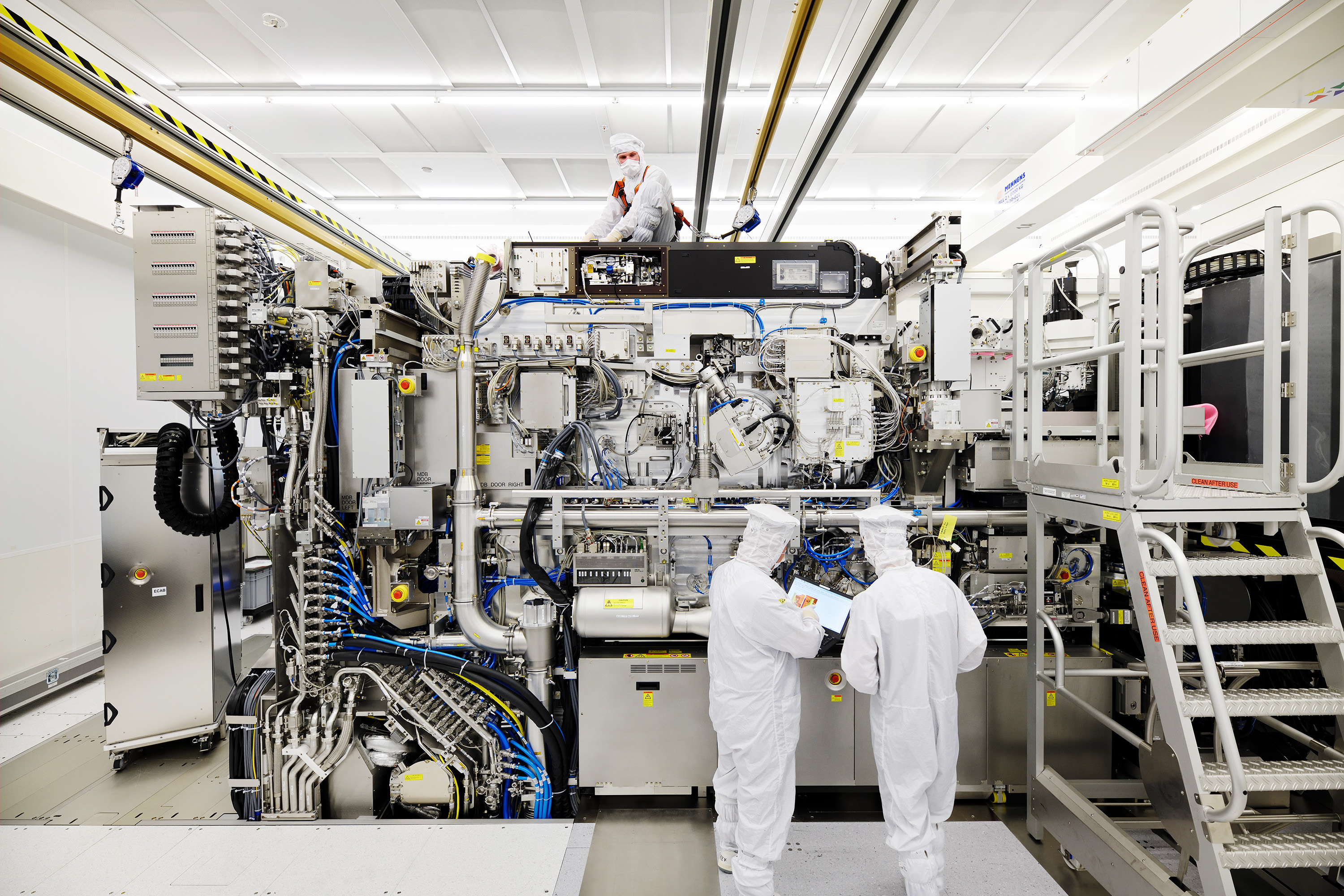

One reason for the consolidation is that building a facility to make the most advanced chips costs between $5 billion and $20 billion. These fabs make chips with features as small as a few nanometers; in industry jargon they’re called 5-nanometer and 7-nanometer nodes. Much of the cost of new fabs goes toward buying the latest equipment, such as a tool called an extreme ultraviolet lithography (EUV) machine that costs more than $100 million. Made solely by ASML in the Netherlands, EUV machines are used to etch detailed circuit patterns with nanometer-size features.

Chipmakers have been working on EUV technology for more than two decades. After billions of dollars of investment, EUV machines were first used in commercial chip production in 2018. “That tool is 20 years late, 10x over budget, because it’s amazing,” says David Kanter, executive director of an open engineering consortium focused on machine learning. “It’s almost magical that it even works. It’s totally like science fiction.”

Such gargantuan effort made it possible to create the billions of tiny transistors in Apple’s M1 chip, which was made by TSMC; it’s among the first generation of leading-edge chips to rely fully on EUV.

Paying for the best chips makes sense for Apple because these chips go into the latest MacBook and iPhone models, which sell by the millions at luxury-brand prices. “The only company that is actually using EUV in high volume is Apple, and they sell $1,000 smartphones for which they have insane margin,” Kanter says.

Not only are the fabs for manufacturing such chips expensive, but the cost of designing the immensely complex circuits is now beyond the reach of many companies. In addition to Apple, only the largest tech companies that require the highest computing performance, such as Qualcomm, AMD, and Nvidia, are willing to pay hundreds of millions of dollars to design a chip for leading-edge nodes, says Sri Samavedam, senior vice president of CMOS technologies at Imec, an international research institute based in Leuven, Belgium.

Many more companies are producing laptops, TVs, and cars that use chips made with older technologies, and a spike in demand for these is at the heart of the current chip shortage. Simply put, a majority of chip customers can’t afford, or don’t want to pay for, the latest chips. A typical car today uses dozens of microchips, and an electric vehicle uses many more, so the cost per chip quickly adds up. Makers of things like cars have therefore stuck with chips made using older technologies.

What’s more, many of today’s most popular electronics simply don’t require leading-edge chips. “It doesn’t make sense to put, for example, an A14 [iPhone and iPad] chip in every single computer that we have in the world,” says Hassan Khan, a former doctoral researcher at Carnegie Mellon University who studied the public policy implications of the end of Moore’s Law and currently works at Apple. “You don’t need it in your smart thermometer at home, and you don’t need 15 of them in your car, because it’s very power hungry and it’s very expensive.”

The problem is that even as more users rely on older and cheaper chip technologies, the giants of the semiconductor industry have focused on building new leading-edge fabs. TSMC, Samsung, and Intel have all recently announced billions of dollars in investments for the latest manufacturing facilities. Yes, they’re expensive, but that’s where the profits are—and for the last 50 years, it has been where the future is.

TSMC, the world’s largest contract manufacturer for chips, earned almost 60% of its 2020 revenue from making leading-edge chips with features 16 nanometers and smaller, including Apple’s M1 chip made with the 5-nanometer manufacturing process.

Making the problem worse is that “nobody is building semiconductor manufacturing equipment to support older technologies,” says Dale Ford, chief analyst at the Electronic Components Industry Association, a trade association based in Alpharetta, Georgia. “And so we’re kind of stuck between a rock and a hard spot here.”

Low-end chips

All this matters to users of technology not only because of the supply disruption it’s causing today, but also because it threatens the development of many potential innovations. In addition to being harder to come by, cheaper commodity chips are also becoming relatively more expensive, since each chip generation has required more costly equipment and facilities than the generations before.

Some consumer products will simply demand more powerful chips. The buildout of faster 5G mobile networks and the rise of computing applications reliant on 5G speeds could compel investment in specialized chips designed for networking equipment that talks to dozens or hundreds of Internet-connected devices. Automotive features such as advanced driver-assistance systems and in-vehicle “infotainment” systems may also benefit from leading-edge chips, as evidenced by electric-vehicle maker Tesla’s reported partnerships with both TSMC and Samsung on chip development for future self-driving cars.

But buying the latest leading-edge chips or investing in specialized chip designs may not be practical for many companies when developing products for an “intelligence everywhere” future. Makers of consumer devices such as a Wi-Fi-enabled sous vide machine are unlikely to spend the money to develop specialized chips on their own for the sake of adding even fancier features, Kanter says. Instead, they will likely fall back on whatever chips made using older technologies can provide.

And lower-cost items such as clothing, Kanter says, have “razor-thin margins” that leave little wiggle room for more expensive chips that would add a dollar, let alone $10 or $20, to each item’s price tag. That means the climbing price of computing power may prevent the development of clothing that could, for example, detect and respond to voice commands or changes in the weather.

The world can probably live without fancier sous vide machines, but the lack of ever cheaper and more powerful chips would come with a real cost: the end of an era of inventions fueled by Moore’s Law and its decades-old promise that increasingly affordable computation power will be available for the next innovation.

The majority of today’s chip customers make do with the cheaper commodity chips that represent a trade-off between cost and performance. And it’s the supply of such commodity chips that appears far from adequate as the global demand for computing power grows.

“It is still the case that semiconductor usage in vehicles is going up, semiconductor usage in your toaster oven and for all kinds of things is going up,” says Willy Shih, a professor of management practice at Harvard Business School. “So then the question is, where is the shortage going to hit next?”

A global concern

In early 2021, President Joe Biden signed an executive order mandating supply chain reviews for chips and threw his support behind a bipartisan push in Congress to approve at least $50 billion for semiconductor manufacturing and research. Biden also held two White House summits with leaders from the semiconductor and auto industries, including an April 12 meeting during which he prominently displayed a silicon wafer.

The actions won’t solve the imbalance between chip demand and supply anytime soon. But at the very least, experts say, today’s crisis represents an opportunity for the US government to try to finally fix the supply chain and reverse the overall slowdown in semiconductor innovation—and perhaps shore up the US’s capacity to make the badly needed chips.

An estimated 75% of all chip manufacturing capacity was based in East Asia as of 2019, with the US share sitting at approximately 13%. Taiwan’s TSMC alone has nearly 55% of the foundry market that handles consumer chip manufacturing orders.

Looming over everything is the US-China rivalry. China’s national champion firm SMIC has been building fabs that are still five or six years behind the cutting edge in chip technologies. But it’s possible that Chinese foundries could help meet the global demand for chips built on older nodes in the coming years. “Given the state subsidies they receive, it’s possible Chinese foundries will be the lowest-cost manufacturers as they stand up fabs at the 22-nanometer and 14-nanometer nodes,” Khan says. “Chinese fabs may not be competitive at the frontier, but they could supply a growing portion of demand.”

The global semiconductor industry will need to almost double overall capacity by 2030 to keep pace with demand, according to the Semiconductor Industry Association (SIA), a Washington-based industry group, which has advocated for strengthening the global supply chain rather than attempting to build fully “self-sufficient” domestic manufacturing capability.

But in a nod to the importance of advanced chips for national security and critical infrastructure, the SIA suggests that the US provide “market-driven incentives” for companies to build two or three new leading-edge fabs domestically. That could help ensure that the nation’s core telecommunications networks and data centers—along with the US military—have a domestic supply of chips.

The White House photo ops with the president called to mind the role that the government has played since the dawn of the semiconductor industry that gave Silicon Valley its name. “Making that front and center is not something that the president has talked about in that way since Ronald Reagan,” says Margaret O’Mara, a historian at the University of Washington in Seattle. “Biden sitting there waving a wafer around—I don’t think I’ve seen that in a presidential hand ever.”

The US government became “the Valley’s first, and perhaps its greatest, venture capitalist,” O’Mara wrote in her 2019 book The Code: Silicon Valley and the Remaking of America. Large government orders for chips to supply NASA’s Apollo program and the military’s Minuteman intercontinental ballistic missiles encouraged chipmakers to begin mass production and helped lower the cost of the first silicon chips from $1,000 each in 1960 to just $25 by 1965.
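To put that price drop in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes, purely for illustration, that the price fell at a constant yearly rate between the two figures quoted above ($1,000 in 1960 and $25 in 1965); the real decline was almost certainly not that smooth, and the function names are hypothetical helpers rather than anything from the sources cited here.

```python
import math


def annual_price_factor(start_price, end_price, years):
    """Constant yearly multiplier that takes start_price to end_price over `years`."""
    return (end_price / start_price) ** (1.0 / years)


def halving_time_years(factor):
    """Years needed for the price to halve at a constant yearly multiplier."""
    return math.log(0.5) / math.log(factor)


# Figures quoted in the article: $1,000 per chip in 1960, $25 per chip by 1965.
# The smooth, constant-rate decline is an assumption made for this sketch.
factor = annual_price_factor(1000.0, 25.0, 5)

print(f"yearly price multiplier: {factor:.3f}")
print(f"implied yearly decline: {1 - factor:.1%}")
print(f"price halves roughly every {halving_time_years(factor):.2f} years")
```

Under that simplifying assumption, the price would have fallen by roughly half each year, which gives a sense of how quickly early government orders pushed chips toward affordability.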

The price drop made computing power affordable to many beyond just deep-pocketed government agencies. It kick-started the golden age of Moore’s Law, in which customers reaped the benefits of cheaper chips that also delivered better performance every few years. And you might not know its promise was in peril if all you had to go on was Apple’s latest ad.

While I was interviewing O’Mara for this story, a delivery person showed up at her door as if on cue.

“Speaking of chips, I’m just getting handed my brand-new computer,” she said with a laugh. “Yes, I’ve got my new MacBook with my M1 chip.”

Jeremy Hsu is a technology and science journalist based in New York City.
