This is the Stanford vaccine algorithm that left out frontline doctors

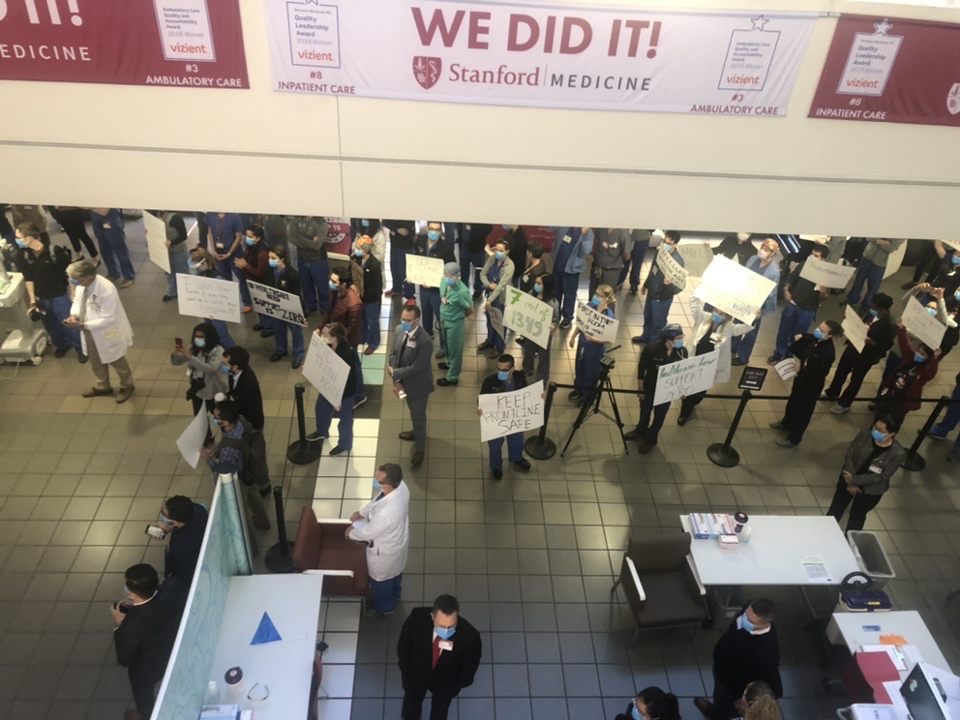

When resident physicians at Stanford Medical Center—many of whom work on the front lines of the covid-19 pandemic—found out that only seven out of over 1,300 of them had been prioritized for the first 5,000 doses of the covid vaccine, they were shocked. Then, when they saw who else had made the list, including administrators and doctors seeing patients remotely from home, they were angry.

During a planned photo op to celebrate the first vaccinations taking place on Friday, December 18, at least 100 residents showed up to protest. Hospital leadership apologized for not prioritizing them and blamed the errors on “a very complex algorithm.”

“Our algorithm, that the ethicists, infectious disease experts worked on for weeks … clearly didn’t work right,” Tim Morrison, the director of the ambulatory care team, told residents at the event in a video posted online.

Many saw that as an excuse, especially since hospital leadership had been made aware of the problem on Tuesday—when only five residents made the list—and responded not by fixing the algorithm, but by adding two more residents for a total of seven.

“One of the core attractions of algorithms is that they allow the powerful to blame a black box for politically unattractive outcomes for which they would otherwise be responsible,” Roger McNamee, a prominent Silicon Valley insider turned critic, wrote on Twitter. “But *people* decided who would get the vaccine,” tweeted Veena Dubal, a professor of law at the University of California, Hastings, who researches technology and society. “The algorithm just carried out their will.”

But what exactly was Stanford’s “will”? We took a look at the algorithm to find out what it was meant to do.

How the algorithm works

The slide describing the algorithm came from residents who had received it from their department chair. It is not a complex machine-learning model of the kind often referred to as a “black box,” but a rules-based formula for calculating who would get the vaccine first at Stanford. It considers three categories: “employee-based variables,” which have to do with age; “job-based variables”; and guidelines from the California Department of Public Health. In each category, staff received a certain number of points, for a total possible score of 3.48. Presumably, the higher the score, the higher the person’s priority in line. (Stanford Medical Center did not respond to multiple requests for comment on the algorithm over the weekend.)

The employee variables increase a person’s score linearly with age, and extra points are added for those over 65 or under 25. This gives priority to the oldest and youngest staff, which disadvantages residents and other frontline workers, who are typically in the middle of the age range.

Job variables contribute the most to the overall score. The algorithm counts the prevalence of covid-19 among employees’ job roles and departments in two different ways, though the difference between the two is not clear. The job variables also consider the number of tests taken by each job role as a percentage of the medical center’s total number of tests collected. Neither the residents nor two unaffiliated experts we asked to review the algorithm understood what these criteria meant, and Stanford Medical Center did not respond to a request for comment.

What these factors do not take into account is exposure to patients with covid-19, say residents. That means the algorithm did not distinguish between those who had caught covid from patients and those who got it from community spread—including employees working remotely. And, as first reported by ProPublica, residents were told that because they rotate between departments rather than maintain a single assignment, they lost out on points associated with the departments where they worked.

The algorithm’s third category refers to the California Department of Public Health’s vaccine allocation guidelines. These focus on exposure risk as the single highest factor for vaccine prioritization. The guidelines are intended primarily for county and local governments to decide how to prioritize the vaccine, rather than how to prioritize between a hospital’s departments. But they do specifically include residents, along with the departments where they work, in the highest-priority tier.

It may be that the “CDPH range” factor gives residents a higher score, but still not high enough to counteract the other criteria.
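To make that arithmetic concrete, here is a minimal Python sketch of a rules-based points formula of the kind the slide describes: points from each category are summed, and staff are sorted from highest to lowest score. Only the overall shape comes from the description above (three summed categories, age scored linearly with a bonus for those over 65 or under 25, a higher score meaning an earlier place in line). Every weight, the tier-to-points mapping, the `job_points` stand-in, and the three example staffers are hypothetical, invented for illustration. This is not Stanford’s formula.

```python
from dataclasses import dataclass

# All numeric values below are HYPOTHETICAL placeholders, not Stanford's weights.
AGE_POINTS_PER_YEAR = 0.01  # hypothetical linear age term
AGE_BONUS = 0.5             # hypothetical bonus for staff over 65 or under 25
CDPH_TIER_POINTS = {1: 0.5, 2: 0.25, 3: 0.0}  # hypothetical: tier 1 = highest priority


@dataclass
class Staffer:
    name: str
    age: int
    job_points: float  # hypothetical stand-in for the unclear job-based variables
    cdph_tier: int


def employee_points(age: int) -> float:
    """Employee-based variables: linear in age, plus a bonus at either end of the range."""
    points = AGE_POINTS_PER_YEAR * age
    if age > 65 or age < 25:
        points += AGE_BONUS
    return points


def score(s: Staffer) -> float:
    """Sum the three categories; a higher total means an earlier place in line."""
    return employee_points(s.age) + s.job_points + CDPH_TIER_POINTS[s.cdph_tier]


def rank(staff: list[Staffer]) -> list[Staffer]:
    """Order staff from highest to lowest total score."""
    return sorted(staff, key=score, reverse=True)


staff = [
    # Hypothetical resident: top CDPH tier, but few job points from rotating departments.
    Staffer("resident", age=29, job_points=0.2, cdph_tier=1),
    Staffer("remote physician", age=45, job_points=0.7, cdph_tier=2),
    Staffer("administrator", age=67, job_points=0.6, cdph_tier=3),
]

for s in rank(staff):
    print(f"{s.name}: {score(s):.2f}")
```

Under these invented numbers the administrator ranks first and the resident last: the resident’s extra CDPH points cannot offset a middling age and a low job-based score. That is one way, not necessarily the way, a highest-tier group could end up at the back of the line.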

“Why did they do it that way?”

Stanford tried to factor in a lot more variables than other medical facilities, but Jeffrey Kahn, the director of the Johns Hopkins Berman Institute of Bioethics, says the approach was overcomplicated. “The more there are different weights for different things, it then becomes harder to understand—‘Why did they do it that way?’” he says.

Kahn, who sat on Johns Hopkins’ 20-member committee on vaccine allocation, says his university allocated vaccines based simply on job and risk of exposure to covid-19.

He says that decision was based on discussions that purposefully included different perspectives—including those of residents—and in coordination with other hospitals in Maryland. Elsewhere, the University of California San Francisco’s plan is based on a similar assessment of risk of exposure to the virus. Mass General Brigham in Boston categorizes employees into four groups based on department and job location, according to an internal email reviewed by MIT Technology Review.

“It’s really important [for] any approach like this to be transparent and public … and not something really hard to figure out,” Kahn says. “There’s so little trust around so much related to the pandemic, we cannot squander it.”

Algorithms are commonly used in health care to rank patients by risk level in an effort to distribute care and resources more equitably. But the more variables used, the harder it is to assess whether the calculations might be flawed.

For example, in 2019, a study published in Science showed that a widely used algorithm for distributing care in the US ended up favoring white patients over Black ones. The problem, it turned out, was that the algorithm’s designers assumed that patients who spent more on health care were sicker and needed more help. In reality, higher spenders are also richer, and more likely to be white. As a result, the algorithm allocated less care to Black patients with the same medical conditions as white ones.

Irene Chen, an MIT doctoral candidate who studies the use of fair algorithms in health care, suspects this is what happened at Stanford: the formula’s designers chose variables that they believed would serve as good proxies for a given staffer’s level of covid risk. But they didn’t verify that these proxies led to sensible outcomes, or respond in a meaningful way to the community’s input when the vaccine plan came to light on Tuesday last week. “It’s not a bad thing that people had thoughts about it afterward,” says Chen. “It’s that there wasn’t a mechanism to fix it.”

A canary in the coal mine?

After the protests, Stanford issued a formal apology, saying it would revise its distribution plan.

Hospital representatives did not respond to questions about who they would include in new planning processes, or whether the algorithm would continue to be used. An internal email summarizing the medical school’s response, shared with MIT Technology Review, states that none of the program heads, department chairs, attending physicians, or nursing staff were involved in the original algorithm design. Now, however, some faculty are pushing to have a bigger role, eliminating the algorithm’s results completely and instead giving division chiefs and chairs the authority to make decisions for their own teams.

Other department chairs have encouraged residents to get vaccinated first. Some have even asked faculty to bring residents with them when they get vaccinated, or to delay their shots so that others can go first.

Some residents are bypassing the university health-care system entirely. Nuriel Moghavem, a neurology resident who was the first to publicize the problems at Stanford, tweeted on Friday afternoon that he had finally received his vaccine—not at Stanford, but at a public county hospital in Santa Clara County.

“I got vaccinated today to protect myself, my family, and my patients,” he tweeted. “But I only had the opportunity because my public county hospital believes that residents are critical front-line providers. Grateful.”
