Of course technology perpetuates racism. It was designed that way.

Today the United States crumbles under the weight of two pandemics: coronavirus and police brutality.

Both wreak physical and psychological violence. Both disproportionately kill and debilitate black and brown people. And both are animated by technology that we design, repurpose, and deploy—whether it’s contact tracing, facial recognition, or social media.

We often call on technology to help solve problems. But when society defines, frames, and represents people of color as “the problem,” those solutions often do more harm than good. We’ve designed facial recognition technologies that target criminal suspects on the basis of skin color. We’ve trained automated risk profiling systems that disproportionately identify Latinx people as illegal immigrants. We’ve devised credit scoring algorithms that disproportionately identify black people as risks and prevent them from buying homes, getting loans, or finding jobs.

So the question we have to confront is whether we will continue to design and deploy tools that serve the interests of racism and white supremacy.

Of course, it’s not a new question at all.

Uncivil rights

In 1960, Democratic Party leaders confronted their own problem: How could their presidential candidate, John F. Kennedy, shore up waning support from black people and other racial minorities?

An enterprising political scientist at MIT, Ithiel de Sola Pool, approached them with a solution. He would gather voter data from earlier presidential elections, feed it into a new digital processing machine, develop an algorithm to model voting behavior, predict what policy positions would lead to the most favorable results, and then advise the Kennedy campaign to act accordingly. Pool started a new company, the Simulmatics Corporation, and executed his plan. He succeeded, Kennedy was elected, and the results showcased the power of this new method of predictive modeling.

Racial tension escalated throughout the 1960s. Then came the long, hot summer of 1967. Cities across the nation burned, from Birmingham, Alabama, to Rochester, New York, to Minneapolis, Minnesota, and many more in between. Black Americans protested the oppression and discrimination they faced at the hands of America’s criminal justice system. But President Johnson called it “civil disorder,” and formed the Kerner Commission to understand the causes of “ghetto riots.” The commission called on Simulmatics.

As part of a DARPA project aimed at turning the tide of the Vietnam War, Pool’s company had been hard at work preparing a massive propaganda and psychological campaign against the Vietcong. President Johnson was eager to deploy Simulmatics’s behavioral influence technology to quell the nation’s domestic threat, not just its foreign enemies. Under the guise of what they called a “media study,” Simulmatics built a team for what amounted to a large-scale surveillance campaign in the “riot-affected areas” that captured the nation’s attention that summer of 1967.

Three-member teams went into areas where riots had taken place that summer. They identified and interviewed strategically important black people. They followed up to identify and interview other black residents, in every venue from barbershops to churches. They asked residents what they thought about the news media’s coverage of the “riots.” But they collected data on so much more, too: how people moved in and around the city during the unrest, who they talked to before and during, and how they prepared for the aftermath. They collected data on toll booth usage, gas station sales, and bus routes. They gained entry to these communities under the pretense of trying to understand how news media supposedly inflamed “riots.” But Johnson and the nation’s political leaders were trying to solve a problem. They aimed to use the information that Simulmatics collected to trace information flow during protests to identify influencers and decapitate the protests’ leadership.

They didn’t accomplish this directly. They did not murder people, put people in jail, or secretly “disappear” them.

But by the end of the 1960s, this kind of information had helped create what came to be known as “criminal justice information systems.” They proliferated through the decades, laying the foundation for racial profiling, predictive policing, and racially targeted surveillance. They left behind a legacy that includes millions of black and brown women and men incarcerated.

Reframing the problem

Blackness and black people. Both persist as our nation’s—dare I say even our world’s—problem. When contact tracing first cropped up at the beginning of the pandemic, it was easy to see it as a necessary but benign health surveillance tool. The coronavirus was our problem, and we began to design new surveillance technologies in the form of contact tracing, temperature monitoring, and threat mapping applications to help address it.

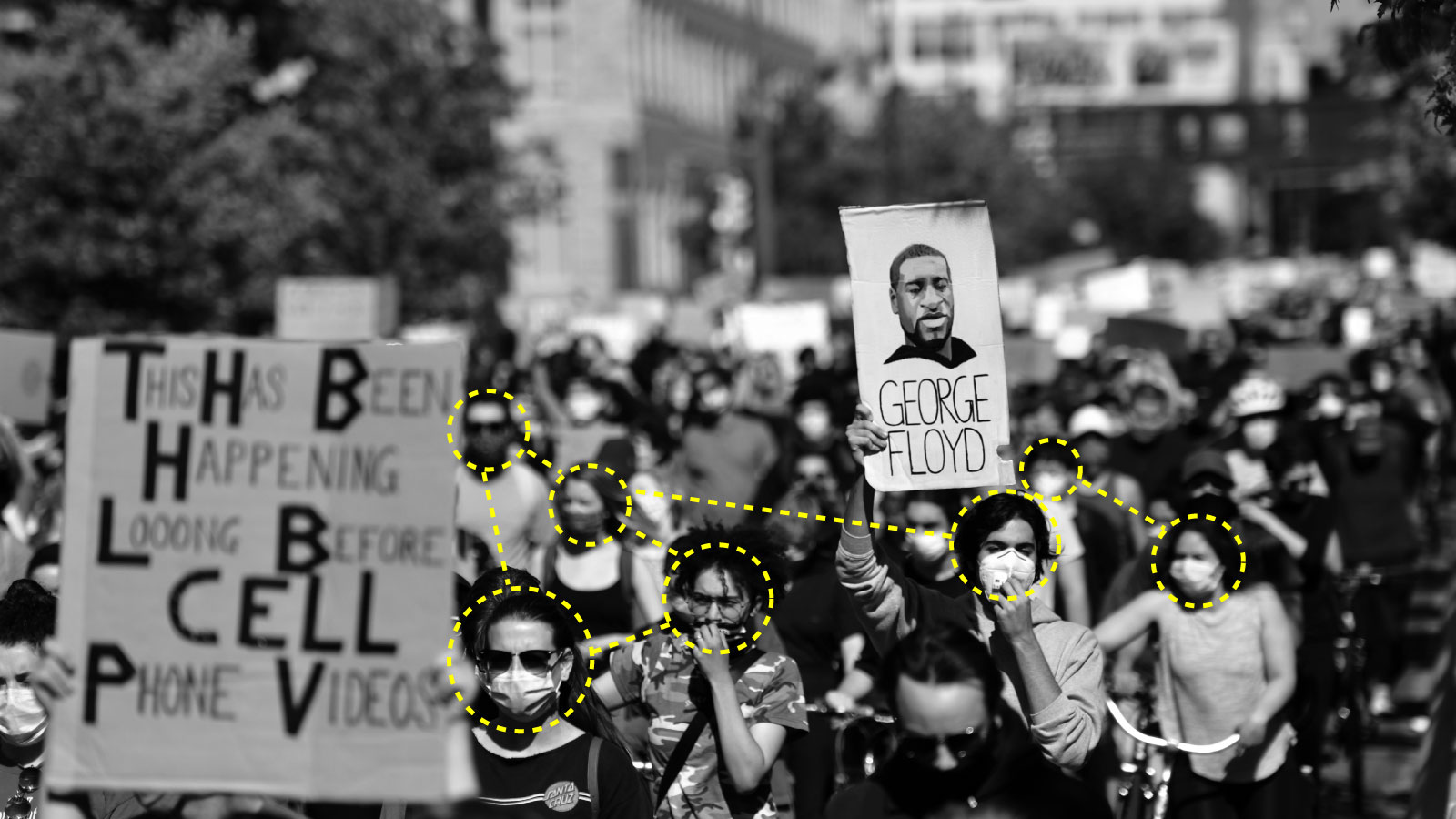

But something both curious and tragic happened. We discovered that black people, Latinx people, and indigenous populations were disproportionately infected and affected. Suddenly, we also became a national problem; we disproportionately threatened to spread the virus. That was compounded when the tragic murder of George Floyd by a white police officer sent thousands of protesters into the streets. When the looting and rioting started, we—black people—were again seen as a threat to law and order, a threat to a system that perpetuates white racial power. It makes you wonder how long it will take for law enforcement to deploy those technologies we first designed to fight covid-19 to quell the threat that black people supposedly pose to the nation’s safety.

If we don’t want our technology to be used to perpetuate racism, then we must make sure that we don’t conflate social problems like crime or violence or disease with black and brown people. When we do that, we risk turning those people into the problems that we deploy our technology to solve, the threat we design it to eradicate.

Charlton McIlwain is a professor of media, culture, and communication at New York University and author of Black Software: The Internet & Racial Justice, From the AfroNet to Black Lives Matter.
