We need mass surveillance to fight covid-19—but it doesn’t have to be creepy

I stop the car when I see him walking slowly down the empty footpath outside our now shuttered building—I know he lives on campus and is far from home. I sent my students away more than a week ago; I think of them as diasporic now, not necessarily remote, but it is still a shock to see him. We talk about his studies, and his fiancée in San Francisco, and how strange this moment in which we find ourselves is—we are at the edges of what language can describe. After one last check-in and the promise to call me if I can help, he says in an awkward voice, “You know I will have to report this.”

The Australian National University (ANU), where I work, is moving quickly in response to covid-19. Our classes have gone online, and we have sent our staff home; we are all navigating a new world of digital intermediation and distance. For the students who remain in the residence halls, locked in a country that has closed its borders and to which airlines no longer fly, it is an ever-changing situation. Keeping them safe is a big priority; there is social distancing, increased cleaning, and staggered access to services. There are rules and prescriptions and the looming reality of daily temperature checks. And apparently there is a contact log in which I will now feature, and which could be turned over to the local health services at a later point.

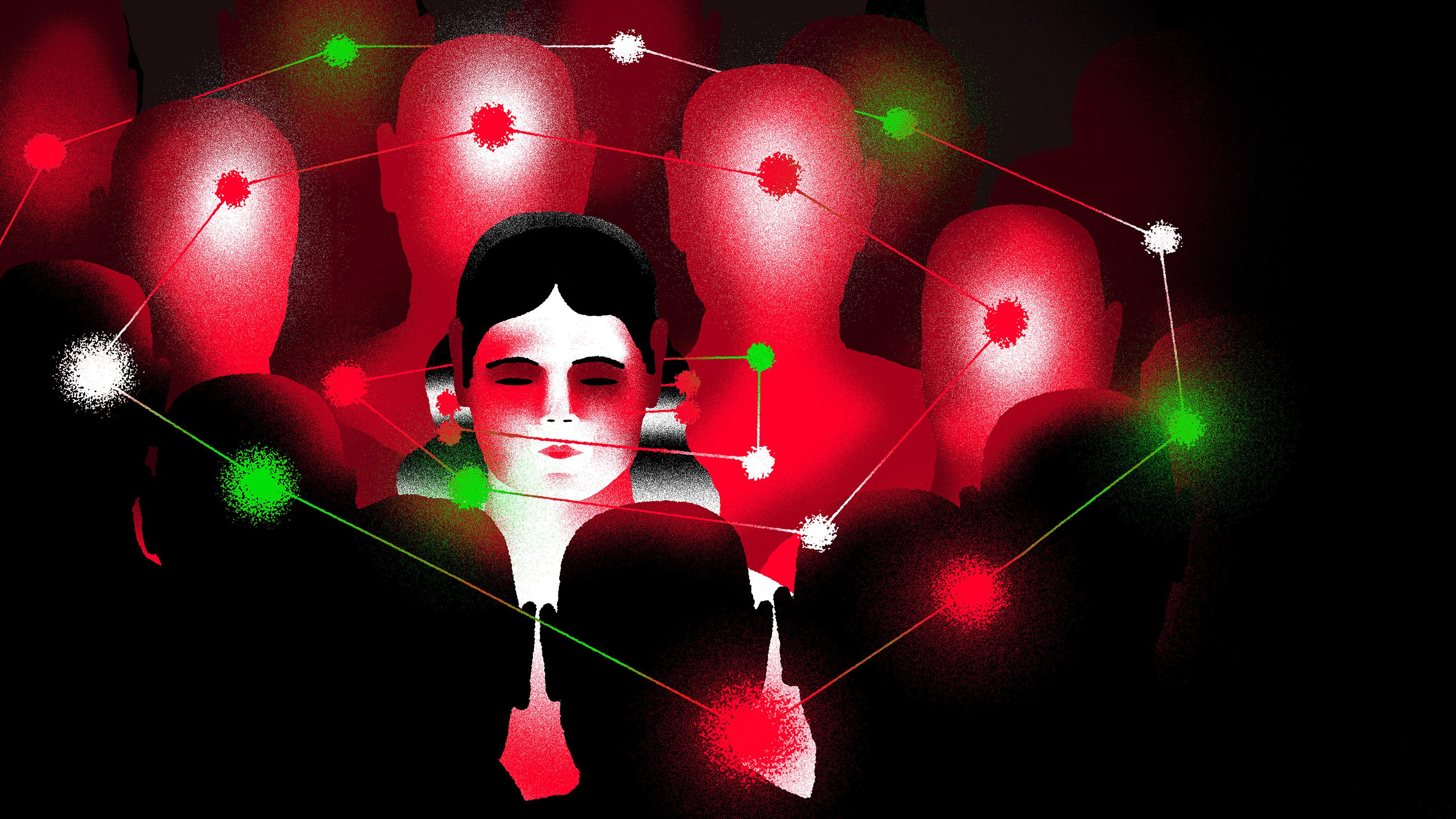

The rigorous use of contact tracing, across digital and physical realms, has been credited with helping limit the spread of covid-19 in a number of places, notably Singapore, Taiwan, and South Korea, as well as Kerala, India. As a methodology, it has a long history of use against diseases from SARS and AIDS to typhoid and the 1918-19 influenza pandemic. In its current instantiations—such as the mobile-phone app that South Koreans exposed to the virus must download so they can be monitored during self-quarantine—it has raised new concerns about surveillance and privacy, and about the trade-offs between health, community well-being, and individual rights. Even here at the ANU, we are trying to find a way to balance it all.

Perhaps we are negotiating new social contracts, with our neighbors, our communities, and our governments, that extend to the role technology plays in responding to a health crisis. And as we negotiate these new contracts, questions inevitably arise about our relationships to the data that exists about us, the sheer abundance of information that we generate, and how it could be used to help us or hurt us.

It is a lot to contemplate. Imagine doing contact tracing on yourself. Do you know where you were yesterday, and with whom? What you were doing? How about a week ago? Two weeks ago? How would you track back? Your calendar? Your in-box? Your credit card receipts or digital wallet? Facebook? Google Maps? Your mass transit card? Your shared services profiles? Your dating app? Your chat apps? Your smart watch? Your camera? Your phone? Would you rely on your memory or someone else’s? Your digital devices; your data; their data? Could you reconstruct it all?

And if you could, what would it mean and how could it be used, and by whom, for what, and for how long? How would it feel to know you were part of someone else’s reconstruction; that you were a trace in their days and weeks? Or to know that a passing moment was now captured, stabilized, stripped of its context, and used to tell a different kind of story—a story not about two people, but about two possible nodes in an epidemic?

And when you knew the arc of the last two weeks, and all its points of intersection and encounter, whom would you feel comfortable telling? Your kids? Your partner? Your parents? Your best friend? Your lover? Your service provider? Your employer? Your teacher? Your doctor? Your neighbors? Your community? Your government? How would you feel if you didn’t have a choice in the disclosure? What if you didn’t even know disclosure had happened?

As a little girl, I visited Port Arthur with my mother. It was a prison camp, built in Tasmania to house the most recalcitrant prisoners sent to Australia during its early colonial period. In 1853 a new prison was built there, modeled on the Eastern State Penitentiary in Philadelphia and strongly influenced by Jeremy Bentham’s ideas of the panopticon, a prison where every inmate can be watched at all times, but never see the watcher—a proto-version of mass surveillance. In Port Arthur, the guards could see each other, and watch the prisoners, through a small keyhole—colloquially known as a judas hole—in each cell door, placed so that no part of the cell was out of its sight. The prisoners could see no one. In the one hour a day they were released from their cells, they were masked and walked in silence in walled, open-air yards. The life of the prisoner was regimented, documented, and constrained; of course, they found ways to resist and subvert the process, but it was a stark existence. The relationships between power, surveillance, and discipline were clear to me even as a child.

Contact tracing has this kind of history too. It was used to identify Mary Mallon, an Irish immigrant cook, as an asymptomatic carrier of typhoid in 1900s New York City. She was repeatedly quarantined and demonized, and survives to this day in the phrase “Typhoid Mary.” It was deployed at scale during World War II to manage the spread of venereal disease by American soldiers in the United Kingdom; the overlays of nationalism, prurient interest in sex, and power dynamics in gender relationships are all highly visible. In the 1980s in Australia, it was used to identify at-risk communities at the start of the AIDS epidemic, and gay men bore the brunt of conservative politics, religious backlash, and stigma.

Against this backdrop, we might need to reevaluate how we think about “contact” (which in the latter two examples meant sexual contact that society disapproved of) and “tracing” (associated with criminal investigations and punishment) and ask: can we strip them of their moral and punitive overlays? We have to break some of the social and cultural associations of the past to use these tactics most effectively in the future.

So I guess the question is, can we imagine contact tracing, and other forms of data revelation, that don’t feel like a judas hole?

Part of the answer lies in how we think about the basis of contact tracing—data, and its collection. Of course, there are already long-standing worries about the ways large corporations and governments use and control data. There will surely be questions: Who can use the data, or own it? Can data from sources that were originally supposed to stay separate, such as health services and the police, be combined? Will decisions about who gets access to your data be automated, or will humans review them? Will your diagnoses and antibody statuses be shared with other countries when you travel, or will you be tested at the border? Will at-risk people be targeted, and by whom? And let’s not forget that all of this is happening within larger systems and contexts.

Work is already under way in multiple countries on how to better regulate data collection, prevent algorithmic bias, and limit the use of mass surveillance (including facial recognition technology): it will clearly be relevant in answering such questions. So will the regulations and standards currently emerging—mostly from Europe—on privacy, the uses of personal data, and algorithmically enhanced decision-making. And it all needs to happen, as a friend of mine has taken to reminding me, at the speed of the virus—which is to say, very quickly indeed.

However, there is more to unpicking the potential panopticon than merely implementing technical and legal constraints on who controls your data. We might also need to think differently about why the data is being collected, and to what end.

Perhaps we can start by differentiating between three distinct purposes for contact tracing: one centered on public health, another on patients, and the last on citizens. All are necessary; all are different.

Public health is the most obvious focus. This is the sense in which countries like South Korea and Singapore have been doing contact tracing for the coronavirus, as well as the attendant medical interventions—notification, disclosure, registration, isolation, treatment. It is about helping make the best use of finite resources in the name of broader public health: here, contact tracing is how you might contain an outbreak before it gets too big.

The patient-centered purpose requires us to modify our notion of contact tracing to something that resembles a patient journey. Here the focus could be helping someone decide whether and how to seek care, and guiding health-care providers to the appropriate treatment. As one physician put it to me recently, it’s about helping patients “triage their worry”—work out when they should be concerned and, equally important, when they should not. Early examples are being trialed in Massachusetts and elsewhere.

A focus on citizens, however, is something quite different. Can we imagine community contact tracing? It could be a way of identifying hot spots without identifying individuals—a repository of anonymized traces and patterns, or decentralized, privacy-preserving proximity tracing. This data might help researchers or government agencies create community-level strategies—perhaps changing the layout of a park to reduce congestion, for instance. It might help us see our world a little differently and make different choices—a collective curve flattening. We could create open-source solutions or locally based tools.
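To make the idea of hot spots without individuals a little more concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a description of any deployed system: the function name `hot_spot_counts`, the grid size `cell_deg`, and the threshold `k_min` are all hypothetical. Location pings are snapped to coarse grid cells, distinct devices are counted per cell, and any cell with too few devices is suppressed so that sparse areas do not point to a particular person.

```python
import math
from collections import defaultdict


def hot_spot_counts(pings, cell_deg=0.01, k_min=5):
    """Count distinct devices per coarse grid cell, hiding sparse cells.

    pings    -- iterable of (device_id, latitude, longitude) tuples
    cell_deg -- grid cell size in degrees (hypothetical default)
    k_min    -- minimum distinct devices for a cell to be reported
                (hypothetical default)
    """
    devices_per_cell = defaultdict(set)
    for device_id, lat, lon in pings:
        # Snap each ping to a coarse cell; exact coordinates are discarded.
        cell = (math.floor(lat / cell_deg), math.floor(lon / cell_deg))
        devices_per_cell[cell].add(device_id)

    # Report counts only, and only for cells busy enough to hide individuals.
    return {
        cell: len(devices)
        for cell, devices in devices_per_cell.items()
        if len(devices) >= k_min
    }
```

The output carries no device identifiers, only cell coordinates and counts. Coarse aggregation with a threshold is a starting point rather than a guarantee of anonymity; a real community tool would need stronger protections and the kind of public conversation this essay argues for.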

In all three contexts, we need to considerably expand our understanding of the data, platforms, and devices that could be useful. Could mobile-phone data identify places that need help in achieving better social distancing? Could smart thermometers help identify potential hot spots? Is community-level data as useful as personal data for mapping an epidemic and the responses to it? We would also need to shift our sense-making around data: the issue we must grapple with isn’t just personal data anymore, or the ideas of privacy we have been contesting for years. It is also intimate and shared data, and data that implicates others. It might be about the patterns, not the individuals at all. How this data is stored and accessed, and by whom, will also vary depending on the tools available for accessing it. There will be many decisions—and, one hopes, many conversations.

The speed of the virus and the response it demands shouldn’t seduce us into thinking we need to build solutions that last forever. There’s a strong argument that much of what we build for this pandemic should have a sunset clause—in particular when it comes to the private, intimate, and community data we might collect. The decisions we make to opt in to data collection and analysis now might not resemble the decisions we would make at other times. Creating frameworks that allow a change in values and trade-off calculations feels important too.

There will be many answers and many solutions, and none will be easy. We will trial solutions here at the ANU, and I know others will do the same. We will need to work out technical arrangements, update regulations, and even modify some of our long-standing institutions and habits. And perhaps one day, not too long from now, we might be able to meet in public, in a large gathering, and share what we have learned, and what we still need to get right—for treating this pandemic, but also for building just, equitable, and fair societies with no judas holes in sight.

Genevieve Bell is director of the Autonomy, Agency, and Assurance Institute at the Australian National University and a senior fellow at Intel.
