Farewell to file clerks

Today, the web runs so smoothly that most people take it for granted. Every time you fire off an e-mail to a web-based account or search for something online, standard protocols allow computers around the world to relay the information seamlessly.

But the idea that the web would be built on standard protocols—let alone protocols developed in an open, collaborative way—was by no means a foregone conclusion back when the web was an unexplored digital frontier.

In the 1970s, some young MIT computer scientists working in what was known as the Dynamic Modeling Group (DMG) were focused on the heady task of inventing new computer technologies for a world that didn’t yet know it needed them. Managed by Albert Vezza, the group worked on such things as developing the programming language MDL (which several of them would use to write the legendary computer game Zork) and designing a computer system that translated Morse code into readable text. But automating the work of telegraph operators was a small part of a much bigger vision: using technology to transform how virtually every job in an office gets done.

Vezza and his team saw office work as an inefficient flurry of paper: orders and memoranda were dictated and typed up, sent off to the relevant departments, and then filed away. Computers, they realized, could greatly simplify such laborious communication. In June 1976, Vezza wrote to MIT’s patent office, describing his team’s idea for an “office communication system [that] is not a paging system … but rather a mechanized means for creating, viewing, sending, storing in a computer system, and retrieval of memoranda and letter-like, written communications.”

Later that year, he spoke at a National Bureau of Standards symposium about his team’s effort to develop what he called “a user interface that is understandable to those unsophisticated in computer lore.” MIT had been a node on Arpanet, the first incarnation of the internet, since 1970, but Vezza’s group was thinking ahead to a day when using computers to share information wasn’t just the purview of computer scientists. He went on to describe electronic messaging services that “automate the secretary’s and the file clerk’s functions” and “transcend the boundary between the office environment and the delivery or postal system.”

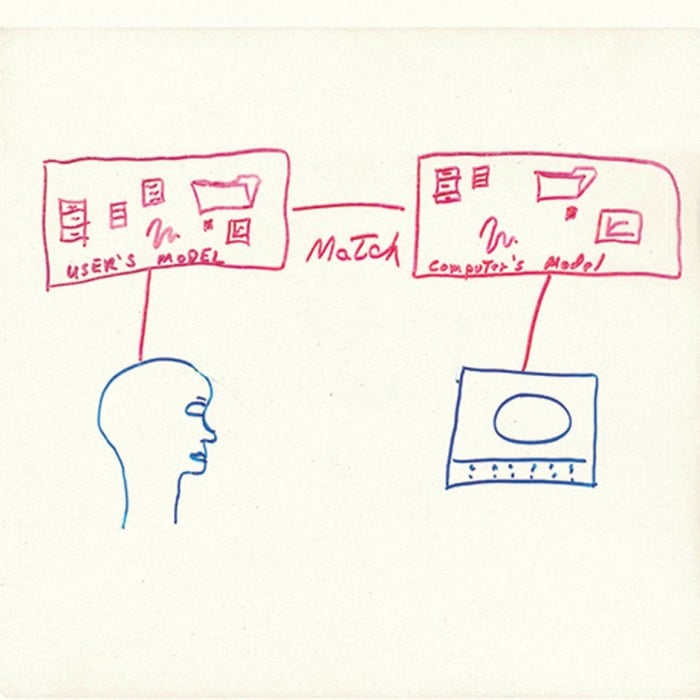

In 1977, the same year that TCP—the internet protocol that allows computers on different networks to communicate—was being tested on Arpanet, Vezza gave a talk at the first MIT Alumni Summer College. One clear acetate slide from his presentation included a hand drawing showing how a typical office, filled with files, folders, and drawers, could be replicated in a hypothetical environment shared by computers and their users—what we think of today as “cyberspace.”

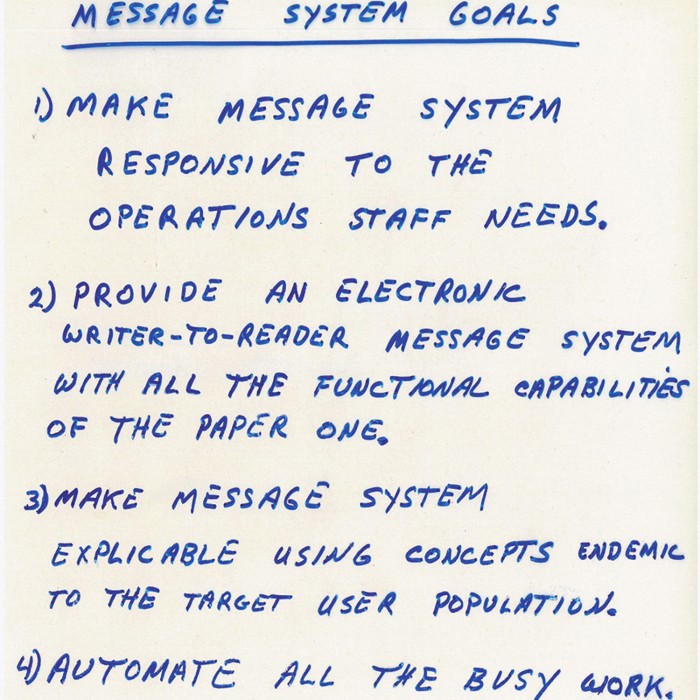

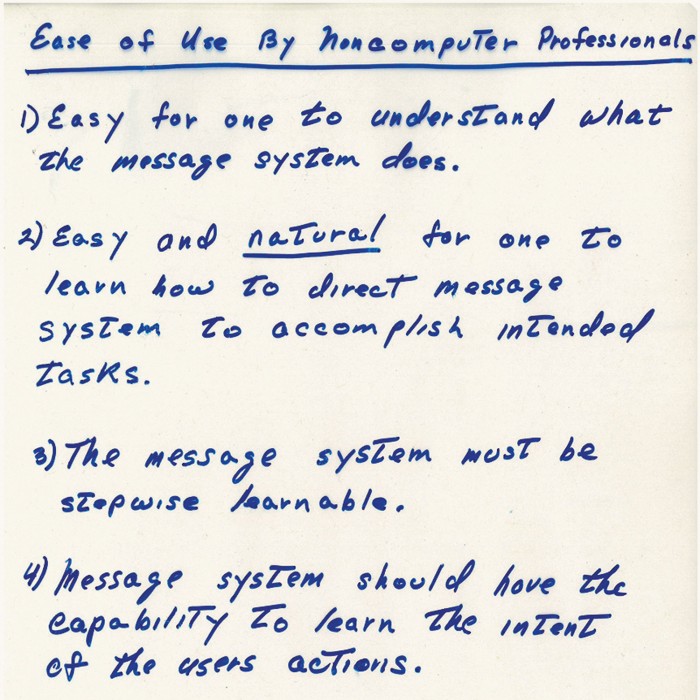

Al Vezza used these slides in his 1977 talk about the Dynamic Modeling Group’s vision for a computerized message system.

On another slide, Vezza listed the goals of what he called a “Message System,” or “an electronic writer-to-reader message system with all the functional capabilities of the paper one.” It should “automate all the busy work,” he said. Another slide stressed the importance of making sure the system could be used by what he called “noncomputer professionals.” It had to be “easy for one to understand what the message system does” and “easy and natural for one to learn how to direct message system to accomplish intended tasks.” He underscored the word “natural.” And he included an even loftier goal: “Message system should have the capability to learn the intent of the user’s actions.”

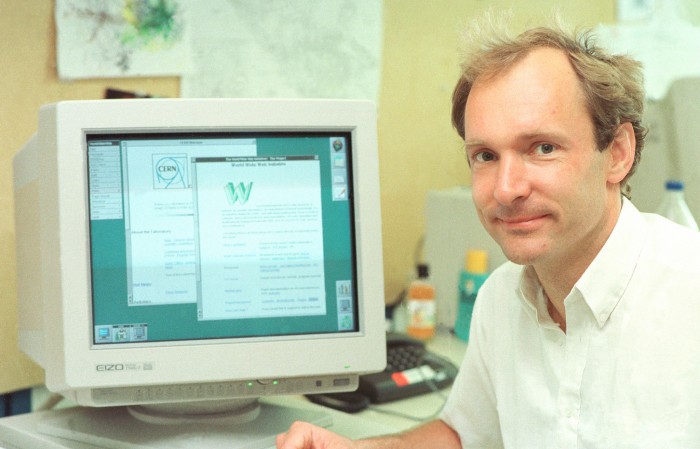

Of course, Vezza and the Dynamic Modeling Group weren’t the only ones with this vision. In 1990, halfway across the globe, a young engineer at the European Organization for Nuclear Research (CERN), Tim Berners-Lee, was using his hypertext markup language, or HTML, to build the world’s first website. Then as now, CERN was a behemoth of an institution with over 3,000 staff members and more than 6,000 fellows, associates, and students working on an enormous range of projects—a perfect place for an interconnected electronic workspace. So Berners-Lee came up with a straightforward way to share information using the internet. And he gave away the code so anyone could use it.

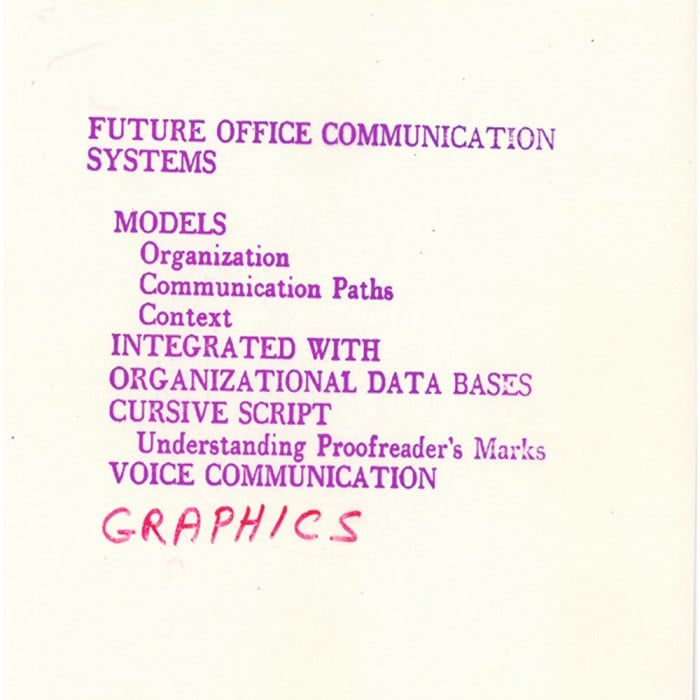

More of the slides that Al Vezza used in his 1977 talk.

When Vezza, by then associate director of MIT’s Laboratory for Computer Science, first encountered Berners-Lee’s work, he realized its potential to open the internet’s doors to the public at large. “I had bumped into the web and was playing with a browser before I knew who Berners-Lee was,” he recalls. “I realized it made life much easier in using the net.”

He also saw potential for trouble if such a network were to be developed by uncoordinated groups—or, worse yet, warring factions using competing standards. “The web could have fragmented,” he recalls. He points to the mobile-phone market as an example of what could have been: “Japan, Korea, Europe, and the US use different signaling standards, presumably to favor their domestic industries. Could the web have gone the same way? Luckily, we’ll never know.”

Early on, it was clear that a cooperative approach was needed. This wasn’t uncharted territory for MIT: after creating the X graphical user interface in 1984, Vezza’s colleagues established the nonprofit MIT X Consortium to develop open standards for its use. So when someone suggested that MIT launch a similar group to hammer out web standards and honor Berners-Lee’s concept of developing the web as an open platform, Vezza raised his hand.

“I said I had no clue how to do it, and we haven’t got anybody here who knows,” he recalls. Then he laughs. “I’m sure they were setting me up, but they’ll never admit it, and Hal Abelson said, ‘What a great idea! Let’s go off and get Tim Berners-Lee!’”

So that’s what Vezza did.

Berners-Lee saw the potential of the system he had created, and he wanted to develop it further. But by the 1990s CERN was ramping up to build the Large Hadron Collider—arguably the largest science experiment in history—and couldn’t spare resources for developing the web. So Vezza talked Berners-Lee into coming to MIT, where the two of them cofounded the World Wide Web Consortium, or W3C, with Berners-Lee as director and Vezza as chair. Under their leadership, what started as a tentative collaboration between MIT and CERN has evolved into a group that spans hundreds of technology companies, laboratories, and research groups around the world. Over the years, W3C members have worked together to flesh out the standards of HTTP and develop others that are now as ubiquitous as they are essential.

By the time he retired from MIT and the W3C in 1996, Vezza had seen an idea grow into a truly global network of computers. More than a singular system of virtual drawers, folders, and desks, it has expanded into nearly all of our living spaces—from our bedrooms to our cars—and profoundly changed how we share and access information.
