A mathematical model captures the political impact of fake news

Policymakers and opinion formers the world over are struggling to come to terms with the nature of fake news and its implications for society, democracy, and even truth itself.

However, the debate is clouded by disagreements over how to define the phenomenon. Without a proper definition, policymakers, journalists, and ordinary people struggle to tackle it. So a clear and powerful way to think about fake news that allows for objective analysis is desperately needed.

Enter Dorje Brody from the University of Surrey and David Meier from Brunel University, both in the UK. These guys have used the mathematical theory of communication to devise a model that simulates the way fake news influences referendums and elections, and that points to ways of mitigating its effects.

First some background. The fundamental problem of communication is to reproduce at one point in the universe a message created at another point. The problem is made more difficult by the fact that there is always noise that distorts this message—0s get flipped into 1s, b’s sound like d’s, and smoke signals get, well, blown away.

So the receiver of any message needs a strategy to deal with this noise. That turns out to be entirely possible in many situations. The mathematician and engineer Claude Shannon proved that a message can be reproduced more or less exactly, provided it is sent at a rate below a limit set by the level of noise. The key idea here is that fake news can be treated mathematically like a special kind of noise.
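To make the idea of beating noise concrete, here is a minimal sketch in Python. It assumes the simplest possible strategy, repeating every bit an odd number of times and taking a majority vote, over a channel that flips each bit independently. This is far cruder than the codes Shannon's result promises, and the function names and parameters are purely illustrative.

```python
import random


def encode_repetition(bits, n_repeats):
    """Send every bit n_repeats times in a row."""
    return [b for b in bits for _ in range(n_repeats)]


def send_through_noisy_channel(bits, flip_prob, rng):
    """Flip each bit independently with probability flip_prob."""
    return [b ^ 1 if rng.random() < flip_prob else b for b in bits]


def decode_repetition(received, n_repeats):
    """Majority vote over each group of n_repeats bits (n_repeats should be odd)."""
    return [
        1 if sum(received[i:i + n_repeats]) > n_repeats // 2 else 0
        for i in range(0, len(received), n_repeats)
    ]


def error_rate(n_repeats, flip_prob, n_bits=2000, seed=0):
    """Fraction of message bits that are wrong after decoding."""
    rng = random.Random(seed)
    message = [rng.randint(0, 1) for _ in range(n_bits)]
    sent = encode_repetition(message, n_repeats)
    received = send_through_noisy_channel(sent, flip_prob, rng)
    decoded = decode_repetition(received, n_repeats)
    return sum(m != d for m, d in zip(message, decoded)) / n_bits


if __name__ == "__main__":
    for n in (1, 3, 15):
        print(n, error_rate(n, flip_prob=0.1))
```

With no repetition, roughly one bit in ten arrives wrong at a 10 percent flip rate. Adding repeats pushes the error rate toward zero, at the cost of sending far more bits. If the flip probability reaches 50 percent, no amount of repetition helps, which is the flavor of the limit Shannon identified.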

Brody and Meier define fake news as information that is inconsistent with factual reality. In this sense, it is noise that needs to be removed before a signal can be properly interpreted. However, this noise has special properties in that it is biased in a specific way, unlike random noise.

This means that the flow of information contains several components. There is the factually correct message plus various factually incorrect details and rumors, which are random noise. But there are also deliberately biased messages that correspond to fake news. The key to interpreting a signal is to strip away both the random noise and the bias to leave the unadulterated message.

This is no easy task. But it turns out that mathematicians have powerful tools suited to exactly this purpose. Developed in the 1950s and 1960s, filtering theory is a branch of communication theory that aims to filter out noise in communication channels. Crucially, this theory treats bias and random noise in different ways to get at the underlying signal.
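Why bias and random noise need different treatment can be seen in a toy example. The sketch below is not filtering theory proper, which handles signals and distortions that change over time. It simply assumes a fixed true value, a fixed bias, and Gaussian noise, with hypothetical function names chosen for illustration.

```python
import random
import statistics


def noisy_reports(true_value, bias, noise_sd, n, seed=0):
    """Each report is the truth, plus a constant bias, plus random noise."""
    rng = random.Random(seed)
    return [true_value + bias + rng.gauss(0.0, noise_sd) for _ in range(n)]


def estimate_ignoring_bias(reports):
    """Averaging cancels the random noise but leaves the bias in place."""
    return statistics.fmean(reports)


def estimate_removing_bias(reports, bias):
    """Subtracting a known bias first, then averaging, recovers the truth."""
    return statistics.fmean([r - bias for r in reports])


if __name__ == "__main__":
    reports = noisy_reports(true_value=1.0, bias=0.5, noise_sd=1.0, n=10000)
    print(estimate_ignoring_bias(reports))
    print(estimate_removing_bias(reports, bias=0.5))
```

Averaging many reports washes out the random component, but the first estimate still settles on the truth plus the bias. Only the second, which models the bias explicitly, homes in on the true value.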

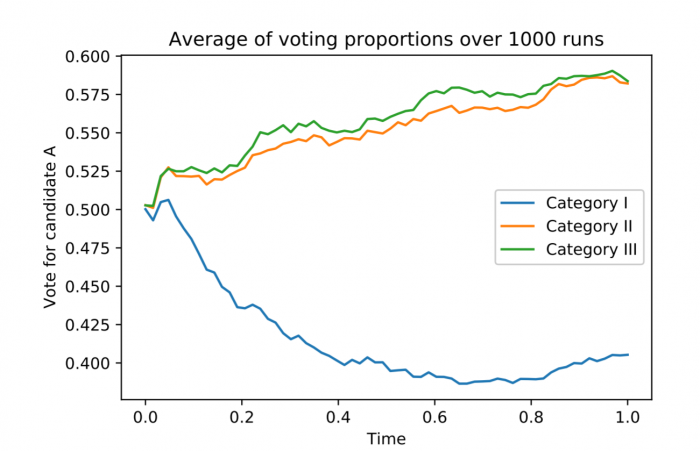

Brody and Meier use this idea to model the way voters interpret news. The researchers say voters fall into three categories. First are those who are unaware of fake news and so treat it like ordinary noise. Being unaware that news could be fake, they are entirely confident in their views. “This category are most vulnerable to exposure to fake news,” say the researchers.

The second group are those who are aware of fake news but do not know how to separate it from noise. This group is less susceptible to fake news but is less confident in its opinions because of the uncertainty that fake news creates. “The people in this category are considerably more aware of the uncertainties in their estimates,” say Brody and Meier.

And finally, there are those voters who can spot fake news and immediately remove it from their calculations. These people are confident in their views because they are unaffected by the bias fake news introduces. However, Brody and Meier think of this group as an idealization. “After all, it is an almost insurmountable task for any given individual to perfectly identify which items of news are fake and which ones are not,” they point out.

They go on to simulate an election in which these groups of people are fed a series of news items that are contaminated by random noise and by fake news. They run the simulation over 1,000 times to see how fake news influences voting preferences.
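A simulation of this kind can be sketched in a few dozen lines. What follows is a simplified stand-in, not the researchers' actual model. It assumes a yes-or-no truth fixed at 0, news items arriving in discrete steps with Gaussian noise, and fake news of known strength `mu` that pushes toward the false answer from a random release time onward. All names and parameter values are illustrative assumptions.

```python
import math
import random


def sigmoid(z):
    """Numerically safe logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def logsumexp(values):
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values))


def posterior_unaware(obs, sigma, sd):
    """Group 1: treats every item as signal plus ordinary noise."""
    llr = sum((sigma * y - 0.5 * sigma ** 2) / sd ** 2 for y in obs)
    return sigmoid(llr)


def posterior_perfect(obs, fake, sigma, sd):
    """Group 3: knows the fake component exactly and subtracts it."""
    return posterior_unaware([y - f for y, f in zip(obs, fake)], sigma, sd)


def posterior_aware(obs, sigma, mu, sd):
    """Group 2: knows fake news exists, but not when it was released.

    Assumes a uniform prior over the release time and averages over it.
    """
    steps = len(obs)
    log_like = {0: [], 1: []}
    for x in (0, 1):
        for tau in range(steps):
            log_like[x].append(sum(
                -0.5 * ((y - sigma * x - (mu if t >= tau else 0.0)) / sd) ** 2
                for t, y in enumerate(obs)
            ))
    return sigmoid(logsumexp(log_like[1]) - logsumexp(log_like[0]))


def simulate(runs=1000, steps=30, sigma=0.5, mu=1.5, sd=1.0, seed=0):
    """Average final belief in the FALSE answer (truth is 0) per group."""
    rng = random.Random(seed)
    truth = 0
    totals = {"unaware": 0.0, "aware": 0.0, "perfect": 0.0}
    for _ in range(runs):
        tau = rng.randrange(steps)  # random release time of the fake news
        fake = [mu if t >= tau else 0.0 for t in range(steps)]
        obs = [sigma * truth + rng.gauss(0.0, sd) + f for f in fake]
        totals["unaware"] += posterior_unaware(obs, sigma, sd)
        totals["aware"] += posterior_aware(obs, sigma, mu, sd)
        totals["perfect"] += posterior_perfect(obs, fake, sigma, sd)
    return {group: total / runs for group, total in totals.items()}


if __name__ == "__main__":
    print(simulate())
```

Calling `simulate()` returns each group's average final belief in the false answer, so lower is better. In this setup the unaware voter always ends up more convinced of the falsehood than the perfect one, because the fake news adds a push that only the unaware voter fails to subtract.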

The results make for interesting reading. It turns out, not unexpectedly, that voters in the first group are easily manipulated by fake news, while those in the third group are unaffected by it.

However, the second group is the most interesting. Voters in this category are aware of the existence of fake news, but do not know the timing of its release. So they tend to overcompensate for the possibility that the information they are receiving may be contaminated. However, once the fake news has been released, those in this group do well at removing its influence.

“One can interpret this as an indication that mere knowledge of the possibility of fake news is already a powerful antidote to its effects,” say Brody and Meier.

That provides some hope that the effect of fake news can be mitigated.

The work leaves some important questions unanswered. A big unknown, of course, is the proportion of voters in the first category and how, or even whether, it is possible to move them to the second category.

Another important issue is the nature of factual reality. Many observers will question whether it is reasonable to assume that an objective factual reality exists, particularly when it comes to political issues and future-gazing.

And even if there is an objective reality, does the process of communication help us understand its true nature or merely help us agree on what it might be?

Like many before them, Brody and Meier are not yet able to help with that thorny conundrum.

Ref: arxiv.org/abs/1809.00964 : How To Model Fake News
