The tricks propagandists use to beat science

Back in the 1950s, health professionals became concerned that smoking was causing cancer. Then, in 1952, the popular magazine Reader’s Digest published “Cancer by the Carton,” an article about the increasing body of evidence that proved it. The article caused widespread shock and media coverage. Today the health dangers of smoking are clear and unambiguous.

And yet smoking bans have been slow to come into force, most having appeared some 40 years or more after the Reader’s Digest article.

The reason for this sluggishness is easy to see in hindsight and is described in detail by Naomi Oreskes and Erik Conway in their 2010 book Merchants of Doubt. There the authors explain how the tobacco industry hired a public relations firm to manufacture controversy surrounding the evidence and cast doubt on its veracity.

Together, tobacco companies and the PR firm created and funded an organization called the Tobacco Industry Research Committee to produce results and opinions that contradicted the view that smoking kills. This led to a false sense of uncertainty and delayed policy changes that would otherwise have restricted sales.

The approach was hugely successful for the tobacco industry at the time. In the same book, Oreskes and Conway show how a similar approach has influenced the climate change debate. Again, the scientific consensus is clear and unambiguous but the public debate has been deliberately muddied to create a sense of uncertainty. Indeed, Oreskes and Conway say that some of the same people who dreamt up the tobacco strategy also worked on undermining the climate change debate.

That raises an important question: How easy is it for malicious actors to distort the public perception of science?

Today we get an answer thanks to the work of James Owen Weatherall and Cailin O'Connor at the University of California, Irvine, and Justin Bruner at the Australian National University in Canberra, who have created a computer model of the way scientific consensus forms and how this influences the opinion of policy makers. The team studied how easily these views can be distorted and determined that today it is straightforward to distort the perception of science with techniques that are even more subtle than those used by the tobacco industry.

The original tobacco strategy involved several lines of attack. One of these was to fund research that supported the industry and then publish only the results that fit the required narrative. “For instance, in 1954 the TIRC distributed a pamphlet entitled ‘A Scientific Perspective on the Cigarette Controversy’ to nearly 200,000 doctors, journalists, and policy-makers, in which they emphasized favorable research and questioned results supporting the contrary view,” say Weatherall and co, who call this approach biased production.

A second approach promoted independent research that happened to support the tobacco industry’s narrative. For example, it supported research into the link between asbestos and lung cancer because it muddied the waters by showing that other factors can cause cancer. Weatherall and his team call this approach selective sharing.

Weatherall and co investigated how these techniques influence public opinion. To do this they used a computer model of the way the scientific process influences the opinion of policy makers.

This model contains three types of actors. The first is scientists who come to a consensus by carrying out experiments and allowing the results, and those from their peers, to influence their view.

Each scientist begins with the goal of deciding which of two theories is better. One of these theories is based on “action A,” which is well understood and known to work 50 percent of the time. This corresponds to theory A.

By contrast, theory B is based on an action that is poorly understood. Scientists are uncertain about whether or not it is better than A. Nevertheless, the model is set up so that theory B is actually better.

Scientists can make observations using their theory, and crucially, these have probabilistic outcomes. So even if theory B is the better of the two, some results will back theory A.
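A minimal sketch of such probabilistic outcomes, assuming each experiment is a batch of independent trials of action B that each succeed slightly more often than action A's 50 percent. The 0.05 edge, the 10 trials per experiment, and the function name `run_experiment` are illustrative assumptions, not values taken from the paper.

```python
import random

P_A = 0.5             # action A works 50 percent of the time (from the article)
EPSILON = 0.05        # assumed edge of action B over action A
P_B = P_A + EPSILON   # assumed true success rate of action B
TRIALS = 10           # assumed number of trials per experiment


def run_experiment(success_rate: float, trials: int, rng: random.Random) -> int:
    """Return the number of successes in `trials` independent tries."""
    return sum(rng.random() < success_rate for _ in range(trials))


rng = random.Random(0)
results = [run_experiment(P_B, TRIALS, rng) for _ in range(1000)]

# An experiment "backs theory A" here if B succeeded in fewer than half the trials.
misleading = sum(1 for successes in results if successes < TRIALS / 2)
print(f"{misleading} of {len(results)} experiments on B made it look worse than A")
```

Even though B is better by construction, a sizable minority of these small experiments points the other way, and that is the raw material a propagandist can later exploit.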

At the beginning of the simulation, each scientist is given a random credence, a number between 0 and 1 that represents how strongly they believe theory B is the better one. For example, a scientist with a 0.7 credence believes there is a 70 percent chance that theory B is correct and, because that is more than 50 percent, applies theory B in the next round of experiments.

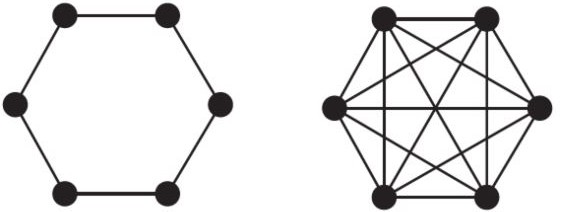

After each round of experiments, the scientists update their views based on the results of their experiment and the results of scientists they are linked to in the network. In the next round, they repeat this process and update their beliefs again, and so on.
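One way to sketch this update is with Bayes' rule, assuming each scientist weighs just two hypotheses: that action B succeeds slightly more than half the time, or slightly less. The 0.05 margin and the function names are assumptions made for illustration, and the article does not spell out the exact update rule.

```python
def update_credence(credence: float, successes: int, trials: int,
                    epsilon: float = 0.05) -> float:
    """Return the posterior belief that B is better after one experiment.

    Assumes two rival hypotheses: B succeeds with probability 0.5 + epsilon
    (B is better) or 0.5 - epsilon (B is worse).
    """
    p_better = 0.5 + epsilon
    p_worse = 0.5 - epsilon
    failures = trials - successes
    # The binomial coefficient is the same under both hypotheses, so it cancels.
    like_better = p_better ** successes * (1 - p_better) ** failures
    like_worse = p_worse ** successes * (1 - p_worse) ** failures
    weighted = credence * like_better
    return weighted / (weighted + (1 - credence) * like_worse)


def update_on_round(credence: float, round_results: list[int], trials: int) -> float:
    """Apply the update for a scientist's own result and each linked peer's result."""
    for successes in round_results:
        credence = update_credence(credence, successes, trials)
    return credence


# A scientist at 0.7 sees their own result (7 of 10) and two peers' results.
print(update_on_round(0.7, [7, 4, 6], trials=10))
```

Under these assumptions a result with more successes than failures raises the credence, a result with fewer lowers it, and an even split leaves it unchanged.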

The simulation stops when all scientists believe one theory or the other or when the belief in one theory reaches some threshold level. In this way, Weatherall and co simulate the way that scientists come to a consensus view.
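The whole loop, including the stopping rule, might be sketched as follows. This is a simplified illustration, not the team's code: it assumes every scientist is linked to every other, that only scientists who currently favor B run experiments on it, and that 0.99 is the threshold for consensus. All constants and names are hypothetical.

```python
import random

EPSILON = 0.05        # assumed edge of theory B over theory A
P_B = 0.5 + EPSILON   # assumed true success rate of action B
TRIALS = 10           # assumed trials per experiment
THRESHOLD = 0.99      # assumed credence that counts as settled belief in B


def update(credence: float, successes: int, trials: int) -> float:
    """Bayes' rule for 'B succeeds at 0.5 + eps' versus 'B succeeds at 0.5 - eps'."""
    failures = trials - successes
    like_better = P_B ** successes * (1 - P_B) ** failures
    like_worse = (1 - P_B) ** successes * P_B ** failures
    weighted = credence * like_better
    return weighted / (weighted + (1 - credence) * like_worse)


def simulate(n_scientists: int = 10, max_rounds: int = 1000, seed: int = 1):
    rng = random.Random(seed)
    credences = [rng.random() for _ in range(n_scientists)]
    for round_number in range(max_rounds):
        if all(c > THRESHOLD for c in credences):
            return "B", round_number, credences
        if all(c <= 0.5 for c in credences):
            # Nobody tests B any more, so no new evidence can arrive.
            return "A", round_number, credences
        # Only scientists who currently favor B gather data on it.
        results = [
            sum(rng.random() < P_B for _ in range(TRIALS))
            for c in credences if c > 0.5
        ]
        # Fully connected network: everyone updates on every result.
        for successes in results:
            credences = [update(c, successes, TRIALS) for c in credences]
    return "undecided", max_rounds, credences


outcome, rounds, final = simulate()
print(outcome, rounds)
```

The second stopping branch captures how a community can lock in the worse theory: once no one is testing B, nothing can change anyone's mind.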

But how does this process influence policy makers? To find out, Weatherall and his team introduced a second group of people into the model—the policy makers—who are influenced by the scientists (but do not influence the scientists themselves). Crucially, policy makers do not listen to all the scientists, only a subset of them.

The policy makers start off with a view and update it after each round, using the opinions of the scientists they listen to.

But the key focus of the team’s work is how a propagandist can influence the policy makers’ views. So Weatherall and co introduce a third actor into this model. This propagandist observes all the scientists and communicates with all the policy makers with the goal of persuading them that the worse theory is correct (in this case, theory A). The propagandist does this by seeking out only results that suggest theory A is correct and sharing these with the policy makers.

The propagandist can work in two ways that correspond to biased production or selective sharing. In the first, the propagandist uses an in-house team of scientists to produce results that favor theory A. In the second, the propagandist simply cherry-picks those results from independent scientists that happen to favor theory A.
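Selective sharing can be sketched by comparing one policy maker's belief with and without the propagandist's extra messages. The setup is an illustrative assumption rather than the paper's exact protocol: all ten scientists test action B every round, the policy maker hears from just one of them, and the propagandist forwards every result in which B succeeded in fewer than half the trials, even if the policy maker has already seen it.

```python
import random

EPSILON = 0.05        # assumed edge of theory B over theory A
P_B = 0.5 + EPSILON   # assumed true success rate of action B
TRIALS = 10           # assumed trials per experiment


def update(credence: float, successes: int, trials: int) -> float:
    """Bayes' rule for 'B succeeds at 0.5 + eps' versus 'B succeeds at 0.5 - eps'."""
    failures = trials - successes
    like_better = P_B ** successes * (1 - P_B) ** failures
    like_worse = (1 - P_B) ** successes * P_B ** failures
    weighted = credence * like_better
    return weighted / (weighted + (1 - credence) * like_worse)


def run(rounds: int = 20, n_scientists: int = 10, heard=(0,), seed: int = 2):
    """Return the policy maker's final credence (without, with) a propagandist."""
    rng = random.Random(seed)
    without = with_propagandist = 0.5
    for _ in range(rounds):
        results = [
            sum(rng.random() < P_B for _ in range(TRIALS))
            for _ in range(n_scientists)
        ]
        # Results from the scientists the policy maker actually listens to.
        for index in heard:
            without = update(without, results[index], TRIALS)
            with_propagandist = update(with_propagandist, results[index], TRIALS)
        # The propagandist forwards only genuine results that make B look bad.
        for successes in results:
            if successes < TRIALS / 2:
                with_propagandist = update(with_propagandist, successes, TRIALS)
    return without, with_propagandist


honest, targeted = run()
print(f"credence in B without propagandist: {honest:.3f}, with: {targeted:.3f}")
```

Every forwarded result is real, yet each one can only push the belief in B downward, so the targeted policy maker never ends up more confident in B than the untargeted one.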

Both types of influence can make a big impact, say Weatherall and co—selective sharing turns out to be just as good as biased production. “We find that the presence of a single propagandist who communicates only actual findings of scientists can have a startling influence on the beliefs of policy makers,” they explain. “Under many scenarios we find that while the community of scientists converges on true beliefs about the world, the policy makers reach near certainty in the false belief.”

And that’s without any fraudulent or bad science, just cherry-picking the results. Indeed, the propagandists don’t even need to use their own in-house scientists to back specific ideas. When there is natural variation in the results of unbiased scientific experiments, the propagandists can have a significant influence by cherry-picking the ones that back their own agenda. And it can be done at very low risk because all the results they choose are “real” science.

That finding has important implications. It means that anybody who wants to manipulate public opinion and influence policy makers can achieve extraordinary success with relatively subtle tricks.

Indeed, it’s not just nefarious actors who can end up influencing policy makers in ways that do not match the scientific consensus. Weatherall and co point out that science journalists also cherry-pick results. Reporters are generally under pressure to find the most interesting or sexy or amusing stories, and this biases what policy makers see. Just how significant this effect is in the real world isn’t clear, however.

The team’s key finding will have profound consequences. “One might have expected that actually producing biased science would have a stronger influence on public opinion than merely sharing others’ results,” say Weatherall and co. “But there are strong senses in which the less invasive, more subtle strategy of selective sharing is more effective than biased production.”

The work has implications for the nature of science too. This kind of selective sharing is effective only because of the broad variation in results that emerge from certain kinds of experiment, particularly those that are small, low-powered studies.

This is a well-known problem, and the solution is clear: bigger, more highly powered studies. “Given some fixed financial resources, funding bodies should allocate those resources to a few very high-powered studies,” argue Weatherall and co, who go on to suggest that scientists should be given incentives for producing that kind of work. “For instance, scientists should be granted more credit for statistically stronger results—even in cases where they happen to be null.”

That would make it more difficult for propagandists to find spurious results they can use to distort views.
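A quick calculation illustrates why. Assuming, purely for illustration, that the better action succeeds 55 percent of the time, the chance that a single study makes it look worse than a coin flip can be computed exactly from the binomial distribution. The function name and the 0.55 figure are assumptions of this sketch.

```python
from math import comb


def prob_spurious(trials: int, success_rate: float = 0.55) -> float:
    """Probability that fewer than half of `trials` succeed."""
    failure_rate = 1 - success_rate
    return sum(
        comb(trials, k) * success_rate ** k * failure_rate ** (trials - k)
        for k in range(trials + 1)
        if 2 * k < trials
    )


BUDGET = 1000  # assumed fixed number of trials a funder can pay for
for trials in (10, 100, 1000):
    studies = BUDGET // trials
    expected = studies * prob_spurious(trials)
    print(f"{studies:4d} studies of {trials:4d} trials: "
          f"about {expected:.2f} expected misleading results")
```

Under these assumed numbers, about 26 percent of ten-trial studies are misleading, so a budget split into 100 small studies hands a propagandist a steady supply of genuine results to cherry-pick, while a single large study almost never does.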

But given how powerful selective sharing seems to be, the question now is, who is likely to make effective use of Weatherall and co’s conclusions first: propagandists, or scientists and policy makers?

Ref: arxiv.org/abs/1801.01239: How to Beat Science and Influence People: Policy Makers and Propaganda in Epistemic Networks
