Genetic Engineering Holds the Power to Save Humanity or Kill It

How likely is it that humanity will destroy itself? Various scientists have studied this probability, and the basic calculation is straightforward. The key parameters are the number of people capable of destroying the planet and the likelihood that they will do so.

This likelihood has been hard to measure, since it depends on the psychological stability of the commanders in charge—how likely are they to trigger a nightmare scenario?

The number of these individuals has been much easier to gauge. Throughout the second half of the 20th century and the early 21st century, it has been limited to the American and Soviet/Russian leaders in charge of massive nuclear arsenals.

So perhaps as few as two people have truly civilization-destroying power. That may not be entirely reassuring, given the nature of those individuals, but it is a bed of roses compared to the future, says John Sotos, who is affiliated with the Joint Forces Headquarters of the California National Guard.

Sotos says the calculus of civilization-ending technologies is about to change dramatically, and the consequences for humanity are devastating.

Back in the 20th century, people all over the world became aware of an existential threat to civilization. Indeed, this possibility became an important part of the political strategies of the world’s two superpowers, the U.S. and the Soviet Union.

The threat came from the technologies behind nuclear weapons, and the nightmare scenario was called mutually assured destruction. This involved both sides letting loose their nuclear arsenals in an attempt to destroy the other. The outcome of this process was intended to be so disastrous that neither side could benefit from triggering it and would therefore never start such a war.

Whether by luck or judgment, this strategy has worked—so far. But the U.S. and Russia maintain their planet-destroying capabilities, and the threat of all-out nuclear war still hangs over the planet.

A similar threat comes from climate change. And again the power to control or unleash it rests with the relatively small number of individuals who run the world’s major economies. Again, an important unknown is how likely they are to rein in the destructive power of greenhouse gas emissions. But the world looks to be moving toward a planet-saving strategy, although the efficacy of this approach is unknown.

Now a new technology is posing a global threat. This is the ability to engineer organisms that can kill large numbers of people—perhaps almost everyone—in a global pandemic. Until recently, the development of bioweapons has required the kind of large-scale investment that only nation states can bring to bear. That has allowed this work to be carefully monitored on an international scale. Consequently, the use of bioweapons has been largely controlled by international agreement.

But the ability to engineer lethal organisms is spreading. That’s because the same technology that allows researchers to design viruses and vaccines for specific genetic targets also allows them to design organisms that can spread and kill.

Sotos points to the Cancer Moonshot project, which aims to accelerate the use of immunotherapies to treat cancer. The goal is to test this technology on 20,000 cancer patients in various trials by 2020. As a result, large numbers of individuals in hospitals and research facilities all over the world will have access to a technology that has a frightening dark side.

To get a sense of the number of people involved, Sotos has searched the PubMed database of scientific papers for authors who have worked on “genetic techniques.” This search produced over 1.5 million unique names, of which 180,000 have authored more than five papers.

If only a fraction of these have, or will soon have, the capability to engineer organisms that could end civilization, that represents a very significant increase in the threat level.

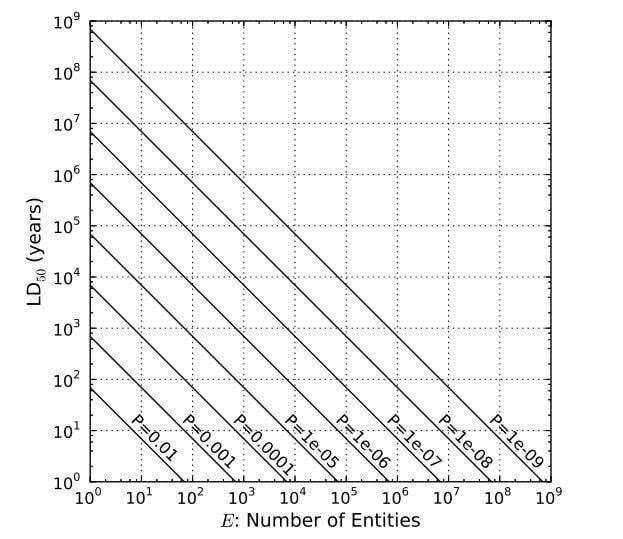

Given this number, how likely are they to release a civilization-ending biotechnology? Sotos thinks of it as the likelihood per year that a person with this destructive technology will use it. There is no way of knowing what this probability is for humanity, so Sotos simply puts a few probabilities into his model to see how this influences the likely lifetime of our civilization.

The results are sobering. If there is a one in 100 chance that somebody with the technology will release it, and there are a few hundred individuals like this, then our civilization is doomed on a timescale of 100 years or so. If there are 100,000 individuals with this technology, then the probability of them releasing it needs to be less than one in a billion (10^9) for our civilization to last 1,000 years.
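The arithmetic behind figures like these can be sketched in a few lines. What follows is a simplified illustration, not Sotos's actual model: it assumes every capable individual acts independently, with the same fixed probability of release per year, and the function names are made up for this example.

```python
import math


def survival_probability(num_people: float, annual_release_prob: float, years: float) -> float:
    """Chance that nobody releases the technology over the given span.

    Assumption (ours, for illustration): each person independently has the
    same probability of release in each year, so survival is
    (1 - p) ** (num_people * years).
    """
    # log1p keeps the result accurate when the probability is tiny.
    return math.exp(num_people * years * math.log1p(-annual_release_prob))


def median_lifetime(num_people: float, annual_release_prob: float) -> float:
    """Years until the survival probability falls to one half."""
    return math.log(0.5) / (num_people * math.log1p(-annual_release_prob))


# The article's second scenario: 100,000 capable individuals,
# each with a one-in-a-billion chance of release per year.
p_survive = survival_probability(100_000, 1e-9, 1_000)
print(f"Chance of lasting 1,000 years: {p_survive:.3f}")
print(f"Median lifetime: {median_lifetime(100_000, 1e-9):,.0f} years")

# Ten times the per-person probability makes survival much less likely.
print(f"At one in 10^8: {survival_probability(100_000, 1e-8, 1_000):.3f}")
```

Under these assumptions, the one-in-a-billion case gives roughly a 90 percent chance of lasting 1,000 years, while raising the per-person probability tenfold cuts that to about 37 percent. The point of the sketch is that the product of the number of people and the per-person probability is what sets the timescale.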

But people are not all equally likely to behave in this way. A frightening scenario is the case of a person for whom the probability of releasing this technology is certain when they get hold of it. If people like this exist, the end of civilization is a certainty.

Of course, Sotos’s model has some shortcomings. For example, it does not account for defensive strategies, such as the development of a treatment or cure. Humanity’s ability to detect and respond to global pandemics is in its infancy and certainly well behind our ability to create and release pandemics. Nevertheless, the chances of creating a timely treatment for billions of people seem remote.

Another thought-provoking point is the nature of future advances in personalized medicine. “An especially concerning scenario arises if, someday, hospitals employ people who routinely write patient-specific molecular-genetic programs and package them into replicating viruses that are therapeutically administered to patients, especially cancer patients,” says Sotos.

This technology allows these same people to create and release pandemics. And if this kind of health care spreads around the world, the number of people who have access to it will explode. “If the world attained the European Union’s per capita hospital density, this could mean 200,000 hospitals employing perhaps one million people who might genetically engineer viruses every workday,” says Sotos.

That’s a stark warning with broader implications. One longstanding puzzle is that the universe is filled with stars like our own, presumably with the potential to evolve intelligent life, and yet we can see no sign of these civilizations. “Where is everybody?” said Enrico Fermi, the physicist who first posed this paradox.

One line of thought is that some kind of filtering mechanism prevents civilizations from surviving indefinitely. During the Cold War, the obvious mechanism behind this “Great Filter” was nuclear war. Could humanity avoid blowing itself up?

Now the question has morphed into whether humanity can prevent a catastrophic release of a lethal pandemic. If the answer is no, then Sotos’s numbers neatly solve the Fermi Paradox. He says there are 10^24 stars and planets in the visible universe and yet only one civilization: our own.

If civilizations destroy themselves with biotechnology, Sotos’s numbers suggest that there is likely to be only one intelligent civilization today. “Most remarkably, the present model supplies the quantitative 24 orders-of-magnitude winnowing required of a Great Filter,” he says.

So what to do? Sotos has an answer, and his voice has some influence given that, in addition to his affiliation with the California National Guard, he is chief medical officer at Intel Health and Life Sciences. “I would advise advanced technical civilizations to optimize not on megascale computation nor engineering nor energetics, but on defense from individually possessable self-replicating existential threats, such as microbes or nanomachines,” he says.

You have been warned.

Ref: arxiv.org/abs/1709.01149: Biotechnology and the Lifetime of Technical Civilizations
