How PayPal Boosts Security with Artificial Intelligence

To PayPal, the transactions signal fraud: a U.S. user’s account is accessed in the U.K., China, and elsewhere around the world. But PayPal’s security system, thanks to a growing reliance on an artificial-intelligence technology known as deep learning, is now able to spot possible fraud without wrongly flagging legitimate activity. That’s because its algorithms mine data from the customer’s purchasing history, in addition to reviewing patterns of likely fraud stored in the company’s databases, and can tell whether, for example, the suspect transactions were the innocent actions of a globe-hopping pilot.
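As a rough illustration of the idea, and not PayPal’s actual method, the sketch below checks foreign account access against the countries in a customer’s past purchases. The function name, the threshold, and the three risk labels are all hypothetical, and a deep-learning system would learn such distinctions from data rather than follow a hand-written rule like this one.

```python
def foreign_access_risk(home_country, access_countries, past_purchase_countries,
                        frequent_traveler_threshold=5):
    """Rate a set of account accesses as "low", "medium", or "high" risk.

    Hypothetical rule of thumb: access from a country where the customer
    has purchased before is treated as familiar. The threshold is an
    illustrative assumption, not a figure from PayPal.
    """
    # Foreign countries that already appear in the customer's purchase history.
    familiar_abroad = set(past_purchase_countries) - {home_country}

    # Accesses from countries the customer has never purchased in.
    unfamiliar = [
        country for country in access_countries
        if country != home_country and country not in familiar_abroad
    ]

    if not unfamiliar:
        return "low"      # every access matches the customer's own history
    if len(familiar_abroad) >= frequent_traveler_threshold:
        return "medium"   # a new country, but the customer travels widely
    return "high"         # unfamiliar countries and little travel history


# A globe-hopping pilot with purchases in many countries.
pilot_history = ["US", "GB", "CN", "FR", "DE", "JP"]
print(foreign_access_risk("US", ["GB", "CN"], pilot_history))
```

For the pilot, access from the U.K. and China matches past purchases, so the sketch rates it low risk; the same access pattern on an account with only domestic purchases would be rated high.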

From a cybersecurity perspective, PayPal has a target on its back: it processed $235 billion in payments last year from four billion transactions by its more than 170 million customers. Fraud is always possible using consumer data stolen in breaches or through “phishing” e-mails that con users into entering their credentials. To keep ahead, PayPal relies on intensive, real-time analysis of transactions.

When a pattern is revealed—for example, if sudden strings of many small purchases at convenience stores turn out to be fraud—it’s turned into a “feature,” or a rule that can be applied in real time to stop purchases that fit this profile. “We now process thousands of ‘features’ in our system, compared to hundreds when the system was first put to use in 2013,” says Hui Wang, the company’s senior director of global risk sciences.
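The convenience-store example can be sketched as a single rule-style “feature.” This is a minimal illustration under stated assumptions: the `Transaction` fields, the dollar threshold, the one-hour window, and the purchase limit are all hypothetical and are not taken from PayPal’s system.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Transaction:
    account_id: str
    amount: float
    merchant_category: str
    timestamp: datetime


# Hypothetical thresholds, chosen only for illustration.
SMALL_AMOUNT = 20.00
WINDOW = timedelta(hours=1)
MAX_SMALL_PURCHASES = 5


def is_small_convenience_purchase(txn):
    """True for a low-value purchase at a convenience store."""
    return txn.merchant_category == "convenience_store" and txn.amount <= SMALL_AMOUNT


def small_purchase_burst(history, new_txn):
    """Return True if new_txn pushes the account past the allowed number
    of small convenience-store purchases within the time window."""
    if not is_small_convenience_purchase(new_txn):
        return False

    window_start = new_txn.timestamp - WINDOW
    recent = [
        t for t in history
        if t.account_id == new_txn.account_id
        and is_small_convenience_purchase(t)
        and window_start <= t.timestamp <= new_txn.timestamp
    ]
    # Count the new purchase together with the recent matching ones.
    return len(recent) + 1 > MAX_SMALL_PURCHASES


start = datetime(2016, 1, 25, 12, 0)
history = [
    Transaction("acct-1", 4.99, "convenience_store", start + timedelta(minutes=5 * i))
    for i in range(5)
]
new_txn = Transaction("acct-1", 3.50, "convenience_store", start + timedelta(minutes=30))
print(small_purchase_burst(history, new_txn))
```

A production system would evaluate thousands of such features together and within a strict time budget; this sketch shows only the shape of one of them.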

As a result, PayPal can now do things like tell the difference between friends buying concert tickets together and a thief making similar purchases with a list of stolen accounts. And it’s all done in-house to avoid even the tiny latency that would occur if the company relied on a cloud provider. “Thousands of ‘features’ searching through 16 years of users’ history all needs to be done in less than a second,” Wang says.

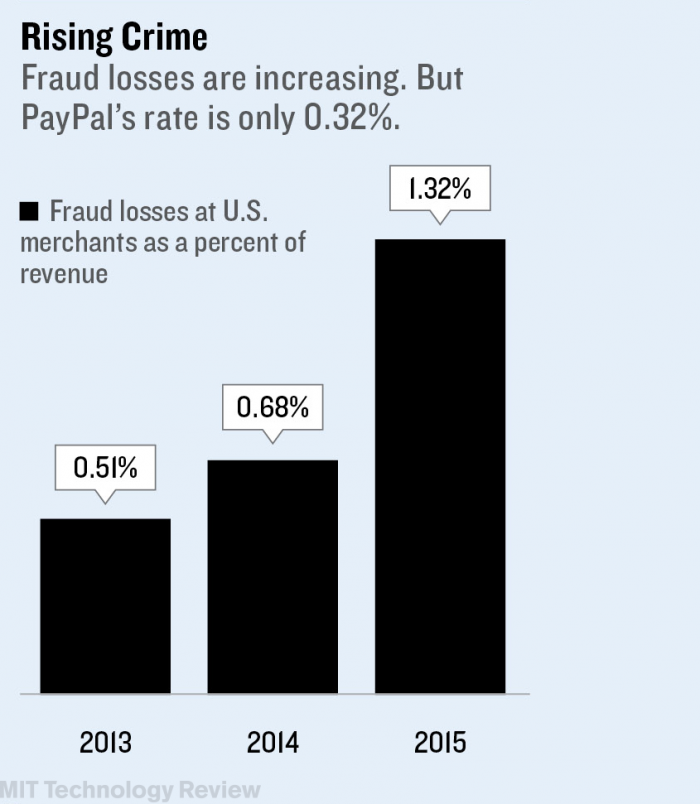

Deep learning and other artificial-intelligence approaches are quickly becoming the only way to keep up with threats, she adds. They’ve worked to help keep PayPal’s fraud rate remarkably low, at 0.32 percent of revenue—a figure far better than the 1.32 percent average that merchants see, according to a study by LexisNexis. The most recent Federal Reserve Payments Study found that $6.1 billion in fraudulent purchases were made in 2012, and the problem appears to be getting worse.

PayPal isn’t the only company using deep learning to improve cybersecurity. The Israeli startup Deep Instinct has applied the technique to spotting malware, claiming that this works 20 percent better than traditional approaches. And Ashar Aziz, vice chairman and founder of the security firm FireEye, said that his company has been using deep learning for everything from detecting network intrusions to rooting out phishing attacks.

Companies can further improve cybersecurity if they share data repositories on cyberattacks and fraud, says Aziz. “If you continue to get more data—and more power to process it—then you can get even better,” he says.
