Data-Toting Cops

Mornings at 7:00, Wade Brabble has decisions to make. So in the last year, he has come to rely upon a computer-generated forecast of where crime will happen on his day shift as a police lieutenant in Fort Lauderdale, Florida. Depending on the report, which comes out of a system built in a year-old partnership with IBM, he’ll move his 15 patrol officers around, telling some to focus on hot spots while assigning routine calls to everyone else. “I base a lot of it on numbers,” he says.

Twenty years after the New York Police Department pioneered the idea with a program called CompStat, computerized crime analysis is moving to a new level. Back then, the innovation was a map tracking past crimes, which higher-ups used to hold district commanders accountable. Now the push is for widespread adoption of analytics that predict crime in close to real time, identifying target areas to within 250,000 square feet (the area of a square 500 feet on a side). Bigger data sets, commercially available analytics and forecasting software, and faster computers are driving the improvement, say the Rand Corporation’s John Hollywood and Walt Perry, authors of a 2013 report on the trend.

Critics like the Electronic Frontier Foundation, however, fear that such projects will promote racial profiling, and skeptics like Maria Haberfeld, a professor of criminal justice at John Jay College, think they are as likely to move crime a few blocks away as they are to prevent it.

Some big departments, like the Los Angeles Police Department, simply base predictions on data about past crime locations and time and type of crime, says UCLA anthropologist Jeff Brantingham, who is also cofounder of PredPol, the company that helped design the LAPD’s software. At the other extreme is Chicago, which has gone as far as using data to predict whether specific potential criminals may be involved in violence. Fort Lauderdale takes a middle path: it uses crime history but factors in details such as events that are expected to draw crowds, and even the likely impact of weather.

The analytics aren’t good enough to say a specific store will be hit on Tuesday, but they can predict a 70 percent chance of burglaries in one area, or a 40 percent chance of muggings somewhere else.

The approach seems to work—but as with any experiment in a living city, it’s hard to be certain why crime is down. In Fort Lauderdale, crimes like murder, robbery, larceny, and sexual assault fell 6 percent in the first eight months of 2014. Assistant police chief Michael Gregory says that in addition to the computer analytics, the department has implemented tactics such as distributing anti-theft kits in a burglary-prone neighborhood.

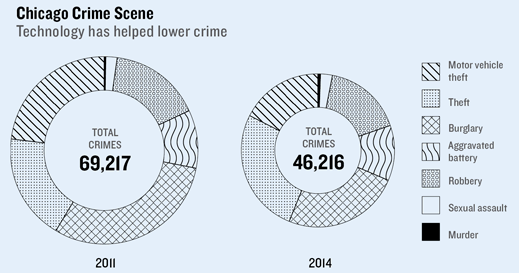

In Chicago, violent crime was down 13 percent year over year as of October, and the number of murders could be the lowest since 1965. Chicago’s “hot people” strategy was based on a list of the 400 Chicagoans, all with arrest records and connections to known criminals, that a computer model identified as most at risk of becoming either a perpetrator or a victim of violence, though it can’t predict which.

Since 2013, people on the list have been getting personal visits from local cops—usually the head of their precinct, according to Commander Jonathan Lewin, head of the department’s public-safety information technology unit. They’re handed a letter that explains the consequences of breaking the law and offers social services. The hot 400 are as much as 500 times more likely than average to be involved in a crime, Lewin says, and most of the data used to build the list has to do with the level of connectedness to criminals: “It does not—repeat, not—include gender or race.” There have been some problems, including reports that minor offenders were listed. Soon the list will be weighted by probation history, outstanding warrants, and record of narcotics and weapon possession.

Los Angeles eschews modeling aimed at identifying specific criminals, and Brantingham warns that nothing in predictive policing generates enough probable cause for a search warrant or justifies a stop-and-frisk. In the end, even the best systems can’t entirely replace human judgment. “It takes a little time for people to get out of the mind-set that it’s a cure-all,” Brabble says.

Video and social networks like Twitter are increasingly sources of data for analysis, and in time, systems with more decision support built in may be deployed as well, putting more data into the hands of officers using mobile devices and in-car computers in the field. One thing that won’t change: controversy over what kinds of data are relevant, and politically acceptable, to include in crime forecasting.
