The World’s Most Powerful 3-D Laser Imager

Airborne laser scanning has produced stunning maps and insights in the last few years. Among other things, it revealed the faint outlines of a vanished medieval city street grid obscured by the jungle surrounding Cambodia’s Angkor Wat (see “Laser Scanning Reveals New Parts of an Ancient Cambodian City”), a feat that required 20 hours of helicopter flight time to map 370 square kilometers to a resolution of one meter.

But in a secure hangar at Hanscom Air Force Base in Bedford, Massachusetts, the belly of a Bombardier turboprop has been outfitted with technology that could pull off the Cambodian job in about half an hour. The fuselage holds a new LIDAR (light detection and ranging) 3-D imaging system that works with unprecedented speed and high resolution, says Dale Fried, principal developer of the system at Lincoln Laboratory, a federally funded R&D center run by MIT.

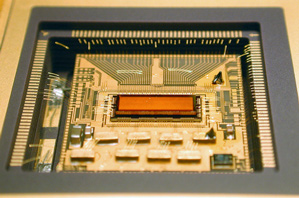

LIDAR systems fire lasers and detect returning photons, using the timing of those return trips to measure distance and thus make 3-D images. At the heart of the new imaging system is a microchip bearing the largest-ever array of pixels that detect just one photon apiece—more than 16,384 pixels in all. The array of pixels, when paired with optical lenses, allows imaging of wider areas. “Arrays of these single-photon detectors are able to map wide areas very quickly,” Fried says.
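As a rough illustration of the timing principle described above, the sketch below converts a photon's round-trip time into a distance. It is a minimal sketch, not the Lincoln Laboratory system's processing: the function names are hypothetical, and it assumes light travels at its vacuum speed, ignoring the slight slowing in air.

```python
# Minimal time-of-flight sketch. Function names are illustrative assumptions,
# not taken from any real LIDAR software.

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact SI value, in vacuum


def range_from_round_trip(round_trip_s: float) -> float:
    """Return the one-way distance in meters for a given round-trip time.

    The pulse travels to the target and back, so the path is halved.
    """
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def round_trip_for_range(range_m: float) -> float:
    """Return the round-trip time in seconds for a target range_m meters away."""
    return 2.0 * range_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    # Hypothetical example: a target 3,000 meters from the sensor.
    t = round_trip_for_range(3000.0)
    print(f"round trip: {t * 1e6:.1f} microseconds")
    print(f"recovered range: {range_from_round_trip(t):.1f} meters")
```

Under these assumptions, a target 3,000 meters away returns light after about 20 microseconds, and a 30-centimeter difference in distance shifts the return by only about 2 nanoseconds, which is why precise photon timing matters.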

In today’s airborne LIDAR systems, individual detectors are much less sensitive, and they are mechanically moved along with the laser that emits the light to capture a wider field of view.

While no images from the new system taking shape in Hanscom are publicly available, an earlier generation of the technology—built four years ago with only one-quarter as many pixels—has been tested. The system was dispatched by the U.S. military on a humanitarian mission after the January 2010 Haiti earthquake; a single pass by a business jet at 10,000 feet over Port-au-Prince was able to capture instantaneous snapshots of 600-meter squares of the city at a resolution of 30 centimeters, displaying the precise height of rubble strewn in city streets.

This system was already roughly four times faster and more detailed than the Angkor Wat system. But the detector array now in the Hanscom hangar is another 10 times better and could produce much larger maps more quickly, Fried says.
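The figures quoted in this article can be checked with some back-of-the-envelope arithmetic. The sketch below is only that: it assumes, for illustration, that the "four times" and "10 times" improvements both apply to the area covered per hour, which the article does not state precisely, and the variable names are hypothetical.

```python
# Back-of-the-envelope check using figures quoted in the article.
# Assumption: both improvement factors multiply the area coverage rate.

ANGKOR_AREA_KM2 = 370.0      # area mapped around Angkor Wat
ANGKOR_FLIGHT_HOURS = 20.0   # helicopter flight time for that survey
HAITI_SYSTEM_FACTOR = 4.0    # earlier system vs. the Angkor Wat system
NEW_ARRAY_FACTOR = 10.0      # new detector array vs. the earlier system

# Coverage rate of the Angkor Wat survey, in square kilometers per hour.
angkor_rate_km2_per_h = ANGKOR_AREA_KM2 / ANGKOR_FLIGHT_HOURS

# Coverage rate of the new system if the two factors simply multiply.
new_rate_km2_per_h = angkor_rate_km2_per_h * HAITI_SYSTEM_FACTOR * NEW_ARRAY_FACTOR

# Time the new system would need for the same 370 square kilometers.
new_system_hours = ANGKOR_AREA_KM2 / new_rate_km2_per_h

if __name__ == "__main__":
    print(f"Angkor Wat survey rate: {angkor_rate_km2_per_h} km^2/h")
    print(f"Estimated new-system rate: {new_rate_km2_per_h} km^2/h")
    print(f"Estimated time for the same area: {new_system_hours} h")
```

Under that assumption the combined speedup is 40-fold and the estimate comes to 0.5 hours, consistent with the "about half an hour" figure given for the Cambodian job.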

The technology uses a semiconductor called indium gallium arsenide, which operates in the infrared spectrum at a relatively long wavelength that allows for higher power and thus longer ranges for airborne laser scanning.

Only in the past decade or so have arrays of pixels that can detect single photons been built. For the most part, the resulting imaging systems have been confined to government and military work, such as rapidly mapping much of Afghanistan’s craggy terrain to the one-meter resolution needed for helicopter crews to find landing zones.

The technology has been licensed to two companies, Princeton Lightwave of Cranbury, New Jersey, and Spectrolab, a unit of Boeing, in Sylmar, California, which are engineering it into products. Last year, Princeton Lightwave became the first to commercialize the technology that imaged Haiti. The resulting package was the size of a shoebox but carried a hefty initial price of $150,000 and was aimed at the defense contractor market.

But as the chip-making process improves and prices come down, the technology could find far more applications in fields including glaciology, agriculture, and archaeology—and perhaps even find its way into autonomous mass-market cars, says Mark Itzler, CEO of Princeton Lightwave. Right now, commercial versions of automotive LIDAR that can see farther than a few meters cost upwards of $30,000, and usually require a bulky mechanical apparatus.

While a number of different kinds of LIDAR are being considered for cars, the indium gallium arsenide approach has a long-term advantage: it can safely be ramped up to extremely high power levels. Current systems use silicon, which operates within visible light frequencies. As a result, powering them up to levels high enough to tackle important jobs, like detecting an animal 200 meters ahead even on a foggy highway, raises the risk of eye injury. “Enough of the [auto suppliers] see eye safety as a long-term issue that they are very interested in single-photon sensitivity at wavelengths where you have this enormously eye-safer operation,” Itzler says.

Princeton Lightwave is in discussion with primary suppliers to the auto industry to build an early prototype. Itzler says costs will have to come down precipitously, but there’s precedent for that: “The first optical receiver [using indium gallium arsenide] for high-speed telecom networks was $5,000—and today it costs $10,” he notes.

Whether or not the technology makes it into cars, Fried says, eliminating the need for some moving parts and developing larger arrays of single-photon detectors will ultimately “revolutionize the price point for 3-D imagery, by a factor of 10 or more, thereby opening up new applications.”

Meanwhile, new advances in chip-based arrays of emitters could make it easier to send out light without spinning parts. In a paper in Nature last year, other MIT researchers showed how a 64-by-64 array of silicon antennas could take a single laser beam and send it wherever the user wanted by tweaking voltages on the chip.
