First Quantum-Enhanced Images of a Living Cell

Many of the most important but least understood processes of life occur inside cells at the nanometre scale, beyond the realm of ordinary optical imaging. That makes it hard for biologists to see these processes at work or to understand the behaviour of the molecular machinery behind them.

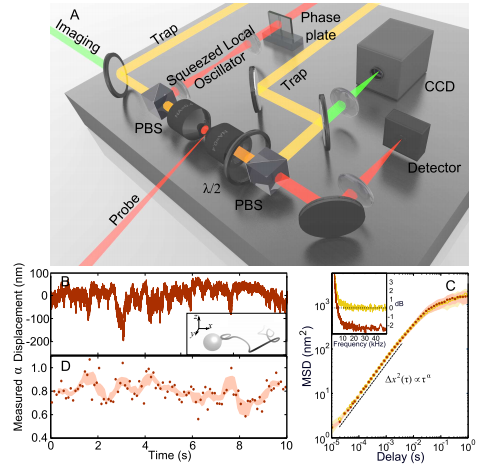

Today, Michael Taylor at the University of Queensland in Australia and a few pals reveal a new way to create optical images of cells that pushes their resolution beyond the conventional diffraction limit. Their trick relies on a peculiar quantum phenomenon called “squeezed light”, which has allowed them to resolve spatial structures inside a living cell at a resolution of 10 nanometres; that’s 14 per cent finer than is possible with ordinary light.

Conventional optical imaging is limited by the process of diffraction, the way light spreads out when it passes an object. The amount of diffraction depends, in part, on natural uncertainties in the position of the photons. Physicists think of this uncertainty as quantum noise.

In recent years, however, they’ve worked out how to reduce this quantum noise by carefully manipulating the way photons are created. They call the result “squeezed light”, and there has been considerable excitement over its potential to beat the conventional diffraction limit in all kinds of applications.
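In the standard textbook picture (the notation below is a common quantum-optics convention assumed here, not taken from Taylor and co’s paper), the noise of a light beam is shared between two complementary properties, its quadratures X1 and X2, whose uncertainties are tied together by a Heisenberg-type relation. Squeezing does not abolish quantum noise; it pushes the noise below the ordinary-light level in one quadrature at the cost of extra noise in the other:

```latex
\hat X_1 = \tfrac{1}{2}\left(\hat a + \hat a^\dagger\right), \qquad
\hat X_2 = \tfrac{1}{2i}\left(\hat a - \hat a^\dagger\right), \qquad
\Delta X_1 \, \Delta X_2 \ge \tfrac{1}{4}

\text{ordinary (coherent) light: } \Delta X_1 = \Delta X_2 = \tfrac{1}{2}

\text{squeezed light, squeeze parameter } r \ge 0: \quad
\Delta X_1 = \tfrac{1}{2} e^{-r}, \qquad \Delta X_2 = \tfrac{1}{2} e^{r}
```

The noise variance in X1 therefore drops by a factor of e^{-2r}, which experimentalists usually quote in decibels as 10 log10(e^{2r}), roughly 8.7r dB below the ordinary-light (shot-noise) level, while the product of the two uncertainties stays at its minimum.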

One obvious use is in cellular imaging, where squeezed light offers biologists a clear advantage for exploring cellular processes. Various groups have used squeezed light to make pioneering measurements inside cells. But using it to image a cell, revealing spatial variations in its structure, has so far eluded them.

That all changes with the work of Taylor and his buddies. These guys have used squeezed light to monitor the movement of naturally occurring nanoparticles inside a cell as they are buffeted by the molecular machines that surround them. They say this is the first time that anybody has created quantum-enhanced images of a living cell. “We report the first demonstration of sub-diffraction limited quantum imaging in biology,” they say.

This kind of imaging should lead to important new insights. The movement of these nanoparticles is a kind of Brownian motion, but biologists have known for some time that the nanoparticles don’t diffuse in a standard way. Instead, their diffusion is limited by the various molecular processes around them.

So mapping how this diffusion differs from one part of the cell to another could reveal what’s going on in each region. This kind of map is exactly what Taylor and co have produced.
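The article doesn’t say how Taylor and co quantified the unusual diffusion, so what follows is only a generic sketch of one common approach, not the authors’ analysis. It computes a particle’s mean squared displacement (MSD) at increasing time lags and fits MSD ≈ K·t^α on log-log axes: α = 1 for ordinary Brownian motion, α < 1 for hindered (subdiffusive) motion. The function names and the per-region helper are hypothetical, and each track is assumed to be a list of positions sampled at a fixed interval dt.

```python
import numpy as np


def mean_squared_displacement(track, max_lag):
    """Hypothetical helper: MSD of one trajectory for lags 1..max_lag.

    `track` holds positions sampled at equal time steps, shape (N,) for 1D
    or (N, d) for d dimensions. Overlapping windows are averaged at each lag.
    """
    track = np.asarray(track, dtype=float)
    if track.ndim == 1:
        track = track[:, None]  # treat 1D data as a single coordinate
    if not 1 <= max_lag < len(track):
        raise ValueError("max_lag must be between 1 and len(track) - 1")
    msd = np.empty(max_lag)
    for lag in range(1, max_lag + 1):
        disp = track[lag:] - track[:-lag]  # displacement over `lag` steps
        msd[lag - 1] = np.mean(np.sum(disp**2, axis=1))  # mean squared distance
    return msd


def anomalous_exponent(msd, dt):
    """Fit MSD(t) = K * t**alpha by least squares on log-log axes.

    alpha == 1: ordinary Brownian motion; alpha < 1: subdiffusion.
    Returns (alpha, K).
    """
    lags = dt * np.arange(1, len(msd) + 1)
    alpha, log_k = np.polyfit(np.log(lags), np.log(msd), 1)
    return alpha, np.exp(log_k)


def alpha_by_region(tracks_by_region, dt, max_lag):
    """Hypothetical helper: anomalous exponent for each labelled region's track."""
    return {
        region: anomalous_exponent(mean_squared_displacement(track, max_lag), dt)[0]
        for region, track in tracks_by_region.items()
    }


if __name__ == "__main__":
    # Simulated free Brownian motion in 2D: MSD = 4*D*t, so alpha should be ~1.
    rng = np.random.default_rng(0)
    dt, D = 1e-3, 0.5
    steps = rng.normal(0.0, np.sqrt(2 * D * dt), size=(20000, 2))
    track = np.cumsum(steps, axis=0)
    alpha, K = anomalous_exponent(mean_squared_displacement(track, 20), dt)
    print(f"alpha = {alpha:.2f}, K = {K:.2f} (expected about 1 and {4 * D})")
```

Comparing α (or K) between trajectories recorded in different parts of a cell gives a crude version of the kind of diffusion map described above; a real analysis would also have to deal with measurement noise and calibration, which this sketch ignores.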

(One criticism of this work is whether it can be truly described as “imaging”. An analogy would be spending a few minutes in a darkened room, wandering around with your arms outstretched and then saying you’ve imaged it. Mapping or sampling might be better terms.)

Squeezed light offers another benefit. Instead of increasing the resolution of images, physicists can use it to achieve the same resolution as ordinary light but at much lower intensity. That’s important because light itself can damage the molecular machinery inside cells or change the way it works. Taylor and co say they can match conventional image resolution but with a 42 per cent reduction in light intensity.

Whichever benefit biologists choose to exploit, squeezed light imaging is set to make a significant impact on the way they view and understand living cells at work. Expect to see a lot more about it.

Ref: arxiv.org/abs/1305.1353: Sub-diffraction Limited Quantum Imaging of a Living Cell
