Memory Implants

Theodore Berger, a biomedical engineer and neuroscientist at the University of Southern California in Los Angeles, envisions a day in the not too distant future when a patient with severe memory loss can get help from an electronic implant. In people whose brains have suffered damage from Alzheimer’s, stroke, or injury, disrupted neuronal networks often prevent long-term memories from forming. For more than two decades, Berger has designed silicon chips to mimic the signal processing that those neurons do when they’re functioning properly—the work that allows us to recall experiences and knowledge for more than a minute. Ultimately, Berger wants to restore the ability to create long-term memories by implanting chips like these in the brain.

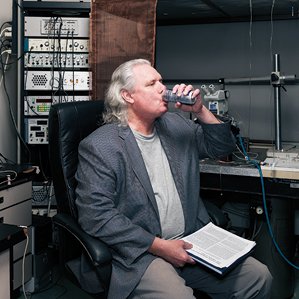

The idea is so audacious and so far outside the mainstream of neuroscience that many of his colleagues, says Berger, think of him as being just this side of crazy. “They told me I was nuts a long time ago,” he says with a laugh, sitting in a conference room that abuts one of his labs. But given the success of recent experiments carried out by his group and several close collaborators, Berger is shedding the loony label and increasingly taking on the role of a visionary pioneer.

Berger and his research partners have yet to conduct human tests of their neural prostheses, but their experiments show how a silicon chip externally connected to rat and monkey brains by electrodes can process information just like actual neurons. “We’re not putting individual memories back into the brain,” he says. “We’re putting in the capacity to generate memories.” In an impressive experiment published last fall, Berger and his coworkers demonstrated that they could also help monkeys retrieve long-term memories from a part of the brain that stores them.

If a memory implant sounds farfetched, Berger points to other recent successes in neuroprosthetics. Cochlear implants now help more than 200,000 deaf people hear by converting sound into electrical signals and sending them to the auditory nerve. Meanwhile, early experiments have shown that implanted electrodes can allow paralyzed people to move robotic arms with their thoughts. Other researchers have had preliminary success with artificial retinas in blind people.

Still, restoring a form of cognition in the brain is far more difficult than any of those achievements. Berger has spent much of the past 35 years trying to understand fundamental questions about the behavior of neurons in the hippocampus, a part of the brain known to be involved in forming memory. “It’s very clear,” he says. “The hippocampus makes short-term memories into long-term memories.”

What has been anything but clear is how the hippocampus accomplishes this complicated feat. Berger has developed mathematical theorems that describe how electrical signals move through the neurons of the hippocampus to form a long-term memory, and he has proved that his equations match reality. “You don’t have to do everything the brain does, but can you mimic at least some of the things the real brain does?” he asks. “Can you model it and put it into a device? Can you get that device to work in any brain? It’s those three things that lead people to think I’m crazy. They just think it’s too hard.”

Cracking the Code

Berger often speaks in sentences that stretch to paragraph length and have many asides, footnotes, and complete diversions from the point. I ask him to define memory. “It’s a series of electrical pulses over time that are generated by a given number of neurons,” he says. “That’s important because you can reduce it to this and put it back into a framework. Not only can you understand it in terms of the biological events that happened; that means that you can poke it, you can deal with it, you can put an electrode in there, and you can record something that matches your definition of a memory. You can find the 2,147 neurons that are part of this memory. And what do they generate? They generate this series of pulses. It’s not bizarre. It’s something you can handle. It’s useful. It’s what happens.”

This is the conventional view of memory, but it only scratches the surface. And to Berger’s perpetual frustration, many colleagues who probe this mysterious realm of the brain haven’t attempted to go much deeper. Neuroscientists track electrical signals in the brain by monitoring action potentials, microvolt changes on the surfaces of neurons. But all too often, says Berger, their reports oversimplify what’s actually taking place. “They find an important event in the environment and count action potentials,” he says. “They say, ‘It went up from 1 to 200 after I did something. I’m finding something interesting.’ What are you finding? ‘Activity went up.’ But what are you finding? ‘Activity went up.’ So what? Is it coding something? Is it representing something that the next neuron cares about? Does it make the next neuron do something different? That’s what we’re supposed to be doing: explaining things, not just describing things.”

Berger takes a marker and fills a whiteboard from top to bottom with a line of circles that represent neurons. Next to each one, he draws a horizontal line that has a different pattern of blips on it. “This is you in my brain,” he says. “My hippocampus has already formed a long-term memory of you. I’ll remember you into next week. But how can I distinguish you from the next person? Let’s say there are 500,000 cells in the hippocampus that represent you, and there are all sorts of things that each cell is coding—like how your nose is relative to your eyebrow—and they code that with different patterns. So the reality of the nervous system is really complicated, which is why we’re still asking such basic, limited questions about it.”

In graduate school at Harvard, Berger’s mentor was Richard Thompson, who studied localized, learning-induced changes in the brain. Thompson used a tone and a puff of air to condition rabbits to blink their eyes, aiming to determine where the memory he induced was stored. The idea was to find a specific place in the brain where the learning was localized, says Berger: “If the animal did learn and you removed it, the animal couldn’t remember.”

Thompson, with Berger’s help, managed to do just that; they published the results in 1976. To find the site in the rabbits, they equipped the animals’ brains with electrodes that could monitor the activity of a neuron. Neurons have gates on their membranes, which let electrically charged particles like sodium and potassium in and out. Thompson and Berger documented the electrical spikes seen in the hippocampus as rabbits developed a memory. Both the spikes’ amplitude (representing the action potential) and their spacing formed patterns. It can’t be an accident, Berger thought, that cells fire in a way that forms patterns with respect to time.

This led him to a central question that underlies his current work: as cells receive and send electrical signals, what pattern describes the quantitative relationship between the input and the output? That is, if one neuron fires at a specific time and place, what exactly do the neighboring neurons do in response? The answer could reveal the code that neurons use to form a long-term memory.

But it soon became clear that the answer was extremely complex. In the late 1980s, Berger, working at the University of Pittsburgh with Robert Sclabassi, became fascinated by a property of the neuronal network in the hippocampus. When they stimulated the hippocampus of a rabbit with electrical pulses (the input) and charted how signals moved through different populations of neurons (the output), the relationship they observed between the two wasn’t linear. “Let’s say you put in 1 and get 2,” says Berger. “That’s pretty easy. It’s a linear relation.” It turns out, however, that there’s “essentially no condition in the brain where you get linear activity, a linear summation,” he says. “It’s always nonlinear.” Signals overlap, with some suppressing an incoming pulse and some accentuating it.
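To make the idea of nonlinear summation concrete, here is a minimal toy sketch. It is not Berger's model; the function name `response` and the weights `k1` and `k2` are assumptions chosen purely for illustration. Each output value is a weighted sum of recent pulses plus an interaction term that lets one pulse suppress (or accentuate) the next.

```python
def response(pulses, k1=(1.0, 0.5), k2=-0.4):
    """Toy second-order response to a binary pulse train (illustrative only).

    pulses: list of 0/1 values, one per time step.
    k1: weights for a pulse at lag 0, lag 1, ... (the linear part).
    k2: interaction weight between the current pulse and the previous one
        (negative suppresses, positive accentuates).
    """
    out = []
    for t in range(len(pulses)):
        # Linear part: weighted sum of the most recent pulses.
        linear = sum(k1[lag] * pulses[t - lag]
                     for lag in range(len(k1)) if t - lag >= 0)
        # Nonlinear part: two adjacent pulses interact with each other.
        pair = k2 * pulses[t] * pulses[t - 1] if t >= 1 else 0.0
        out.append(linear + pair)
    return out


a = [1, 0, 0]      # a single pulse at step 0
b = [0, 1, 0]      # a single pulse at step 1
both = [1, 1, 0]   # the two pulses delivered together

# If the system were linear, these two results would be identical.
sum_of_parts = [x + y for x, y in zip(response(a), response(b))]
together = response(both)
```

With these assumed weights, the response to both pulses together differs from the sum of the separate responses at step 1, because the second pulse arrives while the first is still having an effect. Setting `k2=0.0` removes the interaction, and the two results then match.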

By the early 1990s, his understanding—and computing hardware—had advanced to the point that he could work with his colleagues at the University of Southern California’s department of engineering to make computer chips that mimic the signal processing done in parts of the hippocampus. “It became obvious that if I could get this stuff to work in large numbers in hardware, you’ve got part of the brain,” he says. “Why not hook up to what’s existing in the brain? So I started thinking seriously about prosthetics long before anybody even considered it.”

A Brain Implant

Berger teamed up with Vasilis Marmarelis, a biomedical engineer at USC, to start building a brain prosthesis (see “Regaining Lost Brain Function”). They first worked with hippocampal slices from rats. Knowing that neuronal signals move from one end of the hippocampus to the other, the researchers sent random pulses into the hippocampus, recorded the signals at various locales to see how they were transformed, and then derived mathematical equations describing the transformations. They implemented those equations in computer chips.
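The general recipe described here (stimulate with random pulses, record the output, fit equations to the pair) can be sketched in a few lines. The article does not say which estimation technique the researchers used, so the example below swaps in ordinary least squares on a made-up system. `hidden_system`, `TRUE_K1`, and `TRUE_K2` are hypothetical stand-ins for the tissue being measured, not anything taken from the experiments.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical "true" system standing in for the tissue under study.
TRUE_K1 = np.array([1.0, 0.5])   # weights for a pulse at lag 0 and lag 1
TRUE_K2 = -0.4                   # interaction between adjacent pulses


def hidden_system(x):
    """Return the output of the assumed system for a binary pulse train x."""
    prev = np.concatenate(([0], x[:-1]))   # the input delayed by one step
    return TRUE_K1[0] * x + TRUE_K1[1] * prev + TRUE_K2 * x * prev


# 1. Stimulate with a random pulse train and record a slightly noisy output.
x = rng.integers(0, 2, size=500)
y = hidden_system(x) + rng.normal(0.0, 0.01, size=x.size)

# 2. Build one regressor column per term of the assumed model.
prev = np.concatenate(([0], x[:-1]))
design = np.column_stack([x, prev, x * prev]).astype(float)

# 3. A least-squares fit estimates the weights from input/output data alone.
estimate, *_ = np.linalg.lstsq(design, y, rcond=None)
print(estimate)  # estimates of the lag-0, lag-1, and interaction weights
```

Random input matters in this sketch because it exercises every combination of pulse and no-pulse, which is what lets the fit separate the linear weights from the interaction term.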

Next, to assess whether such a chip could serve as a prosthesis for a damaged hippocampal region, the researchers investigated whether they could bypass a central component of the pathway in the brain slices. Electrodes placed in the region carried electrical pulses to an external chip, which performed the transformations normally done in the hippocampus. Other electrodes delivered the signals back to the slice of brain.

Then the researchers took a leap forward by trying this in live rats, showing that a computer could in fact serve as an artificial component of the hippocampus. They began by training the animals to push one of two levers to receive a treat, recording the series of pulses in the hippocampus as they chose the correct one. Using those data, Berger and his team modeled the way the signals were transformed as the lesson was converted into a long-term memory, and they captured the code believed to represent the memory itself. They proved that their device could generate this long-term memory code from input signals recorded in rats’ brains while they learned the task. Then they gave the rats a drug that interfered with their ability to form long-term memories, causing them to forget which lever produced the treat. When the researchers pulsed the drugged rats’ brains with the code, the animals were again able to choose the right lever.

Last year, the scientists published primate experiments involving the prefrontal cortex, a part of the brain that retrieves the long-term memories created by the hippocampus. They placed electrodes in the monkeys’ brains to capture the code formed in the prefrontal cortex that they believed allowed the animals to remember an image they had been shown earlier. Then they drugged the monkeys with cocaine, which impairs that part of the brain. Using the implanted electrodes to send the correct code to the monkeys’ prefrontal cortex, the researchers significantly improved the animals’ performance on the image-identification task.

Within the next two years, Berger and his colleagues hope to implant an actual memory prosthesis in animals. They also want to show that their hippocampal chips can form long-term memories in many different behavioral situations. These chips, after all, rely on mathematical equations derived from the researchers’ own experiments. It could be that the researchers were simply figuring out the codes associated with those specific tasks. What if these codes are not generalizable, and different inputs are processed in various ways? In other words, it is possible that they haven’t cracked the code but have merely deciphered a few simple messages.

Berger allows that this may well be the case, and his chips may form long-term memories in only a limited number of situations. But he notes that the morphology and biophysics of the brain constrain what it can do: in practice, there are only so many ways that electrical signals in the hippocampus can be transformed. “I do think we’re going to find a model that’s pretty good for a lot of conditions and maybe most conditions,” he says. “The goal is to improve the quality of life for somebody who has a severe memory deficit. If I can give them the ability to form new long-term memories for half the conditions that most people live in, I’ll be happy as hell, and so will be most patients.”

Despite the uncertainties, Berger and his colleagues are planning human studies. He is collaborating with clinicians at his university who are testing the use of electrodes implanted on each side of the hippocampus to detect and prevent seizures in patients with severe epilepsy. If the project moves forward as envisioned, Berger’s group will piggyback on the trial to look for memory codes in those patients’ brains.

“I never thought I’d see this go into humans, and now our discussions are about when and how,” he says. “I never thought I’d live to see the day, but now I think I will.”
