Conversational Interfaces

Breakthrough

Combining voice recognition and natural language understanding to create effective speech interfaces for the world’s largest Internet market.

Why it matters

It can be time-consuming and frustrating to interact with computers by typing.

Key players

Baidu; Google; Apple; Nuance; Facebook

Stroll through Sanlitun, a bustling neighborhood in Beijing filled with tourists, karaoke bars, and luxury shops, and you’ll see plenty of people using the latest smartphones from Apple, Samsung, or Xiaomi. Look closely, however, and you might notice some of them ignoring the touch screens on these devices in favor of something much more efficient and intuitive: their voice.

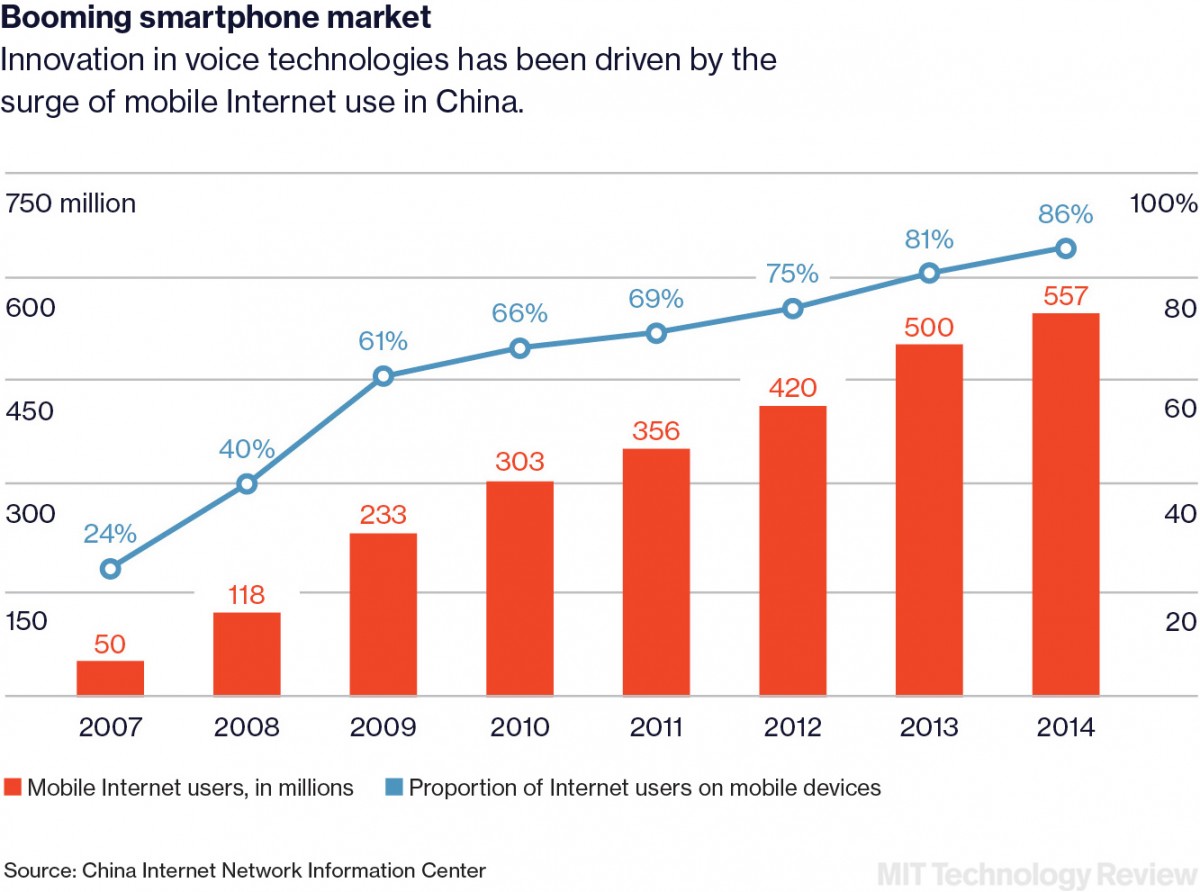

A growing number of China’s 691 million smartphone users now regularly dispense with swipes, taps, and tiny keyboards when looking things up on the country’s most popular search engine, Baidu. China is an ideal place for voice interfaces to take off, because Chinese characters were hardly designed with tiny touch screens in mind. But people everywhere should benefit as Baidu advances speech technology and makes voice interfaces more practical and useful. That could make it easier for anyone to communicate with the machines around us.

“I see speech approaching a point where it could become so reliable that you can just use it and not even think about it,” says Andrew Ng, Baidu’s chief scientist and an associate professor at Stanford University. “The best technology is often invisible, and as speech recognition becomes more reliable, I hope it will disappear into the background.”

Voice interfaces have been a dream of technologists (not to mention science fiction writers) for many decades. But in recent years, thanks to some impressive advances in machine learning, voice control has become a lot more practical.

No longer limited to just a small set of predetermined commands, voice control now works even in a noisy environment like the streets of Beijing or when you’re speaking across a room. Voice-operated virtual assistants such as Apple’s Siri, Microsoft’s Cortana, and Google Now come bundled with most smartphones, and newer devices, like Amazon’s Alexa-powered Echo, offer a simple way to look up information, cue up songs, and build shopping lists with your voice. These systems are hardly perfect, sometimes mishearing and misinterpreting commands in comedic fashion, but they are improving steadily, and they offer a glimpse of a graceful future in which there’s less need to learn a new interface for every new device.

Baidu is making particularly impressive progress, especially with the accuracy of its voice recognition, and it has the scale to advance conversational interfaces even further. The company—founded in 2000 as China’s answer to Google, which is currently blocked there—dominates the country’s domestic search market, with 70 percent of all queries. And it has evolved into a purveyor of many services, from music and movie streaming to banking and insurance.

A more efficient mobile interface would come as a big help in China. Smartphones are far more common than desktops or laptops, and yet browsing the Web, sending messages, and doing other tasks can be painfully slow and frustrating. There are thousands of Chinese characters, and although a system called Pinyin allows them to be generated phonetically from Latin ones, many people (especially those over 50) do not know the system. It’s also common in China to use messaging apps such as WeChat to do all sorts of tasks, such as paying restaurant tabs. And yet in many of China’s poorer regions, where there is perhaps more opportunity for the Internet to have big social and economic effects, literacy levels are still low.

“It is a challenge and an opportunity,” says Ng, who was named one of MIT Technology Review’s Innovators Under 35 in 2008 for his work in AI and robotics at Stanford. “Rather than having to train people used to desktop computers to new behaviors appropriate for cell phones, many of them can learn the best ways to use a mobile device from the start.”

Ng believes that voice may soon be reliable enough to be used for interacting with all sorts of devices. Robots or home appliances, for example, could be easier to deal with if you could simply talk to them. The company has research teams at its headquarters in Beijing and at a facility in Silicon Valley that are dedicated to advancing the accuracy of speech recognition and working to make computers better at parsing the meaning of sentences.

Jim Glass, a senior research scientist at MIT who has been working on voice technology for the past few decades, agrees that the timing may finally be right for voice control. “Speech has reached a tipping point in our society,” he says. “In my experience, when people can talk to a device rather than via a remote control, they want to do that.”

Last November, Baidu reached an important landmark with its voice technology, announcing that its Silicon Valley lab had developed a powerful new speech recognition engine called Deep Speech 2. It consists of a very large, or “deep,” neural network that learns to associate sounds with words and phrases as it is fed millions of examples of transcribed speech. Deep Speech 2 can recognize spoken words with stunning accuracy. In fact, the researchers found that it can sometimes transcribe snippets of Mandarin speech more accurately than a person.

Baidu’s progress is all the more impressive because Mandarin is phonetically complex and uses tones that transform the meaning of a word. Deep Speech 2 is also striking because few of the researchers in the California lab where the technology was developed speak Mandarin, Cantonese, or any other variant of Chinese. The engine essentially works as a universal speech system, learning English just as well when fed enough examples.

Most of the voice commands that Baidu’s search engine hears today are simple queries—concerning tomorrow’s weather or pollution levels, for example. For these, the system is usually impressively accurate. Increasingly, however, users are asking more complicated questions. To take them on, last year the company launched its own voice assistant, called DuEr, as part of its main mobile app. DuEr can help users find movie show times or book a table at a restaurant.

The big challenge for Baidu will be teaching its AI systems to understand and respond intelligently to more complicated spoken phrases. Eventually, Baidu would like for DuEr to take part in a meaningful back-and-forth conversation, incorporating changing information into the discussion. To get there, a research group at Baidu’s Beijing offices is devoted to improving the system that interprets users’ queries. This involves using the kind of neural-network technology that Baidu has applied in voice recognition, but it also requires other tricks. And Baidu has hired a team to analyze the queries fed to DuEr and correct mistakes, thus gradually training the system to perform better.

“In the future, I would love for us to be able to talk to all of our devices and have them understand us,” Ng says. “I hope to someday have grandchildren who are mystified at how, back in 2016, if you were to say ‘Hi’ to your microwave oven, it would rudely sit there and ignore you.”
