This startup claims its deepfakes will protect your privacy

As the saying goes: If it looks like a duck, swims like a duck, and quacks like a duck, it’s probably a duck. Now, if someone in a video clip is the same race, gender, and age as you, has the same gestures and emotional expressions as you—and is, in fact, based on you—yet doesn’t exactly look like you … is it still you?

No, according to Gil Perry, cofounder of Israeli privacy company D-ID. And that, he claims, is why his startup works. D-ID takes video footage—captured by a camera inside a store, for example—and uses computer vision and deep learning to create an alternative that protects the subject’s identity. The process turns the “you” in the video into an avatar that has all the same attributes but looks a little different.

The upside for businesses is that this new, “anonymized” video no longer gives away the exact identity of a customer—which, Perry says, means companies using D-ID can “eliminate the need for consent” and analyze the footage for business and marketing purposes. A store might, for example, feed video of a happy-looking white woman to an algorithm that can surface the most effective ad for her in real time. (It’s worth noting that the legitimacy of emotion recognition has been challenged, with a prominent AI research group recently calling for its use to be banned completely.)

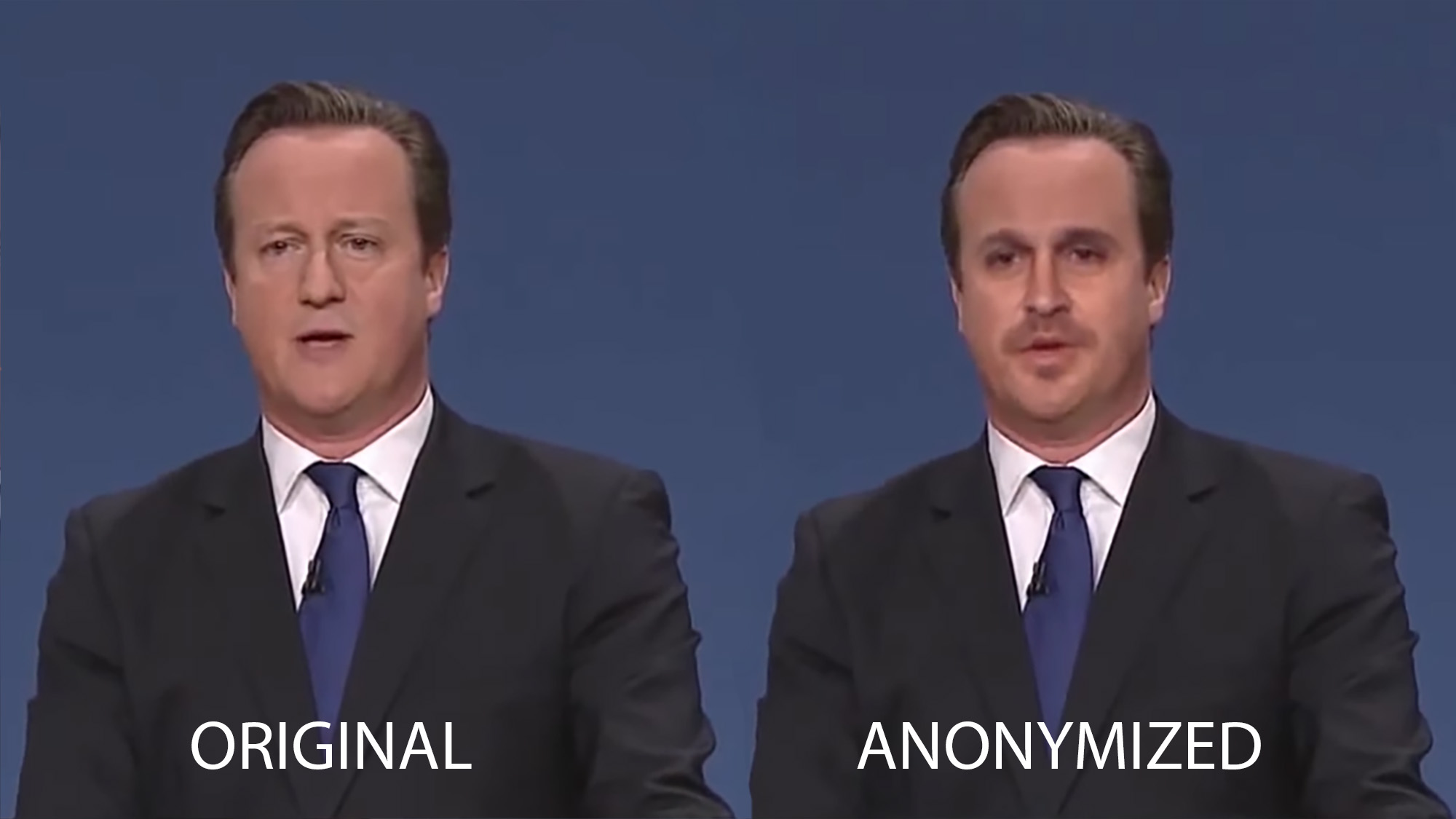

Examples of D-ID’s “smart anonymization” service show varying levels of success at obscuring identity in video. In one demonstration, former British prime minister David Cameron looks a bit like David Cameron with a mustache. In another still image, a woman looks somewhat different, but the two images still seem disconcertingly similar. In a third, Brad Pitt does become unrecognizable.

D-ID would not name particular customers that use its technology, but Perry said the company mainly works with retailers, car companies, and “large conglomerates that deploy CCTV in Europe.” Ann Cavoukian, a D-ID advisory board member and former privacy commissioner for the Canadian province of Ontario, says the solution is “a total win-win.”

Other experts, however, say that the company misinterprets Europe’s General Data Protection Regulation (GDPR), doesn’t do what it’s supposed to, and, even if it does, probably shouldn’t be doing it anyway.

GDPR violation?

Three leading European privacy experts who spoke to MIT Technology Review voiced their concerns about D-ID’s technology and its intentions. All say that, in their opinion, D-ID actually violates GDPR. (It could, however, be legal in non-EU areas.)

Race is a special category under GDPR, which means processing data that reveals race is illegal without explicit consent, explains Gaëtan Goldberg, a data privacy lawyer with GDPR watchdog NOYB. Violations of GDPR rules can result in fines of up to €20 million or 4% of a company’s global annual revenue, whichever is higher.
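To make the "whichever is higher" rule concrete, here is a minimal sketch of the arithmetic. The function name and the example revenue figures are illustrative assumptions, not taken from any regulator or from D-ID; the result is the ceiling on a fine, not a prediction of what a company would actually pay.

```python
FLAT_CAP_EUR = 20_000_000   # the €20 million figure cited above
REVENUE_SHARE_PERCENT = 4   # the 4% figure cited above


def max_gdpr_fine(annual_revenue_eur: float) -> float:
    """Return the fine ceiling: the larger of the flat cap and 4% of revenue.

    Hypothetical helper for illustration only.
    """
    revenue_based = annual_revenue_eur * REVENUE_SHARE_PERCENT / 100
    return max(FLAT_CAP_EUR, revenue_based)


# A company with €100 million in revenue: 4% is €4 million, so the flat cap applies.
small_company_ceiling = max_gdpr_fine(100_000_000)

# A company with €1 billion in revenue: 4% is €40 million, which exceeds the flat cap.
large_company_ceiling = max_gdpr_fine(1_000_000_000)
```

For smaller companies the flat €20 million figure sets the ceiling; the revenue-based figure only takes over once annual revenue passes €500 million.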

When presented with these concerns, David Mirchin, a privacy lawyer who advises D-ID, disagreed with the experts. He claims that “there never is a point where D-ID’s Smart Anonymization solution analyzes, reveals, or stores” this type of sensitive data. Perry adds that D-ID is happy to anonymize ethnicity data as well. The outside lawyers, however, contend that simply detecting the faces, whether to “anonymize” them or not, is a violation.

Perry and Mirchin also argue that it’s okay to feed anonymized biometric data to algorithms. But while GDPR doesn’t apply to anonymous data, the startup doesn’t actually anonymize the video in any case, says Michael Veale, a privacy expert at University College London. The scrambled video would not be considered anonymous under GDPR. With truly anonymous data, one piece of information can’t be linked back to a particular individual even when it is combined with other data. That’s a high bar.

But even if altered footage doesn’t look exactly like you, it can be combined with other information—location data taken from social media, for example, or credit card records—and traced back. Plus, says Veale, the technology still would not automatically give companies carte blanche to “repurpose CCTV for quite frivolous business purposes that aren’t something with a serious public interest, like fighting crime.”
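The kind of linkage the critics describe can be sketched in a few lines. This is only an illustration under stated assumptions: the records, field names, and the ten-minute matching window are all invented, and nothing here reflects how D-ID or any retailer actually stores or processes data. It shows how a clip with an altered face can still be narrowed down to a named person once it is joined with payment records from the same store.

```python
from datetime import datetime, timedelta

# Hypothetical, invented records for illustration only.
footage_events = [
    {"clip_id": "clip-001", "store": "store-A", "seen_at": datetime(2020, 1, 6, 14, 3)},
    {"clip_id": "clip-002", "store": "store-B", "seen_at": datetime(2020, 1, 6, 14, 5)},
]
card_records = [
    {"cardholder": "Person X", "store": "store-A", "paid_at": datetime(2020, 1, 6, 14, 7)},
    {"cardholder": "Person Y", "store": "store-A", "paid_at": datetime(2020, 1, 6, 18, 30)},
]


def link_clips_to_cardholders(events, payments, window=timedelta(minutes=10)):
    """Map each clip to cardholders who paid in the same store shortly afterward."""
    links = {}
    for event in events:
        links[event["clip_id"]] = [
            payment["cardholder"]
            for payment in payments
            if payment["store"] == event["store"]
            and timedelta(0) <= payment["paid_at"] - event["seen_at"] <= window
        ]
    return links


links = link_clips_to_cardholders(footage_events, card_records)
```

In this toy data, the first clip matches exactly one cardholder even though no face is involved in the join. That is why the bar for "truly anonymous" data is about what can be inferred in combination, not about how the footage looks.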

Spirit of the law

The fact that D-ID positions itself as a privacy solution is revealing. With elements of new data protection laws like GDPR and the California Consumer Privacy Act remaining open to interpretation, some companies are proving ingenious at marketing themselves. These technologies “comply” with regulations in a way that benefits the companies that want to make money from data, rather than the people whose data is being captured. This is a violation of the spirit of the law, say critics. Britt Paris, an information science scholar at Rutgers University, calls D-ID exploitative and an example of “further encroachment of the datafication of everyday life.”

But Cavoukian, the D-ID board member, says she is not a “privacy fundamentalist” and thinks there’s nothing wrong with collecting data so long as people’s exact identities are obscured.

“If you don’t want any data collected about you at all, in this day and age, even though there’s no privacy issue because your identifiers have been stripped, you’re not going to be able to go outside your house very far,” she says. “Those are the realities of the day.”

Surveillance is becoming more and more widespread. A recent Pew study found that most Americans think they’re constantly being tracked but can’t do much about it, and the facial recognition market is expected to grow from around $4.5 billion in 2018 to $9 billion by 2024. Still, the reality of surveillance isn’t keeping activists from fighting back. D-ID may see itself as a middle ground between privacy purism and hoovering up raw data, but without the technology, perhaps companies covered by GDPR wouldn’t use this video data at all.

“These rules are there for a purpose, right?” says Lilian Edwards, a GDPR expert at Newcastle Law School. “[D-ID] says ‘Collect visual data while complying with privacy regulations’ and what it really means is ‘Collect visual data while avoiding privacy regulations.’ You know, the law is not actually completely stupid.”
